There is a measurement gap sitting at the center of every Amazon PPC automation system, and it is costing advertisers more money than almost any other inefficiency in the stack. The gap is this: the data your bidding algorithm uses to make decisions — impression share reports, placement breakdowns, search term reports — describes a world that stopped existing 12 to 24 hours ago. Your algorithm is optimizing for yesterday. The auction is happening right now.

This is not a new problem, but it has become a critical one in 2026. The introduction of Rufus AI into Amazon’s core search experience has fundamentally changed the topology of the search results page. Where once a top-of-search position was a relatively stable competitive landscape — your ASIN versus three or four direct competitors — it is now a dynamic, intent-weighted composition that includes AI-generated product suggestion modules, personalized recommendation inserts, and sponsored placements that shift in rank not just between days or hours, but between individual search sessions and geographic locations.

For developers and architects building the next generation of PPC automation systems, integrating a real-time ad placement monitoring API is no longer a feature on a product roadmap. It is the foundational data primitive on which competitive bidding logic must be built. Without it, every sophisticated model you build (your dayparting engine, your Share of Voice tracker, your competitive displacement alert system) is running on stale fuel.

This guide walks through the technical landscape in full: why existing data sources fail, what a properly designed real-time Amazon ad placement tracking API must deliver, how to architect the integration across a multi-tenant SaaS platform, and what the emerging Rufus AI placement signals mean for your API response schema in the months ahead.

The Critical Role of Real-time Placement Data in Modern AdTech

To understand why real-time placement data matters so much, you need to understand the specific failure mode of report-based data in a high-frequency auction environment. Amazon’s Sponsored Products auction runs continuously. Every search query triggers an instantaneous auction, and the winning bids and resulting placements change with every auction cycle. A keyword that costs $1.40 CPC to hold the top position at 9 AM may require $2.20 at 7 PM, and only $0.95 at 3 AM. These dynamics are invisible in any data source that aggregates over hours or days.
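
To make the aggregation problem concrete, here is a minimal sketch using the three CPC figures quoted above. It treats them as the only three samples in the day and takes an unweighted mean, which is a simplification for illustration rather than how any reporting pipeline computes its averages.

```python
from statistics import mean

# CPC needed to hold the top position at three times of day (figures from the text above).
hourly_cpc = {"03:00": 0.95, "09:00": 1.40, "19:00": 2.20}

# What a daily aggregate would report if these were the only samples.
daily_average = mean(hourly_cpc.values())

# The intraday spread that the single daily number hides.
spread = max(hourly_cpc.values()) - min(hourly_cpc.values())

# Hours in which a bid pinned to the daily average would be too low to hold the position.
underbid_hours = [hour for hour, cpc in hourly_cpc.items() if cpc > daily_average]

print(f"daily average: ${daily_average:.2f}, spread: ${spread:.2f}, underbid at: {underbid_hours}")
```

A bid set from the daily figure overpays in the cheap hours and loses the position in the expensive evening window, which is exactly the window a daily report cannot isolate.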

Why Standard Reporting APIs Are Insufficient for Algorithmic Bidding

Amazon’s official Advertising API offers three primary reporting endpoints relevant to placement analysis: the Campaign Performance Report, the Placement Report, and the Search Term Report. Each of these has structural limitations that make them unsuitable as the data backbone for real-time bidding logic.

The Campaign Performance Report provides impression, click, spend, and conversion data, but it aggregates at the daily level by default and has a data freshness lag of 12-24 hours. The Placement Report breaks down performance by placement zone (top of search, rest of search, product pages), but at daily or, at best, hourly granularity, and it represents historical performance rather than current placement state. The Search Term Report is subject to the same reporting lag. None of the three answers the question a bidding algorithm needs answered in real time: where is this ad appearing right now, in this zip code, on this device?

The fundamental difference is between report-based data and live-placement data. Report-based data tells you what happened. Live-placement data tells you what is happening. A bidding engine that optimizes only on historical reports is like a chess player who only sees the board position from three moves ago — technically informed, but practically disadvantaged against someone who sees the current state.

The 2026 SERP Complexity Layer: Rufus AI and Dynamic Modules

The challenge of real-time placement monitoring is compounded in 2026 by the structural evolution of the Amazon SERP itself. Rufus AI, Amazon’s LLM-based shopping assistant, is now deeply embedded in the standard search experience, not just in a separate chat interface but as an active shaper of the search results page composition. When a user searches for “wireless headphones for gaming under 80 dollars,” Rufus parses the composite intent — gaming use case, price constraint, wireless requirement — and assembles a results page that may include an AI-generated comparison module, sponsored product slots calibrated to the parsed intent, and organic listings ranked against a multi-factor relevance score that includes Rufus’s interpretation of product-query fit.

For a live ad position monitoring API, this creates three new data capture challenges. First, the SERP structure is no longer deterministic: different users with different session histories may see different module compositions. Second, sponsored placement slots may be interspersed with AI-generated content blocks in ways that change the effective “position” numbering. Third, what counts as “Top of Search” in a Rufus-augmented SERP may differ from the definition in the Advertising Console. Any production-grade real-time ad placement monitoring API must be designed to handle this structural variability, ideally outputting placement data that distinguishes between traditional sponsored slots and slots adjacent to or within AI-generated modules.
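
One way to keep the two notions of “position” apart is to record both the rank among sponsored slots and the rank among all page blocks. The sketch below assumes the page has already been parsed into an ordered list of blocks; the `PageBlock` type, the `kind` labels, and `describe_slot` are hypothetical names for illustration, not part of any provider’s schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PageBlock:
    kind: str                   # hypothetical labels: "sponsored", "organic", "ai_module"
    asin: Optional[str] = None  # AI modules carry no single ASIN


def describe_slot(blocks: List[PageBlock], target_asin: str) -> Optional[dict]:
    """Locate the target ad and report its rank under both numbering schemes."""
    sponsored_rank = 0
    for index, block in enumerate(blocks):
        if block.kind != "sponsored":
            continue
        sponsored_rank += 1
        if block.asin == target_asin:
            before = blocks[index - 1].kind if index > 0 else None
            after = blocks[index + 1].kind if index + 1 < len(blocks) else None
            return {
                "sponsored_rank": sponsored_rank,   # rank counting sponsored slots only
                "page_position": index + 1,         # rank counting every block on the page
                "adjacent_ai_module": "ai_module" in (before, after),
            }
    return None  # target ad not present on this page


page = [
    PageBlock("sponsored", "B0AAA"),
    PageBlock("ai_module"),
    PageBlock("sponsored", "B0BBB"),
    PageBlock("organic", "B0CCC"),
]
print(describe_slot(page, "B0BBB"))
```

Storing both ranks lets downstream logic decide which one matters: bidding rules usually care about the sponsored rank, while attention-share analysis cares about how far down the page the ad actually sits.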

Key Technical Requirements for a Real-time Ad Placement API

Not all placement monitoring solutions are equal. For developers evaluating whether to build or buy a real-time Amazon ad placement tracking API, the following technical requirements define the minimum viable specification for a production system.

Latency and Frequency: The Sub-Minute Imperative

The frequency question is the most important one to resolve first, because it drives every other architectural decision. How often do you need to know where an ad is placed? The answer depends on your use case, but the floor for any meaningful dayparting or competitive alert system is one data point per keyword per target geography every 5-10 minutes for priority keywords. A portfolio of 50 keywords across 5 geographic targets is 250 keyword-geography pairs: 250 API calls every 10 minutes at a 10-minute interval and 500 at a 5-minute interval, or 1,500-3,000 calls per hour.
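
The call-volume arithmetic is worth wiring into capacity planning early. A minimal sketch follows; `calls_per_hour` is a hypothetical helper, and the optional `devices` multiplier is an assumption added here for portfolios that monitor more than one device context.

```python
def calls_per_hour(keywords: int, geographies: int,
                   interval_minutes: float, devices: int = 1) -> int:
    """Estimate hourly API calls for one check per keyword, geography, and device."""
    pairs = keywords * geographies * devices
    checks_per_hour = 60 / interval_minutes
    return round(pairs * checks_per_hour)


# The portfolio from the text: 50 keywords across 5 geographic targets.
print(calls_per_hour(50, 5, interval_minutes=10))  # 10-minute interval
print(calls_per_hour(50, 5, interval_minutes=5))   # 5-minute interval
```

Adding a second device context doubles every figure, which is why the interval is usually tiered: short for priority keywords, longer for the long tail.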

At this frequency, latency matters enormously. A placement data point that takes 90 seconds to return from query to structured JSON is essentially real-time for most applications. One that takes 10 minutes is no longer real-time — it is lagged monitoring that may miss the fast-moving competitive events (competitor bid spikes, flash promotions, coupon activations) that are the most damaging to share of voice.

The live ad position monitoring API you choose or build must deliver a median response time under 60 seconds for structured placement data, with a 95th-percentile latency under 120 seconds. It must also sustain parallel requests without degradation: if your monitoring system issues 50 simultaneous placement checks, the API should serve them concurrently rather than throttling them, so that checks for priority keywords are not delayed behind the long tail.
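
When evaluating a provider against these targets, it helps to compute the percentiles from your own measured response times rather than rely on quoted figures. The sketch below uses the standard library’s `statistics.quantiles`; the function name and the sample latencies are illustrative assumptions.

```python
from statistics import median, quantiles
from typing import Sequence


def meets_latency_targets(latencies_s: Sequence[float],
                          median_target: float = 60.0,
                          p95_target: float = 120.0) -> dict:
    """Check measured response times (in seconds) against the median and p95 targets."""
    p50 = median(latencies_s)
    # n=20 yields 19 cut points at 5% steps; the last one is the 95th percentile.
    p95 = quantiles(latencies_s, n=20, method="inclusive")[18]
    return {"p50": p50, "p95": p95, "ok": p50 < median_target and p95 < p95_target}


# Illustrative sample: mostly fast responses with two slow outliers.
sample = [30.0] * 18 + [100.0, 150.0]
print(meets_latency_targets(sample))
```

Measure per geography and per device where possible, since a provider can meet the targets in aggregate while missing them for the zip codes that matter most to a given client.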

Geographic Granularity: Zip-code Level Data as a Non-Negotiable

Amazon’s delivery experience — and therefore its search result composition — varies at the zip-code level. The same ASIN may display a Prime next-day delivery badge in zip code 10001 (Manhattan) but a 3-day delivery estimate in zip code 85001 (Phoenix), and this difference influences the sponsored placement algorithm’s expected conversion probability for that ASIN in each geography. A real-time placements API that does not support zip-code level request parameters is returning a geographic average that may be meaningless for any specific target market.

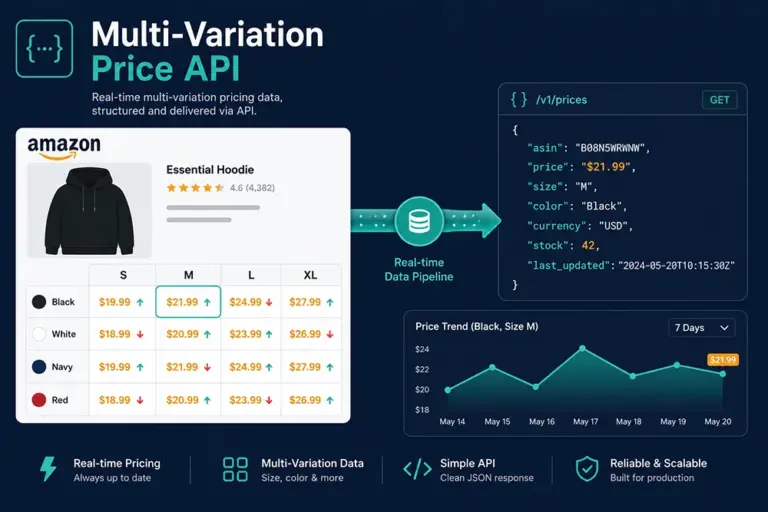

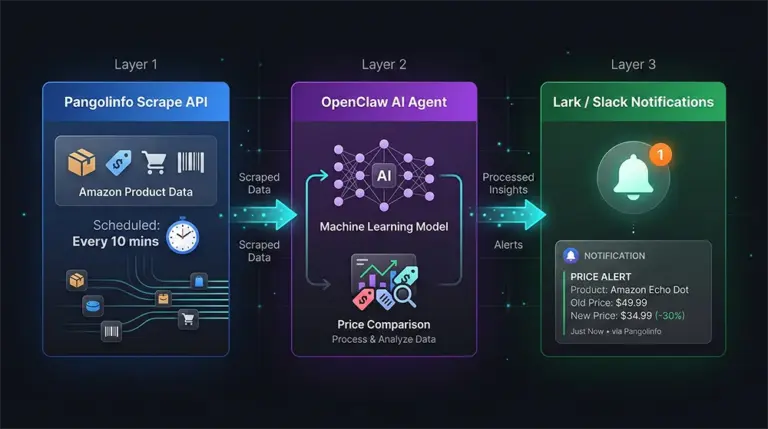

For enterprise advertisers running national campaigns on competitive keywords, the geographic placement landscape can be strikingly uneven. The practical implication: your API must support passing an arbitrary US zip code as a request parameter, and the system must execute the SERP query from infrastructure physically located in or proxying that geographic area — not simply filtering a centralized response dataset by zip code. Pangolinfo’s Scrape API supports this through its designated zip-code query configuration, where each API call can specify the target zip code to receive the authentic user-perspective SERP response from that geography.

Device Parity: Desktop, Mobile App, and Voice Search

The Amazon search experience is not uniform across devices. On desktop, a typical SERP may display 4-7 sponsored products in the top-of-search banner. On mobile, the same search may show only 1-2 sponsored slots before the first organic result, with the remaining sponsored products interspersed at fixed intervals throughout the page. This compression of visible sponsorship real estate on mobile dramatically increases the competitive intensity of the top-1 position — and correspondingly increases the revenue impact of losing it.

Voice search through Alexa presents a third distinct profile: there is no visual SERP, and the “top ad” is whatever product Alexa decides to verbally recommend, which is influenced by a different ranking signal cocktail that includes voice-specific purchase history, product rating, and an undisclosed Alexa Commerce algorithm. Tracking sponsored presence in voice-search contexts requires a fundamentally different query approach from visual SERP monitoring.

A production-grade placement API should support specifying the device context (desktop browser, mobile browser, mobile app simulation) as a first-class request parameter, with distinct response schemas that accurately reflect each device context’s SERP structure.
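
On the client side, treating geography and device as first-class parameters can be as simple as a validated request object. The sketch below is an assumption about how you might model this in your own code; `PlacementQuery`, `DeviceContext`, and the parameter names are hypothetical and do not describe any specific provider’s request schema.

```python
import re
from dataclasses import dataclass
from enum import Enum


class DeviceContext(str, Enum):
    DESKTOP = "desktop"
    MOBILE_BROWSER = "mobile_browser"
    MOBILE_APP = "mobile_app"


_ZIP_RE = re.compile(r"^\d{5}$")  # five-digit US zip code


@dataclass(frozen=True)
class PlacementQuery:
    keyword: str
    zip_code: str
    device: DeviceContext = DeviceContext.DESKTOP

    def __post_init__(self):
        # Reject malformed input before it is sent as a billable API call.
        if not self.keyword.strip():
            raise ValueError("keyword must not be empty")
        if not _ZIP_RE.match(self.zip_code):
            raise ValueError(f"invalid US zip code: {self.zip_code!r}")

    def to_params(self) -> dict:
        """Serialize to a plain dict suitable for a JSON request body."""
        return {"keyword": self.keyword, "zip_code": self.zip_code,
                "device": self.device.value}


query = PlacementQuery("wireless headphones", "10001", DeviceContext.MOBILE_APP)
print(query.to_params())
```

Because the object is frozen and hashable, it can also serve as the key under which results for a keyword, geography, and device combination are stored.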

Comparative Analysis: Scraped Real-time Data vs. Official Amazon Ads API

There is a persistent misconception in the AdTech developer community that the “right” way to get placement data is through Amazon’s official API, and that third-party data sources are inherently less reliable. In practice, the opposite is true for the specific use case of real-time placement monitoring — and understanding why reveals a lot about the information architecture of Amazon’s advertising system.

What the Official Amazon Ads API Actually Provides

The Amazon Advertising API is excellent for what it was designed to do: programmatic campaign management (creating, updating, pausing campaigns, ad groups, and bids), aggregated performance reporting, and portfolio-level analytics. It was not designed to answer the consumer-experience question: “right now, if a user in zip code 10001 searches for [keyword] on their iPhone, where does this ad appear?”

This distinction is fundamental. The official API gives you the advertiser’s perspective on a historical auction outcome. The real-time placement monitoring approach, which executes actual SERP queries from the consumer perspective, gives you the consumer’s current experience. For bidding optimization, the consumer’s current experience is the ground truth. What Amazon’s reporting system says happened in an aggregated historical bucket is a useful signal, but it cannot replace direct observation of current placement state.

The technical gap manifests in several specific ways. Official API rate limits prevent high-frequency polling at the keyword-by-geography granularity required for real-time systems. The official API does not expose zip-code level placement data — its geographic breakdown stops at the country/marketplace level. And crucially, the official API cannot tell you what organic and sponsored content surrounds your ad, which is essential context for understanding your effective share of the consumer’s attention on a given SERP.

Handling 2026’s Dynamic SERP Structure

The structural variability of the 2026 Amazon SERP — with Rufus AI modules, personalized inserts, and dynamically composed result pages — creates a parsing challenge that commodity scraping solutions handle poorly. A robust real-time ad placement tracking API must maintain current parsing templates that account for: the presence or absence of Rufus recommendation carousels, the variable position of “Sponsored” labels within AI-generated content blocks, the different slot numbering conventions on mobile versus desktop, and the occasional test/experiment SERP layouts that Amazon deploys to subsets of users.

Pangolinfo’s Scrape API maintains specialized Amazon SERP parsing templates that are updated to reflect structural SERP changes, enabling consistent structured JSON output even as Amazon evolves its page layouts. Pangolinfo reports a 98%+ data extraction success rate specifically for Amazon sponsored placement data. Sustaining that rate requires ongoing investment in CAPTCHA handling, request fingerprint rotation, and parsing template accuracy on a platform that actively defends against automated queries.

Implementation Strategy: Integrating Placement APIs into Your SaaS Workflow

Integrating real-time placement monitoring into a multi-tenant SaaS platform requires careful architectural design beyond simply making API calls and storing results. The following sections describe the key architectural components, with implementation patterns appropriate for enterprise-scale deployments.

Data Ingestion and Normalization for Multi-Tenant Platforms

For a SaaS platform serving multiple advertiser clients, each with distinct keyword portfolios, geographic targets, and monitoring frequencies, the ingestion layer must handle heterogeneous request volumes without per-client rate limiting collisions. The recommended architecture separates the scheduling layer from the execution layer:

    # placement_monitor/scheduler.py
    # Priority-queue-based scheduling for multi-tenant placement monitoring

    import asyncio
    import heapq
    from dataclasses import dataclass, field
    from datetime import datetime, timedelta, timezone
    from typing import Optional
    from urllib.parse import quote_plus

    import aiohttp

    PANGOLIN_API_ENDPOINT = "https://api.pangolinfo.com/scrape"
    PANGOLIN_API_KEY = "YOUR_API_KEY"


    @dataclass(order=True)
    class MonitoringTask:
        """Priority queue task for a single placement check."""
        priority: float  # Unix timestamp of next scheduled execution
        client_id: str = field(compare=False)
        keyword: str = field(compare=False)
        zip_code: str = field(compare=False)
        asin: str = field(compare=False)
        interval_seconds: int = field(compare=False, default=300)  # 5-minute default
        device: str = field(compare=False, default="desktop")


    class PlacementMonitorScheduler:
        """
        Multi-tenant placement monitoring scheduler using an async priority queue.
        Tasks with shorter intervals come due more often, so priority keywords are
        checked more frequently without starving other clients' long-tail keywords.
        """

        def __init__(self, max_concurrent_requests: int = 20):
            self.task_queue: list = []  # min-heap ordered by next execution time
            self.max_concurrent = max_concurrent_requests
            self.semaphore = asyncio.Semaphore(max_concurrent_requests)
            self.results_buffer = []

        def register_keyword(self, client_id: str, keyword: str, zip_code: str,
                             asin: str, interval_seconds: int = 300, device: str = "desktop"):
            """Register a keyword-geography pair for monitoring at the specified interval."""
            task = MonitoringTask(
                priority=datetime.now().timestamp(),  # due immediately
                client_id=client_id,
                keyword=keyword,
                zip_code=zip_code,
                asin=asin,
                interval_seconds=interval_seconds,
                device=device
            )
            heapq.heappush(self.task_queue, task)

        async def fetch_placement(self, task: MonitoringTask) -> dict:
            """
            Execute a single placement check via Pangolinfo Scrape API.
            Returns structured placement result normalized for database ingestion.
            """
            async with self.semaphore:  # cap the number of in-flight requests
                payload = {
                    # quote_plus escapes spaces and reserved characters in the keyword
                    "url": f"https://www.amazon.com/s?k={quote_plus(task.keyword)}",
                    "country": "US",
                    "zip_code": task.zip_code,
                    "output_format": "json",
                    "parse_ads": True,
                    "device": task.device,
                    "bypass_cache": True
                }
                headers = {
                    "Authorization": f"Bearer {PANGOLIN_API_KEY}",
                    "Content-Type": "application/json"
                }
                # One session per call keeps the example simple; reuse a shared
                # session in production to benefit from connection pooling.
                async with aiohttp.ClientSession() as session:
                    async with session.post(PANGOLIN_API_ENDPOINT, json=payload, headers=headers) as resp:
                        resp.raise_for_status()  # fail loudly on HTTP errors
                        raw = await resp.json()

            # Normalize placement result into standard schema
            return self._normalize_placement_result(raw, task)

        def _normalize_placement_result(self, raw_response: dict, task: MonitoringTask) -> dict:
            """
            Normalize raw API response into canonical placement schema.
            Handles structural variations from Rufus AI module insertions.
            """
            sponsored_slots = raw_response.get("sponsored_results", [])
            organic_slots = raw_response.get("organic_results", [])
            ai_modules = raw_response.get("rufus_modules", [])  # Pangolinfo identifies Rufus blocks

            # Find target ASIN position; my_ad stays None when the ad is absent
            my_ad: Optional[dict] = None
            for i, slot in enumerate(sponsored_slots):
                if slot.get("asin") == task.asin:
                    my_ad = {
                        "found": True,
                        "position": i + 1,
                        "placement_zone": slot.get("placement_zone"),
                        "adjacent_ai_module": slot.get("adjacent_rufus_module", False)
                    }
                    break

            # Competitor ASINs occupying the top-3 sponsored slots (input for Share of Voice)
            competitor_asins_in_top3 = [
                s["asin"] for s in sponsored_slots[:3]
                if s.get("asin") and s["asin"] != task.asin
            ]

            return {
                "schema_version": "2.1",
                # Timezone-aware UTC timestamp in ISO 8601 form with a "Z" suffix
                "timestamp": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
                "client_id": task.client_id,
                "keyword": task.keyword,
                "zip_code": task.zip_code,
                "device": task.device,
                "target_asin": task.asin,
                "placement": my_ad,
                "total_sponsored_slots": len(sponsored_slots),
                "competitor_asins_top3": competitor_asins_in_top3,
                "ai_modules_present": len(ai_modules) > 0,
                "ai_module_count": len(ai_modules)
            }

        async def run_cycle(self):
            """Execute one monitoring cycle: process all tasks due for execution."""
            now = datetime.now().timestamp()
            due_tasks = []
            while self.task_queue and self.task_queue[0].priority <= now:
                task = heapq.heappop(self.task_queue)
                due_tasks.append(task)

            if not due_tasks:
                return []

            # Concurrent execution of all due tasks
            results = await asyncio.gather(
                *[self.fetch_placement(task) for task in due_tasks],
                return_exceptions=True
            )

            # Reschedule each task for its next execution
            for task in due_tasks:
                task.priority = (datetime.now() + timedelta(seconds=task.interval_seconds)).timestamp()
                heapq.heappush(self.task_queue, task)

            # Failed checks are dropped here; log them in production
            return [r for r in results if not isinstance(r, Exception)]

Setting Up Placement Alerts and Automated Bidding Triggers

The value of real-time placement data is realized through automated response logic. A placement alert system must distinguish between transient fluctuations (normal auction volatility that self-corrects) and sustained position losses (competitive displacement requiring bid adjustment). Alerting on every single position change creates noise that desensitizes operators; alerting only on sustained patterns misses fast-moving competitive events.

    # placement_monitor/alert_engine.py
    # Stateful alert engine with hysteresis to reduce noise

    import statistics
    from collections import deque
    from typing import Callable, Optional

    NOT_FOUND_POSITION = 999  # sentinel position when the ad is absent from the page


    class PlacementAlertEngine:
        """
        Stateful alert engine that tracks placement history per keyword-geography pair
        and fires alerts only when position loss exceeds configurable thresholds
        over a sliding window, reducing noise from normal auction volatility.
        """

        def __init__(self,
                     window_size: int = 6,               # Number of recent data points to evaluate
                     alert_threshold_position: int = 3,  # Alert if avg position > this
                     consecutive_alerts_to_escalate: int = 3):
            self.window_size = window_size
            self.alert_threshold = alert_threshold_position
            self.escalation_threshold = consecutive_alerts_to_escalate
            # history["client::keyword::zip::device"] = deque of recent positions
            self.history: dict = {}
            self.alert_counts: dict = {}

        def _get_key(self, client_id: str, keyword: str, zip_code: str, device: str) -> str:
            return f"{client_id}::{keyword}::{zip_code}::{device}"

        def process_result(self, result: dict,
                           on_alert: Optional[Callable] = None,
                           on_escalate: Optional[Callable] = None):
            """
            Process a normalized placement result and evaluate alert conditions.
            on_alert: called when position exceeds threshold (first-level alert)
            on_escalate: called when alert persists for N consecutive windows
            """
            key = self._get_key(
                result["client_id"], result["keyword"],
                result["zip_code"], result["device"]
            )
            if key not in self.history:
                self.history[key] = deque(maxlen=self.window_size)
                self.alert_counts[key] = 0

            # "placement" is None when the ad was not on the page. A missing
            # "found" flag is treated as found, so records without it still work.
            placement = result.get("placement")
            found = bool(placement) and placement.get("found", True)
            position = placement["position"] if found else NOT_FOUND_POSITION
            self.history[key].append(position)

            if len(self.history[key]) < 3:
                return  # Not enough data for meaningful evaluation

            # Note: a single NOT_FOUND_POSITION value dominates the mean
            avg_position = statistics.mean(self.history[key])
            competitor_intrusion = len(result.get("competitor_asins_top3", [])) > 0

            # Level 1: Position alert
            if avg_position > self.alert_threshold:
                self.alert_counts[key] += 1
                alert_payload = {
                    "type": "POSITION_ALERT",
                    "keyword": result["keyword"],
                    "zip_code": result["zip_code"],
                    "device": result["device"],
                    "current_position": position,
                    "window_avg_position": round(avg_position, 2),
                    "competitor_intrusion": competitor_intrusion,
                    "competitor_asins": result.get("competitor_asins_top3", []),
                    "ai_modules_present": result.get("ai_modules_present", False),
                    "suggested_bid_adjustment": self._calculate_bid_adjustment(avg_position),
                    "timestamp": result["timestamp"]
                }
                if on_alert:
                    on_alert(alert_payload)

                # Level 2: Escalation
                if self.alert_counts[key] >= self.escalation_threshold and on_escalate:
                    on_escalate({**alert_payload, "type": "POSITION_ESCALATION",
                                 "consecutive_alerts": self.alert_counts[key]})
            else:
                self.alert_counts[key] = 0  # Reset on recovery

        def _calculate_bid_adjustment(self, avg_position: float) -> float:
            """
            Heuristic bid adjustment suggestion based on position deficit.
            In production, replace with your ML-based bid optimization model.
            """
            if avg_position <= 4:
                return 0.05  # 5% increase
            elif avg_position <= 6:
                return 0.12  # 12% increase
            else:
                return 0.20  # 20% increase: significant displacement
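
One design point worth checking in any window-average scheme that uses a large sentinel for “not found”: a single missed check can dominate the mean and fire an alert on what is really a transient fluctuation. The sketch below compares the mean with the median over the same window. It is an illustration of the trade-off and a possible alternative, not a description of how the engine above behaves in every configuration.

```python
from statistics import mean, median

NOT_FOUND = 999  # sentinel recorded when the ad is absent from the page
THRESHOLD = 3    # alert when the window statistic exceeds this position

# One missed check in an otherwise healthy six-point window.
transient = [2, 2, 3, NOT_FOUND, 2, 2]
# The ad has been absent for five consecutive checks.
sustained = [2, NOT_FOUND, NOT_FOUND, NOT_FOUND, NOT_FOUND, NOT_FOUND]

for name, window in (("transient", transient), ("sustained", sustained)):
    by_mean = mean(window) > THRESHOLD      # the sentinel dominates the mean
    by_median = median(window) > THRESHOLD  # the median ignores a lone outlier
    print(f"{name}: mean fires={by_mean}, median fires={by_median}")
```

With the median, the transient window stays quiet while the sustained loss still fires. The cost is slower detection: the median reacts only once more than half the window is affected, so the window size and check interval jointly set the detection delay.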

Visualization: Building Share of Voice Heatmaps for Clients

For client-facing SaaS dashboards, raw placement data needs to be aggregated into visualizations that communicate strategic insights rather than data points. The most effective visualization for time-series placement data is the Share of Voice (SOV) heatmap: a matrix where each row is a keyword, each column is a time bucket (hourly or 6-hour blocks), and each cell’s color intensity represents the percentage of checks in that time bucket where the client’s ASIN appeared in the top-3 sponsored slots.

This visualization style is directly actionable: a dark blue cell (high SOV) at 9-11 AM Monday tells the advertiser they are strongly positioned in that window; a pale gray cell at 7-9 PM Friday tells them they are losing the highest-value peak shopping window. The AMZ Data Tracker offers a no-code version of this SOV visualization layer built on top of the same Pangolinfo data infrastructure, making it accessible for clients who want insights without managing API integration directly.

For custom implementations, the SOV computation from normalized placement records is straightforward:

    # placement_monitor/sov_calculator.py
    # Share of Voice heatmap data generator

    from collections import defaultdict
    from datetime import datetime
    from typing import Dict, List


    def compute_sov_heatmap(
        placement_records: List[dict],
        time_bucket_hours: int = 6,
        top_n_positions: int = 3
    ) -> Dict[str, Dict[str, float]]:
        """
        Compute Share of Voice heatmap data from placement records.
        Returns: {keyword: {time_bucket_label: sov_percentage}}
        where sov_percentage = (checks with position <= top_n) / total_checks * 100
        """
        # Group records by keyword and time bucket
        buckets: dict = defaultdict(lambda: defaultdict(lambda: {"hits": 0, "total": 0}))

        for record in placement_records:
            # fromisoformat() in older Python versions rejects a trailing "Z"
            ts = datetime.fromisoformat(record["timestamp"].replace("Z", "+00:00"))
            bucket_hour = (ts.hour // time_bucket_hours) * time_bucket_hours
            bucket_label = f"{ts.strftime('%a')} {bucket_hour:02d}:00"  # e.g. "Mon 06:00" (UTC)
            keyword = record["keyword"]

            # "placement" is None when the ad was not on the page. A missing
            # "found" flag is treated as found, so records without it still work.
            placement = record.get("placement")
            found = bool(placement) and placement.get("found", True)
            position = placement["position"] if found else 999

            buckets[keyword][bucket_label]["total"] += 1
            if position <= top_n_positions:
                buckets[keyword][bucket_label]["hits"] += 1

        # Compute SOV percentages
        heatmap = {}
        for keyword, time_data in buckets.items():
            heatmap[keyword] = {}
            for bucket_label, counts in time_data.items():
                sov = (counts["hits"] / counts["total"] * 100) if counts["total"] > 0 else 0
                heatmap[keyword][bucket_label] = round(sov, 1)

        return heatmap
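
Most charting libraries expect a dense matrix rather than a nested dict. A minimal sketch of that conversion follows; it assumes the `{keyword: {bucket_label: sov_percentage}}` shape described above with English weekday abbreviations in the labels, and `heatmap_to_matrix` is a hypothetical helper name.

```python
from typing import Dict, List, Optional, Tuple

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def heatmap_to_matrix(
    heatmap: Dict[str, Dict[str, float]]
) -> Tuple[List[str], List[str], List[List[Optional[float]]]]:
    """Return (row keywords, column bucket labels, matrix of SOV values)."""
    # Order columns chronologically by weekday, then by bucket start hour.
    columns = sorted(
        {label for buckets in heatmap.values() for label in buckets},
        key=lambda label: (DAYS.index(label.split()[0]), label.split()[1]),
    )
    rows = sorted(heatmap)
    # None marks a bucket with no checks, which should render as "no data", not 0%.
    matrix = [[heatmap[kw].get(col) for col in columns] for kw in rows]
    return rows, columns, matrix


example = {
    "wireless headphones": {"Mon 06:00": 80.0, "Fri 18:00": 25.0},
    "gaming headset": {"Mon 06:00": 50.0},
}
rows, columns, matrix = heatmap_to_matrix(example)
print(rows, columns, matrix)
```

Keeping missing buckets as `None` instead of zero matters for interpretation: a pale cell should mean the ad lost the slot, not that no check ran in that window.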

Full API documentation for the Pangolinfo placement data endpoints (including response schemas, rate limit specifications, and webhook configuration for push-based alert delivery) is available at the Pangolinfo Documentation Center. To test the API with your own keyword set, you can access the Pangolinfo Console with a free trial allocation.

Future-Proofing Your Ad Stack: Beyond 2026

The architectural investments you make today for real-time placement monitoring will need to evolve alongside Amazon’s platform. Three trends in particular will reshape the requirements for a production real-time ad placement tracking API in the 2026-2028 timeframe.

The first is Rufus AI placement tracking. As Rufus AI becomes more sophisticated, the line between “organic recommendation” and “sponsored placement” will blur further. Rufus may begin surfacing sponsored products within conversational recommendation responses without the traditional “Sponsored” label appearing in the standardized SERP position. Any placement API aiming to capture complete share of voice will need to monitor both traditional SERP slots and Rufus conversational output — which requires a fundamentally different query mechanism (simulating a Rufus conversation rather than a keyword search).

The second is cross-marketplace placement convergence. Amazon is increasingly harmonizing its search experience across international marketplaces, but significant structural differences remain between Amazon.com, Amazon.de, Amazon.co.jp, and emerging markets like Amazon.in. Enterprise advertisers running global campaigns need a placement API that can serve all major Amazon marketplaces with the same geographic and device granularity as the US market — the technical infrastructure challenge here is substantial.

The third is real-time bidding API integration. As Amazon eventually opens up lower-latency bidding mechanisms beyond the current Advertising API (potentially through DSP partnerships or new API tiers), the “observe placement, adjust bid, verify new placement” feedback loop that currently takes minutes could compress to seconds. The placement monitoring API architecture you build today should be designed to plug into a lower-latency bidding trigger mechanism when that capability becomes available, rather than requiring a complete rebuild.
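
One way to keep that option open is to write the feedback loop against plain callables, so the observation source and the bid-update mechanism can each be swapped without touching the loop. The sketch below is a simplified illustration under that assumption; `run_feedback_loop` and its parameters are hypothetical, and the fixed percentage step stands in for whatever bid model you actually use.

```python
from typing import Callable, Optional


def run_feedback_loop(observe: Callable[[], Optional[int]],
                      set_bid: Callable[[float], None],
                      start_bid: float,
                      target_position: int = 3,
                      step_pct: float = 0.05,
                      max_bid: float = 5.0,
                      max_rounds: int = 10) -> float:
    """Observe placement, raise the bid if needed, then verify on the next round."""
    bid = start_bid
    for _ in range(max_rounds):
        position = observe()  # None means the ad was not found on the page
        if position is not None and position <= target_position:
            break  # target reached: the previous adjustment is verified
        if bid >= max_bid:
            break  # guardrail: never raise beyond the cap
        bid = min(round(bid * (1 + step_pct), 2), max_bid)
        set_bid(bid)
    return bid


# Simulated observations: the ad climbs from position 5 to 2 as the bid rises.
positions = iter([5, 4, 2])
sent_bids = []
final_bid = run_feedback_loop(lambda: next(positions), sent_bids.append, start_bid=1.00)
print(sent_bids, final_bid)
```

Today `observe` would wrap a placement check that takes tens of seconds and `set_bid` a call to the existing Advertising API; if a lower-latency bidding mechanism becomes available, only `set_bid` needs to change.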

Conclusion: Choosing the Right API Partner for Enterprise-grade Real-time Ad Placement Monitoring

The technical landscape for real-time ad placement monitoring API solutions is narrower than it appears. The operational requirements (sub-minute median latency, zip-code level granularity, device parity, a 98%+ extraction success rate against Amazon’s anti-scraping infrastructure, and structured JSON output with Rufus AI module awareness) rule out most commodity scraping services and all standard reporting API approaches.

What remains is a small set of specialized data infrastructure providers that have invested specifically in Amazon SERP data quality at the precision and frequency that algorithmic bidding demands. The evaluation criteria should be ruthlessly practical: what is the documented extraction success rate for Amazon sponsored placement data specifically (not web scraping generally)? What is the geographic coverage for zip-code level queries? Does the provider maintain and update their Amazon parsing templates as Amazon evolves its SERP structure? Can the API serve the per-keyword, per-geography, per-device request pattern at the frequency your bidding model requires without prohibitive cost?

Pangolinfo’s Scrape API has been purpose-built for this use case, with 98%+ sponsored placement extraction rates, designated zip-code query support, multi-device SERP coverage, and a structured JSON output schema that is maintained against Amazon’s evolving page structure. The combination of the Scrape API for data collection and AMZ Data Tracker for visualization provides a complete stack from raw placement data to client-facing Share of Voice intelligence.

The gap between report-based advertising optimization and real-time placement-driven optimization is measurable, substantial, and widening as Rufus AI makes the Amazon SERP more dynamic. Building on a real-time ad placement monitoring API as your foundational data primitive is not a competitive advantage that will fade; it is the architectural prerequisite for any PPC automation system that aims to remain effective in the Amazon ecosystem of 2026 and beyond. Start with the API documentation, run your first placement check in under 10 minutes, and see what your bidding engine has been missing.

🚀 Ready to integrate real-time placement data? Start with the Pangolinfo Scrape API — the industry’s leading solution for Amazon ad placement monitoring at enterprise scale. Access full documentation at docs.pangolinfo.com and claim your free trial allocation in the Pangolinfo Console.