I. Macroeconomics and Artificial Intelligence Policy Restructuring of Amazon Cross-Border E-commerce in 2026

As of 2026, the Amazon cross-border e-commerce ecosystem has shifted from its early, traffic-driven growth model to one driven jointly by data and Generative AI, a shift this report calls the Amazon E-commerce AI Transformation. The market has also grown in both scale and complexity. According to the market data analyzed for this report, Amazon’s total annual revenue in 2025 reached $716.9 billion, with the fourth quarter alone contributing $213.4 billion, a sign of both resilience and strong seasonal peaks. Within that total, third-party sellers hold a dominant position: they contributed $172.2 billion in service revenue, accounted for 62% of the units sold on Amazon, and numbered 1.9 million active sellers worldwide. These figures indicate that Amazon’s flywheel increasingly depends on a third-party network of SMEs, brand owners, and international supply chains, and that this network in turn accelerates and sustains the AI transformation across the platform.

However, accompanying the rapid expansion of market size is a sharply deteriorating competitive environment and soaring operating costs. The inflation of advertising expenditures is particularly notable. In 2025, Amazon’s advertising business revenue grew by 22% year-over-year to $68.6 billion, securing its position as the world’s third-largest digital advertising platform. Reflected in the actual operations of sellers, this means that the average Cost Per Click (CPC) for Sponsored Products has climbed to between $0.95 and $1.20, while the average Advertising Cost of Sales (ACOS) for site-wide ad campaigns has solidified in the high range of 25% to 30%. Squeezed by across-the-board increases in logistics, warehousing, and traffic acquisition costs, the traditional operating model relying on manual mass-listing and experience-based price adjustments has irreversibly reached a dead end.

Meanwhile, 2026 is widely described as the first year in which AI truly reshapes the global retail landscape. Facing the rise of Generative AI and Agentic AI, Amazon has adopted a dual strategy of active adoption and strict regulation. Internally, Amazon has promoted the Seller Assistant system built on Amazon Bedrock, which lets sellers obtain business insights and content generation support through natural language. In March 2026, Amazon also implemented its “AI Agent Automation Policy,” which for the first time defined the compliance boundaries for AI agents and automated tools that manage seller accounts. Under the new rules, every automated program that executes pricing changes, inventory adjustments, or data scraping must connect through the official SP-API (Selling Partner API) and must embed “human authorization checkpoints” alongside complete audit logs. More consequentially, the rules crack down on the browser automation and unofficial scraping that traditional tools rely on: once a tool’s behavior is found to mimic human browsing in order to bypass rate limits, the tool is banned outright, and the seller’s store account may be put at risk as well.
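The combination of a human authorization checkpoint and an audit log can be sketched in a few lines of Python. This is a minimal illustration only: the `ProposedAction` fields, the `execute_with_checkpoint` helper, and the 10% review threshold are assumptions made for the example, not part of Amazon’s policy text or of the SP-API, and the `reviewer` function stands in for a real human approval step.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class ProposedAction:
    """An action an agent wants to take (field names are illustrative)."""
    kind: str          # e.g. "price_change"
    sku: str
    old_value: float
    new_value: float


def execute_with_checkpoint(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    apply_fn: Callable[[ProposedAction], None],
    audit_log: List[str],
) -> bool:
    """Ask for approval, apply only if approved, and log the outcome either way."""
    approved = approve(action)
    if approved:
        apply_fn(action)  # in production this would wrap an official API call
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": asdict(action),
        "approved": approved,
    }))
    return approved


def reviewer(action: ProposedAction) -> bool:
    """Stand-in for a human reviewer: reject changes larger than 10%."""
    change = abs(action.new_value - action.old_value) / action.old_value
    return change <= 0.10


applied: List[ProposedAction] = []
log: List[str] = []
small = ProposedAction("price_change", "SKU-1", 20.00, 19.00)  # 5% drop
large = ProposedAction("price_change", "SKU-1", 20.00, 15.00)  # 25% drop
execute_with_checkpoint(small, reviewer, applied.append, log)
execute_with_checkpoint(large, reviewer, applied.append, log)
```

Both the approved and the rejected proposal end up in the log, so the record shows what the agent attempted as well as what it actually changed.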

Caught between this stringent compliance framework and intense competition, Amazon cross-border e-commerce enterprises face a strategic question that will shape their next decade: how to transform digitally in the 2026 AI wave, break the path dependency of old strategies, and feed a self-built AI analysis and decision-making framework with compliant, real-time data at scale. This report first dissects the path dependency of current strategies, then evaluates the strengths and weaknesses of mainstream SaaS tools, and finally examines in detail how enterprise-grade API data infrastructure such as Pangolinfo can be used to rebuild the intelligent foundation of e-commerce business flows.

II. The “Path Dependency” Dilemma of Current Core Strategies in Amazon Cross-Border E-commerce

Over the past few years, the Amazon seller community has formed a highly templated Standard Operating Procedure (SOP). This workflow greatly drove GMV growth during the platform’s traffic dividend period, but from the perspective of the “Path Dependency” theory in sociology and economics, this early strategic inertia has evolved into a systemic shackle that is hard to shake off. In the new AI-driven business environment, these strategies, which rely on historical experience and static rules, are leading to severe resource misallocation and decision lags.

First, regarding product selection strategy, there is a severe “rearview mirror effect” among current sellers. The traditional path of cross-border e-commerce product selection highly relies on linear extrapolation of Amazon’s Best Sellers Rank (BSR) lists and simple estimations of competitors’ historical sales. Operations teams are accustomed to using market research tools to scan “red ocean” categories that have already generated massive sales, attempting to find tiny differentiation spaces within them (such as changing the color of a product or adding a small accessory). This rule-oriented product selection method based on historical data completely ignores subtle changes in potential consumer sentiment and niche market trends at the macro level. In 2026, product life cycles have drastically shortened. By the time historical data in a certain category is sufficient to support a product selection software’s conclusion of “market potential,” that track is often already overcrowded, followed inevitably by brutal price wars and inventory clearance crises.

Second, in daily operations and monitoring, the “Frankenstein” system composed of manual labor and spreadsheets has become a black hole for efficiency. Most sellers still rely on humans logging into Seller Central daily to download multiple data reports, performing VLOOKUP matches in local Excel spreadsheets to calculate true gross margins, track refund rates, or monitor inventory redundancy. The path dependency of this operating model lies in an infatuation with traditional “what you see is what you get” dashboards. Operations personnel have fallen into severe “data overload.” Facing hundreds of SKUs, human power simply cannot discover hidden correlations within massive data. For instance, when inventory turnover drops, the system only passively issues a stagnant inventory warning, rather than proactively analyzing whether the sales decline is due to seasonal changes, a competitor’s price drop, or a specific negative review. This lagging response mechanism directly leads to exorbitant long-term storage fees and out-of-stock risks.

Furthermore, the path dependency of PPC (Pay-Per-Click) advertising manifests as rigid rule engines and the ineffective drain of budgets. Current mainstream ad adjustment strategies are almost entirely based on simple “If-Then” logic. For example, an operator might set a rule: “If the ACOS of a keyword exceeds 35%, lower the bid by 20%.” However, in the highly dynamic auction market of 2026, the consumer journey is non-linear. This single-dimensional rule-based bidding ignores multi-dimensional factors such as dayparting, real-time inventory status, cross-channel attribution, and competitors’ promotional behaviors. A common disastrous scenario is: A product’s inventory is nearly depleted, but because its ad conversion rate is extremely high, the traditional rule engine not only fails to lower the bid to protect inventory, but continues to add budget, ultimately causing the product to run out of stock and wiping out its hard-earned Organic Ranking weight overnight.
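The single-dimension rule quoted above is easy to write down, which is part of its appeal. The sketch below, with hypothetical function names, implements exactly that rule (ACOS above 35% lowers the bid by 20%) using the standard definition of ACOS as ad spend divided by ad-attributed sales. Note that inventory never appears as an input, which is the blind spot the paragraph describes.

```python
def acos(ad_spend: float, ad_sales: float) -> float:
    """Advertising Cost of Sales: ad spend divided by ad-attributed sales."""
    return ad_spend / ad_sales


def static_rule_bid(bid: float, ad_spend: float, ad_sales: float) -> float:
    """The If-Then rule from the text: ACOS above 35% lowers the bid by 20%."""
    if acos(ad_spend, ad_sales) > 0.35:
        return round(bid * 0.80, 2)
    return bid


# Keyword A: $40 spend on $100 of sales, ACOS 40%, so the bid is cut.
bid_a = static_rule_bid(1.00, ad_spend=40.0, ad_sales=100.0)

# Keyword B: $20 spend on $100 of sales, ACOS 20%, so the bid is untouched,
# regardless of how many units of the product are left in stock.
bid_b = static_rule_bid(1.00, ad_spend=20.0, ad_sales=100.0)
```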

Finally, regarding keyword strategy and SEO optimization, sellers are deeply trapped in the old dream of simply piling up long-tail keywords to cater to Amazon’s A9 algorithm. Traditional cross-border e-commerce SEO focuses on obtaining high-search-volume keywords through reverse engineering and stuffing them into titles, Bullet Points, and backend Search Terms. However, the traffic gateway in 2026 has fundamentally shifted. As large language models integrate into mainstream search engines, traditional SEO is being replaced by AEO (AI Engine Optimization). According to statistics, currently in search engines like Google, 13% to 30% of information queries are directly covered by AI Overviews, with an extremely high coverage rate in “How-to” queries. AI Overviews occupy the “Rank Zero” of search results, directly answering user questions and causing click-through rates on traditional organic search links to plummet. If cross-border sellers’ keyword strategies still only focus on on-site bidding rankings and fail to generate structured, verifiable facts that conform to the validation logic of AI large models, their brands will completely disappear from the off-site AI recommendation ecosystem, losing the highest-converting intent traffic.

III. Market Size, Tool Limitations, and Underlying Drawbacks of the Mainstream Seller Software Ecosystem

With the prosperity of the Amazon third-party market, the SaaS (Software as a Service) seller tool ecosystem growing around it has also seen explosive development. It is projected that by 2033, the global digital retail market, including e-commerce supporting services, will soar to a massive scale of $155.98 trillion. Faced with this enormous pie, numerous SaaS platforms with various functions have emerged in the market. The software with the highest frequency of use and largest market share among current sellers includes Helium 10, Jungle Scout, SellerSprite, and Analyzer.Tools. Through a horizontal comparison of the core functions of these tools, we can clearly see the technological boundaries and pain points of the current industry.

| Tool / Software Name | Core Market Positioning & Functional Advantages | 2026 Applicable Scenarios & Core Limitations Analysis |
| --- | --- | --- |
| Helium 10 | Comprehensive full-funnel growth platform, offering deep analysis from product research to ad automation. Its core advantage lies in unique Child ASIN-level sales estimation technology; meanwhile, its Cerebro tool has extremely high granularity when reverse-engineering competitor keywords. | Best suited for mid-to-large brand sellers managing a large number of SKUs and needing refined operations. However, its limitation is an extremely complex system architecture with a steep learning curve. More importantly, its built-in PPC automation module (Adtomic) still leans towards advanced rule engines, lacking the AI decision-making capability to combine LLMs for multi-modal real-time market gaming. |
| Jungle Scout | Focuses on market intelligence, opportunity discovery, and supply chain matchmaking. Processing over 500 million data points daily, its Opportunity Finder is highly advantageous in validating market size and competition heat, and its interface is extremely beginner-friendly. | Suitable for startups and product development teams focused on uncovering niche markets. However, in handling complex large ad campaign optimization, high-frequency bid adjustments, and deep correlation refund tracking, its functional depth falls short of Helium 10, making it hard to meet the refined demands of advanced operations. |
| SellerSprite | A powerful comprehensive tool balancing localization and internationalization, offering excellent usability and rich data mining modules, considered a comprehensive solution for multi-country market expansion. | Highly advantageous in localized operations across multiple languages and sites. But facing the interception of off-site traffic by Generative AI (like Google AI Overviews) in 2026, it has not yet formed a complete technical closed loop from off-site AI sentiment tracking to on-site keyword repository feedback. |
| Analyzer.Tools | A finance-oriented tool focused on bulk product scanning. Supporting both Windows desktop and web, it can rapidly scan large inventory lists, automatically deduct various FBA fees in real-time, and calculate net profit and ROI. | Deeply favored by high-volume wholesalers and arbitrage model sellers. But its data dimensions are single, severely lacking support for text sentiment analysis within the product lifecycle, consumer trend tracking, and Share of Voice monitoring. |

Although these tools have reduced operators’ manual work to some extent, from the perspective of AI-driven digital transformation in 2026 they share serious underlying drawbacks that call for a new generation of architecture.

The primary issue is a deep-rooted “data silo” effect. Most mainstream SaaS products are closed black-box systems: users can only view charts and export reports in a limited set of formats within the preset UI. These tools lack flexible, headless API output. When enterprises want to integrate Amazon sales data with their own ERP systems, with Shopify data from independent sites, or with a self-built enterprise LLM knowledge base, they run into substantial technical hurdles. This fragmentation prevents enterprises from establishing a unified “Single Source of Truth” and slows down cross-departmental collaboration and global business decision-making.

Second, reverse engineering and data scraping carry a compliance crisis and high maintenance costs. To provide so-called “panoramic data,” many unauthorized seller tools have long relied on stealthy browser automation that simulates human clicks in the background to scrape web pages. As noted earlier, the AI agent rules Amazon introduced in March 2026 target exactly this behavior. Whenever Amazon updates the page DOM structure or upgrades its anti-scraping verification, such as CAPTCHA challenges, these script-based tools fail immediately. Enterprises that build their own scraper teams in response face an initial R&D cycle of two to three months that ties up several senior engineers, plus a hidden cost of $150,000 to $200,000 per year just to keep the system running, and they still do not escape the compliance risk of account bans.

Finally, existing tools lack a strategic view of AI search (SGE). Whether Helium 10 or Jungle Scout, their keyword logic is mainly confined to Amazon’s on-site A9 algorithm ecosystem, supplemented at most by basic search volume data from Google Keyword Planner. But in 2026, the consumer’s shopping journey often begins by asking an AI assistant (e.g., “Which portable fan is best for camping and has the longest battery life?”). If tools cannot capture and analyze the real-time recommendation logic, the authority of cited sources, and the brand mention rates of competitors within Google AI Overviews, sellers are left fighting in the on-site red ocean and lose the first-mover advantage of intercepting high-value traffic at the top of the funnel.

IV. Reshaping the Growth Curve: Building a Full-Funnel AI Analysis, Decision-Making, and Recommendation Framework

To completely break free from the aforementioned path dependency and tool drawbacks and achieve true digital transformation, Amazon cross-border e-commerce enterprises must evolve from mere “SaaS tool users” to “Architects of intelligent business flows.” This means enterprises must abandon the practice of piling up scattered software and instead build an AI analysis, decision-making, and recommendation framework running through the entire lifecycle, with Agentic AI at its core. The construction of this framework should not merely introduce LLMs for simple copywriting polish, but must endow AI with the capability to reason, plan, and directly execute complex business actions under compliant authorization. In the cutting-edge practices of 2026, a complete AI decision-making framework must encompass the following core intelligent modules.

Intelligent Inventory and Demand Forecasting is the logistics foundation of the entire framework. AI systems need to completely replace error-prone spreadsheets, pulling real-time Amazon FBA inventory levels, in-transit inventory, and sales velocities across multiple channels (like Walmart, Shopify). By ingesting historical sales trends and superimposing external macroeconomic variables, this module can accurately predict future sales peaks and valleys, automatically calculating the optimal restocking timing and quantity. More critically, the AI framework must possess capital defense capabilities: before slow-moving goods trigger Amazon’s punitive long-term storage fees, it must proactively issue decision recommendations for price-drop promotions or inventory transfers to the operations team, thus finding the perfect mathematical balance between “out-of-stock crises” and “inventory backlog”.
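The restocking decision described above can be illustrated with the textbook reorder-point formula (expected demand over the supplier lead time plus a safety stock). This is a simplified sketch under stated assumptions: sales velocity is treated as constant, the function names and the sample numbers are hypothetical, and a production system would replace the constant velocity with a forecast.

```python
import math


def reorder_point(daily_velocity: float, lead_time_days: float,
                  safety_stock: int) -> int:
    """Stock level at which a new order should be placed."""
    return math.ceil(daily_velocity * lead_time_days + safety_stock)


def restock_quantity(on_hand: int, inbound: int, daily_velocity: float,
                     lead_time_days: float, safety_stock: int,
                     target_cover_days: float) -> int:
    """Units to order; 0 while the stock position is above the reorder point."""
    position = on_hand + inbound  # FBA stock plus in-transit stock
    if position > reorder_point(daily_velocity, lead_time_days, safety_stock):
        return 0
    target = math.ceil(daily_velocity * target_cover_days + safety_stock)
    return max(0, target - position)


# 20 units/day, 30-day lead time, 100 units of safety stock.
rop = reorder_point(20, 30, 100)                      # 20 * 30 + 100 = 700
qty = restock_quantity(400, 200, 20, 30, 100, 60)     # position 600 -> order
no_order = restock_quantity(900, 0, 20, 30, 100, 60)  # position 900 -> wait
```

Capping the order at a target number of cover days is what keeps the same calculation from producing the overstock that triggers long-term storage fees.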

At the very front end of transactions, the Real-Time Dynamic Pricing module bears the heavy responsibility of maximizing profit. Under the premise of protecting the absolute profit red line set by the seller (after deducting all FBA fees, refund losses, and taxes), this AI module can track the price fluctuations of core competitors and the ownership of the “Buy Box” at nearly millisecond frequencies. Combined with dayparting strategies, AI can fine-tune prices during night hours when competitors’ budgets are exhausted to obtain higher premiums, or automatically implement penetration pricing aimed at predatory market share grabs when traffic peaks arrive. This 24/7 automated gaming is something no manual operation can match.
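The “profit red line” can be expressed as a price floor that the repricer is never allowed to cross. The sketch below assumes a simplified fee model (a percentage referral fee on the sale price plus a fixed per-unit FBA fee) and an absolute minimum profit per unit; the function names, fee figures, and one-cent undercut are illustrative assumptions, and real fee schedules, refund losses, and taxes would add further terms.

```python
import math


def price_floor(unit_cost: float, fba_fee: float, min_profit: float,
                referral_rate: float) -> float:
    """Lowest price p with p * (1 - referral_rate) - fba_fee - unit_cost >= min_profit."""
    raw = (unit_cost + fba_fee + min_profit) / (1.0 - referral_rate)
    return math.ceil(raw * 100) / 100  # round up to the next cent


def reprice(competitor_price: float, floor: float,
            undercut: float = 0.01) -> float:
    """Undercut the competitor by one cent, but never go below the floor."""
    return max(floor, round(competitor_price - undercut, 2))


# Cost $8.00, FBA fee $4.00, at least $3.00 profit, 15% referral fee.
floor = price_floor(unit_cost=8.00, fba_fee=4.00, min_profit=3.00,
                    referral_rate=0.15)             # 15 / 0.85, rounded up
follow = reprice(competitor_price=19.99, floor=floor)  # room to undercut
hold = reprice(competitor_price=16.50, floor=floor)    # floor wins
```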

For Predictive Niche Discovery, which determines the enterprise’s future lifeline, the AI framework must break through the limitations of on-site data. It needs not only to scan the surging charts of on-site sub-categories but also to connect to network-wide Social Listening signals, academic paper trends, and patent application dynamics. Through multi-dimensional Competitor Gap Analysis, AI can acutely capture those long-tail demands frequently complained about in consumer reviews but not yet satisfied by existing top brands due to technological or supply chain reasons, thereby guiding the R&D department to conduct preemptive product innovation and capture the niche market before competitors can react.

Complementing this is the AI-Powered PPC Management matrix. The AI engine needs to deeply intervene in the highly complex real-time bidding environment, automatically completing high-converting Keyword Harvesting and negative search term exclusion through uninterrupted A/B testing and conversion probability predictions. A higher level of synergy lies in the real-time dialogue between the advertising AI module and the aforementioned inventory AI module: when the remaining inventory of a blockbuster product is insufficient to support normal sales for the next 7 days, the AI will automatically lower or even pause aggressive ad placements for that product, extending the product’s sales cycle by hitting the brakes, thereby preserving the Organic Ranking weight accumulated over the years from out-of-stock penalties.
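The inventory-aware braking described above can be sketched as a bid multiplier driven by days of cover. The 7-day threshold comes from the text; the 3-day pause threshold, the linear scaling in between, and the function name are assumptions chosen for illustration.

```python
def ad_bid_multiplier(units_available: int, daily_velocity: float,
                      min_cover_days: float = 7.0,
                      pause_below_days: float = 3.0) -> float:
    """Scale ad bids by remaining days of cover.

    1.0 means bid normally, 0.0 means pause the campaign.
    """
    if daily_velocity <= 0:
        return 1.0  # nothing is selling, so ads cannot cause a stockout
    cover_days = units_available / daily_velocity
    if cover_days >= min_cover_days:
        return 1.0
    if cover_days < pause_below_days:
        return 0.0
    return cover_days / min_cover_days  # ease off as cover shrinks


healthy = ad_bid_multiplier(140, 10.0)   # 14 days of cover
braking = ad_bid_multiplier(50, 10.0)    # 5 days of cover
paused = ad_bid_multiplier(20, 10.0)     # 2 days of cover
```

Applying the multiplier on top of whatever bid the PPC engine proposes keeps the two modules loosely coupled: the advertising side never needs to read inventory data directly.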

Finally, the Sentiment Analysis & Review Monitoring module constitutes the brand’s defense line. By feeding network-scraped buyer reviews, return/exchange reasons, and Q&A data into NLP models, the framework can unravel specific product defects and consumer emotional fluctuation curves from unstructured text. When negative sentiment in a specific dimension (e.g., “overheating during charging”) spikes abnormally, the system will immediately trigger the highest-level alert, pushing it directly to the quality control and supply chain departments, killing potential large-scale return crises in the cradle. Simultaneously, these deeply ingrained consumer feedbacks are fed back into a closed loop to the marketing team, becoming precise materials for optimizing product Listings and ad copy in the next step.
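The abnormal-spike alert described above can be approximated with a simple z-score test on daily counts of negative mentions for one topic. This sketch assumes an upstream NLP step has already tagged reviews by topic (for example “overheating during charging”) and only covers the alerting logic; the threshold of 3 standard deviations and the sample counts are illustrative.

```python
from statistics import mean, stdev
from typing import Sequence


def is_spike(history: Sequence[int], today: int,
             z_threshold: float = 3.0) -> bool:
    """Flag today's count if it lies far above the historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate variability
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        return today > mu
    return (today - mu) / sigma > z_threshold


# Daily counts of negative reviews mentioning one topic over the past week.
past_week = [2, 3, 1, 2, 4, 3, 2]
alert = is_spike(past_week, today=12)   # far above the usual range
quiet = is_spike(past_week, today=4)    # within normal variation
```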

V. Building the Data Foundation: Providing Continuous Data Fuel for AI via the Pangolinfo API Matrix

Designing a perfect AI framework is merely drawing the blueprint. Any AI model—no matter how exquisite its algorithm—will become a worthless empty shell if it lacks continuous, real-time, structured, and authentic data sources as fuel. In dealing with Amazon’s increasingly stringent anti-scraping mechanisms and ever-changing page structures, relying on internal teams to write fragile custom scraper scripts has proven to be a dead end; this not only consumes hundreds of thousands of dollars in hidden development and maintenance costs but also leads to frequent data stream interruptions, directly paralyzing the AI decision-making system.

To provide continuous power to the massive and complex AI decision-making framework, enterprises must integrate professional enterprise-grade data infrastructure into their IT pipelines. In this regard, Pangolinfo provides a full suite of API matrices (https://www.pangolinfo.com/) tested by massive high concurrency, aiming to help developers and cross-border sellers build an indestructible “Data + AI” data moat at the lowest integration cost. The deep access of the following four core APIs is the key to completing this data empowerment.

First is the Scrape API (Universal Data Scraping Interface), which reconstructs the underlying commercial competitive intelligence system. In high-frequency cross-border e-commerce transactions, mastering competitors’ real-time BSR rankings, true price changes, and promotional methods is a prerequisite for dynamic pricing. Pangolinfo’s Scrape API provides a cloud-based data engine with extremely strong anti-blocking capabilities. It features a built-in deep residential Proxy Rotation system and can automatically and seamlessly handle anti-bot systems like CAPTCHA verifications that hinder large-scale scraping. This API not only extracts data from Amazon but also covers cross-platform arenas like Walmart and Shopify, guaranteeing up to a 99.9% enterprise-grade request success rate with latency often controlled at the millisecond level. More critically, this API supports outputting highly structured JSON format data directly. Compared to processing noisy and structurally variable raw HTML code, this structured data contract not only greatly reduces the workload of subsequent data cleaning but also minimizes Token waste when inputting into LLMs for reasoning, enabling the AI framework to smoothly ingest category trend data at set frequencies (e.g., every 15 minutes) and render accurate competitive panoramas on backend dashboards in real-time.

Next is the AI SERP API, an interface to AI-augmented search engine results pages (SERPs), used to capture the “Rank Zero” dividend of off-site search in the AEO era. When up to 30% of informational queries are answered directly by generative overviews such as Google AI Overviews, traditional rank-monitoring tools lose much of their value. Pangolinfo’s AI SERP API is designed for this paradigm shift. It parses the underlying structure of AI-generated results and captures recommendation signals that standard crawlers cannot reach. By analyzing the cited links, the authority of sources, and the Share of Model of specific brand terms extracted from AI overviews, a seller’s marketing AI system can infer the large model’s preferences and adjust its content strategy accordingly. The API also natively supports UULE parameters, allowing sellers to simulate localized search results in any city worldwide (such as New York or Tokyo), which is crucial for evaluating a product’s localization performance in different cross-border markets. In addition, the interface can generate a real-time full-screen webpage screenshot (attached in Base64 format) while extracting data, providing visual evidence for corporate compliance reviews and cross-departmental confirmation.

On the deep battlefield of product R&D and consumer insights, the Amazon Review API plays a vital role. Buyer reviews are excellent materials for optimizing products and guiding R&D, but manually reading and categorizing tens of thousands of multilingual reviews is unrealistic. This Review API provides a standardized RESTful interface supporting refined filtering and deep scraping of reviews by star rating, time, and region. It not only scrapes text content but also synchronously extracts review timestamps, buyer persona correlation info, and highly persuasive buyer-show images. Through its provided Webhook mechanism, the AI framework can receive push notifications of incremental reviews in real-time. When these massive review data streams are formatted into JSON and continuously fed to Claude models deployed on Amazon Bedrock, powerful large language models can quickly execute Sentiment Analysis, high-frequency word cloud extraction, and pro-con comparisons. This automated process can help brands instantly locate core pain points amidst massive noise and guide supply chains for targeted improvements.

Finally, on the compliance level of brand globalization and risk prevention, the WIPO API (World Intellectual Property Organization Interface) builds a solid defense wall. Transnational intellectual property infringement is a fatal factor leading to instant Amazon store closures. By connecting to the WIPO API provided by Pangolinfo, the AI analysis framework can automatically conduct high-concurrency screening and cross-retrieval of patents, trademarks, industrial designs, and copyrights globally at the very front end of the product selection process. This preemptive automated risk exclusion greatly reduces the probability of a new product being taken down due to complaints after launch. Meanwhile, through continuous automated scanning of core competitors’ recent patent application trends, AI can deeply deduce the other party’s next-generation product technology roadmap, helping enterprises adjust their own R&D direction and market entry schedule in advance, turning passive defense into active attack.

By deeply combining and applying this API matrix, cross-border e-commerce enterprises completely break through the data barriers from market intelligence acquisition and consumer sentiment analysis to intellectual property defense, laying the most solid and broad data highway for the upper complex AI decision-making framework. Furthermore, its billing model has high elasticity and transparency, significantly reducing the Total Cost of Ownership (TCO) compared to self-built underlying infrastructure.

VI. Moving Towards Artificial General Intelligence: Agent Generic Skill Practices Encapsulated for Scrape API and AI SERP API

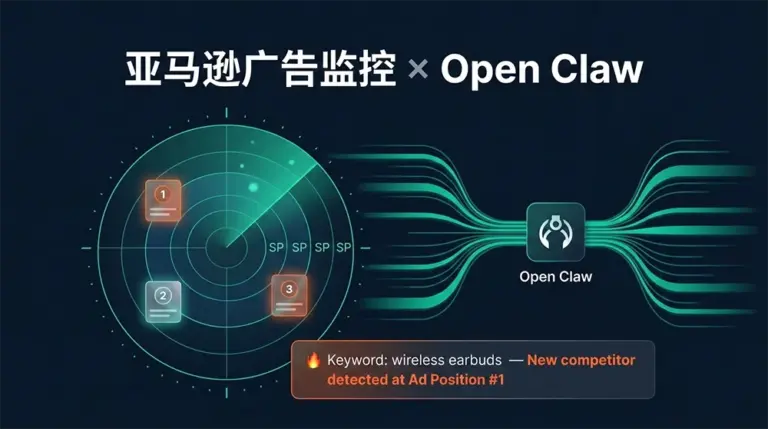

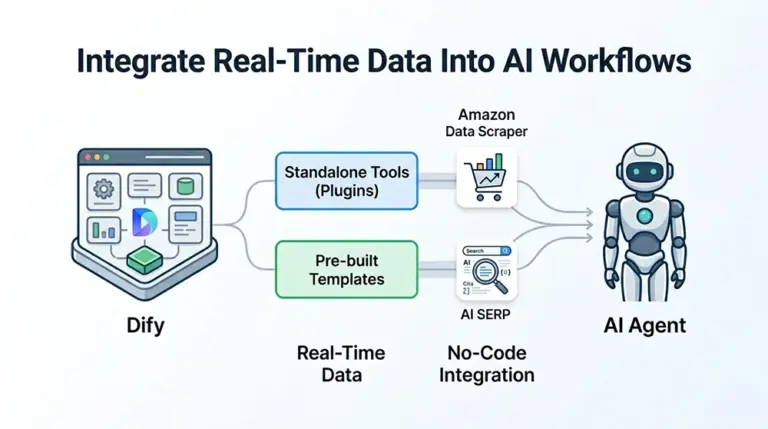

In the mature developer ecosystem and AI application environment of 2026, the logic of building enterprise technical teams is evolving from “writing rigid API calling scripts” to “assembling intelligent Agents with autonomous interaction and reasoning capabilities.” To enable advanced Agent IDEs like OpenClaw, Claude Code, Cursor, and even Devin to directly and seamlessly converse with the complex external data world, Pangolinfo proactively encapsulated its powerful underlying APIs into out-of-the-box “Skill” components, greatly lowering the engineering threshold for implementing AI frameworks, so that business personnel or LLMs who know nothing about underlying scraping logic can easily fetch global trade data.

The most representative one is the Amazon Scraper Skill used for e-commerce data extraction (for detailed documentation, see https://www.pangolinfo.com/amazon-scraper-skill/). This is a thoroughly No-code data agent skill package specially designed to convert noisy, massive, and ever-changing raw Amazon webpage HTML into structured JSON data with deterministic fields, thereby completely preventing hallucinations and Token waste produced by large models when processing unstructured data. In actual business deployment, developers only need to input an extremely simple command in the command line (e.g., npx clawhub@latest install pangolinfo-amazon-scraper --force) to grant this skill to the agent. Once installed, the agent’s working mode undergoes a revolutionary change. Operations managers can directly issue instructions to the Agent via natural language, for example: “Help me analyze the Top 1000 Best Sellers in the ‘portable fan’ category on the US site, extract their core price bands, and filter out dark horse products ranked in the top 50 but with fewer than 500 reviews.” Upon receiving the instruction, the Agent will automatically mount the Amazon Scraper Skill, concurrently schedule up to 50 proxy threads in the cloud, bypass Amazon’s CAPTCHA verifications in milliseconds, accurately extract the required massive JSON data, and complete filtering, comparison, and statistics in memory. Ultimately, it not only reports the analytical conclusion in natural language but also automatically pushes the cleaned price and inventory data streams synchronously to team BI dashboards like Lark, Airtable, or Google Sheets for persistent tracking. This closed loop from instruction input to data delivery completely liberates the originally tedious manual collection work.
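Once the data arrives as structured JSON, the “dark horse” filter from the example instruction is a few lines of ordinary code. The sketch below works on hand-written sample records: the field names (`asin`, `rank`, `price`, `review_count`) and the ASIN values are hypothetical placeholders, not the actual output schema of the Amazon Scraper Skill, and the price band is reduced to a simple minimum and maximum.

```python
from typing import Dict, List, Tuple

Product = Dict[str, object]


def find_dark_horses(products: List[Product], max_rank: int = 50,
                     max_reviews: int = 500) -> List[Product]:
    """Products ranked in the top `max_rank` with fewer than `max_reviews` reviews."""
    hits = [p for p in products
            if p["rank"] <= max_rank and p["review_count"] < max_reviews]
    return sorted(hits, key=lambda p: p["rank"])


def price_band(products: List[Product]) -> Tuple[float, float]:
    """Lowest and highest price in the list."""
    prices = sorted(p["price"] for p in products)
    return prices[0], prices[-1]


# Hypothetical records standing in for structured scraper output.
sample = [
    {"asin": "B0EXAMPLE01", "rank": 3, "price": 24.99, "review_count": 8421},
    {"asin": "B0EXAMPLE02", "rank": 17, "price": 19.99, "review_count": 312},
    {"asin": "B0EXAMPLE03", "rank": 42, "price": 32.50, "review_count": 95},
    {"asin": "B0EXAMPLE04", "rank": 88, "price": 15.00, "review_count": 120},
]

dark_horses = find_dark_horses(sample)
low, high = price_band(sample)
```

Because the fields are deterministic, the filtering runs without any model involvement; the LLM is only needed to translate the natural-language request into these parameters and to summarize the result.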

Another extremely powerful component is the AI SERP Skill focused on panoramic off-site search engine insights (for detailed documentation, see https://www.pangolinfo.com/ai-serp-skill/). This skill is not only capable of executing traditional keyword searches, but its core lies in specialized deep feature extraction targeting generative overviews like Google AI Overviews. By introducing Multi-modal native dialogue support, this skill upgrades a single-dimensional crawler into a continuous mining engine with memory and context correlation capabilities. In commercial combat, PR marketing teams can use this Skill to deploy a “Brand Cyber Monitoring Agent.” Every day, this agent retrieves pain-point keywords related to its own brand and competitors on global multi-language search engines, utilizes the AI SERP Skill to extract the summary evaluations generated by large models, and tracks the core cited sources behind these evaluations. Even more uniquely, the skill’s visual engine can capture a real-time full-screen webpage screenshot at the moment of data scraping and attach it to the data packet. If the agent discovers that a regional Google AI recommendation engine starts citing large-scale negative news against its brand, it immediately triggers commercial alerts in internal communication channels (like Slack), accompanied by screenshot evidence with concrete timestamps and detailed analysis reports, and can even directly generate several targeted SEO crisis PR article outlines based on the scraped context for human decision-makers to review and confirm. Through this tool, enterprises truly implement AEO (AI Engine Optimization) from a concept into a day-by-day automatically executed defensive counter-attack strategy.

VII. Conclusion

In summary, as we enter 2026, the competitive landscape of Amazon cross-border e-commerce has irreversibly upgraded from pure supply chain dividend battles and low-level human labor stacking to multi-dimensional algorithmic gaming deeply empowered by AI and driven by real-time massive data. Today, as profit margins are continuously compressed and compliance policies grow stricter, sellers still intoxicated by past success, relying on manual processing of lagging reports, and depending on simple rule engines for ad bidding are destined to be dragged into the abyss by this “path dependency.”

The essence of digital transformation lies in rebuilding the enterprise’s business brain and nervous system, that is, building an Agentic AI analysis, decision-making, and recommendation framework capable of self-learning, forward-looking prediction, and compliant execution. What gives this brain its intelligence is the continuous, block-resistant, and highly structured real-world business data at the bottom layer. By integrating the enterprise-grade interfaces provided by Pangolinfo (the Scrape API for breaking information barriers, the AI SERP API for competing in the AEO era, the Amazon Review API for understanding consumers, and the WIPO API for building a global intellectual property defense), and by using the encapsulated Agent Skills (such as the Amazon Scraper Skill and the AI SERP Skill) to give agents execution and interaction capabilities, Amazon sellers can reshape their core business chains with low trial-and-error costs and high operational efficiency.

In this new trade era dominated by computing power, algorithms, and high-quality data, only cross-border e-commerce enterprises that fully embrace AI-driven frameworks and deeply integrate underlying data infrastructure with top-level business logic can consistently seize opportunities under the wash of the global digital wave and forge an indestructible business moat that spans economic cycles.
