1. Introduction: The Bottleneck of Traditional SaaS and the Paradigm Shift to Agentic AI

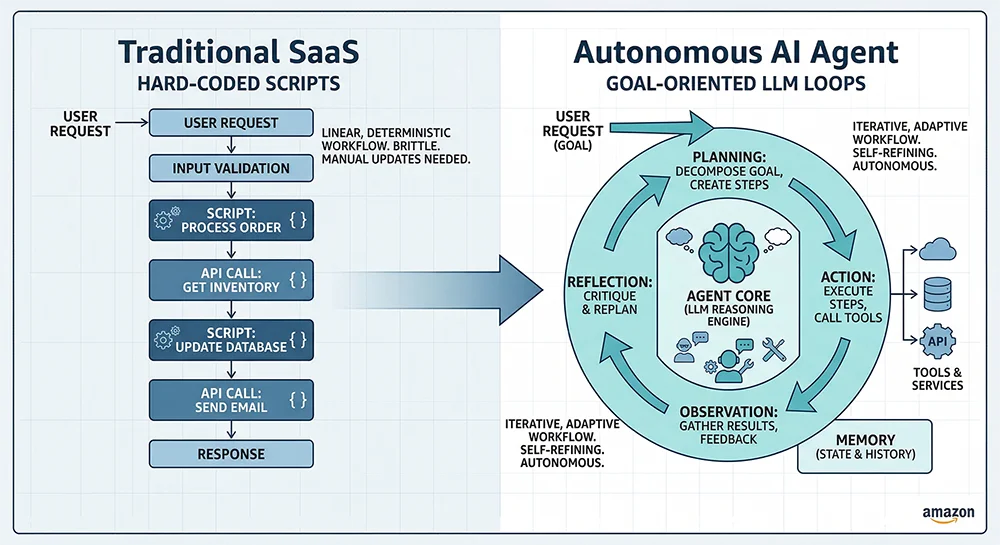

In the fast-moving cross-border e-commerce landscape, the tooling that sellers build around Amazon is being transformed by breakthroughs in Large Language Models (LLMs) and by the limits of what earlier automation could compute. Traditional automation software and rule-based scripts are constrained by hard-coded conditional branches; they can only execute pre-defined schedules (e.g., timed repricing or automated emailing). Confronted with ever-expanding SKU catalogs, rising Cost-Per-Click (CPC), and unpredictable weighting shifts in the A10 algorithm, this legacy paradigm shows serious flaws: sluggish response times, burdensome maintenance loops, and outright failure when an edge case or black swan event falls outside the rules.

Top-tier Amazon operations teams are approaching a practical human limit: managing hundreds of long-tail SKUs while processing dozens of intersecting data dimensions within decision windows that have shrunk to about two hours. This cognitive bottleneck catalyzed the emergence of Agentic AI. Unlike generic dialogue LLMs, which act as passive natural language processors, commercial AI agents run goal-oriented behavioral loops. They combine environmental perception, multi-step planning, the ability to call external tools, and episodic memory that persists across cycles. However, the efficacy of this digital “prefrontal cortex” depends heavily on the quality and depth of its underlying data lake: the data ingested for pre-training, fine-tuning, RAG deployment, and continuous RLHF correction.

2. Reconstructing Perception: Natural Language & Multi-Modal Semantic Data Processing

Whether navigating complex customer service mediation to prevent A-to-Z claims or optimizing product listing semantics (SEO) for organic search retention, interactions within Amazon’s B2C ecosystem orbit around a highly coupled natural language matrix. Consequently, digesting immense volumes of high-dimensional text laden with emotional intent remains the critical starting point in assembling Amazon AI Agent Training Data.

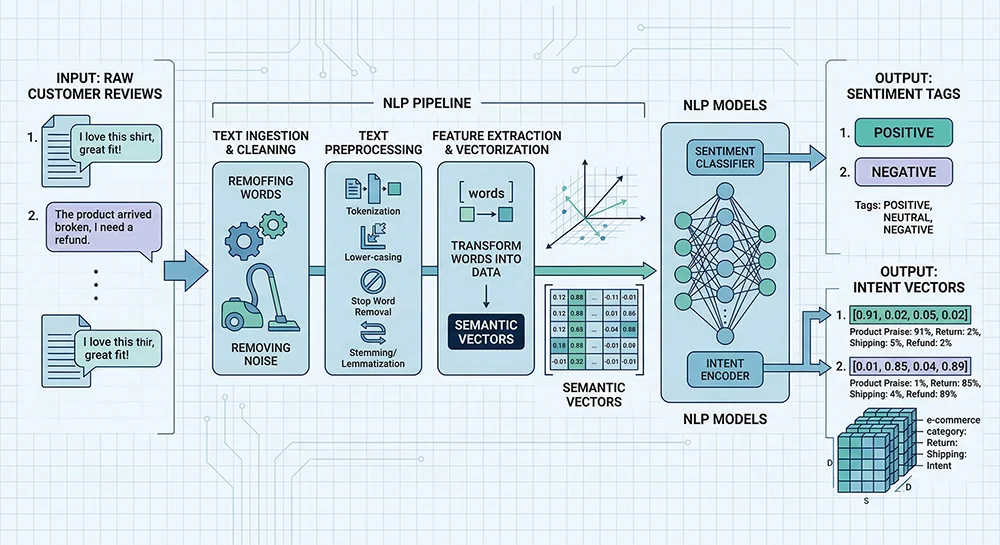

2.1 Mining Unstructured Reviews and Q&A Clustering Corpora

Beyond influencing overall conversion rates, customer reviews are a semantic goldmine of long-tail feature demands and granular product defects. Training a market research Agent requires feeding it continuous streams of raw, noisy review text together with star ratings, cross-regional timestamps, and “Helpful” vote counts. Using Transformer-based architectures like BERT, the Agent performs sentiment analysis and opinion entity mining. Classical preprocessing steps such as tokenization, lemmatization, and dependency parsing help filter out low-information function words and lock onto high-value purchase intents. For example, identifying the entity “power bank” together with the defect “rapid battery loss in cold” allows the agent to propose “cold-weather endurance” as a promising long-tail keyword for organic search.
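As a rough illustration of the filtering and weighting idea above, here is a minimal sketch that counts two-word phrases in reviews and weights each review by its “Helpful” votes. It is a simplified stand-in, not a BERT pipeline: the stop-word list, the review records, and the function names are all assumptions made up for this example.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list (assumption); a real pipeline would use a fuller one.
STOP_WORDS = {"the", "a", "an", "in", "on", "of", "and", "is", "it", "to", "this", "my"}

def tokenize(text):
    """Lowercase, keep alphabetic tokens, and drop stop words."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOP_WORDS]

def weighted_bigrams(reviews):
    """Count neighbouring token pairs (after stop-word removal),
    weighting each review by 1 + its helpful votes."""
    counts = Counter()
    for review in reviews:
        tokens = tokenize(review["text"])
        weight = 1 + review["helpful_votes"]
        for pair in zip(tokens, tokens[1:]):
            counts[" ".join(pair)] += weight
    return counts

# Hypothetical sample data, invented for the sketch.
reviews = [
    {"text": "Battery drains fast in cold weather", "helpful_votes": 12},
    {"text": "The battery drains in the cold", "helpful_votes": 3},
    {"text": "Great power bank, charges my phone twice", "helpful_votes": 0},
]

top_phrases = weighted_bigrams(reviews).most_common(3)
print(top_phrases)
```

Weighting by helpful votes is one simple way to let reviews that other shoppers found useful dominate the phrase ranking; a production system would likely replace the bigram counter with model-based aspect extraction.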

However, acquiring this data presents an engineering dilemma. Traditional scraping scripts tend to trigger aggressive anti-bot countermeasures and often return mangled DOM fragments. Dedicated enterprise interfaces like the Reviews Scraper API are designed to sidestep these obstacles: they render Amazon pages on headless cloud nodes and convert the raw HTML into clean, structured data streams that are ready for vector embedding, giving subsequent semantic AI training a solid foundation.

2.2 Intent Classification from Multilingual Customer Service Trajectories

Deploying a 24/7 autonomous customer service agent demands millions of carefully vetted cross-lingual interaction logs. The intent recognition network needs labeled intent-response pairs to resolve ambiguities (e.g., mapping “I moved” to an explicit `update_order_address` API call). A confidence scoring framework must also be integrated to enforce firm operational boundaries. When the NLP engine detects sustained negative sentiment, a critical account security trigger, or a low-confidence prediction, the agent immediately falls back to human-in-the-loop (HITL) escalation, shielding the seller’s account metrics from unpredictable model hallucinations.
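The routing rule described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the threshold value, the escalation term list, and the `Prediction` type are hypothetical, and the classifier that produces the prediction is left out entirely.

```python
import re
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.80  # assumed cutoff; tune against real escalation data
ESCALATION_TERMS = {"hacked", "unauthorized", "fraud"}  # illustrative security triggers

@dataclass
class Prediction:
    intent: str        # e.g. "update_order_address"
    confidence: float  # classifier score in [0, 1]

def route(message, prediction):
    """Return the intent to execute, or hand off to a human agent."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    if words & ESCALATION_TERMS:
        return "escalate_to_human"  # security trigger overrides the model
    if prediction.confidence < CONFIDENCE_THRESHOLD:
        return "escalate_to_human"  # model is not sure enough to act alone
    return prediction.intent

print(route("I moved last week", Prediction("update_order_address", 0.93)))
print(route("My account was hacked!", Prediction("update_order_address", 0.99)))
```

Checking the hard triggers before the confidence score means a confident but wrong prediction can never act on a security-sensitive message.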

3. Anchoring the Underlying Search Logic: Structured Catalogs and Knowledge Graphs

While natural language acts as the agent’s sensory organs, Amazon’s rigid, monumental structured product database and strict taxonomy mechanics form the marketplace’s skeleton. To automate catalog insertion and organic SEO seamlessly, an AI must be tightly coupled with these underlying protocols.

3.1 Internalizing Browse Nodes and Item Type Dynamics

The A9/A10 search ranking engines rely heavily on the granular browse-tree structure, and each node branch carries its own traffic weighting. Training a listing agent therefore demands terabytes of time-series node classification data. Using supervised learning, the Agent predicts the most specific, least competitive leaf node from nothing more than a plain-text product description. Keeping structured attribute data (e.g., multi-region material compliance parameters) complete and aligned with the chosen node also helps prevent listing suppression when automated sweeps detect missing sub-category validations.

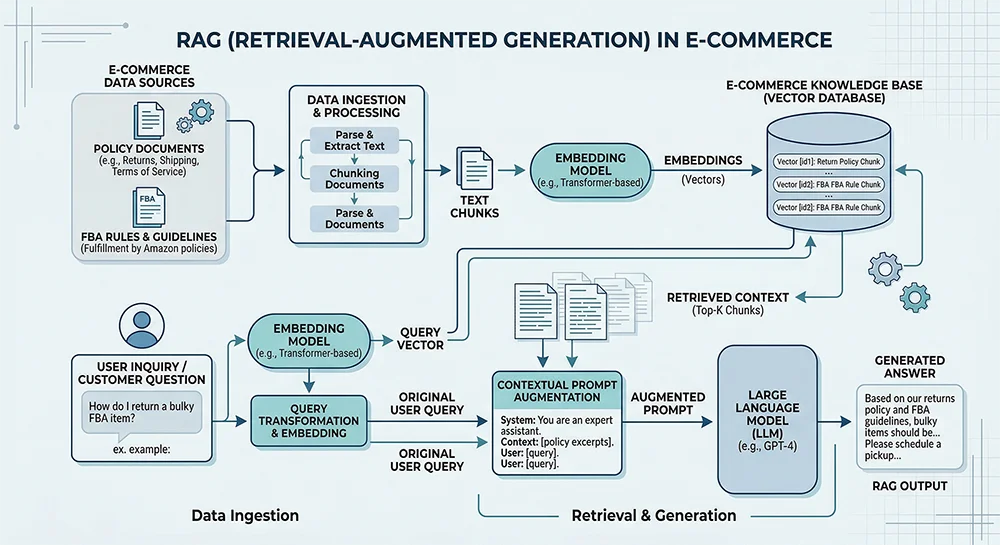

3.2 Mitigating Hallucinations via Retrieval-Augmented Generation (RAG)

Large language models have a dangerous tendency to hallucinate procedural details, because they generate text by probabilistic interpolation rather than by lookup. In highly regulated areas like Amazon FBA hazardous materials handling or complex warehousing logistics, a hallucinated instruction risks account suspension. Integrating a Retrieval-Augmented Generation (RAG) data flow is therefore the baseline safeguard. The latest FBA dimensional policies, international compliance certificates, and internal Standard Operating Procedures (SOPs) are split into chunks, embedded as high-dimensional floating-point vectors, and stored in a vector database. When queried regarding “lithium battery FBA requirements,” the agent does not answer from its parametric memory alone; it runs a cosine similarity search against these authoritative documentation chunks and passes the best matches into the LLM context window, so that the resulting directive can be traced back to its sources.
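To make the retrieval step concrete, here is a minimal sketch of cosine similarity search over document chunks. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and the chunk ids and texts are placeholders invented for the example, not actual Amazon policy text.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query, chunks, k=2):
    """Return up to k chunks with non-zero similarity, best match first."""
    query_vec = embed(query)
    scored = [(cosine_similarity(query_vec, embed(c["text"])), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for score, c in scored[:k] if score > 0]

def build_prompt(query, retrieved):
    """Put retrieved chunks, tagged with their ids, in front of the question."""
    context = "\n".join(f"[{c['id']}] {c['text']}" for c in retrieved)
    return f"Answer using only the sources below and cite their ids.\n{context}\n\nQuestion: {query}"

# Placeholder chunks (hypothetical ids and wording).
chunks = [
    {"id": "doc-battery", "text": "Placeholder policy text about lithium battery packaging requirements for FBA shipments"},
    {"id": "doc-returns", "text": "Placeholder SOP text about processing customer returns"},
    {"id": "doc-size", "text": "Placeholder policy text about FBA size tiers"},
]

hits = retrieve("lithium battery FBA requirements", chunks)
print(build_prompt("lithium battery FBA requirements", hits))
```

Carrying the chunk id into the prompt is what makes the answer traceable: the model can be asked to cite ids, and a reviewer can check each one against the source document.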

4. Dynamic Quantitative Benchmark Networks: Orchestrating Millisecond Commerce

Granting an AI Agent “commercial intuition” necessitates enormous volumes of time-series data and autoregressive modeling frameworks to survive Amazon’s draconian ROI models and supply-chain pressures.

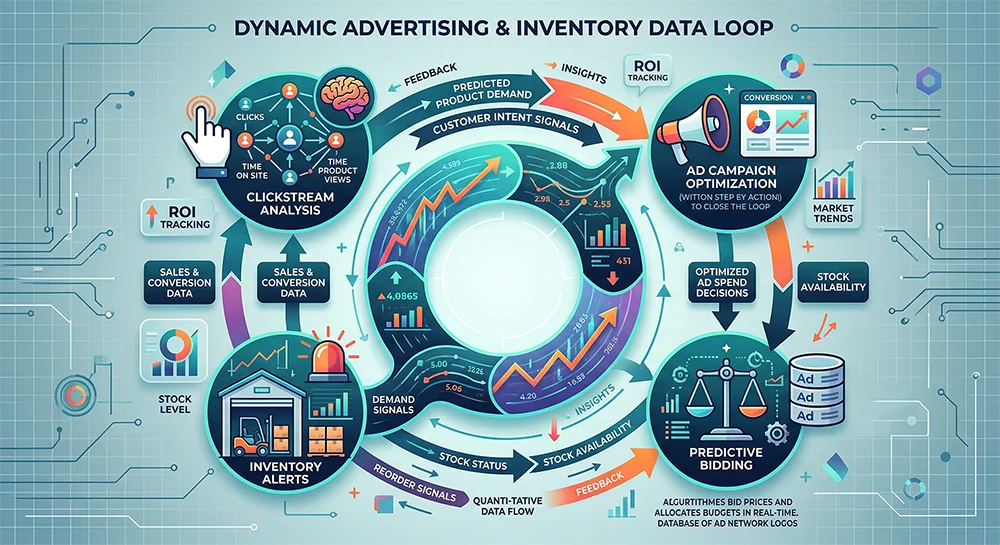

4.1 Clickstream Ad Strategy and High-Frequency Matrix Tracking

Autonomous PPC (Pay-Per-Click) agents depend on the most data-intensive reinforcement learning loops. Continuously ingesting impressions, click-through rate (CTR), CPC, and Total Advertising Cost of Sales (TACoS) across thousands of keywords and match types builds the predictive foundation. By fitting Bayesian optimization or polynomial regression models to several months of long-tail keyword performance, the Agent can keep each bid close to the marginal value of a conversion at any given moment. Sourcing this real-time stream is notoriously difficult, and daily reports alone are too stale for the job. Enterprise pipelines like the Pangolinfo Scrape API are positioned to offer minute-level refresh rates. This compliant, high-frequency ingestion feeds AI bidding engines with fresh intelligence and creates a durable information advantage over competitors.
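One small piece of this bidding logic can be written down as arithmetic. The sketch below is a deliberate simplification, not full Bayesian optimization: it smooths a keyword's conversion rate with a Beta prior (useful for long-tail keywords with few clicks) and derives a bid ceiling from a target ACoS, using the identity ACoS = CPC / (conversion rate × average order value). The prior parameters and sample figures are assumptions.

```python
def posterior_cvr(clicks, orders, prior_alpha=1.0, prior_beta=9.0):
    """Posterior mean conversion rate under a Beta(prior_alpha, prior_beta) prior.
    The default prior encodes an assumed 10% baseline conversion rate."""
    return (orders + prior_alpha) / (clicks + prior_alpha + prior_beta)

def max_cpc_bid(clicks, orders, avg_order_value, target_acos):
    """Highest CPC that keeps expected ACoS at or below the target.
    Expected sales per click = CVR * AOV, so the ceiling is target_acos * CVR * AOV."""
    cvr = posterior_cvr(clicks, orders)
    return round(cvr * avg_order_value * target_acos, 2)

# Hypothetical keyword stats: 90 clicks, 19 orders, $30 average order, 25% target ACoS.
print(max_cpc_bid(clicks=90, orders=19, avg_order_value=30.0, target_acos=0.25))
# A brand-new keyword with no data falls back to the prior.
print(max_cpc_bid(clicks=0, orders=0, avg_order_value=30.0, target_acos=0.25))
```

The prior keeps a keyword with two clicks and one order from being treated as a 50% converter; as clicks accumulate, the observed data dominates.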

4.2 Supply Chain Autopilot: Inventory Flux and Sales Velocity Predictions

A capable supply chain intelligence Agent continuously tracks rolling 14-to-30-day sales velocity alongside absolute stock depth across FBA and 3PL nodes. Integrating a price elasticity function allows the agent to run preemptive demand simulations. If the agent detects an impending stockout combined with a delayed Purchase Order (PO), it autonomously raises the retail price to throttle demand, which prevents the lasting ranking damage caused by zero-inventory days. It then drops the price again to recapture the Buy Box as soon as new inventory clears Amazon’s receiving dock.
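The throttling decision above can be sketched with a constant-elasticity demand model, in which demand scales as (price ratio) raised to the elasticity. This is a minimal sketch under that assumption; the function name, the cap on price increases, and the sample numbers are hypothetical.

```python
def price_multiplier(stock_units, daily_velocity, days_until_restock,
                     elasticity, max_raise=1.25):
    """Price multiplier that stretches current stock until the restock date.

    Assumes constant elasticity: new_velocity / old_velocity = multiplier ** elasticity,
    with elasticity < 0. The result is capped at max_raise (an assumed guardrail).
    """
    if elasticity >= 0:
        raise ValueError("elasticity must be negative for normal goods")
    if daily_velocity <= 0 or days_until_restock <= 0:
        return 1.0  # nothing selling, or stock arrives immediately
    if stock_units <= 0:
        return max_raise  # already out of stock; apply the cap
    days_of_cover = stock_units / daily_velocity
    if days_of_cover >= days_until_restock:
        return 1.0  # enough stock; leave the price alone
    target_velocity = stock_units / days_until_restock
    multiplier = (target_velocity / daily_velocity) ** (1.0 / elasticity)
    return min(multiplier, max_raise)

# Hypothetical case: 100 units, 10 sold per day, restock in 20 days, elasticity -2.
print(price_multiplier(100, 10, 20, elasticity=-2.0))                 # capped at 1.25
print(price_multiplier(100, 10, 20, elasticity=-2.0, max_raise=2.0))  # uncapped value
```

In the hypothetical case, stock covers 10 days but the restock is 20 days out, so velocity must halve; at an elasticity of -2 that requires a price multiplier of about 1.41, which the default cap limits to 1.25.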

5. The 2026 Compliance Paradigm: BSA Regulations and Non-Invasive Data Guardrails

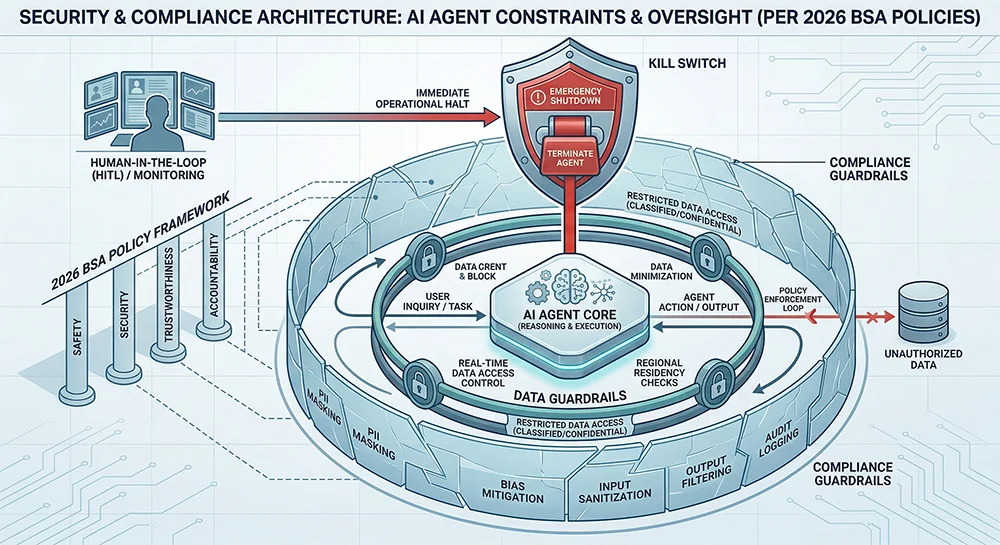

The proliferation of AI has triggered an intense counter-escalation in platform oversight. The 2026 updates to the Amazon Business Solutions Agreement (BSA) impose strict data acquisition restrictions, most notably an outright prohibition on programmatic reverse engineering that uses Amazon’s interface to train external multi-modal AI models.

5.1 The Dynamic ‘Kill Switch’ and Identity Verification

Modern compliance demands that any Agent interacting with Amazon systems declare its automated identity in its HTTP/API request headers. Furthermore, the orchestrator node must incorporate a hard-coded, low-latency “Kill Switch.” If Amazon’s Web Application Firewall returns an anomalous access signal or a rate-limit block, the agent must immediately terminate its connection loop rather than retry, so that continued automated traffic does not put the seller account at risk of suspension.
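A kill switch of this kind behaves like a circuit breaker that never resets on its own. The sketch below is illustrative only: the status codes treated as block signals, the threshold, and the header value are assumptions, and the responses are simulated rather than fetched over the network.

```python
# Hypothetical self-identifying header; the exact format an agent must use is not specified here.
AGENT_HEADERS = {"User-Agent": "example-seller-agent/1.0 (automated agent)"}

# Status codes treated as block signals (assumption for this sketch).
BLOCK_STATUS_CODES = {403, 429}

class KillSwitch:
    """Trips permanently once enough block signals are seen."""

    def __init__(self, max_block_signals=3):
        self.max_block_signals = max_block_signals
        self.block_signals = 0
        self.tripped = False

    def record_response(self, status_code):
        if status_code in BLOCK_STATUS_CODES:
            self.block_signals += 1
            if self.block_signals >= self.max_block_signals:
                self.tripped = True  # stays tripped until a human resets it

    def allow_request(self):
        return not self.tripped

def run_session(switch, status_codes):
    """Replay simulated responses; stop sending as soon as the switch trips."""
    sent = 0
    for code in status_codes:
        if not switch.allow_request():
            break
        sent += 1
        switch.record_response(code)
    return sent

switch = KillSwitch(max_block_signals=2)
sent = run_session(switch, [200, 429, 200, 403, 200, 200])
print(sent, switch.tripped)
```

Successful responses deliberately do not reset the counter: once the platform has signalled a block twice, the safer default is to stop and wait for a human decision.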

5.2 Secure Decoupled Ingestion via Enterprise Proxies (The Pangolinfo Approach)

With “data vacuuming” via raw DOM headless rendering strictly penalized, enterprise operations are forced into isolated cloud sandboxes to maintain their independent data lakes. Starving the data lake, however, starves the AI models that depend on it. Bridging this gap means relying on specialized intermediary layers like the Pangolinfo Scrape API. Raw collection workloads are offloaded to a widely distributed, SLA-backed proxy infrastructure that is fully decoupled from the seller’s sensitive operational systems, and businesses receive cleaned, API-ready JSON streams in return. This architecture is intended to keep the seller’s own systems clear of the reverse-engineering prohibitions while preserving the ingestion throughput needed to keep training advanced models.

6. Final Conclusions & Strategic Roadmap

Constructing a versatile Agentic AI system for the fiercely hostile Amazon marketplace surpasses the mere utilization of text generators. From distilling multi-modal semantics into vector clusters and securing knowledge architectures through RAG guardrails, to operating high-frequency time-series optimization graphs under brutal BSA compliance redlines, success depends entirely on the orchestration of pure, immense, and compliant data streams.

Future market leaders will be the enterprises that deploy decoupled data infrastructure today. We advise businesses to retire fragmented legacy scripts and adopt scalable, transparent acquisition channels like Pangolinfo. By feeding an autonomous AI brain legitimate, high-frequency signals, organizations can turn these theoretical blueprints into a decisive operational advantage in the hyper-competitive e-commerce arena.

Actionable Next Step: Do not let your AI infrastructure stall due to antiquated data blockages. Evaluate the unparalleled capabilities of the Pangolinfo Scrape API architecture today, and build the ultimate enterprise-grade data pipeline to fuel your Amazon AI Agents!