Every Amazon seller chases the same goal: find that product with real demand, thin competition, and a margin worth fighting for. The industry calls it a “winning product” or a “blue ocean opportunity”—and just about every tool vendor promises to help you find one. The reality is far messier. Most sellers spend weeks digging through spreadsheets, third-party dashboards, and browser extension popups, only to discover that the “opportunity” they identified already had three new entrants by the time their purchase orders cleared customs.

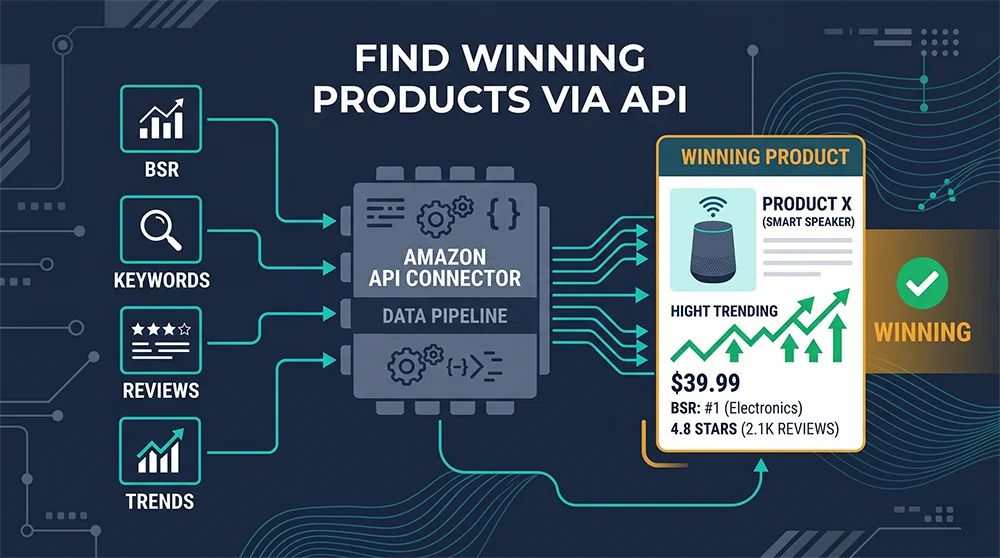

The root cause isn’t a lack of effort. It’s a data infrastructure problem. The ability to find winning products on Amazon via API—using real-time, first-hand data rather than processed, time-delayed snapshots—has become the single biggest competitive differentiator between sellers who catch opportunities early and those who arrive at the party after it’s over.

In 2026, the average active seller count per category on Amazon has grown by roughly 35-40% compared to 2023. Category windows have compressed. A product that might have had a three-month blue-ocean window in 2021 now gets discovered, copied, and commoditized within weeks. Against this backdrop, continuing to rely on weekly-updated SaaS tools or manual browsing of public listings is roughly equivalent to using yesterday’s weather report to decide whether to carry an umbrella today.

This guide breaks down every major Amazon product research API and data source type available in 2026—what each delivers, what it can’t, and how to combine them into a discovery pipeline that can actually help you find winning products on Amazon via API before your competitors even know the opportunity exists. Whether you’re a solo seller just starting to scale or a technical team building automated sourcing workflows, there’s a practical path forward here.

Why Traditional Methods Fail to Find Winning Products on Amazon

Before mapping out which data sources actually work, it’s worth understanding precisely why the conventional approach breaks down. The typical product research workflow looks something like this: a seller visits Amazon’s Best Sellers list, cross-references it with a browser extension that shows estimated monthly sales, exports the data to a spreadsheet, and then runs it against a SaaS tool’s competitor analysis module. It sounds thorough. In practice, it has three compounding failure modes.

The data lag problem. Every subscription-based research tool runs on a crawl-and-cache model: data is scraped from Amazon at some interval (often once every 24-48 hours for most categories, sometimes less frequently for niche nodes), processed, and stored in the tool’s database. By the time that data reaches your screen, it might be 72 hours old. For a hot new release or a fast-moving trend, 72 hours is an eternity. The sellers who learn about an opportunity from a tool’s database are, by definition, not the first movers.
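
To make the lag concrete, here is a minimal sketch of the arithmetic. It assumes a record can be as old as one full crawl interval plus whatever processing delay the tool adds; the 24-hour processing figure is an illustrative assumption, not a measured value for any specific tool.

```python
from datetime import datetime, timedelta


def worst_case_age_hours(crawl_interval_h: float, processing_delay_h: float) -> float:
    """Oldest a cached record can be just before its replacement is published."""
    return crawl_interval_h + processing_delay_h


def is_stale(collected_at: datetime, now: datetime, max_age: timedelta) -> bool:
    """True when a record is older than the freshness budget you set."""
    return now - collected_at > max_age


# 48-hour crawl interval plus an assumed 24 hours of processing.
print(worst_case_age_hours(48, 24))  # 72
```

A freshness check like `is_stale` is worth running on any data source before you act on it, whatever the vendor's stated update frequency.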

The dimensionality problem. Identifying a winning product requires synthesizing multiple data signals simultaneously: BSR trend velocity, keyword search volume trajectory, sponsored ad density (which indicates monetization competition), review growth rate, price compression over time, and category-level inventory dynamics. No single free tool covers all of these. Most sellers end up stitching together information from three to five different sources, introducing inconsistencies in time horizons and measurement methodologies that make cross-dimensional analysis unreliable.

The homogenization problem. When thousands of sellers use the same tool to identify the same “opportunity signals,” those signals stop being signals. The market processes the information quickly, and what looked like a blue ocean becomes a crowded pool before most people can react. Running a product discovery workflow identical to your competitors’ is a race in which you have no structural advantage.

The Data Source Landscape: 6 Ways Sellers Research Amazon Products

With those failure modes in mind, here’s a structured breakdown of the six major approaches to Amazon product research data, ranked roughly from lowest to highest data quality and real-time fidelity.

1. Amazon’s Native Public Listings

Amazon’s Best Sellers, New Releases (titled Hot New Releases on the site), and Movers & Shakers pages are genuinely valuable, and underused in their raw form. The Best Sellers list updates hourly, making it one of the most current free signals available. Movers & Shakers shows the products with the largest sales rank gains over the past 24 hours, which is a strong early indicator of demand acceleration. The limitation is depth: these pages tell you what’s moving, not why, and they provide no contextual data about competition intensity, keyword dynamics, or margin potential. They function best as a first-pass filter, not a decision tool.
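
If you store your own rank snapshots, you can reproduce the Movers & Shakers idea for any set of ASINs. The sketch below assumes two snapshots taken 24 hours apart, each a plain mapping of ASIN to rank, and uses one common convention for percentage gain; it is not presented as Amazon's own formula.

```python
def rank_gain_pct(rank_before: int, rank_now: int) -> float:
    """Percent improvement in sales rank; positive means the product moved up."""
    if rank_before <= 0 or rank_now <= 0:
        raise ValueError("ranks must be positive integers")
    return (rank_before - rank_now) / rank_now * 100


def top_movers(
    before: dict[str, int], now: dict[str, int], limit: int = 10
) -> list[tuple[str, float]]:
    """Rank ASINs present in both snapshots by their gain, biggest first."""
    gains = [
        (asin, rank_gain_pct(before[asin], now[asin]))
        for asin in now
        if asin in before
    ]
    improving = [(asin, gain) for asin, gain in gains if gain > 0]
    return sorted(improving, key=lambda pair: pair[1], reverse=True)[:limit]
```

ASINs that appear in only one snapshot are skipped here; in practice, a product that enters the list for the first time deserves its own alert.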

2. Google Trends and External Demand Signals

Google Trends captures consumer interest at the search intent level, which sometimes precedes Amazon sales by weeks. If a search term is trending steeply upward on Google but hasn’t yet generated significant Amazon search volume or supply-side competition, that’s a genuinely useful early signal. The challenge is that translating Google-side demand into Amazon-side opportunity requires additional validation—not all Google searchers become Amazon buyers, and the category economics on Amazon can look completely different from what external trend data suggests.
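
One way to operationalize that comparison is to measure the growth rate of each series and flag terms where external interest is climbing while Amazon-side volume is still flat. This is a minimal sketch: both inputs are plain lists of periodic values you have collected yourself, and the two thresholds are arbitrary assumptions to tune.

```python
def slope(series: list[float]) -> float:
    """Least-squares slope of a series against its index (units per period)."""
    n = len(series)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(series) / n
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(series))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


def relative_growth(series: list[float]) -> float:
    """Slope expressed as a fraction of the series mean, per period."""
    mean = sum(series) / len(series)
    return 0.0 if mean == 0 else slope(series) / mean


def is_early_signal(
    google_interest: list[float],
    amazon_volume: list[float],
    min_google_growth: float = 0.05,  # assumed threshold
    max_amazon_growth: float = 0.01,  # assumed threshold
) -> bool:
    """True when external interest is rising but Amazon demand has not moved yet."""
    return (
        relative_growth(google_interest) >= min_google_growth
        and relative_growth(amazon_volume) <= max_amazon_growth
    )
```

A positive flag is a prompt for validation, not a verdict: the category economics still have to be checked on the Amazon side.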

3. Social Media and Community Platforms

TikTok, Reddit, Pinterest, and Instagram have become legitimate product discovery channels, with documented cases of products going from obscure to high-velocity within 48-72 hours of viral exposure. These platforms offer the earliest warning available to anyone, but the signal-to-noise ratio is extremely low and the conversion path from social virality to Amazon sales is variable. They work best as supplementary sensors that trigger deeper investigation via more reliable data channels.

4. Subscription-Based Research Tools

Helium 10, Jungle Scout, and their peers have genuine value in organizing large volumes of Amazon data into structured interfaces that lower the cognitive load of research. Annual plans typically run $600-$1,200 for a single user, and the core value proposition is convenience. The structural issue, as described above, is the data lag: these tools optimize for breadth and usability, not for real-time fidelity. For sellers who are primarily doing strategic-level category exploration rather than tactical opportunity sniping, they remain useful. For anyone trying to catch early-stage opportunities before the market processes them, the lag is a fundamental constraint that can’t be engineered away within a subscription model.

5. Third-Party Market Reports and Industry Data

Research firms and industry publications produce periodic Amazon market analysis—category growth reports, seller surveys, advertising spend benchmarks. These are valuable for forming macro-level hypotheses about which categories to focus on, but they’re too coarse-grained to support individual product decisions. Think of them as directional orientation, not navigation.

6. Real-Time API Data Infrastructure

This is where the structural advantage lives for sellers and teams willing to invest in a more sophisticated data workflow. A well-implemented Amazon product research API bypasses the intermediary layer entirely: instead of consuming a tool’s processed cache of Amazon data, you’re querying the source directly and getting results that reflect the current state of the marketplace, not the state it was in when someone last ran a crawl. This is the foundation for a genuinely differentiated ability to find winning products on Amazon via API.

Find Winning Products on Amazon via API: How It Works

The mechanics of API-based product research are more accessible than most non-technical sellers assume. At its core, an Amazon data API accepts a request—for a specific URL, ASIN, keyword, or category node—and returns structured data about that page in real time. No waiting for a nightly batch update. No consuming someone else’s cached snapshot from two days ago.

The data you can access through an Amazon product research API includes bestseller rank by category node with an hourly timestamp; keyword search results pages with full sponsored ad inventory; product detail pages with price history, review counts, and Buy Box status; category browse pages across any marketplace and zip code; and new releases and trending product lists. This breadth means you can construct multi-dimensional analyses that were previously impossible without significant manual effort or expensive enterprise tools.

The practical workflow for a seller using API-based research typically looks like this: define a set of target category nodes and high-intent keywords → set up automated data collection at a frequency that matches the pace of your target market → apply filtering logic to identify products that meet your criteria for market size, competition level, and growth trajectory → route candidates into a deeper analysis layer for review mining and competitive positioning. The entire pipeline can be automated, meaning you’re continuously scanning for opportunities without manual intervention.
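
The workflow above can be sketched as a small function that takes each stage as a pluggable callable. Everything here is illustrative: `run_discovery`, `fake_fetch`, and the field names are hypothetical, and the stand-in collector exists only so the example runs offline.

```python
from typing import Callable, Iterable


def run_discovery(
    targets: Iterable[str],
    fetch: Callable[[str], list[dict]],
    passes_filter: Callable[[dict], bool],
    analyze: Callable[[dict], dict],
) -> list[dict]:
    """Collect, filter, then hand survivors to the deeper analysis stage."""
    shortlisted = []
    for target in targets:
        for product in fetch(target):
            if passes_filter(product):
                shortlisted.append(analyze(product))
    return shortlisted


# Stand-in collector so the sketch runs without network access.
def fake_fetch(target: str) -> list[dict]:
    return [
        {"asin": "EXAMPLE-1", "target": target, "review_count": 120},
        {"asin": "EXAMPLE-2", "target": target, "review_count": 4800},
    ]


results = run_discovery(
    targets=["coffee-makers"],
    fetch=fake_fetch,
    passes_filter=lambda p: p["review_count"] < 500,
    analyze=lambda p: {**p, "needs_review_mining": True},
)
```

Keeping the stages as separate callables means you can swap the collector, tighten the filter, or change the analysis step without touching the rest of the pipeline.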

Cost efficiency is another significant advantage. With a usage-based API, you pay for the data you actually collect, not for a fixed seat license that bundles dozens of features you don’t need. For a seller running a focused product research operation, the economics typically come out meaningfully lower than maintaining multiple SaaS subscriptions, and the data is more current.
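
Whether usage-based pricing actually comes out cheaper depends on your request volume, so it is worth running the numbers for your own case. The sketch below is plain arithmetic; the per-request price is a placeholder you must replace with a real quote, and it is not a published Pangolinfo rate.

```python
def annual_usage_cost(
    requests_per_day: float, price_per_request: float, days: int = 365
) -> float:
    """Yearly spend under usage-based pricing."""
    return requests_per_day * price_per_request * days


def break_even_requests_per_day(
    annual_subscription: float, price_per_request: float, days: int = 365
) -> float:
    """Daily request volume at which usage pricing matches a subscription."""
    if price_per_request <= 0:
        raise ValueError("price_per_request must be positive")
    return annual_subscription / (price_per_request * days)


# Placeholder price of $0.01 per request against a $600/year plan.
daily_limit = break_even_requests_per_day(600, 0.01)
```

Below that daily volume the usage-based option is cheaper; above it, the comparison tips the other way unless the fresher data justifies the difference.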

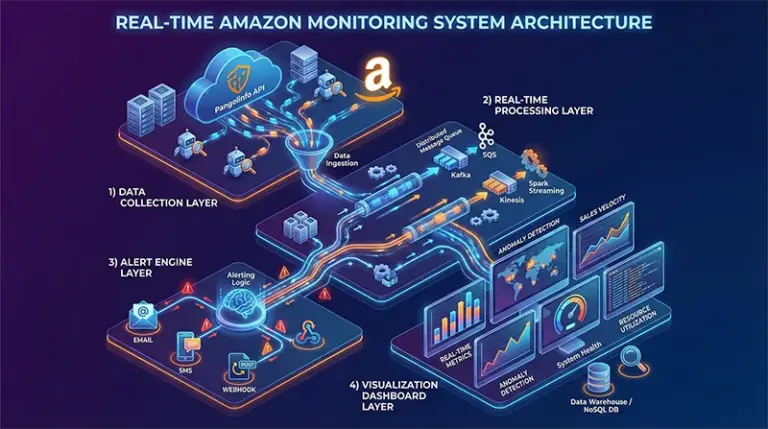

Pangolinfo Amazon Product Research API: Real-Time Data Infrastructure

For teams looking to build a serious, scalable approach to finding winning products on Amazon, Pangolinfo Scrape API provides the foundational data infrastructure. It covers the full range of Amazon data types that product research requires: bestseller and trending lists; keyword search result pages, including all sponsored ad slots, with a documented 98% capture rate for Sponsored Products (SP) ad positions (a figure presented as industry-leading); product detail pages; category nodes; and review data across any Amazon marketplace.

Data latency runs at the minute level rather than the daily or multi-day delays typical of subscription tool databases. This means that when a product begins accelerating in BSR rank or when a new keyword starts attracting significant sponsored competition, you’re seeing that signal within minutes rather than discovering it 48 hours later. For early-stage opportunity identification, that time compression is the difference between being a first mover and being a late entrant.

The API also supports geographic targeting—you can specify delivery zip codes to get localized pricing and availability data, which matters for marketplace-specific research and for understanding regional demand variations. Output formats include raw HTML, clean Markdown, and structured JSON, making it straightforward to plug into whatever analysis stack you’re working with, whether that’s a Python data pipeline, a no-code automation tool, or an AI agent workflow.

For sellers who prefer a visual interface over direct API integration, AMZ Data Tracker wraps the same real-time data infrastructure in a configurable dashboard. You define the categories, ASINs, or keywords you want to monitor; the system handles data collection on your specified schedule and visualizes the results as trend charts and comparative tables. No coding required, and the underlying data pipeline is the same real-time infrastructure as the API—so you’re not trading data quality for convenience.

The review data dimension deserves specific mention for product research purposes. The Reviews Scraper API provides access to Amazon’s full review dataset for any ASIN, including the complete text of negative reviews. For product opportunity analysis, this is invaluable: systematic negative review mining across category leaders reveals the functional gaps that current products fail to address. Those gaps are often the highest-value entry points for a differentiated product—genuine blue ocean opportunities hiding inside the criticism of existing red ocean competitors.

You can explore all available API endpoints and get your API key at the Pangolinfo console, with usage-based pricing that scales with your actual data needs rather than locking you into a fixed annual commitment.

Step-by-Step: Build Your Own Winning Product Discovery Pipeline

Here’s a concrete implementation framework that translates the API-based research approach into an actionable discovery system. It’s designed to be modular—you can implement each stage independently and layer them together as your operation scales.

Stage 1: Define Your Research Scope

Before writing a single line of code or configuring any tool, be specific about your target universe. Vague scopes like “home goods” produce overwhelming data with low signal density. A well-defined scope looks like: “Kitchen appliances, specifically the Coffee Makers subcategory, products ranked BSR 50-500, price range $25-$85, with fewer than 500 reviews.” Tighter boundaries significantly improve the signal-to-noise ratio of everything downstream.
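
Writing the scope down as data keeps it unambiguous and lets every later stage reuse it. Here is a minimal sketch of the coffee maker scope above as a dataclass with a matching filter; the product field names (`bsr_rank`, `price`, `review_count`) are assumptions about how your collected records are shaped.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResearchScope:
    category: str
    bsr_min: int
    bsr_max: int
    price_min: float
    price_max: float
    max_reviews: int  # exclusive upper bound

    def matches(self, product: dict) -> bool:
        """True when a product record falls inside every boundary."""
        bsr = product.get("bsr_rank")
        price = product.get("price")
        reviews = product.get("review_count")
        if bsr is None or price is None or reviews is None:
            return False  # incomplete records never qualify
        return (
            self.bsr_min <= bsr <= self.bsr_max
            and self.price_min <= price <= self.price_max
            and reviews < self.max_reviews
        )


COFFEE_SCOPE = ResearchScope("Coffee Makers", 50, 500, 25.0, 85.0, 500)
print(COFFEE_SCOPE.matches({"bsr_rank": 120, "price": 39.99, "review_count": 210}))  # True
```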

Stage 2: Set Up Real-Time Data Collection

Use an Amazon product research API to establish automated collection across your target scope. The following Python example demonstrates a basic discovery pipeline using Pangolinfo Scrape API; treat the endpoint, payload fields, and response keys as illustrative and check them against the current API documentation:

from datetime import datetime

import requests

API_KEY = "your_pangolinfo_api_key"
BASE_URL = "https://api.pangolinfo.com/v1/scrape"


def fetch_bestsellers(category_path: str, marketplace: str = "US") -> list:
    """
    Fetch real-time bestseller data for a specific Amazon category node.
    Returns structured product list with BSR, price, review count, and ad data.
    """
    payload = {
        "url": f"https://www.amazon.com/best-sellers/{category_path}",
        "parse_type": "bestsellers",
        "marketplace": marketplace,
        "include_sponsored": True,
        "output_format": "json",
    }
    headers = {"Authorization": f"Bearer {API_KEY}"}
    response = requests.post(BASE_URL, json=payload, headers=headers, timeout=30)
    response.raise_for_status()  # surface HTTP errors instead of parsing a bad body
    return response.json().get("products", [])


def fetch_keyword_results(keyword: str, marketplace: str = "US") -> dict:
    """
    Fetch keyword search results page including sponsored ad positions.
    Useful for measuring advertising competition intensity.
    """
    payload = {
        "keyword": keyword,
        "parse_type": "search_results",
        "marketplace": marketplace,
        "include_ads": True,
        "output_format": "json",
    }
    headers = {"Authorization": f"Bearer {API_KEY}"}
    response = requests.post(BASE_URL, json=payload, headers=headers, timeout=30)
    response.raise_for_status()
    return response.json()


def score_opportunity(product: dict, keyword_data: dict) -> float:
    """
    Multi-dimensional opportunity scoring (0-100):
    - Market traction: BSR position (lower = better)
    - Competition headroom: review count (lower = more room to enter)
    - Ad market efficiency: sponsored ad density as proxy for monetization competition
    - Growth signal: BSR improvement trend
    """
    bsr = product.get("bsr_rank", 9999)
    reviews = product.get("review_count", 9999)
    bsr_trend = product.get("bsr_7d_change", 0)  # positive = rank improving
    ad_density = keyword_data.get("sponsored_ratio", 1.0)  # 0-1, lower = less competition

    market_score = max(0, (500 - bsr) / 500) * 40  # 40 points max
    competition_score = max(0, (500 - reviews) / 500) * 30  # 30 points max
    # Clamp to [0, 1] so a worsening rank scores zero rather than going negative.
    trend_score = max(0.0, min(bsr_trend / 100, 1.0)) * 20  # 20 points max
    ad_score = (1 - ad_density) * 10  # 10 points max
    return round(market_score + competition_score + trend_score + ad_score, 2)


# ---- Main discovery run ----
def main() -> None:
    target_categories = ["appliances/coffee-makers", "kitchen/pour-over-coffee"]
    target_keywords = ["pour over coffee maker", "cold brew coffee maker compact"]

    print(f"[{datetime.now()}] Starting product discovery scan...")

    all_products = []
    for cat in target_categories:
        all_products.extend(fetch_bestsellers(cat))

    keyword_metrics = {kw: fetch_keyword_results(kw) for kw in target_keywords}

    # Simplification: every product is scored against the first keyword's ad data.
    kw_data = keyword_metrics.get(target_keywords[0], {})

    # Score and filter candidates
    candidates = []
    for product in all_products:
        score = score_opportunity(product, kw_data)
        if score >= 55:  # Minimum threshold for shortlisting
            candidates.append({**product, "opportunity_score": score})

    # Sort by opportunity score, best first
    candidates.sort(key=lambda x: x["opportunity_score"], reverse=True)

    print(f"Found {len(candidates)} shortlisted candidates:")
    for c in candidates[:10]:
        # .get() avoids a KeyError when a record is missing a field
        print(
            f"  ASIN: {c.get('asin')} | Score: {c['opportunity_score']} | "
            f"BSR: {c.get('bsr_rank')} | Reviews: {c.get('review_count')}"
        )


if __name__ == "__main__":
    main()
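
The scoring function reads a `bsr_7d_change` field, following its convention that a positive value means the rank improved. If your API responses don't include that field, one option is to derive it from your own stored daily snapshots. This is a minimal sketch assuming a per-ASIN history keyed by date; the storage layout is hypothetical.

```python
from datetime import date, timedelta
from typing import Optional


def bsr_change(
    history: dict[date, int], today: date, window_days: int = 7
) -> Optional[int]:
    """Rank positions gained over the window; positive means the rank improved.

    Returns None when either endpoint snapshot is missing, so callers can
    tell "no data" apart from "no change".
    """
    past = history.get(today - timedelta(days=window_days))
    current = history.get(today)
    if past is None or current is None:
        return None
    return past - current


snapshots = {date(2026, 1, 1): 400, date(2026, 1, 8): 250}
print(bsr_change(snapshots, date(2026, 1, 8)))  # 150
```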

Stage 3: Review Mining for Differentiation Intelligence

For your top shortlisted ASINs, pull full review datasets and apply categorical analysis to the negative reviews (3-star and below). Cluster the complaints by functional theme—battery life, size/fit issues, durability, missing features. The cluster with the most complaints and the clearest solution path is your product differentiation brief. This step transforms the research from “find a product” to “find a problem worth solving better.”
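
A simple starting point for the clustering step is keyword-based theme tagging, sketched below. The theme dictionary is a hand-written assumption you would adapt per category, and the review records are assumed to carry `rating` and `text` fields. Substring matching is crude, so treat the counts as a first pass before reading the reviews themselves.

```python
from collections import Counter

# Hypothetical themes and trigger phrases; tune these for your category.
THEMES = {
    "durability": ("broke", "cracked", "stopped working", "leak"),
    "size": ("too big", "too small", "doesn't fit"),
    "missing_features": ("no timer", "wish it had", "lacks"),
}


def tag_themes(text: str) -> set[str]:
    """Return every theme whose trigger phrases appear in the review text."""
    lowered = text.lower()
    return {
        theme
        for theme, phrases in THEMES.items()
        if any(phrase in lowered for phrase in phrases)
    }


def complaint_counts(reviews: list[dict], max_stars: int = 3) -> Counter:
    """Count themes across reviews rated max_stars or lower."""
    counts: Counter = Counter()
    for review in reviews:
        if review.get("rating", 5) <= max_stars:
            counts.update(tag_themes(review.get("text", "")))
    return counts
```

Calling `complaint_counts(reviews).most_common(3)` gives a quick ranking of the dominant complaint themes for one ASIN.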

Stage 4: Decision Matrix and Validation

Before committing to sourcing, run your top two or three candidates through a structured decision matrix that weights: total addressable market (BSR position and category size), competitive barriers to entry (required review velocity to reach page one), margin sustainability (current price distribution and price compression trend), and time-to-market feasibility. This final gate ensures you’re not just chasing an interesting data signal but making a commercially viable decision.
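
The decision matrix can be as simple as a weighted sum. In this sketch each candidate is rated 1 to 5 on the four criteria, where higher is always better (so a low barrier to entry gets a high `entry_ease` rating). The weights are illustrative assumptions, not recommended values.

```python
# Assumed weights; they must sum to 1.0.
WEIGHTS = {
    "market_size": 0.35,
    "entry_ease": 0.25,
    "margin": 0.25,
    "time_to_market": 0.15,
}


def weighted_score(ratings: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of 1-5 ratings across all criteria."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


def rank_candidates(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (candidate, score) pairs sorted best first."""
    scored = [(name, weighted_score(ratings)) for name, ratings in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Rejecting incomplete ratings outright is deliberate: a candidate you cannot rate on margin or time-to-market has not been researched enough to compare.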

The Right Data Infrastructure Changes What’s Possible

The ability to find winning products on Amazon via API isn’t just a technical capability upgrade—it’s a fundamental shift in how competitive intelligence works for e-commerce sellers. When your data is more current, more complete, and more proprietary than what your competitors are working with, every decision in the product development and launch process starts from a stronger position.

Free data sources remain useful for broad market orientation. Subscription tools still have a role for teams that need a low-friction, pre-packaged research interface. But neither is a substitute for real-time, first-hand data access when the goal is to identify opportunities before the market converges on them. That’s the gap that a dedicated Amazon product research API fills—and in 2026’s compressed competitive environment, it’s a gap that matters more than ever.

The entry point has never been more accessible. Usage-based pricing means you can start with a focused, low-volume discovery workflow and scale your data consumption as your operation grows. The pipeline described in this guide is not the exclusive domain of large enterprises or teams with big engineering budgets. It’s a framework that any seller who wants a systematic, data-driven approach to discovering winning Amazon products can implement and iterate on.

Start building your real-time product discovery pipeline with Pangolinfo Scrape API. Free to register, usage-based pricing, no annual lock-in.

Prefer a no-code setup? Configure your product monitoring dashboard directly in the Pangolinfo console. For endpoint details, see the Pangolinfo API Documentation.