A conversation in the Amazon seller community recently caught our attention. The discussion wasn’t about a new algorithm update or a platform policy shift—it was about something harder to solve: the paralysis that comes from having too much data, none of it connected. As a team that builds data infrastructure for Amazon sellers every day, we’ve seen this play out in nearly every client conversation we have. The problem isn’t a lack of information. It’s the opposite.

Amazon seller data overload is a real operational condition. A seller we spoke with recently pulled up his desktop to show us his setup: fourteen browser tabs, all open simultaneously—BSR trackers, ABA keyword downloads, ad performance reports, a Seller Central dashboard, and two third-party browser plugins. Every tab was refreshing. He was cycling between them constantly, cross-referencing figures by eye, building Excel sheets on the side. Then he looked at us and said, “I’ve been staring at data for three hours. Ask me what decision I made today. I genuinely can’t tell you.”

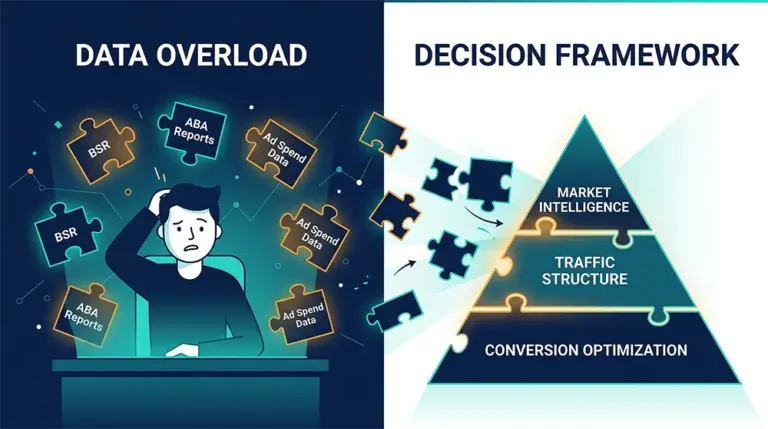

That moment describes the core failure mode of Amazon data-driven decision making when it’s done wrong. The signals are all there. The data is real and mostly accurate. But without a framework to connect them, they remain fragments: individually meaningful, collectively overwhelming. This is what we’d call the data overload trap: you’re drowning not for lack of data, but because no one handed you a map showing which parts of it bear on the decision in front of you.

Why Amazon Data-Driven Decision Making Breaks Down in Practice

From where we sit—providing data scraping and API infrastructure to sellers, tool builders, and brand analytics teams—we see a pattern that repeats itself regardless of seller size. The sellers who struggle most with Amazon seller analytics aren’t the ones with the worst data. They’re often the ones with the most data, accessed through the most tools, producing the most reports. The breakdown happens at the integration layer: the point where raw numbers are supposed to become a coherent picture of what’s happening and what to do about it.

Amazon’s data landscape is organized across three fundamentally disconnected channels. BSR rankings tell you about market-level sales velocity. ABA (Amazon Brand Analytics) keyword data tells you about search behavior and traffic-side demand signals. Advertising reports tell you about conversion-side efficiency and spend allocation. Each data source is accurate within its own domain. But they sit in separate interfaces, update on different schedules, use different metrics, and require different skills to interpret. The causal chain between them—the “why” behind any given outcome—exists nowhere except in the head of whoever is manually trying to piece them together.

Consider a simple scenario. Your BSR drops 25 positions over a 48-hour window. That’s a real signal, but it’s ambiguous on its own. The cause could be a competitor running a Lightning Deal that pulled category traffic. It could be your main sponsored ad placement getting displaced. It could be a natural correction after a Coupon-driven sales spike. It could even be a review score dip that softened your conversion rate. Each of these causes is different. Each demands a different response. Add ad spend blindly and you’ve burned budget without addressing the root issue. Pull spend and you may compound the traffic loss. The data is there, but it isn’t organized in a way that makes the answer legible.
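
The scenario above can be sketched as a small rule table that maps co-occurring signals to candidate causes. This is a minimal illustration, not a diagnostic method we ship: the `Signals` fields and the rating threshold are hypothetical names and values chosen for the example, not fields from any Amazon report.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Signals:
    """Hypothetical signals observed over the same 48-hour window."""
    competitor_deal_active: bool   # a competitor ran a Lightning Deal
    lost_top_sponsored_slot: bool  # main sponsored placement was displaced
    coupon_ended_recently: bool    # our own Coupon promotion just ended
    rating_change: float           # change in average review score


def likely_causes(s: Signals) -> List[str]:
    """Return candidate explanations for a BSR drop, in the order checked."""
    causes = []
    if s.competitor_deal_active:
        causes.append("competitor promotion pulled category traffic")
    if s.lost_top_sponsored_slot:
        causes.append("sponsored placement displaced")
    if s.coupon_ended_recently:
        causes.append("correction after coupon-driven spike")
    if s.rating_change <= -0.1:  # assumed threshold, tune per category
        causes.append("review score dip softened conversion")
    return causes or ["no matching signal; investigate further"]


if __name__ == "__main__":
    print(likely_causes(Signals(False, True, False, -0.2)))
```

More than one cause can fire at once, which is the point: the response (defend the ad slot, wait out a promotion, fix the listing) depends on which combination shows up.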

This is the deeper problem with Amazon seller analytics as most sellers practice it: the tools optimize for data collection, not for answering questions. Every new plugin, every additional download, every extra report adds signal volume without necessarily improving signal clarity. Sellers end up in what we call the “data collector’s trap”—acquiring data as if owning it provides protection, when in reality, uninterpreted data provides no protection at all. It just generates the feeling of being informed.

The practical result of this state is predictable: sellers default to heuristic shortcuts under cognitive overload. They add budget when rankings drop, cut prices when conversion slips, react to competitor moves with symmetric responses, and generally substitute activity for analysis. The moves look like decisions. They often aren’t.

Three Core Business Questions at the Heart of Every Amazon Operation

When we work with clients on structuring their data infrastructure, we use a forcing function that’s proven consistently useful: before we talk about what data to collect, we ask what questions the data needs to answer. Every Amazon operation, regardless of category or scale, is ultimately trying to answer a small number of high-stakes questions. The data strategy should be built backward from those questions, not forward from whatever the tools happen to provide.

Through working with sellers across multiple categories and team structures, we’ve found that the critical questions almost always collapse into three:

First: Is my core business stable right now? This is the diagnostic question—the one that governs daily operational defense. Any listing has a baseline performance state. Understanding whether that baseline is holding, and diagnosing the exact variable responsible if it isn’t, is the foundation of professional Amazon operations. When BSR drops, is it a keyword ranking issue, an ad placement issue, or a conversion rate issue? Answering this precisely requires seeing BSR movement, keyword rank changes, and ad position data in the same temporal view—something that’s nearly impossible when those three data sources live in separate tools on separate refresh cycles.

Second: Where is my competitor vulnerable right now? This is the competitive intelligence question. Competitor analysis is most useful not as a static snapshot but as a directional read: which way is a competitor moving? Where are they pulling back? Where have they left a gap? An improvement in a competitor’s BSR (a lower rank number) might mean they’re running a promotion (temporary, don’t overreact), or it might mean they’ve broken through on a long-tail keyword with strong organic momentum (durable advantage, worth studying). The distinction matters enormously for how you respond, and it requires tracking competitor trajectory, not just current state.
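
One rough way to separate a promotion-driven spike from a durable gain is to compare where the competitor’s BSR started, where it peaked, and where it settled. The sketch below assumes a chronological list of BSR readings at a fixed interval; the function name, the window size, and the 20% threshold are illustrative assumptions.

```python
from statistics import mean
from typing import List


def classify_trajectory(bsr: List[int], window: int = 3, threshold: float = 0.2) -> str:
    """Classify a BSR history (lower rank number = better).

    Compares the average of the first `window` readings (baseline) with the
    average of the last `window` readings and with the best reading seen.
    """
    if len(bsr) < 2 * window:
        return "insufficient data"
    baseline = mean(bsr[:window])
    recent = mean(bsr[-window:])
    best = min(bsr)
    recent_gain = (baseline - recent) / baseline  # improvement that has held
    peak_gain = (baseline - best) / baseline      # best improvement observed
    if recent_gain >= threshold:
        return "sustained improvement"
    if peak_gain >= threshold:
        return "temporary spike"
    return "stable"


if __name__ == "__main__":
    print(classify_trajectory([1000, 1020, 980, 400, 350, 900, 1010, 990]))
    print(classify_trajectory([1000, 1020, 980, 900, 800, 700, 650, 600]))
```

A series that improves sharply and then returns to baseline is labeled a temporary spike; one that improves and stays improved is labeled sustained. Real promotions vary in length, so the window should be chosen to outlast a typical deal.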

Third: How is their growth flywheel actually structured? This is the reverse-engineering question—and it’s the one that most sellers skip because it requires more patience and data depth than the first two. When a competitor is growing consistently, the valuable question isn’t “what are they selling?” but “where is their traffic coming from?” Are they predominantly organic-traffic driven, or ad-dependent? How did they build keyword authority—through review velocity, through structured launch campaigns, through external traffic? Unpacking a competitor’s growth architecture gives you a map for replication or disruption, neither of which is possible if you’re only looking at their current BSR position.
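
A first-pass proxy for “organic or ad-dependent” is how often a competitor’s ASIN shows up in organic results versus sponsored slots across a basket of keywords. The sketch below reuses the `organic_ranks` and `sp_positions` field names from the reference pipeline later in this article; the shape of each entry (a dict with an `"asin"` key) is an assumption made for illustration, and page presence is only a stand-in for actual traffic share.

```python
from typing import Dict, List


def traffic_profile(snapshots: List[dict], asin: str) -> Dict[str, float]:
    """Share of tracked keywords where `asin` appears organically vs. sponsored."""
    organic = sponsored = 0
    for snap in snapshots:
        if any(r["asin"] == asin for r in snap.get("organic_ranks", [])):
            organic += 1
        if any(p["asin"] == asin for p in snap.get("sp_positions", [])):
            sponsored += 1
    total = len(snapshots)
    return {
        "keywords": total,
        "organic_presence": organic / total if total else 0.0,
        "sponsored_presence": sponsored / total if total else 0.0,
    }


if __name__ == "__main__":
    # Hypothetical SERP snapshots for four keywords.
    serps = [
        {"organic_ranks": [{"asin": "B0COMP", "position": 3}], "sp_positions": [{"asin": "B0COMP"}]},
        {"organic_ranks": [{"asin": "B0OTHER", "position": 1}], "sp_positions": [{"asin": "B0COMP"}]},
        {"organic_ranks": [], "sp_positions": [{"asin": "B0COMP"}]},
        {"organic_ranks": [{"asin": "B0OTHER", "position": 2}], "sp_positions": []},
    ]
    print(traffic_profile(serps, "B0COMP"))
```

A competitor present in sponsored slots on most keywords but ranking organically on few is likely buying its visibility; the reverse pattern suggests keyword authority worth studying.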

These three questions don’t change much over time. A seller focused on category defense is always trying to answer Question One. A seller scouting new product opportunities is always trying to answer Question Three. The data requirements that flow from these questions—what you need to collect, how often, at what resolution—are much more specific than “everything,” and much more actionable than “more.”

This reframe, from data-first to question-first, is the structural shift that separates sellers who use Amazon seller analytics effectively from those who drown in it. And it’s the frame we use every time we help a client design their data pipeline.

From Data Chaos to Structured Analytics: Tools That Close the Loop

Having a clear framework for what questions you’re trying to answer is necessary but not sufficient. You also need infrastructure that can deliver the right data, at the right cadence, in a format that connects the dots rather than scattering them further. This is where the technical layer matters.

Two dimensions define whether a data tool actually supports structured Amazon analytics or just adds to the noise: timeliness and structured output. Timeliness is straightforward: Amazon’s competitive landscape moves fast, and BSR rankings, sponsored ad positions, and review counts all change on intraday timescales during active promotional periods. If your data refreshes only weekly, or only once a day in the middle of a promotion, you’re making decisions based on a market that has already moved. Structured output is subtler but equally important. Raw data (HTML pages, unprocessed keyword downloads, manually assembled Excel sheets) isn’t usable for analysis until someone has done the work of parsing it into fields with consistent semantics. The more of that work you have to do manually, the more cognitive load sits between the data and the decision.
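
To make “fields with consistent semantics” concrete, here is a minimal sketch of normalizing a loosely formatted record into a typed one. The `AsinRecord` type and the raw input format (rank and price as display strings) are assumptions for illustration; they do not describe the output of any particular tool.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

_NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


@dataclass(frozen=True)
class AsinRecord:
    asin: str
    bsr: Optional[int]
    price: Optional[float]
    collected_at: datetime


def _first_number(value) -> Optional[float]:
    """Extract the first number from display text such as '#1,234' or '$29.99'."""
    match = _NUMBER.search(str(value))
    return float(match.group().replace(",", "")) if match else None


def normalize(raw: dict) -> AsinRecord:
    """Convert a raw, string-heavy record into a typed AsinRecord."""
    bsr = _first_number(raw.get("bsr"))
    ts = datetime.fromisoformat(raw["collected_at"])
    if ts.tzinfo is None:
        ts = ts.replace(tzinfo=timezone.utc)  # assumption: naive timestamps are UTC
    return AsinRecord(
        asin=str(raw["asin"]).strip().upper(),
        bsr=int(bsr) if bsr is not None else None,
        price=_first_number(raw.get("price")),
        collected_at=ts,
    )


if __name__ == "__main__":
    print(normalize({
        "asin": " b09abc1234 ",
        "bsr": "#1,234 in Patio",
        "price": "$29.99",
        "collected_at": "2024-05-01T12:00:00",
    }))
```

Once every source is reduced to the same typed fields, records from different tools can be compared and joined without a person reinterpreting each one.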

For teams with technical capacity who need custom data pipelines, Pangolinfo’s Scrape API is built around exactly these two requirements. It covers Amazon product detail pages, BSR rankings, keyword SERP pages (including sponsored ad position extraction), review data, and price tracking—outputting clean, structured JSON that can be written directly into a database and queried by any analytics layer you choose to build on top. A few capabilities that come up consistently in seller conversations: the sponsored ad position capture rate is meaningfully higher than most alternatives in the market, which matters for competitive research at scale; zipcode-level targeting for data collection supports region-specific price and ranking analysis; and complete Customer Says extraction enables the kind of sentiment analysis that feeds back into product development and listing optimization decisions.

For teams without a dedicated engineering function, AMZ Data Tracker offers a no-code path to the same structural outcome. The configuration interface lets you define the ASINs, competitors, and keywords you want tracked; the platform handles collection, structuring, and visualization automatically. The design intention is precisely to replace the multi-tab, multi-tool browsing session that characterizes most sellers’ current data practice—giving you a single view where BSR trend, keyword rank movement, and ad position change can be read on the same timeline, without manual assembly.

One case that illustrates the operational impact: a brand-registered seller in the outdoor furniture category came to us with a team of three operators, each managing different ASINs, each using a personal toolkit of plugins and spreadsheets. Weekly team meetings were consumed by reconciling conflicting data before any strategic conversation could happen. After migrating their core ASIN and competitor tracking into AMZ Data Tracker, the data reconciliation phase of their weekly meeting disappeared. Strategy time increased. Decision quality improved not because the data got better in any absolute sense, but because everyone was looking at the same structured view of the same information at the same time.

Building the Data Pipeline: A Technical Reference

For teams that want to build their own structured analytics pipeline—combining BSR, keyword SERP, and ad position data into a unified time-series view—here’s a reference architecture using the Scrape API as the data intake layer.

The core principle is: collect raw data on a schedule, parse it into a consistent schema, store it in a queryable database, and surface it through whichever visualization layer your team is comfortable with (Grafana, Metabase, Redash, or even a well-structured Google Sheet with scheduled data pulls). The value is in the time alignment—having BSR data, keyword rankings, and ad position occupancy all timestamped to the same interval, so causal relationships become visible.
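
The time-alignment step described above can be sketched in a few lines: floor every timestamp to the start of its interval, then merge rows that share an interval. The row shapes (`collected_at`, `bsr`, `organic_rank`) mirror the schema suggested in this article, while the `bucket` and `align` helpers are hypothetical names for this example.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, List


def bucket(ts: str, hours: int = 1) -> str:
    """Floor an ISO-8601 timestamp to the start of its N-hour interval.

    `hours` should divide 24 evenly so buckets do not straddle midnight.
    """
    t = datetime.fromisoformat(ts)
    floored = t.replace(hour=t.hour - t.hour % hours, minute=0, second=0, microsecond=0)
    return floored.isoformat()


def align(bsr_rows: List[dict], rank_rows: List[dict], hours: int = 1) -> List[dict]:
    """Merge BSR and keyword-rank rows that fall in the same interval."""
    merged: Dict[str, dict] = defaultdict(dict)
    for row in bsr_rows:
        merged[bucket(row["collected_at"], hours)]["bsr"] = row["bsr"]
    for row in rank_rows:
        merged[bucket(row["collected_at"], hours)]["organic_rank"] = row["organic_rank"]
    return [{"interval": key, **values} for key, values in sorted(merged.items())]


if __name__ == "__main__":
    bsr_rows = [
        {"collected_at": "2024-05-01T10:05:00", "bsr": 1200},
        {"collected_at": "2024-05-01T11:02:00", "bsr": 1350},
    ]
    rank_rows = [
        {"collected_at": "2024-05-01T10:40:00", "organic_rank": 4},
        {"collected_at": "2024-05-01T11:30:00", "organic_rank": 9},
    ]
    for row in align(bsr_rows, rank_rows):
        print(row)
```

If several readings land in one interval, this sketch keeps the last one written; averaging or keeping the worst reading are equally valid choices depending on what you want the chart to show.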

    import requests
    from datetime import datetime, timezone

    # Pangolinfo Scrape API - Structured Amazon Data Pipeline
    # Purpose: Unified BSR + keyword ranking + ad position tracking

    API_KEY = "your_pangolinfo_api_key"
    BASE_URL = "https://api.pangolinfo.com/v1/amazon"


    def collect_asin_snapshot(asin: str, marketplace: str = "US") -> dict:
        """
        Collect structured product data for a given ASIN.
        Returns BSR by category, review count/rating, price, and metadata.
        Suitable for time-series storage and trend analysis.
        """
        headers = {"Authorization": f"Bearer {API_KEY}"}
        payload = {
            "asin": asin,
            "marketplace": marketplace,
            "fields": ["bsr", "price", "reviews", "ratings", "availability"],
            "output_format": "json",
        }
        response = requests.post(f"{BASE_URL}/product", headers=headers, json=payload, timeout=30)
        response.raise_for_status()  # surface HTTP errors instead of parsing an error body
        result = response.json()
        # Timezone-aware UTC timestamp, so snapshots from different jobs line up
        result["collected_at"] = datetime.now(timezone.utc).isoformat()
        result["asin"] = asin
        return result


    def collect_keyword_serp(keyword: str, marketplace: str = "US") -> dict:
        """
        Collect keyword SERP data including organic rank positions and sponsored placements.
        Key fields: organic_ranks (list of ASIN + position), sp_positions (sponsored slots),
        total_results_count.

        Use case: Track how your ASIN's organic ranking changes relative to SP position
        occupancy on the same keyword. This reveals whether organic and paid traffic are
        reinforcing each other or cannibalizing budget.
        """
        headers = {"Authorization": f"Bearer {API_KEY}"}
        payload = {
            "keyword": keyword,
            "marketplace": marketplace,
            "include_ad_positions": True,
            "output_format": "json",
        }
        response = requests.post(f"{BASE_URL}/keyword-serp", headers=headers, json=payload, timeout=30)
        response.raise_for_status()
        result = response.json()
        result["collected_at"] = datetime.now(timezone.utc).isoformat()
        result["keyword"] = keyword
        return result


    # Example: Daily data collection for a monitored ASIN and its core keywords
    if __name__ == "__main__":
        WATCHED_ASIN = "B09XXXXXXX"
        CORE_KEYWORDS = ["camping chair lightweight", "portable outdoor chair", "folding camp chair"]

        # ASIN snapshot
        asin_data = collect_asin_snapshot(WATCHED_ASIN)
        print(f"[{WATCHED_ASIN}] BSR: {asin_data.get('bsr')} | Reviews: {asin_data.get('reviews')}")

        # Keyword SERP snapshots
        for kw in CORE_KEYWORDS:
            serp_data = collect_keyword_serp(kw)
            sp_count = len(serp_data.get("sp_positions", []))
            organic_position = serp_data.get("your_asin_organic_rank", "not ranked")
            print(f"[{kw}] Organic position: {organic_position} | SP slots on page: {sp_count}")

        # Write to database here. Schema suggestion:
        # TABLE: asin_snapshots (asin, marketplace, bsr, price, reviews, collected_at)
        # TABLE: keyword_snapshots (keyword, marketplace, organic_rank, sp_position_count, collected_at)
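
The schema suggested in the comments above could be realized with SQLite from the Python standard library, as in the following sketch. The column types, the composite primary keys, and the helper names `open_store` and `save_asin_snapshot` are our assumptions for the example; any SQL database would serve equally well.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS asin_snapshots (
    asin TEXT NOT NULL,
    marketplace TEXT NOT NULL,
    bsr INTEGER,
    price REAL,
    reviews INTEGER,
    collected_at TEXT NOT NULL,
    PRIMARY KEY (asin, marketplace, collected_at)
);
CREATE TABLE IF NOT EXISTS keyword_snapshots (
    keyword TEXT NOT NULL,
    marketplace TEXT NOT NULL,
    organic_rank INTEGER,
    sp_position_count INTEGER,
    collected_at TEXT NOT NULL,
    PRIMARY KEY (keyword, marketplace, collected_at)
);
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the snapshot database and ensure both tables exist."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def save_asin_snapshot(conn: sqlite3.Connection, row: dict) -> None:
    """Insert one ASIN snapshot; re-running the same interval overwrites it."""
    conn.execute(
        "INSERT OR REPLACE INTO asin_snapshots VALUES "
        "(:asin, :marketplace, :bsr, :price, :reviews, :collected_at)",
        row,
    )
    conn.commit()


if __name__ == "__main__":
    store = open_store()
    save_asin_snapshot(store, {
        "asin": "B09XXXXXXX", "marketplace": "US", "bsr": 1200,
        "price": 29.99, "reviews": 412, "collected_at": "2024-05-01T10:00:00+00:00",
    })
    print(store.execute("SELECT asin, bsr FROM asin_snapshots").fetchall())
```

Keying each table on the timestamp as well as the ASIN or keyword keeps reruns of a scheduled job idempotent, which matters once collection runs more than once a day.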

Scheduled daily (or more frequently during active campaigns), this pipeline produces a structured log of how BSR and keyword positions move in relation to each other. When you visualize this as parallel time-series lines, relationships that were previously invisible become apparent immediately: a BSR dip coinciding with a sponsored position displacement on a core keyword, for instance. A coincidence in time is a lead to investigate rather than proof of cause, but it tells you where to look first. That’s the practical difference between analyzing data and being drowned by it.

For teams not ready to build this infrastructure, AMZ Data Tracker provides the same time-aligned, multi-metric view in a no-code configuration. API documentation is available at docs.pangolinfo.com.

The Real Advantage Is Knowing What You’re Solving For

Something we’ve noticed consistently over the years: the sellers operating most effectively at scale tend to use fewer tools and track fewer metrics than their less-efficient counterparts. That’s not because they’re incurious or less sophisticated. It’s because they’ve done the work of identifying the small number of questions that actually drive their business outcomes, and they’ve built data systems that answer those questions reliably. Everything else is noise they’ve learned to ignore.

Amazon seller data overload is ultimately a symptom of a framework problem, not a data problem. The solution isn’t better tools in isolation—it’s a clearer definition of what questions those tools exist to answer. When that definition is in place, the right data infrastructure follows naturally. When it isn’t, more tools just create more fragments.

The broader shift we’re watching across the Amazon data ecosystem points in a clear direction: away from tools that maximize data volume, toward tools that maximize decision clarity. That shift drives what we build. Whether you’re designing a custom analytics pipeline with the Scrape API, or configuring a no-code monitoring dashboard with AMZ Data Tracker, the goal is the same—turning fragmented data back into a coherent operational picture you can actually act on.

Ready to move from data overload to structured Amazon analytics? Explore AMZ Data Tracker for a no-code monitoring solution, or access Pangolinfo Scrape API to build your own data pipeline. Free trial credits available. Documentation: docs.pangolinfo.com | Console: tool.pangolinfo.com