Here’s a pattern that keeps showing up with Amazon sellers who fail their first few launches: they didn’t pick the wrong product — they made the right decision with the wrong data.

A seller spends three months sourcing, only to find when the shipment lands at the warehouse that a competitor slashed prices by 30% two months earlier, liquidating inventory and reshaping the BSR landscape. What looked like an open market in the research phase had already begun its concentration cycle. Had they been monitoring real-time competitor pricing and inventory levels throughout the sourcing window, the decision to place that order would have looked very different.

Another common story: a seller sees solid keyword search volume numbers, but those numbers came from a tool report generated six months ago. By the time they’re ready to launch, the subcategory is in a demand trough. They made a present-day decision using past-tense data, and the results showed it.

This guide is about fixing that problem at the root. We’re going to answer, systematically and specifically, the questions that matter: What data do you actually need for Amazon product research? Where does each type of data come from? What are the access methods and their real-world limitations? And what does a modern, scalable data infrastructure look like for serious sellers?

We won’t be vague about it. Every section deals with specific data types, specific sources, and specific tradeoffs — because that’s the only kind of content that actually helps you build something useful.

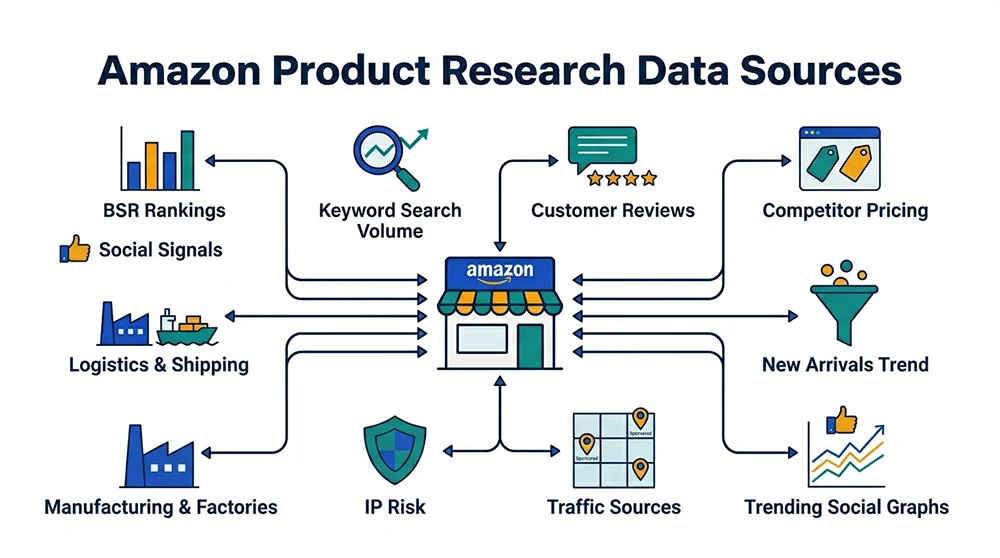

Part 1: The 12 Data Types Amazon Product Research Actually Requires

Most sellers operate with an incomplete data picture. They check sales rank and review count, but miss traffic structure. Or they focus on keywords while ignoring competitor supply chain dynamics. Product launch failures are often traceable to exactly one or two missing data dimensions. Here’s what a comprehensive Amazon product research data source framework looks like.

1. BSR Data (Best Sellers Rank)

BSR is the most important public sales signal Amazon provides. It doesn’t tell you absolute units sold — but through BSR trends and historical curves, you can estimate relative sales velocity and competitive positioning within a category.

The value of BSR data isn’t a single snapshot — it’s movement. A product climbing from rank 5,000 to 500 over six weeks has passed market validation and still has room. A product that drifted from 200 to 2,000 is telling you a very different story: the growth phase is over, or a stronger competitor has entered. Multi-tier BSR matters too. A product with a strong sub-category BSR but weak main category rank suggests the sub-niche is small or undertrafficked — which could mean blue ocean opportunity or a size constraint, depending on how you read it.
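
To make the "movement, not snapshot" idea concrete, here is a minimal sketch that fits a straight line to log10(rank) over time and labels the direction. The function names, the sample readings, and the 0.05 threshold are illustrative assumptions, not values taken from Amazon or any tool.

```python
import math

def bsr_slope(readings: list[tuple[int, int]]) -> float:
    """Least-squares slope of log10(rank) per week; negative means the rank is improving."""
    if len(readings) < 2:
        raise ValueError("need at least two (week, rank) readings")
    weeks = [w for w, _ in readings]
    logs = [math.log10(r) for _, r in readings]
    mean_w = sum(weeks) / len(weeks)
    mean_l = sum(logs) / len(logs)
    var = sum((w - mean_w) ** 2 for w in weeks)
    if var == 0:
        raise ValueError("readings must span more than one point in time")
    cov = sum((w - mean_w) * (l - mean_l) for w, l in zip(weeks, logs))
    return cov / var

def classify_trend(slope: float, threshold: float = 0.05) -> str:
    """Label the trend; the threshold is an arbitrary illustrative cutoff."""
    if slope < -threshold:
        return "climbing"
    if slope > threshold:
        return "declining"
    return "flat"

# Hypothetical (week, rank) readings mirroring the two stories above
climber = [(0, 5000), (2, 2200), (4, 1000), (6, 500)]
fader = [(0, 200), (2, 450), (4, 1000), (6, 2000)]
print(classify_trend(bsr_slope(climber)), classify_trend(bsr_slope(fader)))
```

Working in log space treats a move from 5,000 to 500 the same as a move from 500 to 50, which matches how rank changes are usually read.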

2. Keyword Search Volume and Trend Data

Keywords are the most direct quantification of demand. A keyword’s monthly search volume sets the traffic ceiling for a product category. But raw volume alone is misleading without three additional dimensions:

The first is seasonal patterns. In some categories, peak-to-trough swings reach 10x or more, and annual averages can hide the fact that you’re entering a market six weeks past its seasonal peak. The second is purchase intent, which is embedded in keyword structure: compare “bluetooth speaker” with “bluetooth speaker for shower”, two terms with very different conversion profiles. The third is keyword competition density, which on Amazon can be gauged by how many top-brand ASINs dominate the sponsored ad positions for a term; that directly determines how much a new listing has to pay for visibility.
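
As a rough illustration of the seasonal dimension, the sketch below takes twelve monthly search volumes and reports the peak-to-trough ratio and how far the current month sits past the peak. The function name and the volume series are made up for the example.

```python
def seasonality_profile(monthly_volume: list[int], current_month: int) -> dict:
    """monthly_volume holds 12 values (index 0 = January); current_month is 1-12."""
    if len(monthly_volume) != 12:
        raise ValueError("expected exactly 12 monthly values")
    average = sum(monthly_volume) / 12
    peak, trough = max(monthly_volume), min(monthly_volume)
    peak_month = monthly_volume.index(peak) + 1
    return {
        "annual_average": average,
        "peak_to_trough": peak / trough if trough else float("inf"),
        "peak_month": peak_month,
        "months_past_peak": (current_month - peak_month) % 12,
        "current_vs_average": monthly_volume[current_month - 1] / average,
    }

# Hypothetical summer-seasonal keyword
volumes = [2000, 3000, 8000, 20000, 45000, 60000,
           50000, 25000, 8000, 4000, 3000, 6000]
print(seasonality_profile(volumes, current_month=8))
```

In this made-up series the August volume is still above the annual average even though the peak passed two months earlier, which is exactly the situation an average-only view hides.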

3. Competitor ASIN Data

Serious competitor analysis goes well beyond price and star rating. A complete competitor ASIN data package should tell you: which keywords the title is targeting (revealing the competitor’s traffic strategy); how A+ content frames the product’s value proposition (revealing how they read buyer motivation); which variants sell and which are catalog filler; price history over 12+ months (did they run a clearance campaign? Is the current low price sustainable?); and how fast their review growth slope is (tells you how aggressively they’re driving early sales velocity).

The most critical use of ASIN data in product research is quantifying competitive moats. Review walls — categories where the top 5 ASINs each have 15,000+ reviews — aren’t impossible to breach, but they require a very different resource commitment than a market where the frontrunner has 800. When you quantify the wall before you commit capital, you can plan realistically instead of discovering the problem six months into your launch budget.
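
One simple way to put a number on a review wall is sketched below: take the median review count of the top listings and divide by the review pace you think you can sustain. The function name, the sample counts, and the 150 reviews per month pace are all assumptions for illustration.

```python
from statistics import median

def review_wall(top_review_counts: list[int], monthly_new_reviews: float) -> dict:
    """Median review count of the top listings and months needed to match it."""
    if monthly_new_reviews <= 0:
        raise ValueError("monthly_new_reviews must be positive")
    wall = median(top_review_counts)
    return {"wall": wall, "months_to_match": wall / monthly_new_reviews}

# Hypothetical review counts for the top 5 ASINs in two categories
entrenched = [15200, 18400, 22100, 16800, 31000]
open_niche = [800, 450, 300, 220, 120]

print(review_wall(entrenched, monthly_new_reviews=150))
print(review_wall(open_niche, monthly_new_reviews=150))
```

The output is a planning aid, not a forecast: matching the wall is rarely required to compete, but the ratio between the two categories shows how different the resource commitment is.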

4. Price Distribution and Margin Data

Price band analysis answers two questions: whether you can sell at all, and whether you can survive. A highly concentrated price band — where 80% of products cluster in a tight range — signals strong consumer price anchoring and leaves very little room for premium positioning without exceptional differentiation. A distributed price band indicates that different product quality tiers have found their buyers, meaning the category still rewards differentiation.
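
A minimal sketch of how price-band concentration might be measured: the width of the central 80% of prices relative to the median. The function name, the sample prices, and the 0.5 cutoff are illustrative assumptions.

```python
from statistics import median, quantiles

def price_band_spread(prices: list[float]) -> float:
    """Width of the central 80% of prices (10th to 90th percentile) divided by the median."""
    deciles = quantiles(prices, n=10)  # nine cut points: 10th ... 90th percentile
    return (deciles[-1] - deciles[0]) / median(prices)

# Hypothetical first-page prices for two categories
tight = [19.99, 20.99, 21.99, 21.99, 22.99, 22.99, 23.99, 24.99, 24.99, 25.99]
wide = [9.99, 14.99, 19.99, 24.99, 29.99, 39.99, 49.99, 59.99, 79.99, 99.99]

for name, prices in (("tight", tight), ("wide", wide)):
    spread = price_band_spread(prices)
    label = "concentrated" if spread < 0.5 else "distributed"  # assumed cutoff
    print(f"{name}: spread={spread:.2f} ({label})")
```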

The companion to price data is cost data: FBA fees (which scale sharply with dimensional weight), category referral fees (8%–15% in most categories, with a few outliers on either side), advertising cost of sale (ACOS), and COGS. Running all four against the price ceiling before you source a product isn’t optional; it’s the difference between knowing your margin and guessing it. Plenty of competent sourcing decisions fail because no one ran the numbers until after the container was booked.
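
The four-cost check can be sketched in a few lines. The figures below are placeholders, not real fee quotes, and the function name is hypothetical; substitute the actual FBA fee and referral rate for your size tier and category.

```python
def unit_economics(price: float, cogs: float, fba_fee: float,
                   referral_rate: float, acos: float) -> dict:
    """Per-unit profit after COGS, FBA fee, referral fee, and ad spend."""
    referral_fee = price * referral_rate
    ad_cost = price * acos
    profit = price - cogs - fba_fee - referral_fee - ad_cost
    # ACOS at which profit falls to zero, holding the other costs fixed
    break_even_acos = (price - cogs - fba_fee - referral_fee) / price
    return {
        "profit": round(profit, 2),
        "margin": round(profit / price, 4),
        "break_even_acos": round(break_even_acos, 4),
    }

# Placeholder inputs: $25.00 price, $6.00 COGS, $5.50 FBA fee,
# 15% referral rate, 20% ACOS
print(unit_economics(25.00, 6.00, 5.50, 0.15, 0.20))
```

The break-even ACOS is often the more useful output: it tells you how much advertising pressure the product can absorb before the unit loses money.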

5. Review Count and Quality Data

Review volume is a quantified entry barrier. A category where the top 10 ASINs average 12,000 reviews requires a fundamentally different timeline and capital plan than a category where the frontrunner has 300. That’s not a subjective judgment — it directly determines how long you’ll be subsidizing reviews while competing for keyword relevance.

Review content, on the other hand, is a product differentiation radar. When a competitive set’s 1-star and 2-star reviews keep hitting the same issues — durability problems, confusing assembly, materials that feel cheap — those are your product improvement vectors. Sellers who systematically mine competitor reviews for negative pattern clusters consistently find more defensible differentiation angles than those who brainstorm from scratch.
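
A deliberately simple sketch of negative-pattern mining: count how many low-star reviews touch each theme using keyword lists. The theme dictionary and the sample reviews are invented for illustration; a production version would likely need stemming or a language model rather than substring matching.

```python
from collections import Counter

# Hypothetical theme -> trigger phrases mapping
THEMES = {
    "durability": ("broke", "broken", "cracked", "fell apart"),
    "assembly": ("assemble", "assembly", "instructions"),
    "materials": ("cheap", "flimsy", "thin"),
}

def theme_counts(reviews: list[str]) -> Counter:
    """Number of reviews mentioning each theme (a review can hit several themes)."""
    counts: Counter = Counter()
    for text in reviews:
        lowered = text.lower()
        for theme, phrases in THEMES.items():
            if any(phrase in lowered for phrase in phrases):
                counts[theme] += 1
    return counts

sample = [
    "Handle broke after two weeks.",
    "Instructions were confusing, took an hour to assemble.",
    "Feels cheap and flimsy, and the lid cracked.",
    "Arrived late but works fine.",
]
print(theme_counts(sample).most_common())
```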

Amazon’s newer Customer Says feature (AI-generated review summaries) represents a useful addition: it shows you what the platform itself sees as the dominant buyer satisfaction themes, which functions as a weighted signal of what your target customers care most about.

6. Traffic Structure Data

Where a product’s traffic comes from shapes the entire operational playbook. Organic-search-dominant categories reward keyword-heavy listing optimization and long-term SEO compounding. Ad-dependent categories mean you need a PPC budget from day one — silence in that environment doesn’t produce organic growth, it produces zero visibility. Traffic driven by “Frequently Bought Together” and “Similar Products” associations reveals purchase path adjacency, pointing toward bundle opportunities or logical brand extension directions.

The frustrating reality: Amazon doesn’t publish traffic source breakdowns publicly. You have to infer structure from a combination of third-party tool estimates and manual analysis of search results page ad/organic position ratios across multiple keyword samples.
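
The manual ad/organic inference can be expressed as a small calculation. The sketch assumes you have already recorded, for each sampled keyword, whether each first-page slot was sponsored; the keywords and flags below are invented.

```python
def sponsored_share(serp_samples: dict[str, list[bool]]) -> dict[str, float]:
    """Fraction of first-page slots that are sponsored, per keyword (True = sponsored)."""
    return {kw: sum(flags) / len(flags) for kw, flags in serp_samples.items() if flags}

# Hypothetical first-page observations, one flag per result slot
samples = {
    "bluetooth speaker": [True, True, False, False, True, False, False, False],
    "bluetooth speaker for shower": [True, False, False, False, False, False, False, False],
}
shares = sponsored_share(samples)
for keyword, share in shares.items():
    print(f"{keyword}: {share:.0%} sponsored")
print(f"average across sample: {sum(shares.values()) / len(shares):.0%}")
```

A single keyword is a weak signal; averaging across a spread of head and long-tail terms gives a steadier read on how ad-dependent the category is.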

7. Inventory Depth and Supply Stability Data

Competitor inventory patterns are a real-time signal of their operational health. Rapid inventory draw-down suggests either hot sales or a deliberate BSR push. Stagnant inventory over weeks flags a conversion problem or a pricing miscalculation. Intermittent stockouts reveal upstream supply chain fragility.

Stockout windows are the most tactically useful signal: a competing ASIN going out of stock for 5–15 days is one of the most reliable organic traffic redistribution events in the Amazon ecosystem. Sellers who are monitoring these patterns in near-real-time and have pre-positioned inventory can significantly outpace those who only notice a competitor’s stockout when they happen to check manually.
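
Given a series of dated availability checks, stockout windows can be extracted as follows. This is a sketch under the assumption that observations are sorted by date and taken once per day; the function name and sample dates are illustrative.

```python
from datetime import date

def stockout_windows(observations: list[tuple[date, bool]]) -> list[tuple[date, date, int]]:
    """Return (first_day_out, last_day_out, days_out) for each out-of-stock run.

    observations: (day, in_stock) pairs sorted by day.
    """
    windows = []
    start = None
    previous_day = None
    for day, in_stock in observations:
        if not in_stock and start is None:
            start = day  # first out-of-stock observation of a new window
        elif in_stock and start is not None:
            windows.append((start, previous_day, (previous_day - start).days + 1))
            start = None
        previous_day = day
    if start is not None:  # still out of stock at the last observation
        windows.append((start, previous_day, (previous_day - start).days + 1))
    return windows

# Hypothetical daily checks: out of stock from March 4 through March 8
checks = [(date(2025, 3, d), d not in range(4, 9)) for d in range(1, 11)]
print(stockout_windows(checks))
```

With hourly rather than daily checks the same logic applies; only the unit of the duration changes.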

8. Ad Placement Data (SP/SB/SD)

The density and brand distribution of Sponsored Products ads on a category’s first-page results reflect competitive monetization pressure. A page where most ad slots are owned by one or two brands signals category consolidation and indicates that a new entrant won’t gain keyword relevance during early launch without outbidding the incumbent. A page with diverse, fragmented ad placements suggests the category is still in a competitive scramble that can be disrupted.
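
Ad-slot concentration can be summarized as the share of sponsored positions held by the top one or two brands. The sketch below uses placeholder brand names and assumes you have a list with one brand entry per observed ad slot.

```python
from collections import Counter

def top_brand_share(ad_slot_brands: list[str], top_n: int = 2) -> float:
    """Share of sponsored slots held by the top_n most frequent brands."""
    if not ad_slot_brands:
        raise ValueError("no ad slots observed")
    counts = Counter(ad_slot_brands)
    held = sum(count for _, count in counts.most_common(top_n))
    return held / len(ad_slot_brands)

# Placeholder brand names, one entry per sponsored slot on page one
consolidated = ["BrandA"] * 5 + ["BrandB"] * 3 + ["BrandC", "BrandD"]
fragmented = [f"Brand{i}" for i in range(10)]

print(top_brand_share(consolidated))
print(top_brand_share(fragmented))
```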

CPC benchmarks embedded in ad data measure the cost of acquiring keyword traffic. Counterintuitively, the best early traffic opportunities for new products often lie in medium-volume keywords with low CPCs and decent conversion rates — not the high-volume head terms where incumbents are happy to burn budget to keep new entrants off the first page.

9. New Product Launch Trend Data

Tracking how recently launched ASINs accelerate through the BSR curve gives you a clear read on how “open” a market is to new players. If multiple new ASINs are breaking into the top 100 within 60 days of launch, the category is distributing traffic and giving new products a genuine shot. If new ASINs consistently plateau at ranks worse than 1,000 for months regardless of review count, either market traffic is heavily consolidated around legacy brands or the ranking algorithm is heavily biased toward review history.
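
A rough openness measure, assuming you have the listing age for each ASIN currently in a top-ranked set: the fraction launched within the last 60 days. The ASIN labels and ages below are invented, and a ten-item list stands in for a full top 100.

```python
def new_entrant_share(ranked_listings: list[tuple[str, int]], max_age_days: int = 60) -> float:
    """Fraction of ranked listings launched within max_age_days.

    ranked_listings: (asin, days_since_launch) pairs for the ranked set.
    """
    if not ranked_listings:
        raise ValueError("no listings provided")
    fresh = [asin for asin, age in ranked_listings if age <= max_age_days]
    return len(fresh) / len(ranked_listings)

# Hypothetical ages (in days) for ten top-ranked listings
sample = [("ASIN01", 12), ("ASIN02", 45), ("ASIN03", 400), ("ASIN04", 900),
          ("ASIN05", 30), ("ASIN06", 1500), ("ASIN07", 700), ("ASIN08", 58),
          ("ASIN09", 365), ("ASIN10", 2000)]
print(f"{new_entrant_share(sample):.0%} of the ranked set launched in the last 60 days")
```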

New product trend data is also useful for identifying category windows — sub-niches where a new product form factor is appearing but saturation hasn’t set in yet. These are among the highest-value signals for blue ocean positioning.

10. Intellectual Property and Compliance Data

IP risk assessment belongs in the product research phase, not in your inbox six months after launch. What you need to know before you source: trademark registrations (USPTO, EUIPO, CNIPA for China), design patents, utility patents, and whether any active Brand Registry holders are running enforcement campaigns in the relevant category.

These data types require querying dedicated IP databases — WIPO Global Brand Database, Google Patents, the USPTO patent search portal. The research overhead is real, but so is the downside cost of launching into a product space with active IP enforcement activity. One ASIN suspension after a $50,000 inventory commitment is significantly worse than the six hours spent on due diligence upfront.

11. Supply Chain and Sourcing Data

Product research doesn’t end at the Amazon front end. Supply-side viability is equally important. A product with validated demand but a specialized manufacturing process, low yield rates, or prohibitive minimum order quantities is only a good opportunity for sellers with the right factory relationships.

B2B platforms (1688, Alibaba, Made-in-China) provide a quick read on supplier concentration: a handful of suppliers with wide quoted price ranges signals supply chain risk, while many suppliers with standardized products signal cost negotiability and backup sourcing options. For a deeper look, US Customs import records, accessible through services like ImportGenius or Panjiva, show you who is supplying your competitors’ inventory, at what volume, and on what shipping cadence. This is one of the most underutilized competitive intelligence data sources available.

12. Social Media and Off-Amazon Demand Signals

Amazon search data reflects current demand. Social platform signals can capture demand before it reaches Amazon — sometimes 6–18 months earlier. TikTok view counts and comment sentiment on product-adjacent content, Google Trends search curves (which are public and free), Reddit community discussion velocity, Pinterest save rates on product category boards — these signals are scattered, hard to aggregate, but valuable when you develop a systematic way to monitor them.

Specialized trend intelligence platforms (Exploding Topics, Trend Hunter) aggregate some of these signals into more actionable formats. For sellers who want to position ahead of the curve rather than chase it, building a monitoring system for off-platform demand signals produces distinctly better sourcing decisions than relying purely on what’s currently ranking in Amazon search.

Part 2: Where These Data Types Actually Come From

The 12 data types above map onto three distinct acquisition tiers — each with real trade-offs that determine what you can actually do with the data you get.

Tier 1: Amazon’s Official Channels — First-Party but Constrained

Seller Central analytics: For active sellers, Brand Analytics provides Search Frequency Rank, click share data, and conversion funnel metrics for keywords. The data is high-quality because it comes directly from Amazon’s internal systems. The constraints: it is available only to brand-registered sellers, and its detailed query-level reports are scoped to your own brand’s ASINs. It tells you little about categories or competitors you haven’t yet entered.

Amazon’s public front-end data: Every product detail page shows BSR, review count, rating, price, Q&A, listing structure, and variations. This data is real and current on every page load, which means it’s the most accurate view of the market available at any given moment. The problem is scale. Manually checking dozens of ASINs is feasible. Monitoring thousands across multiple categories over time is not a human task — it requires automation.

Movers & Shakers, Best Sellers, New Releases rankings: These three lists are Amazon’s own curated signal sets for market movement. Movers & Shakers in particular — updated hourly, showing the largest BSR gains over the past 24 hours — is an early warning system for demand spikes. The catch is that hourly updates only matter if your monitoring is continuous. A weekly manual check of Movers & Shakers is like reading last week’s stock ticker.

Amazon Selling Partner API (SP-API): For technical teams, the SP-API provides access to reports, orders, and catalog information (advertising data is served by the separate Amazon Ads API). Its coverage is centered on your own seller account: apart from per-ASIN catalog and offer lookups, category-level analytics and market trend information fall outside its scope. It’s a useful integration for operational automation, not for market research.

Tier 2: Third-Party SaaS Tools — Wide Coverage, Structural Limitations

This is where the majority of Amazon sellers currently get their product research data. The main players — Helium 10, Jungle Scout, Viral Launch — offer broad feature sets covering keywords, competitor tracking, margin calculation, and listing optimization.

These tools work well within a specific use case: an individual seller or small team making qualitative product research decisions on a case-by-case basis. The structural problems become visible at scale or when decision velocity matters:

Data freshness is the biggest issue. Most SaaS tools refresh their data on a daily or weekly cycle — not in real time. The underlying acquisition method is the same as a DIY scraper (batch crawling Amazon’s front end), but the refresh cadence is constrained by cost management. When you’re looking at a competitor’s pricing data that’s 72 hours old in a market that moved in the last 48, your analysis starts at a deficit.

Sales volume estimation accuracy is another known weakness. Third-party tools can’t access Amazon’s actual transaction data, so they model sales from BSR movement using historical conversion assumptions. Those assumptions can be off by 30%–60% in categories with atypical BSR dynamics. The tools don’t tell you when their confidence intervals are wide — you get a number and assume it’s reliable.

Query limits and API access restrictions make bulk analysis cumbersome. Most plans cap the number of ASIN lookups per day, making systematic analysis of large competitor sets a multi-day process rather than an automated pipeline. And the data format is designed for human consumption in a dashboard, not for programmatic ingestion into your own data systems.

Tier 3: Custom Data Pipelines — Maximum Control, Maximum Cost

For enterprise-scale operations, SaaS companies building their own tooling, or sophisticated data-driven sellers, building a proprietary Amazon data collection system is the path to complete flexibility over what data you collect, when, and at what frequency.

The technical stack typically involves: a job scheduling system for parallel crawl tasks, a rotating residential IP proxy pool (datacenter IPs alone are insufficient for Amazon’s detection systems), headless browser handling for JavaScript-rendered pages, an HTML parsing and field extraction layer, and a time-series data storage system with historical archiving capability.

The persistent challenge isn’t the initial build — it’s the ongoing arms race with Amazon’s counter-scraping systems. Amazon’s anti-bot stack is sophisticated: it includes behavioral fingerprinting, CAPTCHA challenge sequences, device and browser attribute analysis, and what appears to be machine-learning-based anomaly detection on request patterns. Every time Amazon updates their detection logic, a portion of the existing crawler infrastructure fails silently, writing null values or incomplete records to your database until someone notices the data quality drop. Maintaining a reliable Amazon scraper at scale requires a dedicated engineering allocation that most teams consistently underestimate.
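
Silent failures of this kind are usually caught with a null-rate check on each batch of scraped records. The sketch below is one minimal way to do it; the field names and the 5% tolerance are assumptions for illustration.

```python
# Illustrative field names; use whatever your parser emits
REQUIRED_FIELDS = ("bsr_main", "price_current", "review_count")

def null_rates(records: list[dict]) -> dict[str, float]:
    """Fraction of records where each required field is missing or None."""
    if not records:
        raise ValueError("empty batch")
    return {
        field: sum(1 for r in records if r.get(field) is None) / len(records)
        for field in REQUIRED_FIELDS
    }

def failing_fields(records: list[dict], max_null_rate: float = 0.05) -> list[str]:
    """Fields whose null rate exceeds the tolerated threshold."""
    return [f for f, rate in null_rates(records).items() if rate > max_null_rate]

# Hypothetical batch: price extraction broke for 3 of 10 records
batch = [{"bsr_main": 1200, "price_current": 19.99, "review_count": 340} for _ in range(7)]
batch += [{"bsr_main": 900, "price_current": None, "review_count": 120} for _ in range(3)]
print(failing_fields(batch))
```

Running this after every crawl and alerting on a non-empty result turns a silent parser breakage into a visible one within a single batch.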

During high-demand periods — Prime Day preparation windows, Q4, major category trend events — Amazon’s counter-scraping intensity typically increases significantly, which means the data reliability of DIY scrapers drops at exactly the times when accurate, current data is most valuable.

Part 3: Five Data Access Problems Nobody Talks About Honestly

The gaps in Amazon product research data infrastructure are widely acknowledged in the seller community and rarely fixed. Here’s why each one is harder than it looks.

Problem 1: Data Staleness Corrupts Decision Quality

This is the foundational issue across all Amazon product research data sources that aren’t real-time. Markets on Amazon move in hours, not days. Movers & Shakers updates hourly. BSR can shift dramatically over a single weekend of promotional activity. Competitor pricing adjustments can reshape an entire category’s competitive dynamics within 24 hours during a promotional event.

When your research data is 72 hours old, every decision made from it carries a margin of error that you can’t see or quantify. This is particularly acute during high-velocity market events: Prime Day, pre-holidays, major category launches driven by viral social media content. These are the moments when right-now data has the most value — and when most sellers are working with the most stale information.

Problem 2: Coverage Gaps Force Blind Spots

No single tool covers all 12 data types we outlined above. Helium 10’s inventory tracking accuracy is a consistent complaint in its user community. Jungle Scout’s traffic models use estimation assumptions that can diverge significantly from reality in non-US marketplaces. Keepa’s price history is excellent but doesn’t integrate keyword data. MerchantWords gives you keyword volume but nothing about BSR dynamics.

The practical result is that serious sellers end up subscribing to multiple tools simultaneously — exporting from one into a spreadsheet, manually cross-referencing another, logging a third in a different tab — and doing their data integration by hand. Beyond the obvious time cost, this creates data consistency problems: two tools showing materially different monthly sales estimates for the same ASIN force subjective judgment calls about which number to trust, which undermines the whole point of using data.
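
One way to take the subjectivity out of conflicting estimates is to measure the disagreement explicitly and treat large gaps as "needs validation" rather than picking a side. A minimal sketch, with hypothetical tool labels, numbers, and a 25% cutoff:

```python
def estimate_divergence(estimates: dict[str, float]) -> float:
    """Gap between the highest and lowest estimate, relative to their midpoint."""
    values = list(estimates.values())
    if len(values) < 2:
        raise ValueError("need at least two estimates to compare")
    high, low = max(values), min(values)
    midpoint = (high + low) / 2
    return (high - low) / midpoint if midpoint else 0.0

# Hypothetical monthly unit estimates for one ASIN from two tools
estimates = {"tool_a": 3200, "tool_b": 2100}
divergence = estimate_divergence(estimates)
needs_validation = divergence > 0.25  # assumed cutoff
print(f"divergence={divergence:.0%}, needs validation: {needs_validation}")
```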

Problem 3: Model-Based Estimates Are Optimistically Biased

Third-party tools can’t access Amazon’s actual transaction data. Their sales estimates are models built from BSR movement patterns and historical conversion rate assumptions. Those assumptions were calibrated on average category behavior — they’re structurally less accurate in high-velocity niches, seasonally distorted categories, or markets dominated by a small number of private label sellers whose BSR behavior doesn’t match typical patterns.

Tools don’t communicate their confidence intervals. You get a number (“estimated monthly sales: 3,200 units”) with no indication of whether the underlying model thinks it’s 80% confident or 45% confident in that estimate. For a category the tool encounters frequently with typical behavior, accuracy might be reasonable. For an unusual niche or an atypical competitive structure, the estimate can be systematically skewed. Sellers have no way to distinguish which situation they’re in without additional validation data.

Problem 4: Cross-Source Integration Is Expensive in Time and Accuracy

The workflow for a properly multi-dimensional product research analysis currently looks something like this: export keyword data from Tool A (Excel) → look up price history in Tool B (manual screenshot) → check estimated sales in Tool C (manual note) → scrape reviews from Amazon front end (manual pagination) → query supplier pricing on 1688 (manual inquiry) → consolidate everything in a spreadsheet → run your margin calculation. Per ASIN, this process takes 2–4 hours done thoroughly. Researching 20 competitor ASINs for a single category entry decision is a week of analytical work.

This timeline isn’t just inefficient — it introduces a temporal problem. By the time the analysis is complete, the market data from the beginning of the process may no longer accurately reflect current conditions. You’re always looking at a partially outdated picture, because the data collection process itself takes time and the market doesn’t pause while you work.

Problem 5: Self-Built Systems Have Hidden Ongoing Costs

The total cost of maintaining a reliable, production-grade Amazon scraping infrastructure is consistently underestimated. The initial engineering build is visible and budgeted. The ongoing costs are less obvious: monitoring and alerting when field extraction fails; updating parsing logic after Amazon front-end changes (which happen without notice); managing residential IP rotation costs (high-volume residential IP packages run into thousands of dollars per month at scale); handling CAPTCHA failures and retry logic; debugging silent data quality degradation; and most expensively, dedicating engineering time to counter-measure updates when Amazon tightens its detection systems.

Two or three engineers working on scraping infrastructure maintenance sounds like a lot — and it often is more than the team anticipated when they decided to DIY rather than use a third-party data source. The build vs. buy calculation, when done honestly with the full ongoing maintenance cost included, often favors buying specialized data infrastructure from a provider for whom this is a core competency.

Part 4: Three Approaches Honestly Compared

Given the data needs and the friction points, here’s a clear-eyed comparison of the three main approaches to building Amazon product research data infrastructure.

SaaS Subscription Tools

Best for: individual sellers or small teams in the early stages of building their product research capability. The value proposition is real — you get broad feature coverage with zero technical setup and a low learning curve.

The limitations compound as you scale. Data freshness constraints (daily or weekly refresh cycles) become more consequential as decision velocity increases. Query limits constrain the scope of analysis you can do in a given time window. Data export formats are built for human dashboard consumption, not for pipeline integration. As monthly ASIN analysis requirements grow past a few hundred, the tool cost-to-value ratio deteriorates while the platform’s fundamental limitations remain unchanged.

Custom-Built Scraping Systems

Best for: engineering-heavy organizations for whom data collection is a core competency and competitive moat, and where the full ongoing maintenance cost is understood and budgeted. The flexibility ceiling is higher than any other approach — you control every field, every collection frequency, every data output format.

Realistic total cost: 2–3 engineers’ time at partial allocation for ongoing maintenance; residential proxy costs scaling with request volume; infrastructure hosting; and a tolerance for periodic data quality disruptions during counter-measure updates. For teams that genuinely need this level of control and have the resources to sustain it, this is the right path. For everyone else, reassess carefully.

Managed Data API Services

Best for: data-driven sellers who need programmatic access to real-time Amazon data without the infrastructure burden; SaaS companies building seller tools on top of reliable data feeds; teams deploying AI-driven product selection systems that need a stable, high-quality data layer.

The fundamental difference from SaaS tools: instead of consuming pre-packaged, pre-aggregated, schedule-based data, you request exactly what you need exactly when you need it. Every API call retrieves current data from the live Amazon page, not from a 72-hour-old cached snapshot. The difference from self-built scrapers: the infrastructure complexity (IP management, anti-bot countermeasures, parsing accuracy, uptime) is handled by a provider for whom this is the core technical investment.

Part 5: How Pangolinfo Approaches the Data Infrastructure Problem

Pangolinfo is built around a specific conviction: the right layer to solve the Amazon data access problem is the data infrastructure layer, not the analytics and visualization layer that most SaaS tools address. What that means in practice:

Real-Time Data Retrieval

When you make a request to Pangolinfo’s Scrape API for an Amazon ASIN, what you get back reflects the state of that page at the moment of your request — not a cached version from yesterday’s batch crawl. BSR, current price, availability status, review count, A+ content, variant structure: all live, all timestamped to the actual request moment.

For the use cases where data freshness is most critical — real-time competitor price monitoring, tracking Movers & Shakers velocity, identifying competitor stockout windows — this distinction changes what you can actually do with the information. You’re working from a current picture instead of a historical one.

Review Data at Depth

Pangolinfo’s Reviews Scraper API is purpose-built for the competitor review analysis use case — extracting structured review data with filtering by time range, star rating, keyword presence, and verified purchase status. The Customer Says AI summary field is supported, enabling the kind of bulk, automated review pattern analysis that finds differentiation opportunities in negative review clusters at a scale that human review reading can’t match.

Ad Placement Capture — A Specific Technical Advantage

One concrete technical capability worth highlighting: Pangolinfo achieves a 98% SP ad placement capture rate on Amazon search results pages. For anyone using ad data to reverse-engineer competitor keyword bidding strategies or quantify first-page ad density by competitor brand, this accuracy delta relative to alternatives directly affects the quality of the analysis.

AMZ Data Tracker: Visual Layer for Non-Technical Users

For teams that want the real-time data advantage without the engineering overhead of API integration, AMZ Data Tracker provides a configurable monitoring dashboard where you define the ASIN watchlists, keyword sets, ranking categories, and refresh cadence — and the system handles the continuous data collection and visualization. It’s the middle path between a rigid SaaS tool and a fully custom API integration.

AI Agent Integration

For teams building AI-driven product selection workflows, Pangolinfo offers an Amazon Scraper Skill compatible with the Model Context Protocol (MCP), which lets AI agents invoke Amazon data retrieval directly through natural language instructions without an additional code layer. This makes Pangolinfo’s data infrastructure a native component of fully automated product research pipelines, where the agent can dynamically query market data as part of a broader analytical workflow.

Sample Implementation: Building a Real-Time Competitor Intelligence Pipeline

Here’s a working Python example showing how API-based data retrieval fits into an automated product research workflow:

import requests

import json

from datetime import datetime

import time

# -----------------------------------------------

# Pangolinfo Scrape API - Real-Time Competitor Monitoring

# Documentation: https://docs.pangolinfo.com/

# -----------------------------------------------

API_KEY = "your_api_key_here"

PRODUCT_ENDPOINT = "https://api.pangolinfo.com/v1/amazon/product"

REVIEWS_ENDPOINT = "https://api.pangolinfo.com/v1/amazon/reviews"

# Competitor ASINs to monitor

WATCH_LIST = {

"B08N5KWB9H": "Competitor Alpha",

"B09G3HRMVW": "Competitor Beta",

"B07PFFMP9P": "Competitor Gamma",

}

HEADERS = {

"Authorization": f"Bearer {API_KEY}",

"Content-Type": "application/json",

}

def fetch_product_snapshot(asin: str, marketplace: str = "US") -> dict:

"""

Retrieve a real-time product snapshot from Amazon.

Returns structured JSON with BSR, price, inventory, and review metrics.

"""

payload = {

"asin": asin,

"marketplace": marketplace,

"include_bsr": True,

"include_variants": True,

"include_reviews_summary": True,

}

resp = requests.post(PRODUCT_ENDPOINT, json=payload, headers=HEADERS, timeout=30)

resp.raise_for_status()

data = resp.json()

return {

"asin": asin,

"fetched_at": datetime.utcnow().isoformat() + "Z", # critical for audit trail

"title": data.get("title", ""),

"brand": data.get("brand", ""),

"bsr_main": data.get("bsr", {}).get("main_category_rank"),

"bsr_sub_ranks": data.get("bsr", {}).get("sub_category_ranks", []),

"price_current": data.get("price", {}).get("current"),

"price_original": data.get("price", {}).get("original"),

"in_stock": data.get("availability") == "In Stock",

"review_count": data.get("ratings", {}).get("total_count"),

"rating": data.get("ratings", {}).get("average"),

"customer_says": data.get("customer_says", ""),

"variant_count": len(data.get("variants", [])),

}

def fetch_negative_reviews(asin: str, star_filter: int = 2) -> list[dict]:

"""

Extract low-star reviews for differentiation gap analysis.

Returns structured list of review objects with text, date, and attributes.

"""

payload = {

"asin": asin,

"marketplace": "US",

"star_rating_filter": star_filter,

"sort_by": "recent",

"page": 1,

}

resp = requests.post(REVIEWS_ENDPOINT, json=payload, headers=HEADERS, timeout=30)

resp.raise_for_status()

return resp.json().get("reviews", [])

def run_competitor_analysis(watch_list: dict) -> None:
    """
    Batch analysis run: fetch real-time data for all monitored competitors,
    log alerts, and surface differentiation signals from negative reviews.
    """
    print(f"\n{'='*60}")
    print(f"Competitor Intelligence Run - {datetime.utcnow().strftime('%Y-%m-%d %H:%M UTC')}")
    print(f"{'='*60}\n")

    for asin, label in watch_list.items():
        print(f"[{label}] ASIN: {asin}")
        snapshot = fetch_product_snapshot(asin)

        # Snapshot keys always exist but may hold None, so fall back explicitly
        print(f"  BSR: #{snapshot.get('bsr_main') or 'N/A'} (main category)")
        print(f"  Price: ${snapshot.get('price_current') or 'N/A'}")
        print(f"  Reviews: {snapshot.get('review_count') or 'N/A'} @ {snapshot.get('rating') or 'N/A'}★")
        print(f"  Stock: {'✓ In Stock' if snapshot.get('in_stock') else '✗ OUT OF STOCK ← opportunity window'}")

        # Trigger negative review analysis for insight into product weaknesses.
        # `or 0` guards against a None review count, which would raise TypeError.
        if (snapshot.get("review_count") or 0) > 100:
            bad_reviews = fetch_negative_reviews(asin)
            if bad_reviews:
                print(f"  Top negative review themes ({len(bad_reviews)} fetched):")
                for r in bad_reviews[:2]:
                    snippet = r.get("text", "")[:120].replace("\n", " ")
                    print(f"    ↳ [{r.get('rating', '?')}★] {snippet}...")

        print()
        time.sleep(1)  # polite pacing between requests

if __name__ == "__main__":
    run_competitor_analysis(WATCH_LIST)

This example runs against Pangolinfo’s live API and returns current-state data each time it executes. Wrap it in a cron job for continuous monitoring, or call it on-demand when you need a current competitive snapshot before a sourcing decision. The technical documentation is at docs.pangolinfo.com.
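Scheduled runs only become useful for trend analysis if each snapshot is stored. The following is a minimal sketch of that storage step, assuming snapshots are dictionaries shaped like the return value of `fetch_product_snapshot` and that a local JSON Lines file is acceptable storage. The helper names `append_snapshot` and `load_history` are hypothetical and are not part of Pangolinfo's API.

```python
import json
from pathlib import Path


def append_snapshot(snapshot: dict, path: Path) -> None:
    """Append one snapshot as a single JSON line (hypothetical helper)."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(snapshot, ensure_ascii=False) + "\n")


def load_history(path: Path, asin: str) -> list[dict]:
    """Return all stored snapshots for one ASIN, ordered by fetched_at."""
    if not path.exists():
        return []
    rows = []
    with path.open(encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line:
                continue
            row = json.loads(line)
            if row.get("asin") == asin:
                rows.append(row)
    # Assumes every fetched_at is an ISO-8601 UTC string in the same format,
    # so plain string ordering approximates chronological ordering.
    return sorted(rows, key=lambda r: r["fetched_at"])
```

In a scheduled job, `append_snapshot` would be called once per ASIN after each fetch. A database is the better choice once the watch list grows, but an append-only file is enough to start accumulating history.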

Part 6: Using Amazon Product Research Data Well — Common Failure Modes and Fixes

Having access to good data is a necessary but not sufficient condition for good product decisions. Here are the application-level mistakes that cause well-equipped sellers to still make bad calls.

Confirmation Bias Masquerading as Data Analysis

The most common analytical failure: you’ve already decided you want to launch a product, and you’re using data to confirm the decision rather than interrogate it. You note the keyword volume that supports the thesis, ignore the competitive intensity data that challenges it, and interpret ambiguous BSR trends in the direction of optimism.

The discipline required is true falsifiability: before you start the analysis, define the specific data conditions that would lead you to not launch. Then actually check those conditions with equal rigor. Data is useful precisely because it can tell you you’re wrong — which only works if you’re willing to hear it.
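One way to make that discipline concrete is to write the no-launch conditions down as code before pulling any data. This is a sketch only: the metric names (`avg_top10_reviews`, `top2_ad_share`, `search_volume_yoy`) and every threshold are hypothetical placeholders that you would replace with your own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class KillCriterion:
    name: str
    description: str
    is_triggered: Callable[[dict], bool]


# Hypothetical thresholds, written down BEFORE looking at the data.
KILL_CRITERIA = [
    KillCriterion("review_moat", "Top 10 competitors average more than 5,000 reviews",
                  lambda m: m["avg_top10_reviews"] > 5000),
    KillCriterion("ad_concentration", "Two brands hold more than 80% of first-page ads",
                  lambda m: m["top2_ad_share"] > 0.80),
    KillCriterion("demand_decline", "Search volume down more than 20% year over year",
                  lambda m: m["search_volume_yoy"] < -0.20),
]


def evaluate_launch(metrics: dict, criteria=KILL_CRITERIA) -> tuple[bool, list[str]]:
    """Return (go, triggered criterion names). Any triggered criterion means no-go.

    A missing metric raises KeyError on purpose, so a check that was never
    run cannot silently count as a pass.
    """
    triggered = [c.name for c in criteria if c.is_triggered(metrics)]
    return (not triggered, triggered)
```

The point of the structure is that the criteria list is fixed first and the metrics arrive second, which makes it harder to quietly ignore an inconvenient number.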

Using the Wrong Time Resolution for the Data Type

Different data types have appropriate analytical time horizons, and mixing them up degrades decision quality. BSR trend analysis needs 4–8 weeks of data points to be meaningful (single snapshots are noise). Keyword seasonality assessment requires a full 12-month view. Competitor stockout identification requires near-real-time monitoring — checking inventory status once a week makes the signal nearly useless. Applying a weekly check cadence to a data type that only matters when checked hourly is the data equivalent of checking the weather once a week and wondering why you keep getting caught in the rain.
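The pairing of data type and time resolution can be encoded as a small rule table and checked before any analysis runs. In this sketch the minimum windows follow the figures above, while the `max_age` freshness limits and the data-type keys are illustrative assumptions.

```python
from datetime import datetime, timedelta

# min_window follows the text above; max_age values are illustrative assumptions.
RESOLUTION = {
    "bsr_trend": {"min_window": timedelta(weeks=4), "max_age": timedelta(days=1)},
    "keyword_seasonality": {"min_window": timedelta(days=365), "max_age": timedelta(days=30)},
    "competitor_stock": {"min_window": timedelta(0), "max_age": timedelta(hours=1)},
}


def is_usable(data_type: str, timestamps: list[datetime], now: datetime) -> bool:
    """True if the series is both long enough and fresh enough for its data type."""
    if not timestamps:
        return False
    rule = RESOLUTION[data_type]
    oldest, newest = min(timestamps), max(timestamps)
    long_enough = newest - oldest >= rule["min_window"]
    fresh_enough = now - newest <= rule["max_age"]
    return long_enough and fresh_enough
```

A week-old inventory check fails the `competitor_stock` rule even though it is a valid data point, which is exactly the mismatch described above.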

Multi-Dimensional Validation Is the Actual Standard

Individual data points mislead. Cross-validated multi-dimensional signals are where decision confidence actually comes from. Three illustrative pattern combinations:

Strong keyword volume + multiple recent new entrants in top 100 + low average review count across the competitive set + fragmented ad landscape → Genuinely open market with validated demand and multiple actionable entry vectors. Worth serious pursuit.

Strong keyword volume + dominant brand with 20,000 reviews + first-page ads 90% owned by two brands + new ASINs consistently stalling outside the top 1,000 → Demand is real, but the market is effectively gated. Without a compelling differentiation thesis and a substantial launch budget, this is a trap that looks like an opportunity.

Strong keyword volume + first-page BSR held by new entrants (sub-12 months old) + competitor 1-2 star reviews concentrated on 2-3 specific product attributes → This is the signal set for a product improvement play. Early entrants validated the demand and exposed clear gaps — which you can systematically address.
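The three combinations above can be sketched as a simple rule-based classifier. The field names and every numeric threshold here are hypothetical, chosen only to mirror the examples, and the "new ASINs stalling" signal is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class MarketSignals:
    strong_keyword_volume: bool
    new_entrants_top100: int        # ASINs under 12 months old in the top 100
    avg_review_count: float         # mean across the competitive set
    top_brand_review_count: int     # review count of the dominant listing
    top2_ad_share: float            # share of first-page ads held by the top two brands
    new_entrants_on_page_one: bool
    complaint_attribute_count: int  # distinct attributes dominating 1-2 star reviews


def classify_market(s: MarketSignals) -> str:
    """Map combined signals to one of the three patterns; thresholds are illustrative."""
    if not s.strong_keyword_volume:
        return "no validated demand"
    if s.top2_ad_share >= 0.9 and s.top_brand_review_count >= 20_000:
        return "gated market"
    if s.new_entrants_on_page_one and 1 <= s.complaint_attribute_count <= 3:
        return "product improvement play"
    if s.new_entrants_top100 >= 3 and s.avg_review_count < 500 and s.top2_ad_share < 0.5:
        return "open market"
    return "inconclusive"
```

No single field decides the outcome. Each label requires several signals to agree, and anything that does not match a known pattern is reported as inconclusive rather than forced into a category.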

Monitoring as an Ongoing System, Not a One-Time Task

The gap between sellers who consistently find the right opportunities and those who consistently miss them often isn’t analytical sophistication — it’s whether they have a continuous monitoring system that finds patterns over time, or whether they’re doing fresh research from scratch each time they want to make a decision.

Real competitive intelligence is cumulative. Knowing that a competitor’s BSR has been steadily declining for three weeks is significantly more actionable than knowing their BSR right now. Knowing that their inventory has been trending downward and they’ve been out of stock twice in the last 45 days is far more useful than a single inventory check. That kind of cumulative signal detection requires continuous data collection through an API pipeline — not a manual tool check whenever you remember to do it.
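Both cumulative signals mentioned above can be derived from stored snapshots. This sketch assumes each history entry carries an `in_stock` flag and a `fetched_at` value already parsed into a `datetime`, and that BSR has been reduced to one reading per week. The function names are hypothetical. It treats a rising rank number as a worsening BSR.

```python
from datetime import datetime, timedelta


def count_stockouts(history: list[dict], now: datetime, days: int = 45) -> int:
    """Count in-stock -> out-of-stock transitions within the last `days` days.

    A window that opens with an out-of-stock snapshot counts as one episode.
    """
    cutoff = now - timedelta(days=days)
    recent = sorted((h for h in history if h["fetched_at"] >= cutoff),
                    key=lambda h: h["fetched_at"])
    episodes = 0
    previously_in_stock = True
    for snap in recent:
        if previously_in_stock and not snap["in_stock"]:
            episodes += 1
        previously_in_stock = snap["in_stock"]
    return episodes


def bsr_weeks_worsening(weekly_bsr: list[int]) -> int:
    """Number of consecutive most-recent weeks in which the rank number rose."""
    streak = 0
    # Walk week-over-week pairs from newest to oldest and stop at the first improvement.
    for prev, cur in zip(reversed(weekly_bsr[:-1]), reversed(weekly_bsr[1:])):
        if cur > prev:
            streak += 1
        else:
            break
    return streak
```

Neither function is meaningful on a single snapshot, which is the practical argument for continuous collection: the signal only exists once there is a history to compute it from.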

Part 7: Frequently Asked Questions

Q: Does Amazon provide any free official API for product research data?

Amazon’s Selling Partner API (SP-API) is built around a seller’s own operational data: listings, orders, inventory, and reports. Advertising performance is served by the separate Amazon Ads API. SP-API does expose some catalog and offer-level pricing data for individual ASINs, but competitor monitoring at scale, category trend data, and market-level intelligence are largely outside its scope by design. For product research at any real scale, you will be relying on third-party tools or data collection APIs regardless of which path you choose.

Q: How accurate are the sales estimates from tools like Helium 10 and Jungle Scout?

Accurate enough to be useful in many situations; materially wrong in enough situations to require skepticism. The fundamental limitation is that these estimates are model-derived from BSR movement, not from actual transaction data. In categories with typical behavior where the tool has substantial calibration data, estimates can be reasonably reliable. In unusual niches, high-velocity promotional periods, or categories with atypical BSR dynamics, estimates can be off by 30–50% or more. Use them as directional inputs, not as precise figures — and always cross-reference against at least one independent signal before making capital commitments.

Q: Is scraping Amazon for research data legal?

This is jurisdiction-dependent and fact-dependent. In its 2022 ruling in hiQ Labs v. LinkedIn, the US Ninth Circuit held that scraping publicly accessible data (available without login) likely does not violate the Computer Fraud and Abuse Act. Amazon’s Terms of Service prohibit unauthorized automated access, but a ToS violation is generally a contractual matter, not a criminal one. The practical risk for businesses doing large-scale collection is account suspension if Amazon links your scraping activity to your seller account. Using a compliant third-party data API service shifts much of that operational risk to a provider whose core competency is managing these issues, which makes it the more appropriate approach for business-scale data collection.

Q: What does Pangolinfo’s API pricing look like?

Pangolinfo uses request-based pricing rather than flat subscription tiers. New users can start with a free trial; production usage scales with actual consumption. For teams analyzing tens of thousands of ASINs monthly, the total cost typically runs lower than multiple stacked SaaS tool subscriptions — with meaningfully better data freshness. Current pricing is visible in the Pangolinfo Console.

Q: Can non-technical sellers use API-based data solutions?

Yes, through AMZ Data Tracker, which provides the real-time data collection advantage through a visual configuration interface — no code required. You configure ASIN watchlists, keyword tracking sets, and BSR monitoring parameters through a UI; the platform handles continuous collection and presents the data in dashboards and exportable tables. The API itself targets teams with development resources who want to integrate Amazon data directly into their own systems or analysis workflows.

Q: How is Amazon data collection different across US, European, and Asian marketplaces?

Each Amazon marketplace is operationally independent, with its own BSR ranking system, keyword search behavior, competitive landscape, and consumer psychology. US bestsellers often don’t translate to European markets; European front-runners frequently have strong local brand preferences that don’t apply elsewhere; Japan’s consumer behavior and product standard expectations differ materially from Western markets. Multi-marketplace sellers need independent data collection and analysis for each target market — a US-calibrated mental model applied to DE or JP search data will consistently produce misdirected decisions. Pangolinfo’s API supports major Amazon marketplaces (US, UK, DE, FR, CA, JP, etc.) through a marketplace parameter, enabling consistent data structure across different regional collections.

Closing: The Right Amazon Product Research Data Source Makes the Difference You Think Market Insight Does

The question “where do sellers get their Amazon product research data?” has a surface answer and a deeper answer. The surface answer covers Helium 10, Jungle Scout, Brand Analytics, and a few manual research habits. The deeper answer is about information quality: how current is the data you’re making decisions from, how complete is the picture it gives you, and how systematically are you integrating the signals that actually determine whether a sourcing decision will succeed.

The gap between sellers who build consistent, scalable Amazon businesses and those who burn through capital on failed launches often traces to data infrastructure quality at least as much as it traces to product selection intuition. Better BSR analysis and better competitive intelligence don’t automatically make you right — but they substantially reduce the frequency of being wrong for avoidable reasons.

If your current data setup involves manually checking a few tools and exporting spreadsheets, the first step is an honest audit: which of the 12 data types listed in this article are you systematically monitoring? Which are you missing entirely? For which are you relying on data that’s too stale to be actionable? That audit usually reveals 2–3 data dimensions where closing the gap would meaningfully improve decision quality.

For teams ready to upgrade the data foundation — whether through the API for full programmatic access or through AMZ Data Tracker for a visual monitoring setup — the starting point is a free trial at Pangolinfo Scrape API. Technical documentation is at docs.pangolinfo.com. The difference between yesterday’s data and right-now data is real — and for competitive Amazon product research, it matters more than most sellers currently assume.
