Why Everyone Thinks OpenClaw Has “No Use Case” in E-Commerce

Across Amazon seller communities and cross-border e-commerce forums, the same skepticism keeps resurfacing in slightly different forms: What can OpenClaw actually do? How is it genuinely different from just talking to ChatGPT? Can it handle the operational complexity of a real business? These questions share a common assumption—that AI agent e-commerce automation, despite all the noise, remains fundamentally impractical when a seller tries to deploy it against an actual workflow.

That skepticism is not baseless. Most teams who attempt to deploy OpenClaw or a similar AI agent framework hit an identical wall early on: the system is set up, the workflow is designed, but the AI operates like a brilliant analyst with no access to current information. It can reason, plan, and generate reports with impressive coherence—but it has no idea what is happening in the market right now. Did a key competitor drop their price last night? Which ASIN just cracked the top 100 in a high-competition category? What new entrant appeared on the new releases chart this morning? These are the operational signals that cross-border sellers live and die by, and without them, any AI agent is essentially doing gymnastics in a vacuum.

The root of the problem is conceptual. Most people approach OpenClaw as a smarter conversation interface rather than as an execution framework that requires continuous external data to function. Think of it like a military commander: no matter how sophisticated the battle plan, the commander cannot act effectively without real-time intelligence from scouts in the field. OpenClaw’s power scales directly with the quality and freshness of its data inputs.

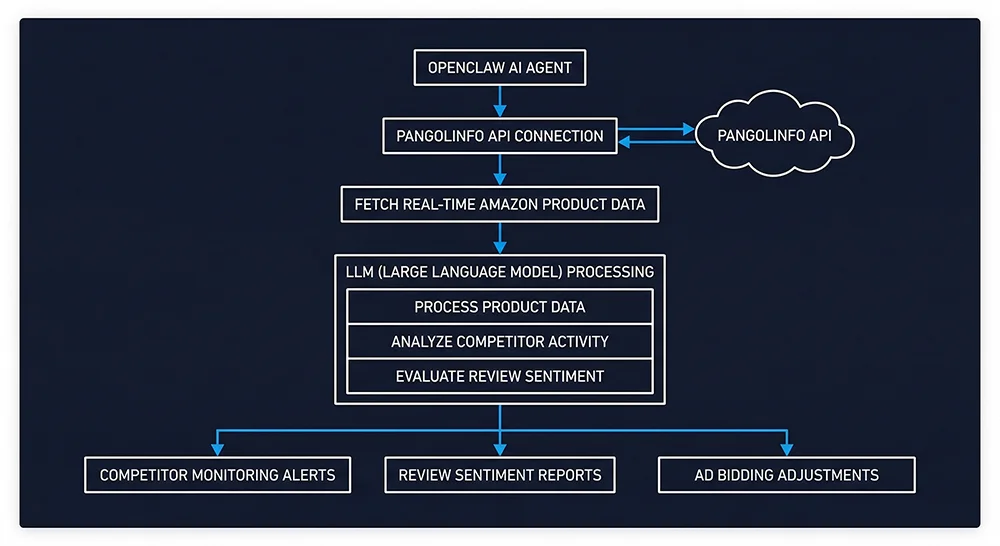

The solution is architectural, not prompt-engineering. You need to wire OpenClaw into a stable, real-time, structured data source built for Amazon’s scale. That is exactly what Pangolinfo Scrape API is designed to deliver.

The Hidden Cost of AI-Written Amazon Scrapers

When the conversation turns to providing live data for an AI agent, many technically inclined sellers reach for the same solution: have the AI write a custom scraper. On the surface, the appeal is obvious: no licensing fees, full customization, and the freedom to modify it whenever you need. But once you run this approach against Amazon at production scale, you find yourself inside a cost trap that compounds over time.

Cost Layer One: The Stability Illusion and Maintenance Abyss

Amazon’s anti-bot infrastructure has evolved into a sophisticated, multi-layered detection system over the past several years. Dynamic rendering, behavioral fingerprinting, IP reputation scoring, rotating CAPTCHA challenges—any static scraping script deployed against this system has an average survival window of under two weeks before it starts failing at scale. That timeline means your engineering team needs to maintain a rotating proxy pool, monitor error logs continuously, and periodically rewrite core extraction logic. What accumulates over time is a fragile, interdependent codebase that consumes engineering resources while generating zero direct business value. The maintenance burden is not a one-time cost; it is an open-ended tax on your team’s productivity.

Cost Layer Two: The Token Explosion That Kills Your AI Economics

Set aside the anti-bot problem for a moment. Assume your scraper somehow succeeds and retrieves a raw Amazon product page. This is where the real disaster begins.

A standard Amazon product detail page carries a raw HTML payload of between 300KB and 600KB. That document is packed with CSS class declarations, JavaScript execution blocks, advertising injection scripts, redundant navigation structures, and dozens of nested elements that carry zero informational value for your AI agent. If you feed this document directly to a large language model and ask it to extract fields like “current BSR ranking,” “Best Seller badge status,” or “review count,” the token consumption is typically ten times or more what a clean JSON response would require. Processing speed collapses. API costs spike. And perhaps most critically for downstream decision quality, the signal-to-noise ratio is so poor that the model’s hallucination rate on field extraction tasks increases significantly.

This is a structural problem in AI-powered Amazon seller tools that rarely gets discussed: dirty data does not just waste money upstream—it corrupts every decision the agent makes downstream. The smarter your model, the more tokens it burns trying to parse chaos.
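To make the token argument concrete, here is a rough sketch that compares an estimated token count for markup-heavy HTML against the same facts expressed as JSON. The four-characters-per-token ratio is a common rule of thumb rather than a real tokenizer, and the sample markup and field names are invented for illustration, so the printed ratio is a property of this toy input, not a measurement of Amazon pages.

```python
import json

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


# Tiny stand-in for a product page: mostly markup, very little data.
raw_html = (
    "<div class='a-section a-spacing-none'><script>var x = {};</script>"
    "<span id='productTitle' class='a-size-large'>Example Widget</span>"
    "<span class='a-price'><span class='a-offscreen'>$19.99</span></span>"
    "<style>.a-size-large{font-size:24px}</style></div>"
) * 50  # repeated to mimic boilerplate-heavy markup

# The same two facts as a structured payload.
structured = json.dumps({"title": "Example Widget", "price": 19.99})

html_tokens = estimate_tokens(raw_html)
json_tokens = estimate_tokens(structured)
print(f"HTML ~{html_tokens} tokens, JSON ~{json_tokens} tokens, "
      f"ratio ~{html_tokens / json_tokens:.0f}x")
```

Running the same estimate over a real saved product page and the corresponding API response would give a more honest picture for your own catalog.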

Cost Layer Three: The Scaling Ceiling You Will Hit Sooner Than You Think

A DIY scraper that handles 10 ASINs adequately will not gracefully scale to 500 ASINs. Moving from single-category monitoring to cross-category competitive intelligence creates concurrency demands that no ad-hoc scraping system is built to sustain. Without enterprise-grade infrastructure underneath, this path hits a hard ceiling—usually at the worst possible moment, when your business most needs reliable data.
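The concurrency problem is easier to see in code. The sketch below fans a list of ASINs out over a bounded thread pool and collects failures separately, so one bad lookup does not abort the batch. `fetch_all` and `fake_fetch` are hypothetical names used only for this illustration, and `fake_fetch` stands in for whatever actually retrieves the data.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, List, Tuple


def fetch_all(
    asins: List[str],
    fetch: Callable[[str], dict],
    max_workers: int = 8,
) -> Tuple[Dict[str, dict], Dict[str, str]]:
    """Fetch many ASINs with a bounded worker pool.

    Returns (results, errors) so a failed ASIN is recorded, not fatal.
    """
    results: Dict[str, dict] = {}
    errors: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fetch, asin): asin for asin in asins}
        for future in as_completed(futures):
            asin = futures[future]
            try:
                results[asin] = future.result()
            except Exception as exc:  # record the failure and keep going
                errors[asin] = str(exc)
    return results, errors


def fake_fetch(asin: str) -> dict:
    """Hypothetical stand-in for a real data lookup."""
    if asin.endswith("BAD"):
        raise ValueError("lookup failed")
    return {"asin": asin, "price": 19.99}


ok, failed = fetch_all(["A1", "A2", "A3BAD"], fake_fetch, max_workers=2)
print(sorted(ok), failed)
```

Even this small pattern says nothing about proxy rotation, rate limits, or blocked requests, which is where a self-built scraper tends to run out of road as the ASIN list grows.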

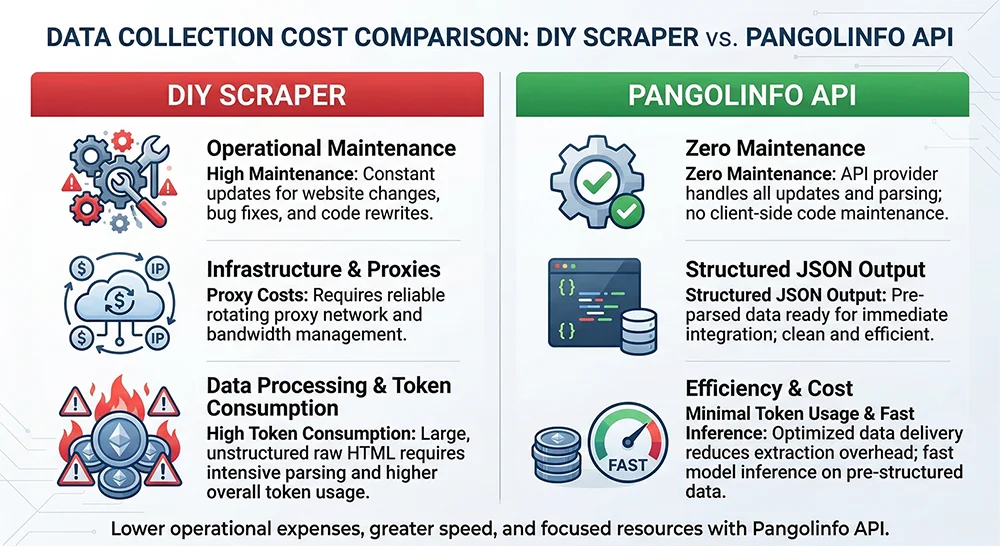

API vs. DIY Scraper: A Five-Dimension Comparison

When both approaches are placed on the same evaluative framework, the gap is significantly larger than intuition suggests. The table below compares them across the five dimensions that matter most for production AI agent deployments:

| Dimension | AI DIY Scraper | Pangolinfo API |
|---|---|---|
| Stability | Fragile and ephemeral; constant anti-bot countermeasures required; short operational lifespan | Enterprise-grade reliability; bot detection handled at the infrastructure level; SLA-backed uptime |
| Scale | Concurrency-limited; scaling requires architectural rebuilds with exponentially rising costs | Native support for tens of millions of pages per day; linear cost scaling with no ceiling |
| Data Freshness | Unpredictable latency; data gaps whenever anti-bot countermeasures trigger | Minute-level refresh cycles; AI agent decisions always based on current market intelligence |
| Token Cost | Raw HTML input consumes 10x+ tokens vs. structured JSON; slow processing; elevated hallucination risk | Clean JSON output; minimal token consumption; fast inference; high extraction accuracy |
| Maintenance Cost | Requires dedicated engineering time; ongoing proxy pool and parser maintenance | Zero maintenance burden; API updates handled server-side; development effort focused on business logic |

This is not a tradeoff analysis. It is a comparison between two paths with fundamentally different endpoints. One path traps your engineering resources in a low-value infrastructure maintenance loop indefinitely. The other frees every member of your technical team to focus entirely on the automation logic that creates actual competitive advantage.

Pangolinfo API: Structured Data That Gives Your AI Agent Eyes

Pangolinfo Scrape API is not a conventional web scraping utility. Its core design principle is that every upstream consumer, whether a large language model, an automation workflow, or a data analytics pipeline, only ever interacts with clean, structured data. All of the complexity (anti-bot evasion, proxy rotation, HTML parsing, field normalization) is encapsulated within the API layer and invisible to the caller.

For teams exploring genuine cross-border e-commerce AI workflow deployments, the practical impact is immediate. There are no DOM structure changes to track. No bot-detection middleware to maintain. No raw HTML being fed to a language model. A single API call returns a precise JSON payload: product title, current price, BSR rank, rating, review count, seller information, promotional status—every field a clean, directly addressable key-value pair.

That is exactly what OpenClaw needs to function as a real operational system. Installing the Pangolinfo skill package into OpenClaw’s skill library is straightforward:

# Install the Pangolinfo OpenClaw skill package
git clone https://github.com/Pangolin-spg/openclaw-skill-pangolinfo.git
# Configure your API key per the README and OpenClaw gains
# full access to real-time Amazon structured data capabilities

Complete installation documentation, multi-scenario workflow configuration files, and annotated code examples are available as open source at GitHub: openclaw-skill-pangolinfo.
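As an illustration of what "directly addressable key-value pairs" buys you downstream, the sketch below loads a payload into a typed record. The flat schema and the `ProductSnapshot` class are assumptions made for this example; the field names are borrowed from the request shown in the integration guide later in this article, and the real response layout should be checked against the official documentation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ProductSnapshot:
    """Hypothetical typed view of one product payload (schema is assumed)."""
    asin: str
    title: str
    price: Optional[float]
    bsr: Optional[int]
    rating: Optional[float]
    review_count: Optional[int]
    best_seller_badge: bool

    @classmethod
    def from_payload(cls, payload: dict) -> "ProductSnapshot":
        """Build a snapshot from a flat dict; missing fields become None/False."""
        return cls(
            asin=payload["asin"],
            title=payload.get("title", ""),
            price=payload.get("price"),
            bsr=payload.get("bsr"),
            rating=payload.get("rating"),
            review_count=payload.get("review_count"),
            best_seller_badge=bool(payload.get("best_seller_badge", False)),
        )


# Invented sample payload; the real response schema may differ.
sample = {"asin": "B000000000", "title": "Example Widget", "price": 19.99,
          "bsr": 1250, "rating": 4.6, "review_count": 812}
snapshot = ProductSnapshot.from_payload(sample)
print(snapshot.title, snapshot.price, snapshot.best_seller_badge)
```

A typed record like this keeps the agent's prompts small, because only the fields a given workflow needs have to be serialized into context.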

Three Automation Workflows You Can Deploy Today

Once OpenClaw has a continuous, structured data feed from Pangolinfo API, AI agent e-commerce automation stops being a pitch deck concept and becomes three concrete systems you can build right now.

Workflow One: 24/7 Competitor Price and Ranking Intelligence

Configure OpenClaw to call Pangolinfo API on a scheduled interval, pulling current price, BSR rank, and Best Seller badge status for a defined list of competitor ASINs. The language model compares each incoming data point against the established baseline and evaluates whether the delta exceeds predefined alert thresholds. When a competitor’s rank jumps by more than a set percentage within 24 hours, or when a significant price reduction is detected, the system automatically pushes a structured alert to your Lark or Slack channel, including an anomaly summary and a recommended response action. From data collection to alert delivery, the entire chain runs without human intervention.
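The threshold check at the heart of this workflow can be sketched in a few lines. The snapshot keys (`price`, `bsr`, `best_seller_badge`), the default thresholds, and the `detect_alerts` function are all assumptions for illustration; delivery to Lark or Slack and the model-generated recommendation are left out.

```python
from typing import List


def detect_alerts(
    baseline: dict,
    current: dict,
    price_drop_pct: float = 10.0,
    rank_gain_pct: float = 30.0,
) -> List[str]:
    """Compare one ASIN's current snapshot to its baseline and list alerts."""
    alerts: List[str] = []

    old_price, new_price = baseline.get("price"), current.get("price")
    if old_price and new_price is not None:
        drop = (old_price - new_price) / old_price * 100
        if drop >= price_drop_pct:
            alerts.append(f"price dropped {drop:.1f}% ({old_price} -> {new_price})")

    old_bsr, new_bsr = baseline.get("bsr"), current.get("bsr")
    if old_bsr and new_bsr is not None:
        # A lower BSR number is a better rank, so improvement is old - new.
        gain = (old_bsr - new_bsr) / old_bsr * 100
        if gain >= rank_gain_pct:
            alerts.append(f"rank improved {gain:.1f}% ({old_bsr} -> {new_bsr})")

    if current.get("best_seller_badge") and not baseline.get("best_seller_badge"):
        alerts.append("gained Best Seller badge")

    return alerts


baseline = {"price": 25.00, "bsr": 3000, "best_seller_badge": False}
current = {"price": 20.00, "bsr": 1800, "best_seller_badge": True}
for line in detect_alerts(baseline, current):
    print(line)
```

Keeping the comparison deterministic and handing only the resulting alerts to the language model means the model is asked to explain and recommend, not to do arithmetic.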

Workflow Two: Automated Voice-of-Customer Review Intelligence

Using the Reviews Scraper API, OpenClaw periodically pulls the latest review data for selected competitor or own-brand ASINs—including rating distribution, review text, Verified Purchase status, and Helpful vote counts. The language model performs semantic clustering and sentiment analysis on this already-structured text, identifying high-frequency negative keywords, recurring product defects, and unresolved pain points that competitors have not yet addressed. A curated product iteration brief is automatically compiled and delivered to the product team on a weekly schedule—dramatically more efficient than any team reading reviews manually.
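The sketch below shows the pre-filtering step that structured review data makes cheap. It deliberately swaps in plain keyword counting for the language model's semantic clustering, purely to show how low-rated, verified reviews can be isolated and summarized before anything reaches the model. The review keys (`rating`, `verified_purchase`, `text`) and the function name are assumptions, not the Reviews Scraper API's actual schema.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

STOPWORDS = {"the", "a", "an", "and", "it", "is", "was", "to", "of", "i",
             "this", "after", "in", "for", "my", "but", "not", "very"}


def top_complaint_terms(
    reviews: Iterable[dict], max_rating: int = 2, top_n: int = 5
) -> List[Tuple[str, int]]:
    """Count the most frequent words in low-rated, verified reviews."""
    counts: Counter = Counter()
    for review in reviews:
        if review.get("rating", 5) > max_rating:
            continue  # skip neutral and positive reviews
        if not review.get("verified_purchase", False):
            continue  # skip unverified reviews
        words = re.findall(r"[a-z']+", review.get("text", "").lower())
        counts.update(w for w in words if w not in STOPWORDS and len(w) > 2)
    return counts.most_common(top_n)


reviews = [
    {"rating": 1, "verified_purchase": True, "text": "The zipper broke after a week"},
    {"rating": 2, "verified_purchase": True, "text": "Zipper is flimsy and the strap broke"},
    {"rating": 5, "verified_purchase": True, "text": "Great bag, love it"},
    {"rating": 1, "verified_purchase": False, "text": "Zipper zipper zipper"},
]
print(top_complaint_terms(reviews, top_n=2))
```

In a real deployment the filtered review texts, rather than the word counts, would be passed to the model for clustering and sentiment analysis.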

Workflow Three: Real-Time Sales Data to Ad Bidding Intelligence

When API data shows a tracked ASIN’s BSR climbing from the 3,000 range into the top 800 over a 48-hour window, it signals that the product has entered a high-traffic tier in its category. An AI agent equipped with this signal can trigger pre-defined advertising strategy adjustments (increasing the daily budget cap for the relevant campaign, activating defensive bidding on competitor keywords) substantially faster and more systematically than manual monitoring allows. This is how connecting OpenClaw to an Amazon product data API translates into a business outcome that compounds over time.
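The trigger described above reduces to a small rule over recent BSR readings. In this sketch the history format, the default thresholds (taken from the 3,000 and 800 figures in the text), and the action labels are assumptions; actually applying the actions would require an advertising integration that is not shown here.

```python
from typing import List, Tuple


def bsr_tier_actions(
    history: List[Tuple[float, int]],
    window_hours: float = 48.0,
    start_above: int = 3000,
    end_below: int = 800,
) -> List[str]:
    """Return ad actions if BSR moved from >= start_above to <= end_below
    within the trailing window. history is [(hours_ago, bsr), ...]."""
    in_window = [(h, bsr) for h, bsr in history if h <= window_hours]
    if len(in_window) < 2:
        return []  # not enough readings to judge a trend
    oldest_bsr = max(in_window, key=lambda p: p[0])[1]  # furthest back in time
    latest_bsr = min(in_window, key=lambda p: p[0])[1]  # most recent reading
    if oldest_bsr >= start_above and latest_bsr <= end_below:
        # Hypothetical action labels for a downstream ad system to interpret.
        return ["raise_daily_budget_cap", "enable_defensive_bidding"]
    return []


readings = [(48, 3100), (24, 1500), (0, 760)]
print(bsr_tier_actions(readings))
```

Returning action labels instead of executing changes directly leaves room for a human approval step or a spending limit before any budget moves.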

Integration Guide: Five Steps to Connect OpenClaw with Pangolinfo

The code below demonstrates a minimal pattern for retrieving structured Amazon product data via Pangolinfo API and passing it to OpenClaw’s reasoning layer:

import requests
import json

# Pangolinfo Scrape API: structured Amazon product data retrieval
BASE_URL = "https://api.pangolinfo.com/v1/amazon/product"
HEADERS = {
    "Authorization": "Bearer YOUR_API_KEY",
    "Content-Type": "application/json"
}

def get_product_data(asin: str, marketplace: str = "US") -> dict:
    """
    Retrieve real-time structured Amazon product data.
    Response is pre-cleaned JSON; zero HTML parsing required.
    Feeds directly into OpenClaw's decision layer with minimal token overhead.
    """
    payload = {
        "asin": asin,
        "marketplace": marketplace,
        "fields": ["price", "bsr", "rating", "review_count",
                   "title", "availability", "best_seller_badge"]
    }
    response = requests.post(BASE_URL, headers=HEADERS, json=payload, timeout=30)
    response.raise_for_status()
    return response.json()

# Batch competitor ASIN monitoring
target_asins = ["B09XXXXX1", "B09XXXXX2", "B09XXXXX3"]
for asin in target_asins:
    data = get_product_data(asin)
    # data is clean structured JSON
    # Pass directly to OpenClaw reasoning layer; no preprocessing needed
    print(json.dumps(data, indent=2))
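For scheduled, unattended runs, a call like the one above is usually wrapped in some form of retry. The following is a minimal sketch of exponential backoff, not part of the Pangolinfo skill package; `with_retries` and `flaky` are hypothetical names, and catching every exception is a simplification (a real wrapper would likely retry only on timeouts and transient HTTP errors).

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(); on exception wait base_delay * 2**n, then retry.

    Re-raises the last exception once attempts are exhausted.
    """
    for attempt in range(attempts):
        try:
            return call()
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * (2 ** attempt))
    raise ValueError("attempts must be at least 1")


calls = {"n": 0}


def flaky() -> dict:
    """Hypothetical call that fails twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return {"ok": True}


# Sleep is stubbed out so the demo finishes instantly.
print(with_retries(flaky, sleep=lambda seconds: None))
```

In the monitoring loop, this would be used as `with_retries(lambda: get_product_data(asin))`, leaving the default `time.sleep` in place.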

The returned JSON payload is compact and precisely structured. Token consumption for a language model processing this response is typically over 90% lower than for the equivalent raw HTML. Using GPT-4o as a benchmark, processing one product’s structured JSON costs approximately 1/12th of what raw HTML extraction would require, inference runs five or more times faster, and extraction accuracy improves substantially because the model no longer has to separate signal from markup noise.

For the complete OpenClaw skill installation guide, multi-workflow configuration templates, and full API field documentation, visit the Pangolinfo Documentation Center or explore the open-source repository at GitHub: openclaw-skill-pangolinfo.

Let the API Handle Data. Let the Agent Handle Decisions. That Is the Architecture That Works.

AI agent e-commerce automation rarely fails in practice because the AI is insufficiently capable. It fails because the AI is operating without reliable access to current market data. OpenClaw and frameworks like it represent the next generation of operational infrastructure for serious cross-border sellers. But that potential is contingent on a data layer that is stable, real-time, and structured enough for a language model to consume without waste.

Pangolinfo API collapses the entire data complexity problem into a single dependency. Anti-bot evasion, proxy management, HTML-to-JSON parsing, field normalization—all of it handled at the infrastructure level, invisible to the developer. Your AI agent consumes clean structured data and directs its full reasoning capacity toward decisions that generate revenue.

Professional problems require professional tools. Let AMZ Data Tracker and Scrape API handle the high-volume, high-reliability data supply. Let OpenClaw orchestrate the business logic. That division of responsibility is what enterprise-grade AI agent e-commerce automation actually looks like in production.

Visit Pangolinfo Scrape API to explore full capabilities, or start your free trial at the Pangolinfo Console and give your OpenClaw agent eyes into the real Amazon marketplace.