Amazon Sales Estimation Method: Core Solution for Product Research Pain Points

The hardest question in Amazon product research is never “does this listing look good?” It is always “how many units does this actually sell per month?” The first question has subjective answers. The second one demands data and a verified Amazon sales estimation method — but Amazon releases none of its sellers’ real sales figures to anyone, leaving only indirect data signals for sellers to analyze and deduce.

Learning how to estimate Amazon sales data is therefore a foundational skill for any seller serious about reducing sourcing risk. This guide covers the three core estimation models (BSR reverse-engineering, review velocity modeling, and keyword-weighted estimation), details the 7 data types needed to build a complete estimation system, and maps free and paid acquisition paths for each. If you are starting from scratch, What Is Amazon Sales Estimate Data explains the conceptual foundation — this article is the hands-on implementation guide.

1. The Three Core Estimation Models

Model 1: BSR Reverse-Engineering — The Foundation, and Its Limits

BSR (Best Seller Rank) is the most widely used signal for Amazon sales estimation. BSR is a frequently updated rank that weights recent sales heavily, which means there is a statistical relationship between rank position and monthly units sold. By building a “BSR range → monthly units range” lookup calibrated to each top-level category, you can approximate monthly sales for any ASIN ranked in that category.

Sampling correctly: You must use the 30-day average BSR, not a point-in-time reading. During promotional events (Prime Day, Black Friday), the instantaneous BSR number can fall to roughly one-tenth of its normal value (a much better rank), causing catastrophic overestimates if used directly. Record daily BSR for 30 days, compute the average, then apply the category mapping table.
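A minimal sketch of the averaging step, assuming the daily readings are already collected in a list ordered oldest to newest. The function name `average_bsr` and the sample readings are illustrative and do not come from any particular tool.

```python
from statistics import mean

def average_bsr(daily_bsr, window=30):
    """Return the mean of the most recent `window` daily BSR readings."""
    if len(daily_bsr) < window:
        raise ValueError(f"need at least {window} daily readings, got {len(daily_bsr)}")
    return mean(daily_bsr[-window:])

# Hypothetical readings: 29 ordinary days at rank 5,000, then one promo day at rank 500.
readings = [5000] * 29 + [500]
print(readings[-1])           # point-in-time reading on the promo day: 500
print(average_bsr(readings))  # 30-day average: 4850
```

Feeding the promo-day reading of 500 into a mapping table would describe a very different product from the one the 30-day average of 4,850 describes.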

Category specificity: The BSR-to-sales relationship follows a power-law curve that differs dramatically across top-level categories. BSR #1,000 in Home & Kitchen may imply 5x the monthly units of the same rank in Books. Never apply a generic cross-category table — build separate calibration models for each category you analyze.
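One way to build such a per-category calibration is to fit a power law on log-log axes. The sketch below assumes you already have a handful of (BSR, known monthly units) pairs for a single category, for example from your own listings. The calibration points shown are hypothetical, and `fit_power_law` and `estimate_units` are illustrative names.

```python
import math

def fit_power_law(points):
    """Fit units = a * rank**(-b) by least squares on log-log axes.

    `points` is a list of (bsr, monthly_units) pairs from ONE category.
    """
    xs = [math.log(rank) for rank, _ in points]
    ys = [math.log(units) for _, units in points]
    n = len(points)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    a = math.exp(mean_y - slope * mean_x)
    return a, -slope  # b is reported as a positive exponent

def estimate_units(rank, a, b):
    """Estimated monthly units at a given (30-day average) rank."""
    return a * rank ** (-b)

# Hypothetical calibration points for one category (not real Amazon data).
calibration = [(100, 3000), (1000, 600), (10000, 120)]
a, b = fit_power_law(calibration)
print(round(estimate_units(2500, a, b)))
```

Each category gets its own `(a, b)` pair; reusing one category's coefficients for another is exactly the cross-category mistake described above.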

Accuracy ceiling: Single-signal BSR estimation carries 30–50% single-ASIN error rates. This is a methodological constraint, not a tool limitation. Any tool claiming sub-15% error on BSR alone is either using additional signals or overstating precision.

Model 2: Review Velocity Modeling — A Smoother Cross-Validation Signal

Amazon’s average review conversion rate (the percentage of buyers who leave a review) is relatively stable within categories, typically ranging from 1% to 3%. This creates a reliable back-calculation path:

Formula: Estimated monthly sales = New reviews in past 30 days ÷ Category average review rate

Example: An ASIN added 25 reviews in the past 30 days; category average review rate is approximately 1.5%. Implied monthly sales ≈ 25 ÷ 0.015 = 1,667 units, with a reasonable range of 1,250–2,500 units (across the 1%–2% review rate band).
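The same arithmetic as a small sketch. The function names are illustrative, and the review rates are the assumed category figures from the example, not measured values.

```python
def estimate_sales_from_reviews(new_reviews_30d, review_rate):
    """Implied monthly units: new reviews divided by the review rate (a fraction)."""
    if not 0 < review_rate <= 1:
        raise ValueError("review_rate must be a fraction in (0, 1]")
    return new_reviews_30d / review_rate

def sales_range(new_reviews_30d, low_rate, high_rate):
    """(low, high) monthly units implied by a band of review rates."""
    # A higher review rate means fewer sales per review, so it gives the low end.
    return (
        estimate_sales_from_reviews(new_reviews_30d, high_rate),
        estimate_sales_from_reviews(new_reviews_30d, low_rate),
    )

point = estimate_sales_from_reviews(25, 0.015)
low, high = sales_range(25, 0.01, 0.02)
print(round(point), round(low), round(high))  # 1667 1250 2500
```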

Review velocity reflects actual cumulative purchase behavior, insulated from promotional noise that distorts BSR. Its limitations: systematic variation in review rates across price tiers (higher-priced products attract proportionally more reviews), early-stage review incentive effects that inflate conversion rates for new products, and Amazon’s increasingly aggressive fake-review filtering introducing some data noise in certain categories.

Best practice: Use review velocity as a cross-validation signal for BSR estimates. Convergence within ±30% increases confidence materially. Divergence above 50% signals an anomaly — promotion, manipulation, or stockout — requiring investigation before finalizing the estimate.

Model 3: Keyword Search Volume Weighting — Validating Against Demand Ceilings

The first two models work from the supply side (existing ranked ASINs). The keyword-weighted approach works from the demand side, providing an external ceiling against which supply-side estimates can be validated.

Logic: Total category monthly demand ≈ Core keyword monthly search volume × Purchase conversion funnel (click-through rate by rank position × average conversion rate). Distribute this total across ASINs proportionally to their traffic share (organic rank + ad placement frequency) to derive each ASIN’s implied sales volume.

Reference click-through rates: organic position #1 ≈ 30–40%; positions #2–3 ≈ 10–15%; positions #4–10 ≈ 2–8%; below position #10 drops below 1%. Category average purchase conversion rates typically run 2–10%, depending on price tier and competition intensity.

The primary diagnostic value: if BSR-derived TOP 10 ASIN sales estimates sum to more than keyword search volume can plausibly support, the BSR estimates are likely inflated — prompting a review of input data quality before making sourcing decisions.
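A rough sketch of this ceiling check, assuming a single core keyword and click-through and conversion values picked from inside the reference ranges above. All inputs are hypothetical, and a real category usually draws traffic from many keywords, so treat the result as an order-of-magnitude check rather than a hard limit.

```python
def keyword_demand_ceiling(monthly_searches, ctr_by_position, conversion_rate):
    """Monthly units one keyword can plausibly support across the listed positions."""
    total_ctr = sum(ctr_by_position)
    return monthly_searches * total_ctr * conversion_rate

def bsr_estimates_look_inflated(bsr_unit_estimates, ceiling):
    """True when the summed BSR-derived estimates exceed the demand ceiling."""
    return sum(bsr_unit_estimates) > ceiling

# Hypothetical click-through rates for positions #1, #2-3, and #4-10.
ctr = [0.35] + [0.12] * 2 + [0.04] * 7
ceiling = keyword_demand_ceiling(100_000, ctr, 0.05)

# Hypothetical BSR-derived monthly unit estimates for the TOP 10 ASINs.
top10_bsr_estimates = [1500, 900, 700, 500, 400, 300, 300, 200, 200, 150]
print(round(ceiling), bsr_estimates_look_inflated(top10_bsr_estimates, ceiling))  # 4350 True
```

Here the TOP 10 estimates sum to 5,150 units against a ceiling of about 4,350, which is the signal to recheck the BSR inputs.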

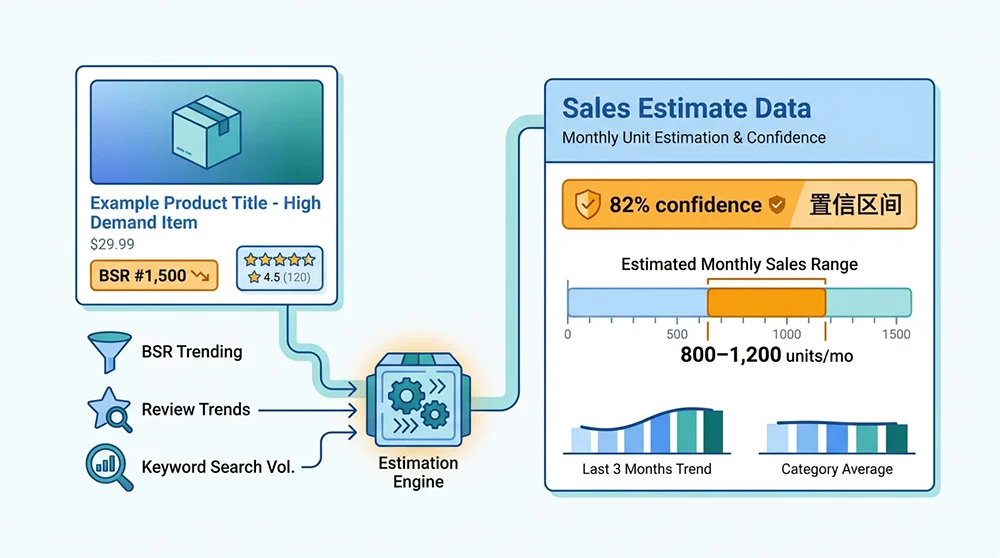

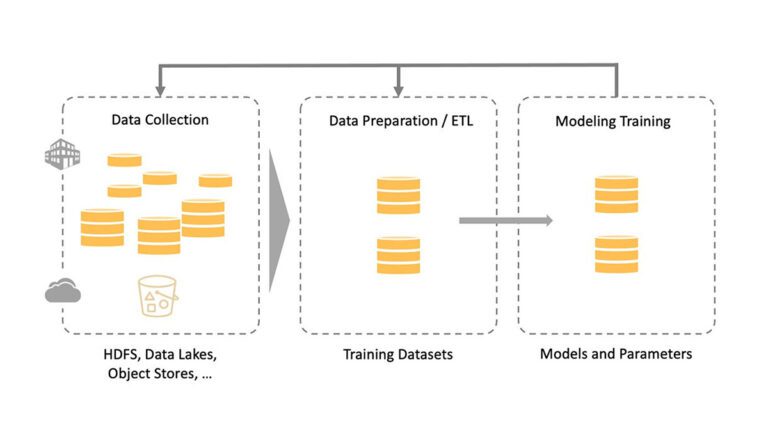

Multi-Signal Fusion: The 2026 Industry Standard

Current high-precision estimation systems combine all three methods as feature inputs to category-specific machine learning models, supplemented by price history, FBA inventory signals, and ad placement frequency. AMZ Data Tracker implements this multi-signal architecture with minute-level BSR sampling and category-calibrated models trained on millions of ASINs, achieving 15–25% single-ASIN error in mainstream categories — roughly half the error rate of daily-sampling BSR-only approaches.

2. The 7 Data Types Required for Accurate Estimation

Data Type 1: BSR Time-Series Data

A single BSR reading is nearly meaningless. What matters is the BSR trajectory over time. Ideally, collect 90 days of daily average data, which is enough to distinguish structural growth trends from promotional spikes and to extract seasonality baselines. Thirty days of data is sufficient for current-state assessment but insufficient for trend analysis.

Data Type 2: Review Count and Velocity

Total review count reflects historical cumulative sales — useful for gauging ASIN maturity, not current velocity. The actionable metric is review addition rate: how many new reviews per week or month. An ASIN with 5,000 total reviews but zero added in the past 90 days is in a different sales state than one with 200 reviews gaining 10 per week.
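A small sketch of the review addition rate, assuming you record the total review count on known dates. The snapshot dates and counts are made up to mirror the two ASINs described above, and `weekly_review_velocity` is an illustrative name.

```python
from datetime import date

def weekly_review_velocity(snapshots):
    """Average new reviews per week between the first and last snapshot.

    `snapshots` is a list of (date, total_review_count), oldest first.
    """
    (start_day, start_count), (end_day, end_count) = snapshots[0], snapshots[-1]
    days = (end_day - start_day).days
    if days <= 0:
        raise ValueError("snapshots must span at least one day")
    return (end_count - start_count) * 7 / days

# Hypothetical snapshots: a mature ASIN that has stalled, and a small ASIN that is growing.
mature = [(date(2025, 1, 1), 5000), (date(2025, 4, 1), 5000)]
growing = [(date(2025, 3, 4), 160), (date(2025, 4, 1), 200)]
print(weekly_review_velocity(mature))   # 0.0
print(weekly_review_velocity(growing))  # 10.0
```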

Data Type 3: Listing Age (ASIN First Available Date)

ASIN age is a required correction factor for review velocity estimation. Review conversion rates are systematically higher in a product’s first three months (early adopters are more inclined to review new products) than in maturity. Ignoring this skews monthly sales estimates for new products upward, because dividing new reviews by a category-average rate that is too low inflates the implied unit count. The correction: apply a higher review rate assumption for ASINs under 6 months old.
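The age correction can be sketched as follows. The 6-month threshold comes from the text; the 1.5x uplift is a placeholder assumption that you would calibrate per category, and both function names are illustrative.

```python
from datetime import date

def listing_age_months(first_available, today):
    """Whole months elapsed since the ASIN's first-available date."""
    months = (today.year - first_available.year) * 12 + (today.month - first_available.month)
    if today.day < first_available.day:
        months -= 1  # the current month is not yet complete
    return months

def adjusted_review_rate(base_rate, age_months, new_listing_uplift=1.5, threshold_months=6):
    """Use a higher review rate for young listings; the uplift factor is an assumption."""
    if age_months < threshold_months:
        return base_rate * new_listing_uplift
    return base_rate

age = listing_age_months(date(2025, 1, 15), date(2025, 4, 14))
rate = adjusted_review_rate(0.015, age)
print(age, rate, round(25 / rate))
```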

Data Type 4: Price History and Volatility

Price is the most important explanatory variable for BSR movement. A sudden BSR improvement could mean organic sales acceleration or aggressive discounting, two situations that call for fundamentally different strategic responses. Overlaying price history on BSR curves disambiguates these scenarios. Periods where price deviates more than 15% from the 90-day average should be flagged and either weighted down or excluded from estimation inputs.
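A minimal sketch of the flag-and-exclude rule, assuming aligned lists of daily prices and daily BSR readings covering the same 90-day window. The sample data is hypothetical, and this version excludes flagged days outright rather than weighting them down.

```python
from statistics import mean

def flag_price_deviations(daily_prices, threshold=0.15):
    """Flag days whose price deviates from the window average by more than `threshold`."""
    baseline = mean(daily_prices)
    return [abs(price - baseline) / baseline > threshold for price in daily_prices]

def average_bsr_excluding_flagged(daily_bsr, daily_prices, threshold=0.15):
    """Average BSR over the days that were not flagged for price deviation."""
    flags = flag_price_deviations(daily_prices, threshold)
    kept = [bsr for bsr, flagged in zip(daily_bsr, flags) if not flagged]
    if not kept:
        raise ValueError("every day was flagged; widen the threshold or the window")
    return mean(kept)

# Hypothetical 90-day window: 85 days at $20, then a 5-day discount to $12.
prices = [20.0] * 85 + [12.0] * 5
bsr = [5000] * 85 + [800] * 5
print(sum(flag_price_deviations(prices)))          # 5 flagged days
print(average_bsr_excluding_flagged(bsr, prices))  # 5000
```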

Data Type 5: Competitor Count and Market Concentration

The number of competing ASINs in a search result, and the share of total category sales held by the top 3 ASINs, directly determines category entry viability. Concentration above 60% signals strong incumbent barriers; below 40% indicates a fragmented market with room for new entrants. This metric cannot be computed without multi-ASIN sales estimates across the full category.
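The concentration metric can be sketched as below, assuming you already hold an estimated monthly unit figure for every ASIN in the category. The 60% and 40% thresholds come from the text; the label "moderate" for the band in between is an added assumption.

```python
def top_n_share(monthly_units, n=3):
    """Share of total estimated units held by the n best-selling ASINs."""
    ranked = sorted(monthly_units, reverse=True)
    total = sum(ranked)
    if total <= 0:
        raise ValueError("total estimated units must be positive")
    return sum(ranked[:n]) / total

def classify_concentration(share):
    """Map a top-3 share to the bands described above."""
    if share > 0.60:
        return "concentrated"
    if share < 0.40:
        return "fragmented"
    return "moderate"  # the text does not name this middle band

# Hypothetical category estimates.
estimates = [400, 300, 200, 50, 50]
share = top_n_share(estimates)
print(share, classify_concentration(share))  # 0.9 concentrated
```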

Data Type 6: Core Keyword Monthly Search Volume

The demand-side foundation for the keyword-weighted model and the cross-check ceiling for supply-side estimates. Critical caveat: different tools have different measurement bases (global vs. US-only, exact-match vs. broad-match). Ensure consistent methodology when comparing tools or combining data sources.

Data Type 7: Sponsored Ad Placement Frequency

The proportion of first-page search result positions occupied by Sponsored Products ads signals category advertising cost intensity. When 70%+ of the first page is sponsored, organic traffic share for non-advertising ASINs is structurally limited — organic sales velocity estimates should reflect this constraint. This signal is also an input into total category demand distribution modeling.

3. Acquisition Paths for All 7 Data Types

BSR History

Free: Keepa’s free tier provides 90 days of BSR history charts per ASIN. Amazon product detail pages show only current BSR — no history.

Paid: Keepa subscription (≈€19/month) enables bulk historical BSR download. Pangolinfo Scrape API supports minute-level BSR sampling for building proprietary time-series databases — the only path to the sub-20% error rates that minute-level data enables.

Review Data

Free: Manual recording from product pages; browser extensions (Keepa) for basic tracking.

Paid: Helium 10 Cerebro, Jungle Scout for review tracking dashboards; Reviews Scraper API for bulk historical review extraction with timestamp filtering, enabling automated monitoring pipelines.

Listing Age

Free: Keepa historical data can infer first-availability date; Wayback Machine for archived snapshots.

Paid: Pangolinfo Scrape API real-time extraction of the “Date First Available” field from product detail pages.

Price History

Free: Keepa price history charts (90-day free); CamelCamelCamel (free, comprehensive coverage).

Paid: Keepa API for enterprise-scale bulk price history; custom collection pipelines for continuous monitoring.

Competitor Count and Concentration

Free: Manual TOP 100 BSR compilation with estimated sales per ASIN.

Paid: AMZ Data Tracker category-wide analysis — bulk estimated sales for all category ASINs with automatic concentration metric calculation.

Keyword Search Volume

Free: Amazon Brand Analytics search term report (brand-registered sellers only, 2+ week delay); Google Trends as supplementary reference.

Paid: Helium 10 Magnet, SellerSprite, Jungle Scout Keyword Scout for US-market Amazon search volume estimates.

Ad Placement Frequency

Free: Manual count on search result pages (extremely low throughput, not scalable).

Paid: Pangolinfo Scrape API’s dedicated ad placement collection — distinguishes Sponsored Products, Sponsored Brands, and organic positions with 98% SP placement capture rate (industry-leading), enabling large-scale category ad landscape analysis.

4. Four-Step Implementation Workflow

Step 1: Define the Target Category and Extract TOP 100 ASINs

Start with a specific second- or third-level subcategory. Extract the top 100 BSR-ranked ASINs; this is your market baseline sample. Manual extraction takes 30–45 minutes (roughly 5–7 result pages at 16–24 ASINs per page). Pangolinfo Scrape API’s category ranking endpoint delivers the same structured JSON data in under 2 minutes, with scheduled automation available for continuous monitoring.

Step 2: Build the Multi-Dimensional Data Collection Matrix

For each ASIN in your set, collect in parallel: current BSR (main + subcategory), 30-day daily BSR average, total review count and 30-day new review count, current price and promotional status, listing first-available date, and FBA availability status. Target collection cadence: BSR daily minimum (hourly ideal), reviews weekly, price daily. After 30 days of collection, you have enough time-series data for a current-state estimate; trend and seasonality analysis needs closer to 90 days of history.
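One possible shape for a row of this matrix, sketched as a Python dataclass. The field names, the placeholder ASIN, and the `CADENCE_HOURS` table are illustrative assumptions; they do not correspond to the response format of any specific API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class AsinSnapshot:
    """One collection record for one ASIN on one day."""
    asin: str
    captured_on: date
    main_category_bsr: int
    subcategory_bsr: Optional[int]
    avg_bsr_30d: Optional[float]      # None until 30 days of readings exist
    total_reviews: int
    new_reviews_30d: Optional[int]    # None until 30 days of readings exist
    price: float
    on_promotion: bool
    first_available: Optional[date]
    fba_available: bool

# Minimum collection cadence in hours (hourly BSR is the ideal; daily is the floor).
CADENCE_HOURS = {"bsr": 24, "reviews": 24 * 7, "price": 24}

# Placeholder values for illustration only.
row = AsinSnapshot("B000000000", date(2025, 4, 1), 4850, 120, None,
                   200, None, 19.99, False, date(2025, 1, 15), True)
print(row.asin, row.main_category_bsr, CADENCE_HOURS["reviews"])
```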

Step 3: Apply Cross-Method Estimation, Output Confidence Intervals

Base estimate via BSR: apply the 30-day average BSR to your category-specific mapping table for a unit range. Cross-check via review velocity: 30-day new reviews ÷ category average review rate (1.5%–2.5%) = second sales estimate. If the two estimates diverge by less than 40%, take their average as the point estimate with ±30% as the confidence interval. If divergence exceeds 40%, investigate for promotional events, manipulation, or stockout periods before finalizing.
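The decision rule in this step can be sketched as follows. The text does not define how divergence is measured, so this version assumes the absolute difference divided by the average of the two estimates, and it treats a divergence of exactly 40% as needing investigation.

```python
def combine_estimates(bsr_estimate, review_estimate, max_divergence=0.40, interval=0.30):
    """Average two estimates when they agree; otherwise flag for investigation."""
    point = (bsr_estimate + review_estimate) / 2
    if point <= 0:
        raise ValueError("estimates must be positive")
    # Assumed definition: relative gap measured against the average of the two.
    divergence = abs(bsr_estimate - review_estimate) / point
    if divergence >= max_divergence:
        return {"status": "investigate", "divergence": divergence}
    return {
        "status": "ok",
        "divergence": divergence,
        "point": point,
        "low": point * (1 - interval),
        "high": point * (1 + interval),
    }

print(combine_estimates(1000, 1200))  # agrees: point estimate of 1100 with a ±30% band
print(combine_estimates(1000, 2000))  # diverges: check for promotions, manipulation, stockouts
```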

Step 4: Aggregate Category Data, Build the Market Map

Sum the estimated monthly sales across all TOP 100 ASINs to approximate total category monthly demand. Then calculate four metrics. Market concentration: TOP 10 ASIN sales ÷ total category sales (a broader view than the top-3 share described under Data Type 5). Category entry viability baseline: monthly sales of the median ASIN (rank #50). Category lifecycle signal: the direction of the 3-month sales trend. Estimate reliability: the summed estimate compared against the keyword-derived demand ceiling.
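A compact sketch of the aggregation, assuming `sales_by_rank` holds the estimated monthly units for the TOP 100 ASINs in BSR order and `monthly_totals` holds the category total for each of the last three months. All names and sample numbers are illustrative.

```python
def market_map(sales_by_rank, keyword_ceiling, monthly_totals):
    """Summarize a category from per-ASIN monthly unit estimates (ordered by BSR)."""
    if len(sales_by_rank) < 50:
        raise ValueError("need at least 50 ASINs to read the rank #50 baseline")
    total = sum(sales_by_rank)
    first, last = monthly_totals[0], monthly_totals[-1]
    trend = "growing" if last > first else "declining" if last < first else "flat"
    return {
        "total_monthly_units": total,
        "top10_share": sum(sales_by_rank[:10]) / total,
        "rank50_units": sales_by_rank[49],          # rank #50 is index 49
        "trend": trend,
        "within_keyword_ceiling": total <= keyword_ceiling,
    }

# Hypothetical category: ten strong ASINs and a long tail of ninety small ones.
estimates = [1000] * 10 + [100] * 90
summary = market_map(estimates, keyword_ceiling=20_000, monthly_totals=[17_000, 18_000, 19_000])
print(summary["total_monthly_units"], round(summary["top10_share"], 2), summary["trend"])
```

A total that exceeds the keyword ceiling does not say which ASIN is overestimated; it only tells you to recheck the inputs before acting on the map.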

Conclusion

Accurately estimating Amazon sales data requires combining multiple imperfect signals into a coherent confidence framework — not finding a single magic formula. BSR reverse-engineering provides the structural backbone, review velocity modeling adds a less volatile cross-check, and keyword-weighted analysis validates the demand ceiling. The 7 data types detailed here are the non-negotiable inputs for any estimate worth acting on.

For sellers building this capability, start with Keepa’s free BSR charts paired with manual review tracking — imperfect but sufficient to develop data-informed instincts. As scale demands grow, AMZ Data Tracker provides automated multi-ASIN monitoring without engineering overhead; for teams building proprietary estimation infrastructure, Pangolinfo Scrape API provides the minute-level BSR sampling and full-category collection throughput that precision estimation requires.

For the complete implementation framework — including 2026 AI-enhanced estimation advances, the six most common estimation errors, and a step-by-step system build guide — see the Amazon Sales Estimate Data: Complete 2026 Playbook.

Start tracking competitor sales velocity with AMZ Data Tracker, or build minute-level BSR collection with Pangolinfo Scrape API.