The Real Cost of Skipping Amazon Category Competition Analysis Before Entry

Most sellers who enter a new Amazon category based on intuition or secondhand reports share a common experience: the category looked viable at a 30,000-foot view, but the moment they were actually in it — running PPC, waiting for reviews, watching BSR — the picture looked nothing like the original assessment. The category wasn’t uncompetitive. It just required resources, timelines, and cost structures that were never visible in the surface-level research.

Amazon category competition analysis done properly isn’t about answering “is this category profitable?” It’s about answering a more precise question: “Is this category enterable for me, at this point in time, with this product position and this resource level?” The gap between those two questions is where sourcing capital gets destroyed.

The more specific failure mode is qualitative assessment masquerading as analysis. Sellers see a subcategory with “only 200 reviews on the top products” and call it a blue ocean, without checking whether those 200 reviews represent 18 months of natural accumulation or 3 months of aggressive launch campaigns that incumbents will repeat against any new entrant. They see strong monthly revenue estimates and assume there’s room for another player, without pricing in a PPC cost structure that may already be consuming 40% of those revenues for everyone in the category. The data was there. It just wasn’t asked the right questions.

What follows is a structured framework for Amazon niche entry difficulty assessment — four quantifiable dimensions that, combined, produce a decision-grade picture of any category you’re considering.

The Four-Dimension Amazon Market Competitiveness Evaluation Framework

Each dimension measures a distinct axis of competitive difficulty. None of them alone is sufficient; their interaction is what shapes the full landscape.

Dimension 1: BSR Concentration. Within the BSR Top 20, what share of estimated sales volume do the top 3 products capture? When the answer exceeds 60%, the category exhibits strong winner-take-most dynamics: advertising auctions typically clear at ceiling prices, organic ranking is structurally biased toward established listings, and new products entering on merit alone face long timelines to visibility. When concentration is below 40%, the traffic distribution is flatter, which usually indicates more accessible paths to ranking for new entrants; 40–60% is the middle case. This metric requires live BSR data, because a week-old snapshot can misrepresent a category going through rapid reshuffling.
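As a rough illustration of the concentration calculation, the sketch below computes the top-3 share from a list of per-listing sales estimates and maps it to the bands described above. The function names and the idea that you already hold monthly unit-sales estimates for each Top 20 listing are assumptions made for this example; Amazon does not publish sales figures, so the inputs would come from whatever estimation method you trust.

```python
def top_n_share(unit_sales: list[float], top_n: int = 3, window: int = 20) -> float:
    """Share of the window's estimated sales captured by its top_n sellers."""
    # Sort defensively so the result does not depend on input order.
    ranked = sorted(unit_sales, reverse=True)[:window]
    total = sum(ranked)
    if total <= 0:
        return 0.0
    return sum(ranked[:top_n]) / total


def concentration_band(share: float) -> str:
    """Map a share to the bands used in this article: <40%, 40-60%, >60%."""
    if share < 0.40:
        return "low"
    if share <= 0.60:
        return "medium"
    return "high"


# Hypothetical estimates: three strong leaders and a long flat tail.
estimates = [5000, 3000, 2000] + [500] * 17
share = top_n_share(estimates)
band = concentration_band(share)
```

With these made-up numbers the leaders hold 10,000 of 18,500 estimated units, which lands in the medium band.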

Dimension 2: Review Barrier. What is the median review count across BSR Top 20 listings? And critically, what fraction of those reviews were added in the most recent 90 days (review velocity)? A median of 2,000+ reviews doesn’t just mean your product needs to catch up. It means you should expect roughly 12 to 18 months of consistent sales and review solicitation before you approach parity on social proof, and during that period your conversion rate will lag competitors meaningfully. Review velocity is even more telling: a category where the top products are still adding 100+ reviews per month is one where the competitive gap is actively widening, not static.
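Review velocity can be sketched in a few lines once you have the posting dates of a listing's reviews. The helper names below are hypothetical, and the example assumes review dates have already been collected and parsed into `datetime.date` objects.

```python
from datetime import date, timedelta


def recent_review_count(review_dates: list[date], today: date, window_days: int = 90) -> int:
    """Number of reviews posted within the last window_days."""
    cutoff = today - timedelta(days=window_days)
    return sum(1 for d in review_dates if d >= cutoff)


def review_velocity(review_dates: list[date], today: date, window_days: int = 90) -> float:
    """Fraction of all reviews that were posted within the last window_days."""
    if not review_dates:
        return 0.0
    return recent_review_count(review_dates, today, window_days) / len(review_dates)


def reviews_per_month(review_dates: list[date], today: date, window_days: int = 90) -> float:
    """Average monthly review additions over the window (30-day months)."""
    return recent_review_count(review_dates, today, window_days) / (window_days / 30)
```

Tracking both numbers is a deliberate choice: the fraction shows how young a listing's review base is, while the monthly rate is what you compare against the 100+ reviews per month threshold mentioned above.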

Dimension 3: Price Band Density. Map the price distribution of BSR Top 50 products. Which $10 increment contains the most listings? What is the margin structure within that dominant price band after factoring in FBA fees, PPC spend, and COGS? Categories where a single price band contains 60%+ of the top 50 listings, and where that band is driven by large established sellers with cost advantages, are structurally hostile to new entrant margins. Conversely, a multi-modal price distribution with clear gaps between established tiers often signals accessible entry points that aren’t immediately obvious from category-level revenue estimates.
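The band mapping and gap check can be sketched as follows. This is an illustrative example rather than a prescribed method: the function names are invented here, and `unit_margin` deliberately includes only the three cost items named above (FBA fees, PPC spend, COGS), so any other fees you pay would need to be added.

```python
from collections import Counter


def price_bands(prices: list[float], width: int = 10) -> Counter:
    """Count listings per price band, keyed by each band's lower bound."""
    return Counter(int(p // width) * width for p in prices if p > 0)


def empty_bands(bands: Counter, width: int = 10) -> list[int]:
    """Lower bounds of unoccupied bands between the cheapest and priciest occupied band."""
    if not bands:
        return []
    lowest, highest = min(bands), max(bands)
    # Counter returns 0 for missing keys without inserting them.
    return [b for b in range(lowest, highest + width, width) if bands[b] == 0]


def unit_margin(price: float, fba_fee: float, ppc_per_unit: float, cogs: float) -> float:
    """Per-unit margin as a fraction of price, after the three listed costs."""
    return (price - fba_fee - ppc_per_unit - cogs) / price


# Hypothetical Top 50 excerpt: a crowded $10-20 band and a premium $40-50 tier.
bands = price_bands([12.99, 14.50, 19.99, 18.00, 45.00, 49.99])
gaps = empty_bands(bands)
```

In this made-up excerpt the $20–30 and $30–40 bands are empty, which is the kind of gap between established tiers worth checking against the margin a product priced there could sustain.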

Dimension 4: New Product Survival Rate. Of all products that entered the BSR Top 100 in the past six months (new entrants are identifiable by first-available date), what percentage are still ranked in the Top 100 today? A survival rate below 30% suggests aggressive suppression, whether from incumbent advertising dominance, seasonal volatility, or structural ranking bias, making it genuinely difficult for new products to establish durable positions regardless of product quality. A survival rate above 60% indicates natural category metabolism: new products can enter, establish, and hold rankings, which is the foundational prerequisite for any viable new entry strategy. Computing this metric requires comparing BSR snapshots from at least two points in time; it is invisible to any single point-in-time data pull.
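A two-snapshot survival calculation might look like the sketch below. It simplifies the definition slightly by treating the new entrants seen in one earlier Top 100 snapshot as the cohort and checking which of them appear in the current Top 100. The row keys `asin` and `first_available` are assumed names for this example, not a documented schema.

```python
from datetime import date, timedelta


def new_entrants(snapshot: list[dict], as_of: date, lookback_days: int = 180) -> set[str]:
    """ASINs in an earlier snapshot whose first-available date falls inside the lookback window."""
    cutoff = as_of - timedelta(days=lookback_days)
    return {row["asin"] for row in snapshot if row["first_available"] >= cutoff}


def survival_rate(entrants: set[str], current_asins: set[str]) -> float:
    """Fraction of the earlier cohort still present in the current Top 100."""
    if not entrants:
        return 0.0
    return len(entrants & current_asins) / len(entrants)


# Hypothetical data: two recent launches and one long-established listing.
earlier = [
    {"asin": "A", "first_available": date(2024, 1, 10)},
    {"asin": "B", "first_available": date(2023, 12, 1)},
    {"asin": "C", "first_available": date(2020, 5, 1)},
]
cohort = new_entrants(earlier, as_of=date(2024, 3, 1))
rate = survival_rate(cohort, current_asins={"A", "C", "D"})
```

Here only one of the two recent launches is still ranked, so the rate is 50%. With more than two stored snapshots, the cohort could be built as the union of new entrants across all earlier pulls.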

The scoring table below translates these dimensions into a comparable, stackable score:

| Dimension | Low competition (5 pts) | Medium competition (3 pts) | High competition (1 pt) |
|---|---|---|---|
| BSR Concentration | Top-3 share <40% | 40–60% | >60% |
| Review Barrier | Median <500 | 500–2,000 | >2,000 |
| Price Band Density | Clear gaps exist | Partially crowded | All bands saturated |
| New Product Survival | >60% | 30–60% | <30% |

Score 14–20: Category is enterable — focus on the weakest dimension to shape differentiation approach. Score 8–13: High-risk category, viable only with a strong supply chain moat or patent protection. Score 7 or below: Recommend redirecting research to adjacent subcategories.

Why Live Data Changes What Amazon Category Competition Analysis Tells You

The scoring framework above is only as valuable as the data feeding it. Running the same framework on data from three weeks ago versus live data can produce materially different conclusions — especially on BSR concentration (which shifts as promotional campaigns launch and expire) and new product survival rate (which is invisible without two-point snapshot comparison).

The standard research workflow (pull from a subscription tool, export to a spreadsheet, manually derive metrics) has two structural limitations. First, subscription databases refresh on 24- to 72-hour cycles at best, meaning the BSR distribution you’re analyzing may have shifted since the last sync. Second, the metrics are predetermined by the tool’s data model. “New product survival rate” as defined above, the percentage of products that entered the Top 100 in the past six months and are still there, is not a native metric in any major subscription tool. You’d have to export historical data, reconcile ASINs across time periods, and compute it manually. Most teams don’t, so the survival rate dimension gets dropped or approximated, and that’s often precisely where the most important signal lives.

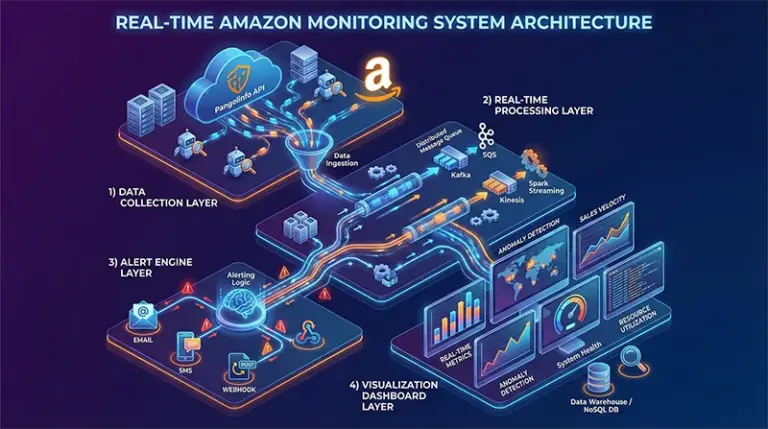

API-based live collection solves both problems. Pangolinfo Scrape API pulls current BSR data directly from Amazon’s pages at request time — no database intermediary, no refresh lag. Combined with a scheduled pull 30 or 90 days prior (stored and compared against the current pull), the survival rate calculation becomes fully automatable. The price band density and review barrier computations are immediate — they run directly on the current BSR output.
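Storing each pull is what makes the later comparison possible. The sketch below shows one way to persist snapshots as timestamped JSON files and read them back oldest first. The file layout, function names, and the `rows` payload shape are all assumptions for illustration; a database table would serve equally well.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def save_snapshot(rows: list[dict], directory: Path, category_id: str,
                  taken_at: Optional[datetime] = None) -> Path:
    """Write one BSR pull to <directory>/<category_id>_<UTC timestamp>.json."""
    taken_at = taken_at or datetime.now(timezone.utc)
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"{category_id}_{taken_at:%Y%m%dT%H%M%SZ}.json"
    payload = {"taken_at": taken_at.isoformat(), "rows": rows}
    path.write_text(json.dumps(payload), encoding="utf-8")
    return path


def load_snapshots(directory: Path, category_id: str) -> list[dict]:
    """Return all stored snapshots for a category, oldest first."""
    # Fixed-width timestamps in the filename sort chronologically as strings.
    paths = sorted(directory.glob(f"{category_id}_*.json"))
    return [json.loads(p.read_text(encoding="utf-8")) for p in paths]
```

A scheduled job would call `save_snapshot` after each pull; the survival calculation then reads the oldest and newest entries returned by `load_snapshots`.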

The comparison that matters most: subscription tools give you the metrics they chose to track. An API-based data layer gives you access to any public data point Amazon exposes, in whatever aggregation or temporal comparison your analysis requires. That’s not a marginal difference — it’s the difference between answering the questions the tool was designed for and answering the questions your sourcing decision actually needs.

From Difficulty Score to Differentiation Direction: The Data Workflow

The competition score tells you whether to enter. The differentiation direction tells you how. Both require real-time data — but from different endpoints.

For product-level differentiation signals: Run a live review collection on the BSR Top 10’s most recent 1–2 star reviews. Filter for reviews posted in the last 30 to 60 days. The complaint themes that appear consistently across multiple top competitors — and that none of them have visibly resolved in their listing updates — are the product engineering gaps that a differentiated new entrant can address. Pangolinfo Scrape API supports star-rating filtering and recency sorting in review collection, which means you can isolate exactly this signal set without wading through thousands of historical reviews that may no longer reflect the current product landscape.
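Once the recent low-star reviews are collected, finding complaints shared across competitors is a small aggregation step. The sketch below uses naive keyword matching; the theme dictionary, the function name, and the review fields `asin`, `stars`, and `text` are hypothetical, and a real pipeline would likely need better text matching than substring checks.

```python
from collections import defaultdict

# Hypothetical theme keywords for a coffee-maker category.
THEMES = {
    "leaks": ("leak", "drip"),
    "breaks": ("broke", "crack", "stopped working"),
    "noise": ("loud", "noisy"),
}


def shared_complaints(reviews: list[dict], themes: dict = THEMES,
                      min_asins: int = 2) -> dict[str, int]:
    """Themes raised in 1-2 star reviews of at least min_asins distinct products."""
    asins_by_theme = defaultdict(set)
    for review in reviews:
        if review["stars"] > 2:
            continue  # only low-star reviews carry the complaint signal
        text = review["text"].lower()
        for theme, keywords in themes.items():
            if any(keyword in text for keyword in keywords):
                asins_by_theme[theme].add(review["asin"])
    return {theme: len(asins) for theme, asins in asins_by_theme.items()
            if len(asins) >= min_asins}
```

Counting distinct ASINs rather than raw mentions keeps one badly reviewed product from dominating the result; a theme only surfaces when several top competitors share it.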

For traffic-level differentiation signals: Analyze the Sponsored Product ad placement coverage of Top 10 competitors. Which keyword clusters are they heavily investing in? Which related terms have thin or absent coverage? Ad placement gaps often correspond to organic ranking opportunities and frequently signal underserved demand segments — exactly the conditions where a new product can establish rankings without immediately hitting full PPC competition. Pangolinfo’s SP ad placement collection achieves a 98% capture rate across Amazon’s advertising placements, making it the most complete source for this analysis.
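The coverage-gap idea can be expressed as a simple ratio per keyword. In the sketch below, `ad_placements` maps each keyword to the set of ASINs observed in sponsored placements for it; that structure, the function name, and the 20% threshold are assumptions chosen for illustration.

```python
def coverage_gaps(ad_placements: dict[str, set[str]], competitors: set[str],
                  max_coverage: float = 0.2) -> list[tuple[str, float]]:
    """Keywords where at most max_coverage of tracked competitors hold a sponsored placement."""
    if not competitors:
        return []
    gaps = []
    for keyword, advertisers in ad_placements.items():
        coverage = len(advertisers & competitors) / len(competitors)
        if coverage <= max_coverage:
            gaps.append((keyword, coverage))
    # Thinnest coverage first.
    return sorted(gaps, key=lambda pair: pair[1])


# Hypothetical observations for five tracked competitors.
tracked = {"A", "B", "C", "D", "E"}
observed = {
    "coffee maker": {"A", "B", "C", "D"},
    "cold brew maker": {"A"},
    "travel coffee maker": set(),
}
gaps = coverage_gaps(observed, tracked)
```

In this made-up data the head term is heavily contested while the two related terms have thin or no coverage, which would make them candidates for closer demand checks.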

For teams monitoring multiple candidate categories simultaneously, AMZ Data Tracker provides the monitoring layer: configure BSR change alerts, review velocity notifications, and price movement triggers for any set of competitor ASINs. Instead of manually re-running category assessments, the system surfaces signals as they emerge — freeing analysts to focus on strategic interpretation rather than data retrieval.

Developer teams and tool builders can take this further by connecting Pangolinfo Amazon Scraper Skill — the MCP-compatible interface — directly to an AI Agent, enabling fully automated category assessment workflows where the AI calls live data endpoints during reasoning and generates scoring reports without human intervention in the data pipeline.

Implementation: Building the Four-Dimension Assessment Pipeline with Python

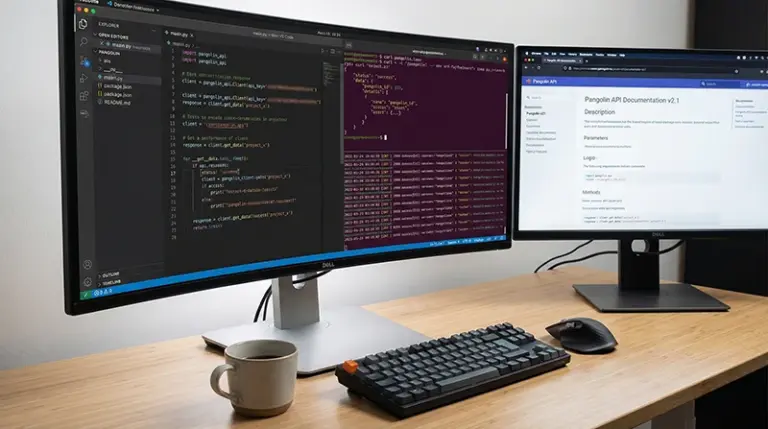

The following Python implementation runs the complete four-dimension Amazon market competitiveness evaluation against live data from Pangolinfo Scrape API:

    import json
    import os
    from collections import Counter
    from datetime import datetime, timedelta, timezone

    import requests

    API_KEY = os.getenv("PANGOLINFO_API_KEY")
    BASE_URL = "https://api.pangolinfo.com/v1/scrape"
    HEADERS = {"Authorization": f"Bearer {API_KEY}", "Content-Type": "application/json"}


    def fetch_live_bsr(category_id: str, location: str = "10001") -> list:
        """Pull real-time Amazon BSR data for a category node."""
        if not API_KEY:
            raise RuntimeError("Set the PANGOLINFO_API_KEY environment variable first")
        resp = requests.post(
            BASE_URL,
            json={"target": "amazon_bestsellers", "category_id": category_id,
                  "location": location, "output_format": "json"},
            headers=HEADERS, timeout=30,
        )
        resp.raise_for_status()
        return resp.json().get("results", [])


    def score_bsr_concentration(bsr: list, top_n: int = 3) -> tuple[float, int]:
        """BSR Concentration: estimated top-N share of Top-20 volume."""
        top20 = bsr[:20]
        # Rank proxy: linear weights stand in for sales volume. For a full
        # Top 20 this always yields 57/210 (about 27%), so substitute
        # per-listing sales estimates here whenever they are available.
        weights = [20 - i for i in range(len(top20))]
        total = sum(weights)
        top_n_total = sum(weights[:top_n])
        concentration = top_n_total / total if total else 0.0
        score = 5 if concentration < 0.4 else (3 if concentration <= 0.6 else 1)
        return round(concentration, 3), score


    def score_review_barrier(bsr: list) -> tuple[int, int]:
        """Review Barrier: median review count across Top-20 listings."""
        # `or 0` guards against listings whose review count is missing or null.
        counts = sorted(item.get("reviews_count") or 0 for item in bsr[:20])
        mid = len(counts) // 2
        if not counts:
            median = 0
        elif len(counts) % 2:
            median = counts[mid]
        else:
            # Even-sized sample: average the two middle values.
            median = (counts[mid - 1] + counts[mid]) // 2
        score = 5 if median < 500 else (3 if median <= 2000 else 1)
        return median, score


    def score_price_density(bsr: list, top_n: int = 50) -> tuple[str, float, int]:
        """Price Band Density: concentration in the modal $10 price bracket."""
        prices = []
        for item in bsr[:top_n]:
            try:
                p = float(str(item.get("price", "0")).replace("$", "").replace(",", ""))
                if p > 0:
                    prices.append(p)
            except (ValueError, TypeError):
                continue  # skip listings with a missing or unparseable price
        if not prices:
            return "N/A", 0.0, 3
        bands = Counter(f"${int(p // 10) * 10}–${int(p // 10) * 10 + 10}" for p in prices)
        dominant, count = bands.most_common(1)[0]
        density = count / len(prices)
        score = 5 if density < 0.35 else (3 if density <= 0.55 else 1)
        return dominant, round(density, 2), score


    def score_survival_rate(bsr: list, lookback_months: int = 6) -> tuple[float, int]:
        """
        New Product Survival Rate (proxy):
        fraction of BSR Top-100 products first available within the lookback window.
        For a true survival rate, compare two BSR snapshots; this proxy uses the
        first-available date as a heuristic for 'new entrant'.
        """
        top100 = bsr[:100]
        # Assumes date_first_available is an ISO-style string (YYYY-MM-DD), so
        # plain string comparison orders dates correctly.
        cutoff = (datetime.now() - timedelta(days=lookback_months * 30)).strftime("%Y-%m-%d")
        recent = [
            item for item in top100
            if (item.get("date_first_available") or "") >= cutoff
        ]
        # Divide by the number of listings actually returned, not a fixed 100.
        rate = len(recent) / len(top100) if top100 else 0.0
        score = 5 if rate > 0.6 else (3 if rate >= 0.3 else 1)
        return round(rate, 3), score


    def run_assessment(category_id: str, category_name: str) -> dict:
        """Full four-dimension Amazon category competition analysis."""
        print(f"\n📊 Starting Amazon Category Competition Analysis: {category_name}")
        bsr = fetch_live_bsr(category_id)
        if not bsr:
            return {"error": "BSR fetch failed"}

        concentration, s1 = score_bsr_concentration(bsr)
        median_reviews, s2 = score_review_barrier(bsr)
        dominant_band, density, s3 = score_price_density(bsr)
        survival, s4 = score_survival_rate(bsr)
        total = s1 + s2 + s3 + s4

        if total >= 14:
            verdict = "✅ Enterable: address weak dimensions in your differentiation plan"
        elif total >= 8:
            verdict = "⚠️ High-risk: viable only with supply chain moat or IP protection"
        else:
            verdict = "❌ Avoid: redirect to adjacent subcategories"

        report = {
            "category": category_name,
            # Timezone-aware UTC timestamp (datetime.utcnow() is deprecated).
            "timestamp": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
            "dimensions": {
                "bsr_concentration": {"value": f"{concentration:.1%}", "score": s1},
                "review_barrier": {"value": f"{median_reviews:,} reviews (median)", "score": s2},
                "price_band_density": {"value": f"{dominant_band} @ {density:.0%} density", "score": s3},
                "new_product_survival": {"value": f"{survival:.1%}", "score": s4},
            },
            "total_score": f"{total}/20",
            "verdict": verdict,
        }
        # ensure_ascii=False keeps the emoji and en dashes readable in the output.
        print(json.dumps(report, indent=2, ensure_ascii=False))
        return report


    if __name__ == "__main__":
        run_assessment("284507", "Kitchen - Coffee Makers (US)")

The data feeding this pipeline comes directly from Amazon’s live pages via Pangolinfo Scrape API — no subscription database, no refresh lag. Store the BSR output with a timestamp and compare against a re-run 30 or 90 days later to compute a true survival rate rather than the first-available-date proxy shown above.

Amazon Category Competition Analysis Ends at “How to Enter,” Not “Whether to Enter”

The four-dimension scoring framework gives you a calibrated picture of entry difficulty. But the most important output from this process isn’t the go/no-go decision — it’s the specific weak dimension that defines your differentiation entry angle. A category with high BSR concentration but low review barrier and a visible price gap is a completely different strategic scenario than a category with low concentration but near-zero new product survival. The score tells you the operating conditions; the differentiation direction tells you how to exploit them.

The quality of this entire Amazon market competitiveness evaluation depends on the freshness of its inputs. Running the framework on stale subscription data produces stale conclusions. Running it on live, API-sourced Amazon data — current BSR rankings, week-of review counts, today’s price distribution — produces conclusions you can actually act on without a secondary verification step. If you want to validate how this compares to your current research workflow, sign up for a free trial at Pangolinfo’s Console, run the four-dimension assessment on a category you know well, and compare the signal quality against what you’re currently working with.

Connect Pangolinfo Scrape API to your research workflow today — real-time Amazon BSR data, review metrics, and price distributions powering a quantified Amazon category competition analysis framework that replaces gut-feel with decision-grade intelligence.