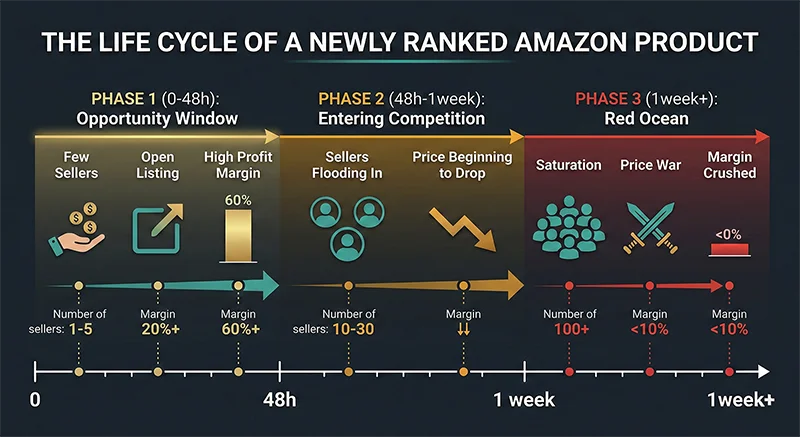

Here’s the dynamic that determines who wins in Amazon product sourcing: every day, new products quietly climb into bestseller lists across Amazon’s categories. In the first 48 hours after a product reaches that threshold, it exists in an unusual state — market demand has been validated by real sales velocity, but most competing sellers haven’t found it yet. Follow-selling slots are still open. Prices haven’t been driven down. The listing page isn’t yet crowded with a dozen alternatives.

That 48-hour window is what an effective Amazon bestseller tracker is designed to catch.

Most sellers operate outside it. When a newly ranked product shows up in their information flow — through seller communities, influencer posts, or peer recommendations — it’s already been on the bestseller list for 5-7 days. The window has closed. Follow-selling competition has consolidated. Price compression is underway.

The gap between “hearing about it five days later” and “catching it in the first 24 hours” is the gap between having monitoring infrastructure and not having it. It’s not about discipline or effort. It’s about what your system can detect, and when.

This article covers: how the bestseller ranking opportunity window works, what “hidden champion” ASINs are and why they’re the highest-value targets, why manual scanning structurally fails, how AMZ Data Tracker automates the full pipeline from data collection to AI-powered opportunity scoring, and how to evaluate follow-selling risk before committing.

Why Timing Is Everything in Amazon Bestseller Monitoring

If you trace back how you’ve discovered product opportunities in the past, you’ll likely find a pattern: by the time a promising ASIN reaches you through normal information channels, it’s already been ranked for at least a week.

This isn’t coincidence — it’s the structure of information propagation:

Hour 0: Product first enters the bestseller top 100. Only systems running automated tracking can detect this promptly.

Days 1-2: A handful of sellers with manual monitoring processes notice it and begin evaluation. The first follow-sellers trickle in.

Days 3-5: Active community members, analysts, and content creators pick up the signal and share it. A wave of sellers begins evaluating the product at the same time.

Days 5-7+: The product appears in your community feeds. You open the listing — there are already 12 follow-sellers, and prices are 15-20% lower than the original launch price.

Every stage of that chain is structurally inevitable — except the first. Whether you detect a newly ranked product in hour 1 or day 7 depends entirely on your monitoring infrastructure.

Why Manual Bestseller Scanning Structurally Fails

Many sellers do check bestseller lists daily. The problem isn’t effort — manual scanning has four structural limitations that discipline cannot overcome:

| Limitation | Specific Problem | Consequence |
|---|---|---|
| Frequency ceiling | Once daily vs. system-level hourly updates | Up to 24 hours of missed new-entry signals |
| Scope limitation | Humans can realistically track 1-3 categories | Cross-category opportunities systematically missed |
| No historical comparison | Can’t tell if an ASIN is genuinely “newly ranked” | Old stable products mistaken for new opportunities |
| No AI pre-filtering | Every ASIN requires manual individual evaluation | Low efficiency; high-potential products get skipped |

The third limitation is the most fundamental. When you manually check a bestseller list, you see the ranking but you have no way of knowing whether the ASIN at position #47 has been there for two years or two days. Without historical comparison data, “scanning the bestseller list” is actually “looking at a static snapshot of existing bestsellers” — not at all the same thing as identifying new entries.

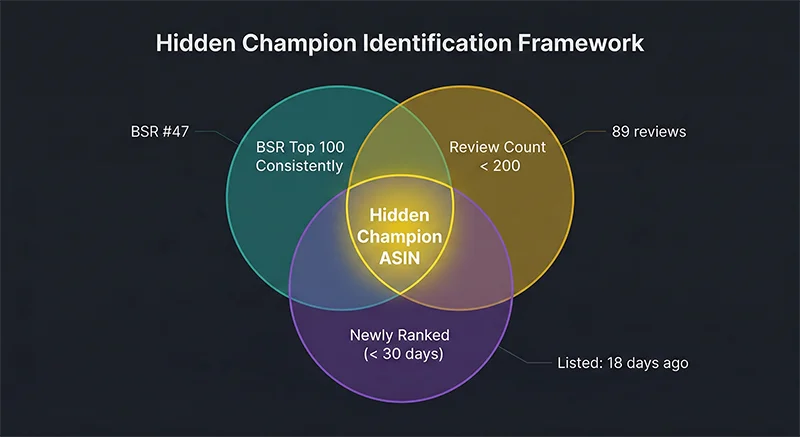

The Hidden Champion: What Newly Ranked Low-Review ASINs Signal

Not every newly ranked ASIN deserves equal attention. Within the group of products that recently entered bestseller rankings, a specific subset carries the highest opportunity density: the hidden champion.

Three defining characteristics intersect to create this category: consistent top-100 BSR performance, low review count (typically under 200, often under 100), and recent entry — listed within the past 30 days or recently achieved its current ranking level.

Why does this intersection matter so much?

Demand is market-validated. BSR performance is driven by real sales velocity, which is hard to fake at scale over a sustained period. If a product is consistently ranked in the top 100, it’s selling. The demand signal is reliable.

Social proof barriers haven’t calcified. A product with 80 reviews hasn’t locked up buyer decision-making the way a product with 5,000 reviews has. There’s still genuine competitive openness — a new entrant can win the buy box and accumulate reviews against this baseline.

Cognitive bias protects the window. Most sellers evaluate follow-selling candidates by review count. A product with “only 80 reviews” doesn’t register as a dominant competitor in most sellers’ mental models — even if it’s genuinely outselling alternatives. This misperception is precisely what creates and sustains the opportunity window.

Differentiation development has room. Low review counts mean the category’s improvement space hasn’t been systematically mined by competitors. An analysis of negative reviews across existing products will surface addressable pain points that no current seller has effectively resolved — the foundation of a differentiated product strategy rather than pure follow-selling.

Why Identifying Hidden Champions Requires Automated Tracking

Of the three criteria above, the third (recent entry) cannot be applied without historical comparison data. Specifically:

You can check BSR and review count from any current listing. But you cannot determine from a static view whether an ASIN is “newly ranked” — meaning it has recently entered the top 100 for the first time, as opposed to being a stable incumbent that has occupied that position for years.

This is the data problem that makes manual scanning structurally insufficient for hidden champion identification. You need a system that continuously records bestseller data over time, then compares each new collection against historical records to identify what’s new. That’s the technical core of automated Amazon bestseller tracking.
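The comparison step can be sketched in a few lines of Python. This is a minimal illustration of the idea, not AMZ Data Tracker's actual implementation: the `Snapshot` type, the `newly_ranked` function, and the ASINs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One collection of a bestseller list (hypothetical structure)."""
    collected_on: str        # ISO date of the collection run
    asins: tuple[str, ...]   # ASINs in rank order (index 0 = rank 1)


def newly_ranked(history: list[Snapshot], current: Snapshot) -> list[str]:
    """Return ASINs in `current` that never appeared in any earlier snapshot."""
    seen_before: set[str] = set()
    for snap in history:
        seen_before.update(snap.asins)
    # Keep rank order so the result reads like the list itself.
    return [asin for asin in current.asins if asin not in seen_before]


# Made-up ASINs for illustration only.
history = [
    Snapshot("2024-05-01", ("B000000001", "B000000002", "B000000003")),
    Snapshot("2024-05-02", ("B000000002", "B000000001", "B000000003")),
]
today = Snapshot("2024-05-03", ("B000000002", "B000000004", "B000000001"))

print(newly_ranked(history, today))  # ['B000000004']
```

Note that with an empty history every ASIN counts as new, so under this sketch the flag only becomes meaningful from the second collection onward.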

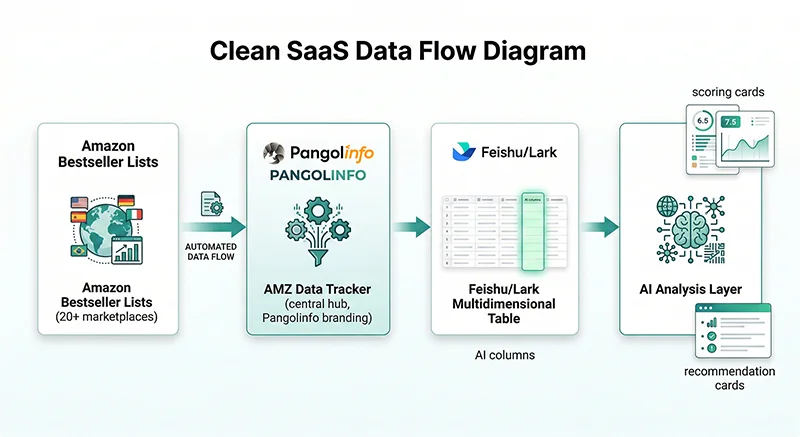

AMZ Data Tracker: Automating the Full Bestseller-to-Decision Pipeline

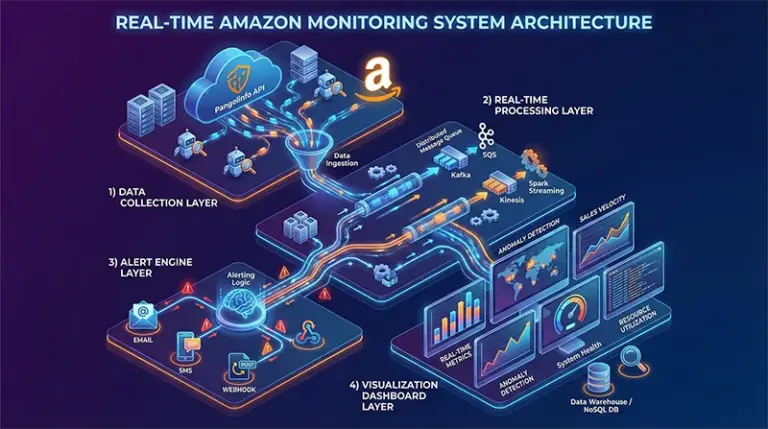

AMZ Data Tracker compresses this entire process into an automated system with three functional layers:

Layer 1: Automated Data Collection

Configure tracking for Amazon bestseller lists across any combination of category and marketplace. Coverage spans 20+ Amazon sites — including US, UK, DE, JP, AU, MX, CA, and others. The system supports Best Sellers, New Releases, Movers & Shakers, and Hot New Releases list types.

Execution frequency options: manual, daily, or weekly. For bestseller opportunity scanning, daily execution means the system automatically runs a full bestseller collection and new-entry identification every 24 hours with no manual intervention required.

Layer 2: Feishu Multidimensional Table Integration

Collected data syncs automatically to your Feishu workspace. The data is entirely private — it lives in your Feishu account, not on Pangolinfo’s servers. Your competitive monitoring data, selection criteria, and business insights are not visible to any third party.

Data fields are configurable: ASIN, product title, BSR rank, review count, rating, price, and — most importantly — a system-generated “newly ranked” flag that automatically identifies ASINs appearing for the first time in the current collection versus all previous records.

Layer 3: AI-Powered Analysis

The Feishu AI columns are where the system delivers its core differentiation. For each newly ranked ASIN, AI automatically runs three analytical passes:

- Hidden champion qualification: Yes/No determination based on your configured business rules (review count range, BSR threshold, rating floor)

- Qualification rationale: Natural language explanation of which criteria were met and which weren’t — making the decision logic transparent and auditable

- Product upgrade recommendations: For qualified hidden champions, AI generates differentiation direction suggestions based on competitive review analysis

Reading the Output: What AMZ Data Tracker’s AI Analysis Looks Like in Practice

The screenshot above shows actual output from AMZ Data Tracker’s Feishu integration. The key columns to understand:

“本次筛选” (Current Filter): The system’s automated new-entry detection. ASINs appearing for the first time in the tracked category are tagged “新上榜” (newly ranked). This is the field that makes historical comparison concrete and visible.

“隐形冠军判断依据” (Hidden Champion Basis): The AI’s reasoning for each ASIN, stating in readable natural language which criteria were met and which weren’t. Example from the screenshot: for ASIN B0DHCWJX29, which has 2,169 reviews, the AI notes that the product satisfies the sub-5-year product age condition but would also need a review count above 100 and below 1,000 to fully qualify as a hidden champion.

“隐形冠军判断” (Hidden Champion Determination): The binary Yes/No output. For ASIN B07JL74R2J with 58,043 reviews, the determination is No — the review count substantially exceeds the configured threshold, correctly excluding a mature established product from the hidden champion pool.

“冠军产品升级优化建议” (Champion Product Upgrade Recommendations): Differentiation direction suggestions for qualified products — drawn from customer review analysis of competing ASINs. These recommendations are the starting point for product development decisions rather than pure follow-selling.

Configuring Custom Business Rules

The AI analysis fields operate against your own rule configuration, not generic defaults. Configurable parameters include:

- Review count range (minimum and maximum thresholds)

- Minimum rating requirement

- BSR rank ceiling

- Price range filters

This means you’re encoding your own sourcing judgment model into the system — not outsourcing decisions to an algorithm with unknown criteria. The AI applies your rules and explains its reasoning.
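To make "the AI applies your rules and explains its reasoning" concrete, here is a rough rule-based sketch of the same pattern: a yes/no determination plus a readable rationale. It is not how the Feishu AI columns are implemented. The `Rules`, `Product`, and `evaluate` names, the default thresholds, and the sample products are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rules:
    """Hypothetical thresholds; replace with your own sourcing criteria."""
    min_reviews: int = 0
    max_reviews: int = 200
    min_rating: float = 4.0
    max_bsr_rank: int = 100
    min_price: float = 10.0
    max_price: float = 60.0


@dataclass(frozen=True)
class Product:
    asin: str
    reviews: int
    rating: float
    bsr_rank: int
    price: float


def evaluate(product: Product, rules: Rules) -> tuple[bool, list[str]]:
    """Return (qualifies, rationale); the rationale lists every check and its result."""
    checks = [
        ("review count",
         rules.min_reviews <= product.reviews <= rules.max_reviews,
         f"{product.reviews} (allowed {rules.min_reviews}-{rules.max_reviews})"),
        ("rating",
         product.rating >= rules.min_rating,
         f"{product.rating} (minimum {rules.min_rating})"),
        ("BSR rank",
         product.bsr_rank <= rules.max_bsr_rank,
         f"#{product.bsr_rank} (ceiling #{rules.max_bsr_rank})"),
        ("price",
         rules.min_price <= product.price <= rules.max_price,
         f"{product.price:.2f} (allowed {rules.min_price:.2f}-{rules.max_price:.2f})"),
    ]
    rationale = [f"{'PASS' if ok else 'FAIL'} {name}: {detail}" for name, ok, detail in checks]
    return all(ok for _, ok, _ in checks), rationale


rules = Rules()
# Invented product: a mature listing with a very high review count.
mature = Product("B0EXAMPLE1", reviews=58043, rating=4.6, bsr_rank=12, price=24.99)
qualifies, rationale = evaluate(mature, rules)
print(qualifies)      # False
print(rationale[0])   # FAIL review count: 58043 (allowed 0-200)
```

Returning every check, not just the first failure, keeps the decision auditable: you can see at a glance which thresholds a near-miss product would need to clear.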

Follow-Selling Risk Assessment: Not Every New ASIN Is Worth It

The AI output tells you which products have potential. The final follow-selling decision requires additional risk evaluation across four dimensions:

Dimension 1: Brand Protection Status

This is the hardest gate. If an ASIN’s brand has Amazon Brand Registry active and an enforcement posture against third-party sellers, the follow-selling risks are serious:

- ASIN removal petitions (brand files counterfeit or IP infringement claims)

- Account warning or suspension in severe cases

- Inventory stranded in FBA with no appeal path

How to check: before listing against any ASIN, confirm (1) whether existing third-party sellers are already present on the listing, which indicates it is currently accessible; (2) whether the brand name has a Brand Registry symbol; and (3) whether the brand has a history of IP enforcement actions, which you can look up with tools like IP Alert.

Dimension 2: Pessimistic Margin Viability

A newly ranked product’s current price is not a stable price. When follow-sellers enter simultaneously, pricing compresses as sellers compete to win the buy box. Projecting margins at current price systematically overestimates the actual economics you’ll operate within.

Stress-test approach:

- Stress-test price: current listing price × 0.85 (a pessimistic estimate of the stable price after follow-seller pressure)

- Calculate fully-loaded margin at this price after deducting FBA fees, inbound freight, COGS, and an advertising allowance

- Threshold: if net margin at the stress-tested price is below 8%, the project’s risk/return profile is unfavorable
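The stress test above reduces to a short calculation. The sketch below assumes all costs are per-unit and in the same currency as the price; the function name and the sample figures are made up for illustration. The cost list mirrors the four items named above, so any other per-unit fees you pay would need to be added.

```python
def stress_tested_margin(
    current_price: float,
    fba_fees: float,
    inbound_freight: float,
    cogs: float,
    ad_allowance: float,
    price_factor: float = 0.85,
) -> float:
    """Net margin as a fraction of the stress-tested price, after the listed per-unit costs."""
    stressed_price = current_price * price_factor
    total_cost = fba_fees + inbound_freight + cogs + ad_allowance
    return (stressed_price - total_cost) / stressed_price


# Invented example figures.
margin = stress_tested_margin(
    current_price=30.00,
    fba_fees=6.50,
    inbound_freight=1.20,
    cogs=9.00,
    ad_allowance=4.00,
)
print(f"{margin:.1%}")                                  # 18.8%
print("viable" if margin >= 0.08 else "unfavorable")    # viable
```

Here the stressed price is 30.00 × 0.85 = 25.50, total cost is 20.70, and the margin is 4.80 / 25.50, comfortably above the 8% threshold. The same product with thinner unit economics can flip to unfavorable even when the margin at the current price looks healthy.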

Dimension 3: Supply Chain Replicability

Follow-selling requires sourcing an identical or near-identical product in reasonable time. Certain product types are structurally difficult to replicate quickly:

- Products requiring specialized manufacturing processes or materials (proprietary coatings, patented connection systems)

- Products requiring regulatory certifications for marketplace eligibility (CE/FCC/UL/etc.)

- Highly customized products where visual similarity creates intellectual property exposure

Dimension 4: Competition Saturation Timing

Use current follow-seller count and timing to assess window status:

| Follow-seller count | Time since ranking | Window status | Recommendation |
|---|---|---|---|
| 0-3 sellers | 0-48 hours | 🟢 Prime window | Fast evaluation, rapid entry |
| 4-10 sellers | 3-7 days | 🟡 Window narrowing | Requires price or quality differentiation |
| 10+ sellers | 1+ week | 🔴 Price war territory | Full margin analysis required before committing |
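The table can be encoded as a small classifier. The sketch below makes assumptions the table leaves open: when seller count and elapsed time point to different rows, it takes the more pessimistic status; exactly 10 sellers counts as "narrowing"; and the 48-hour-to-3-day gap is treated as "narrowing" too. All names are hypothetical.

```python
from enum import IntEnum


class Window(IntEnum):
    PRIME = 0        # fast evaluation, rapid entry
    NARROWING = 1    # requires price or quality differentiation
    PRICE_WAR = 2    # full margin analysis before committing


def by_seller_count(follow_sellers: int) -> Window:
    if follow_sellers <= 3:
        return Window.PRIME
    if follow_sellers <= 10:   # assumption: exactly 10 still counts as narrowing
        return Window.NARROWING
    return Window.PRICE_WAR


def by_hours_ranked(hours: float) -> Window:
    if hours <= 48:
        return Window.PRIME
    if hours <= 7 * 24:        # assumption: 48 hours to 7 days is all "narrowing"
        return Window.NARROWING
    return Window.PRICE_WAR


def window_status(follow_sellers: int, hours_since_ranking: float) -> Window:
    """Assumption: when the two signals disagree, use the more pessimistic one."""
    return max(by_seller_count(follow_sellers), by_hours_ranked(hours_since_ranking))


print(window_status(2, 12).name)     # PRIME
print(window_status(2, 120).name)    # NARROWING
print(window_status(12, 24).name)    # PRICE_WAR
```

Taking the worse of the two signals is a conservative choice: a listing that already has 12 follow-sellers after one day is treated as price war territory regardless of how recently it ranked.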

Follow-Selling vs. Differentiated Development: Choosing the Right Path

Identifying a hidden champion doesn’t lock you into pure follow-selling. The AI upgrade recommendation field is the entry point for a different strategy: building a differentiated version of the product that addresses documented buyer pain points in existing listings.

- Pure follow-selling: Faster time-to-revenue, lower differentiation investment, higher sensitivity to price competition and brand enforcement risk. Best for teams with responsive supply chains and high inventory turnover tolerance.

- Differentiated development: Requires systematic analysis of competitor reviews to identify unresolved pain points. Longer development cycle, but creates defensible positioning. Pangolinfo Reviews Scraper API supports bulk ASIN review extraction with structured output including Amazon’s Customer Says aggregated insight tags — the data foundation for this analysis.

The two paths aren’t mutually exclusive. For a newly ranked hidden champion in the 48-hour window, a common approach is to enter as a follow-seller immediately to capture early-window margin, while simultaneously running the review analysis to inform a differentiated product development timeline.

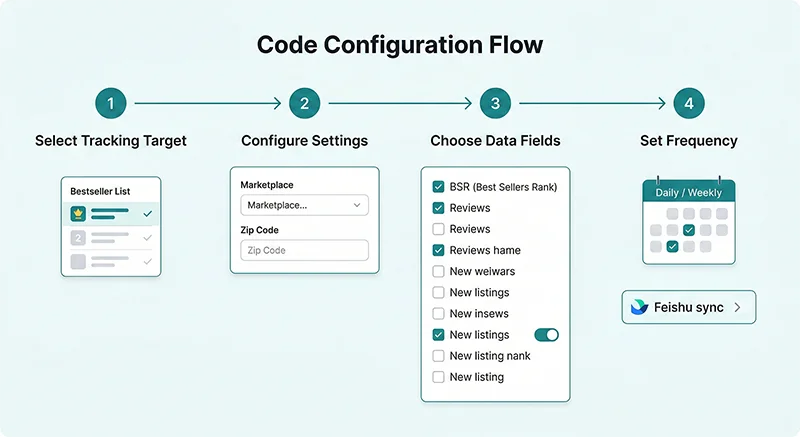

4-Step Setup: Building Your Amazon Bestseller Tracker

The full configuration is completed without code in the AMZ Data Tracker console:

Step 1: Select Your Tracking Target

Choose “Best Sellers” as your tracking type. Additional tasks for New Releases or Movers & Shakers can run in parallel, each with its own configuration.

Step 2: Configure Settings

Select your target marketplace (Amazon.com for US, Amazon.co.uk for UK, etc.), configure the ZIP/postal code for marketplace-appropriate delivery context, and add your target categories. Multiple categories can be added per task, and bulk import from Excel is supported.

Step 3: Choose Data Fields

Under “Best Seller Data” fields, select:

- ASIN, product title, BSR rank

- Review count and rating

- Current price

- New listing flag (system auto-generates based on historical comparison)

Step 4: Set Frequency and Sync Method

Set execution frequency to “Daily.” Under output, choose “Sync to Feishu.” After the first sync, add AI analysis columns to your Feishu table — configuring hidden champion qualification rules against your defined thresholds for review count, BSR range, and rating. The system then runs the full pipeline automatically each day.

Free-tier accounts include sufficient credits for initial testing: Start free with AMZ Data Tracker →

The Core Shift

The difference between catching an opportunity in its first 24 hours and hearing about it seven days later isn’t discipline or attention — it’s monitoring infrastructure. Manual processes cannot maintain the detection frequency and historical comparison capability required to consistently find newly ranked ASINs before the competition does.

Hidden champion identification adds a layer of precision to the raw detection: within the universe of newly ranked ASINs, filtering for those with structurally low competitive barriers while demand is confirmed — the products where your entry has the best economics.

Follow-selling risk assessment closes the loop: ensuring that favorable-looking opportunities are genuinely viable before inventory is committed, with explicit evaluation of brand protection exposure, stress-tested margin, supply chain access, and competition saturation timing.

AMZ Data Tracker automates the first two steps. Your judgment handles the third. That’s an efficient use of both machine and human capability — and it’s available for evaluation right now.

Frequently Asked Questions

Which Amazon marketplaces does AMZ Data Tracker support for bestseller tracking?

AMZ Data Tracker covers 20+ Amazon marketplaces including Amazon.com (US), Amazon.co.uk (UK), Amazon.de (Germany), Amazon.co.jp (Japan), Amazon.com.au (Australia), Amazon.com.mx (Mexico), Amazon.ca (Canada), Amazon.fr (France), Amazon.it (Italy), and others. Multiple marketplaces can be tracked simultaneously, enabling cross-marketplace bestseller comparison in a single Feishu workspace.

How does the system identify “newly ranked” ASINs versus stable bestsellers?

The system automatically compares each new data collection against all previous records for the same category and marketplace. ASINs that appear in the current collection but were absent from prior records — or that have newly entered the configured rank threshold range — are automatically tagged as newly ranked in the Feishu output. No manual comparison is required.

Is my competitive intelligence data private?

Yes. All collected data, configured business rules, and AI analysis results are stored in your Feishu workspace, governed by your Feishu account permissions. Pangolinfo’s servers do not retain copies of your tracking data or analysis outputs. Your competitive intelligence is completely private to your workspace.

What are the primary follow-selling risks on Amazon to evaluate?

The four critical dimensions: (1) Brand protection status — whether the brand has Amazon Brand Registry and an enforcement posture against third-party sellers; (2) Margin viability under pessimistic pricing — projecting margins at 85% of current price to account for follow-seller compression; (3) Supply chain replicability — whether you can source a comparable product within acceptable timelines; (4) Competition saturation timing — how many follow-sellers have already entered and how recently the product ranked. Products with 0-3 existing follow-sellers in the 0-48 hour window represent the best entry conditions.