Every year, thousands of sellers launch products on Amazon. Every year, a large portion of those products quietly disappear within three months of going live. When you trace these failures back to their origin, the same culprit appears with startling frequency: a flawed product research decision made before a single unit was manufactured.

The internet is full of Amazon product research advice. Most of it focuses on the same thing: how to find opportunities. Look for low-review categories with high search volume. Scan the BSR Top 100 for gaps. Use a tool to estimate monthly sales. These frameworks aren’t useless, but they share a critical blind spot — they’re optimized for discovery, not for filtration.

The distinction matters enormously in 2026. When nearly every competitive seller has access to the same data tools and the same research playbooks, the edge no longer comes from finding opportunities first. It comes from correctly rejecting the opportunities that look real but aren’t. That’s what the five rules in this article are designed to do.

These aren’t guidelines assembled from theory. They’re iron rules reverse-engineered from the most common and expensive product research failures — the ones that were predictable in hindsight, and preventable with the right analytical framework upfront. Each rule comes with a real failure pattern, a counter-intuitive implication, and a concrete validation method you can apply today.

Why Amazon Product Research Intuition Is Breaking Down in 2026

Before 2022, experienced Amazon sellers could rely on market instinct developed over years of observation. Spot a trending category, check the review counts, estimate margins, and move. The speed advantage went to whoever developed the fastest instincts. That era is functionally over.

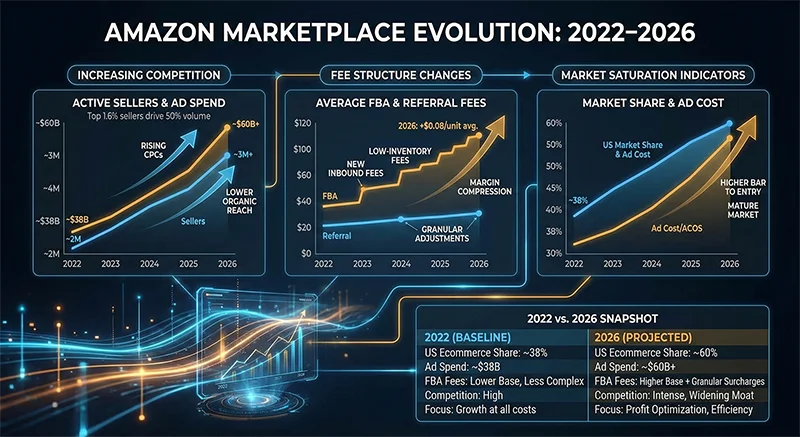

Three structural changes have fundamentally altered the product research landscape. First, information democratization: the widespread adoption of data tools means that any category showing “opportunity” signals in structured data is simultaneously visible to thousands of competitors. The window from discovery to saturation has compressed from months to weeks.

Second, platform cost structure evolution: Amazon has significantly adjusted FBA fee structures in 2024 and 2025, with continued pressure in 2026 particularly affecting high-volume/low-margin product categories. Products that had workable margins under the 2022 cost model are now structurally unprofitable at the same price points. Any product research methodology that doesn’t account for this cost reality is working with an outdated map.

Third, and most consequentially for product research, is the maturation of buyer expectations. The effective rating threshold for new product survival has shifted. Products launching in competitive categories today need to sustain 4.3+ ratings to remain visible — and sustaining that requires products that genuinely address real user needs, not just aesthetically differentiated versions of what already exists.

In this environment, the five rules below represent not best practices but survival requirements — the minimum conditions under which any Amazon product research decision can be considered defensible.

The 5 Iron Rules of Amazon Product Research in 2026

Rule 1: Demand Must Be Structural, Not Event-Triggered

The most seductive trap in Amazon product research is confusing spike demand with stable demand. During COVID-19, home fitness equipment, sanitizers, and masks generated extraordinary sales data on Amazon — data that launched entire product lines and left warehouses full of inventory when the cycle ended. The category-wide BSR charts from that period looked like textbook product opportunities right up until they didn’t.

The more insidious version of this mistake plays out with viral products: a TikTok video drives explosive search volume, the tool-generated monthly sales estimates look extraordinary, sellers rush in — and by the time goods arrive at FBA warehouses, the moment has passed. The demand was real; it just wasn’t durable.

The consistent validation method is to pull 24 months of BSR history rather than 30- or 90-day snapshots. Structurally sound demand looks like stable oscillation within a reasonable range, with predictable seasonal variation. Event-triggered demand shows a sharp improvement in rank (a lower BSR number means stronger sales) followed by a return to pre-event levels, or outright collapse. If the BSR chart resembles a cardiac monitor reading, treat the underlying demand as unvalidated until you can identify what’s actually driving purchases independent of the triggering event.
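As a rough illustration of that 24-month check, the sketch below labels a single product's history. It assumes you already have one average BSR value per month, oldest first. The function name and the `spike_ratio` threshold of 4.0 are illustrative assumptions, not values from any tool, and should be tuned per category.

```python
from statistics import median

def classify_bsr_history(monthly_bsr, spike_ratio=4.0):
    """Label a BSR history as 'structural' or 'event-triggered'.

    monthly_bsr: monthly average BSR values, oldest first (lower = better).
    spike_ratio: hypothetical threshold; how many times stronger the best
                 month may be than the typical month before we call it a spike.
    """
    if len(monthly_bsr) < 24:
        raise ValueError("need at least 24 months of history")
    typical = median(monthly_bsr)
    best = min(monthly_bsr)
    # A best month far stronger than the typical month suggests a short-lived
    # spike (or a collapse after an early peak) rather than stable oscillation.
    if typical / best >= spike_ratio:
        return "event-triggered"
    return "structural"

# Illustrative data only: a steady seller and a product with a brief viral spike.
stable = [5000, 5400, 4700, 5200, 6000, 4100] * 4
viral = [40000] * 10 + [2000, 3000, 8000, 20000] + [40000] * 10

stable_label = classify_bsr_history(stable)
viral_label = classify_bsr_history(viral)
```

A median-versus-best ratio is deliberately crude. It tolerates modest seasonal swings but would mislabel strongly seasonal products, so treat the label as a prompt for a closer look at the chart, not a verdict.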

Tools like AMZ Data Tracker enable long-horizon BSR tracking across competitor products within a category, making this 24-month pattern check a routine part of the research workflow rather than a manual data-gathering exercise.

Rule 2: Category Concentration — Not Review Count — Is the Real Competitive Moat

The “low review count = low competition” heuristic has been the dominant gating criterion in Amazon product research for years. It deserves to be retired. Review count is a historical artifact reflecting past sales volume, not a reliable indicator of current market concentration or future competitive dynamics.

Consider a category where the top 10 products each have fewer than 300 reviews — the traditional “safe” threshold — but three of those products are owned by the same seller or brand, combined representing 65% of category revenue. That category is effectively oligopolistic. No amount of launch budget will overcome the compounding advantages of a dominant player: superior conversion rate from accumulated social proof, better organic ranking from sustained sales velocity history, and likely a pricing advantage from supply chain scale. The low review counts masked a highly concentrated market structure.

The counterintuitive implication deserves emphasis: high-review categories can actually be safer entry points if review distribution is genuinely dispersed. Heavy review counts confirm real, sustained demand. And if those reviews are spread across dozens of sellers with no single entity holding more than 5–8% market share, no single brand has captured the category, and there is still room for a well-differentiated entrant. A sparse-review category with concentrated ownership is a significantly worse research outcome than a mature category with distributed competition.
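To make the concentration idea concrete, here is a minimal sketch that groups listings by brand before computing revenue share, since the point above is that several listings can belong to one owner. The revenue figures are made-up estimates and the function names are hypothetical.

```python
from collections import defaultdict

def brand_revenue_shares(listings):
    """Return {brand: share of category revenue}.

    listings: iterable of (brand, estimated_monthly_revenue) pairs.
    Revenue estimates are assumed inputs; how you derive them is up to you.
    """
    totals = defaultdict(float)
    for brand, revenue in listings:
        totals[brand] += revenue
    category_total = sum(totals.values())
    if category_total <= 0:
        raise ValueError("category revenue must be positive")
    return {brand: rev / category_total for brand, rev in totals.items()}

def top_n_share(shares, n=3):
    """Combined share of the n largest brands."""
    return sum(sorted(shares.values(), reverse=True)[:n])

# Illustrative category: one brand owns three of the top ten listings.
listings = [("BrandA", 30000), ("BrandA", 20000), ("BrandA", 15000)]
listings += [(f"Brand{i}", 5000) for i in range(7)]

shares = brand_revenue_shares(listings)
largest_share = max(shares.values())   # single-brand dominance
top3 = top_n_share(shares)             # combined share of the three largest brands
```

In this made-up category the three BrandA listings add up to 65% of revenue, which is the kind of structure a per-listing review count would hide.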

Rule 3: Logistics Cost Is a Hard Constraint, Not an Optimization Variable

A common mistake in Amazon product research is treating logistics cost as something to “figure out later” once the product concept is validated. This approach consistently produces decisions that look viable in the planning spreadsheet and turn unprofitable in execution. The core problem is that sellers use base-case logistics assumptions — normal freight rates, standard FBA fees — when their financial model essentially requires best-case conditions to work.

The discipline required here is straightforward but psychologically difficult: run your margin analysis using worst-case logistics assumptions, not average or optimistic ones. For a 2026 analysis, that means: inbound freight at the 12-month peak rate (not current), FBA fees at 110% of the current published rate (building in buffer for the next adjustment), and carrying cost calculated on a 60-day inventory age assumption. If net margin under these conditions drops below 12%, the product’s financial foundation is structurally fragile — it’s profitable only when things go right, which logistics markets consistently fail to guarantee.
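The worst-case arithmetic can be sketched in a few lines. The 110% FBA multiplier, 60-day inventory age, and 12% floor come from the paragraph above. The parameter names, the per-day carrying cost input, and the `other_costs_per_unit` catch-all are assumptions added for illustration.

```python
MARGIN_FLOOR = 0.12  # minimum acceptable worst-case net margin

def worst_case_net_margin(price, unit_cost, peak_freight_per_unit,
                          current_fba_fee, carrying_cost_per_unit_per_day,
                          other_costs_per_unit=0.0):
    """Net margin under worst-case logistics assumptions.

    peak_freight_per_unit: inbound freight at the 12-month peak rate.
    current_fba_fee: today's published per-unit FBA fee (buffered to 110%).
    carrying_cost_per_unit_per_day: assumed storage plus capital cost per day.
    other_costs_per_unit: hypothetical catch-all, e.g. a referral fee.
    """
    fba_fee = current_fba_fee * 1.10                 # buffer for the next fee change
    carrying = carrying_cost_per_unit_per_day * 60   # 60-day inventory age
    total_cost = (unit_cost + peak_freight_per_unit + fba_fee
                  + carrying + other_costs_per_unit)
    return (price - total_cost) / price

# Illustrative numbers only.
margin = worst_case_net_margin(
    price=25.00,
    unit_cost=6.00,
    peak_freight_per_unit=2.50,
    current_fba_fee=5.00,
    carrying_cost_per_unit_per_day=0.01,
    other_costs_per_unit=3.75,
)
is_fragile = margin < MARGIN_FLOOR
```

With these inputs the costs total 18.35 against a 25.00 price, so the product clears the floor. Raising freight or unit cost by a few dollars is enough to flip `is_fragile`, which is the sensitivity the rule is meant to expose.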

The physical attributes that drive logistics cost should be treated as selection criteria with hard cutoffs during the product research phase, not as details left to negotiation with suppliers: weight, volumetric weight, material classification for hazmat, and packaging compressibility. Sellers who treat these as primary filters rather than afterthoughts avoid an entire class of margin-erosion surprises.

Rule 4: Never Enter a Category You Cannot Genuinely Understand from the Buyer’s Perspective

This rule is the one most frequently dismissed as obvious and most consistently violated in practice. The product research models that treat Amazon as a data game — find the numbers, check the margins, place the order — systematically underperform because they produce products without real differentiation, which in a competitive market means competing on price alone.

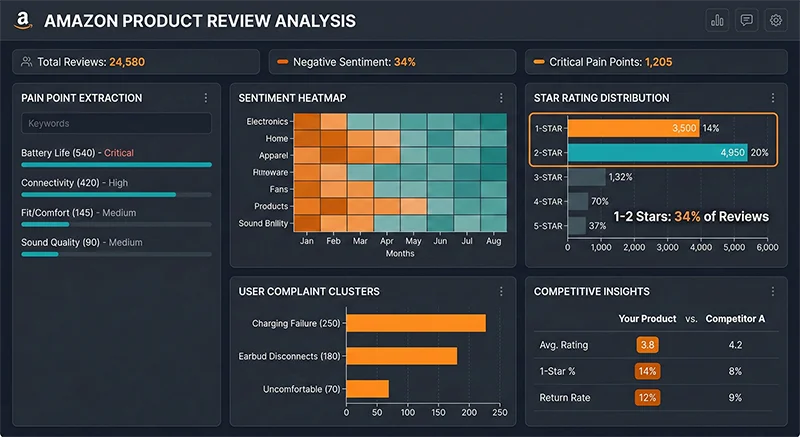

The differentiated products that consistently survive in competitive Amazon categories share one characteristic: their product improvements originate from genuine user pain point insights, not from cosmetic variation on existing competitors. And those insights come from taking competitive review analysis seriously as a research discipline, not as a box to check.

A concrete example: a seller entered the kitchen timer category because the BSR data looked favorable, without any real understanding of purchase drivers or user frustrations. The product was aesthetically differentiated but functionally identical to the field. It accumulated negative reviews concentrated on a problem the seller had never considered: alarm volume too high for households with young children. This frustration was explicitly documented across multiple competitor review sets and would have been obvious to anyone who ran a systematic analysis before finalizing the product spec. A competitor who had addressed this exact issue was generating significantly higher satisfaction scores as a result.

The validation test for this rule requires three specific answers: In what context does the target buyer actually use this product? What are the top three complaints users have with existing products — derived from review data, not assumption? Does your product address any of those complaints in a materially meaningful way? If any of these questions produces “I’m not sure” as an honest answer, that’s the research gap to fill before proceeding, not after.

For systematic competitive review analysis at scale, Reviews Scraper API enables bulk extraction of competitor reviews across multiple ASINs, including Amazon’s Customer Says aggregated tags — machine-learning-generated summaries of the highest-frequency themes in a product’s review corpus. These tags are one of the fastest available entry points into what buyers actually care about and complain about, which is precisely the data needed to pass Rule 4.
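Once competitor reviews are collected, finding the top three complaints can start as a simple tally. The sketch below assumes each review is a dict with `rating` and `text` keys. That shape, the keyword map, and the sample reviews are all hypothetical and do not reflect the output schema of any particular API.

```python
from collections import Counter

# Hypothetical theme-to-phrase map for a kitchen timer category.
COMPLAINT_KEYWORDS = {
    "alarm too loud": ["too loud", "loud alarm", "woke the baby"],
    "weak magnet": ["magnet", "falls off"],
    "hard to read display": ["hard to read", "dim display"],
    "battery life": ["battery"],
}

def top_complaints(reviews, keywords=COMPLAINT_KEYWORDS, max_rating=3, n=3):
    """Count complaint themes in low-rated reviews and return the n most common.

    Each review counts at most once per theme, so one long rant does not
    outweigh several short reviews.
    """
    counts = Counter()
    for review in reviews:
        if review["rating"] > max_rating:
            continue  # only low-rated reviews are treated as complaints
        text = review["text"].lower()
        for theme, phrases in keywords.items():
            if any(phrase in text for phrase in phrases):
                counts[theme] += 1
    return counts.most_common(n)

# Made-up sample reviews.
reviews = [
    {"rating": 2, "text": "Alarm is way too loud, woke the baby twice."},
    {"rating": 1, "text": "Too loud for a small apartment."},
    {"rating": 3, "text": "Magnet is weak and it falls off the fridge."},
    {"rating": 5, "text": "Great timer, battery lasts forever."},
    {"rating": 2, "text": "Display is hard to read and too loud."},
]

ranked = top_complaints(reviews)
```

Keyword matching is a blunt instrument and will miss phrasing it was not told about, so it works best as a first pass that tells you which reviews to read in full.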

Rule 5: BSR Data Only Has Meaning in a Multi-Marketplace Context

The default scope of Amazon product research for most English-speaking sellers is the US marketplace, occasionally supplemented by UK data. That scope is a real analytical limitation in 2026: the gap in maturity between Amazon’s marketplaces is one of the clearest remaining structural sources of opportunity in cross-border ecommerce.

A category’s competitive dynamics in the US marketplace are not predictive of its structure in Germany, Japan, or Australia. Categories that are fully saturated in the US often exist in early-stage development in specific other marketplaces — fewer competitors, less established brand presence, but equivalent underlying consumer demand. Sellers who conduct product research exclusively through a US-centric lens systematically miss these opportunities.

More importantly for product research validation purposes, multi-marketplace corroboration is the strongest available evidence that a demand pattern is structurally robust. A product with stable BSR performance across US, Germany, Japan, and Australia simultaneously has demand that isn’t dependent on a single cultural context, a specific market event, or a platform-specific algorithmic factor. This cross-market confirmation dramatically increases confidence in the durability of the demand being researched. A product supported only by single-marketplace data carries genuine uncertainty about whether it reflects a universal need or a localized anomaly.
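One way to sketch this corroboration check is to measure relative BSR variation within each marketplace, because absolute BSR values are not comparable across catalogs of different sizes. The coefficient-of-variation approach, the `max_cv` threshold of 0.35, and the sample series are assumptions chosen for illustration.

```python
from statistics import mean, pstdev

def stable_marketplaces(bsr_by_marketplace, max_cv=0.35):
    """Return the marketplaces where BSR stays within a stable relative range.

    bsr_by_marketplace: {marketplace_code: [monthly BSR values]}.
    max_cv: hypothetical cap on the coefficient of variation (stdev / mean).
    """
    stable = []
    for marketplace, series in bsr_by_marketplace.items():
        cv = pstdev(series) / mean(series)
        if cv <= max_cv:
            stable.append(marketplace)
    return stable

# Made-up series, shortened to six months to keep the example readable.
history = {
    "US": [5000, 5400, 4700, 5200, 6000, 4100],
    "DE": [900, 1000, 1100, 950, 1050, 1000],
    "JP": [30000, 2000, 28000, 31000, 29000, 30000],  # one-month spike
}

confirmed = stable_marketplaces(history)
```

Here the US and German series pass while the Japanese series, with its single-month spike, does not. The count of confirmed marketplaces is what you would compare against your own minimum.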

Executing this validation requires the ability to collect comparable BSR and category data across multiple Amazon marketplaces efficiently. Pangolinfo Scrape API covers 20+ Amazon marketplaces with unified structured JSON output, meaning data from Amazon.com and Amazon.co.jp follows an identical schema and can be compared directly without marketplace-specific parsing logic. This is the infrastructure that makes systematic cross-market validation operationally practical rather than prohibitively manual.

Turning the Five Rules Into an Executable Validation Process

Understanding the five rules matters less than operationalizing them as a consistent decision framework. The following gate-based structure translates each rule into a specific pass/fail criterion with an actionable verification step:

Gate 1 — Demand Durability (Rule 1)

Pull 24-month BSR history for the top 20 products in the target category. Flag any product showing a “spike and collapse” pattern. If more than 30% of top performers show non-sustained BSR trajectories, treat category demand as unvalidated pending identification of the structural demand driver independent of any triggering events.

Gate 2 — Concentration Assessment (Rule 2)

Estimate the top-3 seller revenue share using BSR and review-based proxies, grouping listings that belong to the same seller or brand. If top-3 market concentration exceeds 55%, escalate to detailed competitive defensibility analysis before proceeding. Do not reject solely on this basis, but explicitly evaluate whether differentiation can address the concentration through a different buyer segment or use case.

Gate 3 — Logistics Floor Check (Rule 3)

Build a margin model under three scenarios: base case, worst-case freight with current FBA fees, and worst-case freight with a 10% FBA fee increase. The gate passes only if net margin in the harshest scenario (worst-case freight plus the 10% fee increase, matching Rule 3) exceeds 12%. Any product that requires base-case assumptions to reach minimum viable margin has an embedded structural fragility that should be explicitly documented as an accepted risk before proceeding.

Gate 4 — User Pain Point Clarity (Rule 4)

Require written documentation of: (a) top three user complaints in target category derived from competitive review analysis, not assumptions, and (b) explicit product differentiation response to at least one of those complaints. This documentation must cite specific review data or Customer Says tag frequencies as evidence. Qualitative assertions without data citation do not pass this gate.

Gate 5 — Multi-Marketplace Confirmation (Rule 5)

Verify category performance across a minimum of five Amazon marketplaces. Products with single-marketplace data support should be explicitly flagged as carrying unvalidated demand scope, with implications for initial inventory commitment and market expansion planning documented before launch approval.
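The five gates can be wired together as a small checklist. The sketch below uses the thresholds stated in the gates (30%, 55%, 12%, five marketplaces). The `CandidateMetrics` fields, the outcome labels, and the sample values are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass

@dataclass
class CandidateMetrics:
    spike_fraction: float        # share of top-20 products with spike-and-collapse BSR
    top3_share: float            # estimated top-3 seller revenue share
    worst_case_margin: float     # net margin under worst-case logistics
    complaints_documented: bool  # top-3 complaints and response written up with evidence
    marketplaces_confirmed: int  # marketplaces where category performance was verified

def evaluate_gates(m):
    """Map each gate to an outcome label using the thresholds from the text."""
    return {
        "gate1_demand": "unvalidated" if m.spike_fraction > 0.30 else "pass",
        # Gate 2 escalates to deeper analysis; it does not reject on its own.
        "gate2_concentration": "escalate" if m.top3_share > 0.55 else "pass",
        "gate3_logistics": "pass" if m.worst_case_margin > 0.12 else "fail",
        "gate4_pain_points": "pass" if m.complaints_documented else "fail",
        # Gate 5 flags limited demand scope; it does not block on its own.
        "gate5_marketplaces": "pass" if m.marketplaces_confirmed >= 5 else "flag",
    }

# Illustrative candidate: healthy demand and margin, concentrated category,
# verified in only two marketplaces.
candidate = CandidateMetrics(
    spike_fraction=0.10,
    top3_share=0.65,
    worst_case_margin=0.15,
    complaints_documented=True,
    marketplaces_confirmed=2,
)
results = evaluate_gates(candidate)
```

Keeping "escalate" and "flag" distinct from "fail" mirrors the gate wording: concentration and single-marketplace support call for extra scrutiny and documentation rather than automatic rejection.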

The Real Skill in Amazon Product Research Is Learning to Say No

The dominant metaphor in Amazon product research is prospecting — sifting through vast amounts of data to find the one product that will hit. The metaphor is misleading because it centers the skill on finding, when the actual competitive differentiation in 2026 is in filtering. In a market where discovery advantages are rapidly equalizing, the sellers who consistently make better product decisions are distinguished not by their ability to find opportunities, but by their ability to correctly reject the ones that will fail.

The five rules in this article are, at their core, five rejection criteria. Event-triggered demand: reject. Concentrated categories where your differentiation cannot plausibly address the moat: reject. Products where worst-case logistics destroys margins: reject. Categories you cannot understand from the buyer’s perspective without real review data: reject. Products with only single-marketplace validation: reject, or at minimum flag the demand scope as unvalidated and limit the initial inventory commitment, as Gate 5 requires. Holding this line is not about making product research harder. It’s about concentrating resources on the decisions that are actually worth making.

Data infrastructure is what makes this level of systematic validation practically executable. Multi-marketplace BSR tracking, demand pattern analysis over 24-month horizons, and bulk competitive review analysis are data-intensive tasks that require consistent, structured market data to perform reliably. Pangolinfo Scrape API supports this infrastructure across 20+ Amazon marketplaces with unified structured output. You can explore the platform through its free trial console, which exposes the same data foundation that makes the five rules operational rather than theoretical.

Article Summary

This article argues that effective Amazon product research in 2026 requires a rejection framework rather than a discovery framework. It presents five iron rules: demand must be structural not event-triggered; market concentration (not review count) is the real competitive barrier; logistics cost is a hard constraint with floor requirements; sellers must genuinely understand buyer pain points from real review data; and BSR data only has meaning when validated across multiple marketplaces. Each rule includes a real failure pattern, counter-intuitive implication, and an actionable gate-based validation method. Pangolinfo Scrape API and AMZ Data Tracker are introduced as the data infrastructure enabling systematic multi-marketplace validation.
