Introduction and the Paradigm Shift in E-commerce Big Data

In today’s rapidly developing global digital trade, the competitive landscape of e-commerce retail has undergone a fundamental paradigm shift. Traditional product selection decisions often rely on the intuition of human experts, limited market sampling surveys, and empirical heuristic rules. This model falls short when facing massive and rapidly changing market data. Modern e-commerce platforms, such as Amazon, Shopee, AliExpress, Lazada, and Walmart, not only act as mediums for commodity transactions but have also evolved into massive data generation engines. To gain a sustainable competitive advantage on these platforms, enterprises must completely abandon manual product selection models and shift to building highly automated, data-driven product selection scoring models. This scoring model is a complex mathematical and engineering composite capable of quantitatively evaluating thousands of potential product niches across multiple dimensions, covering core metrics such as sales velocity, profit margins, market saturation, and supply chain risks.

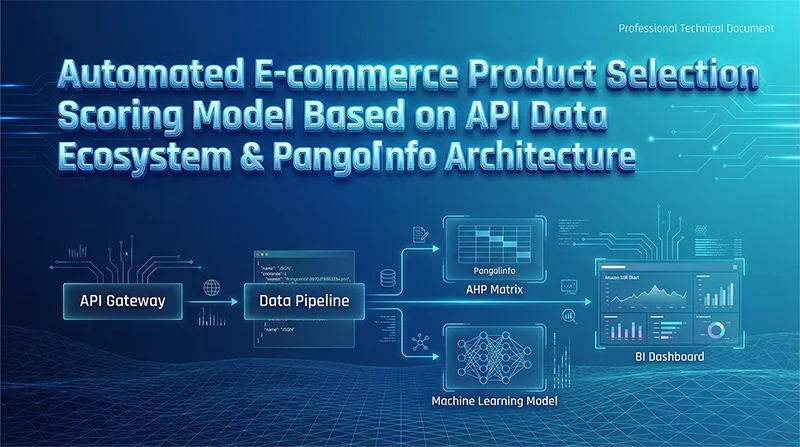

The lifeline of an automated product selection scoring model is continuous, high-fidelity, low-latency data input, which is exactly where the Application Programming Interface (API) ecosystem plays a key role. As e-commerce platforms tighten their anti-scraping mechanisms, traditional web scraping solutions face steep technical barriers and high maintenance costs. Professional API data providers such as Pangolinfo have emerged in response. Their customized Scrape API services handle the underlying anti-bot mechanisms and return structured JSON payloads containing real-time pricing, Best Sellers Rank (BSR), and detailed competitor intelligence. However, acquiring data is not the same as possessing product selection capabilities. A commercially valuable automated product selection system requires an end-to-end architecture that integrates data acquisition, data pipeline engineering, outlier processing, mathematical normalization, Multi-Criteria Decision Analysis (MCDA), predictive machine learning, and a dynamic Bayesian feedback loop. This report deconstructs that technical system and provides an architectural guide for developing enterprise-level automated e-commerce product selection scoring models.

Data Acquisition Layer: API Matrix and Pangolinfo Architecture Analysis

The infrastructure of an automated product selection model begins with the systematic collection of market data. Modern e-commerce platforms use dynamic rendering, location-based content variants, and extremely strict request frequency limits. This makes it extremely inefficient and financially unaffordable for enterprises to build and maintain traditional web crawlers internally. Maintaining crawler infrastructure internally not only requires managing a huge pool of proxy IPs, rotating user agents, and handling CAPTCHAs, but also coping with the platform’s weekly or even daily anti-scraping rule updates. Such high hidden costs and the risk of business interruption caused by system downtime (for example, losing millions of dollars due to scraping system crashes during Black Friday) force technical teams to turn to more stable and professional API solutions.

Synergistic Application of Synchronous and Asynchronous API Architectures

When utilizing tools like Pangolinfo’s Scrape API or Lazada’s open API, system architects must design reasonable execution paradigms for different business scenarios. Synchronous API calls are typically used in low-latency scenarios requiring immediate validation, such as the final confirmation of a specific SKU’s real-time inventory status or current Buy Box price at the last minute of a purchasing decision. The advantage of the synchronous mode is its rapid response, but its disadvantage is that it is highly prone to network congestion or timeout errors when facing large-scale data requests.

For macro-level product discovery and data collection spanning entire categories or even multiple platforms, an asynchronous processing architecture is indispensable. In an asynchronous data collection architecture, the system submits a request payload containing the target URL and optional geographical context (for example, specifying a US zip code {"zipcode":"90001"} to obtain accurate local shipping prices) via the HTTP POST method. After receiving the request, the API server immediately returns a taskId, freeing the client’s connection. Once the data is scraped and parsed in the cloud, Pangolinfo’s system pushes the formatted JSON data to the client through a pre-configured Webhook callback address. This asynchronous decoupling design fundamentally eliminates network timeout issues, enabling the system to simultaneously process data collection tasks for thousands of product pages with extremely high concurrency, greatly improving the data throughput of the product selection model.
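The submit-then-callback flow described above can be sketched in a few lines. This is only an illustration of the pattern, not Pangolinfo’s actual client: the `callbackUrl` and `data` field names and the `TaskRegistry` class are hypothetical, while `zipcode` and `taskId` are the names used in the text. Network calls are left out so that the bookkeeping logic stays visible.

```python
import json


def build_task_payload(url, zipcode=None, callback_url=None):
    """Assemble the JSON body for an asynchronous scrape request."""
    payload = {"url": url}
    if zipcode is not None:
        payload["zipcode"] = zipcode  # geographic context, as in the text
    if callback_url is not None:
        payload["callbackUrl"] = callback_url  # hypothetical field name
    return json.dumps(payload)


class TaskRegistry:
    """Tracks submitted tasks until their webhook callback arrives."""

    def __init__(self):
        self.pending = {}  # taskId -> original URL
        self.results = {}  # taskId -> parsed data

    def register(self, submit_response_body, url):
        """Record the taskId returned immediately by the API server."""
        task_id = json.loads(submit_response_body)["taskId"]
        self.pending[task_id] = url
        return task_id

    def handle_webhook(self, callback_body):
        """Store the pushed result; ignore unknown or repeated callbacks."""
        message = json.loads(callback_body)
        task_id = message["taskId"]
        if task_id not in self.pending:
            return False
        self.results[task_id] = message.get("data")  # hypothetical field name
        del self.pending[task_id]
        return True
```

Because the client only stores a `taskId` between submission and callback, no connection stays open while the page is being scraped, which is what allows thousands of tasks to be in flight at once.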

Engineering Strategies for Handling Rate Limits and Quota Exhaustion

In automated data collection, one of the most common engineering challenges is elegantly handling API rate limits. When the request frequency exceeds the platform’s allowed threshold, the API server returns an HTTP 429 “Too Many Requests” status code. E-commerce APIs usually employ Token Bucket or Leaky Bucket algorithms to smooth burst traffic and protect their core infrastructure. To maintain the continuity of the data pipeline, the data extraction layer must implement robust retry logic with Exponential Backoff.

In this retry strategy, the delay between consecutive retry attempts grows exponentially (e.g., waiting 1 second after the first failure, 2 seconds after the second, 4 seconds after the third). A random “Jitter” factor must be added to each delay so that multiple concurrent processes do not wake up at the same moment and cause the “Thundering Herd Problem”. Furthermore, by distributing requests across multiple environments, rotating IPs, and throttling with the third-party Python library tenacity or the built-in asyncio module, data collection efficiency can be maximized while staying within API quotas.
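A minimal standard-library sketch of this retry logic follows. It assumes the caller wraps each API request in a function that raises a (hypothetical) `RateLimitError` whenever the server answers with HTTP 429; the jitter range of up to 50% extra delay is an illustrative choice, not a value prescribed by any platform.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error raised by the request function on HTTP 429."""

    def __init__(self, retry_after=None):
        super().__init__("rate limited")
        self.retry_after = retry_after  # seconds from a Retry-After header, if any


def call_with_backoff(func, max_attempts=5, base_delay=1.0, max_delay=60.0,
                      sleep=time.sleep, rng=random.random):
    """Call func(), retrying on RateLimitError with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return func()
        except RateLimitError as err:
            if attempt == max_attempts - 1:
                raise  # retries exhausted; surface the error to the pipeline
            # 1 s, 2 s, 4 s, ... capped at max_delay
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Never wait less than the server explicitly asked for
            if err.retry_after is not None:
                delay = max(delay, err.retry_after)
            # Jitter: stretch the delay by a random 0-50 % so workers desynchronize
            delay *= 1.0 + 0.5 * rng()
            sleep(delay)
```

The `sleep` and `rng` parameters exist only so the function can be exercised deterministically; production code would leave them at their defaults.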

| Rate Limit Handling Strategy | Technical Implementation Principle | Business Value for Product Selection Model Data Collection |
| --- | --- | --- |
| Exponential Backoff Retry | Intercepts 429 errors, increments retry time by $2^n$ and adds random jitter. | Prevents the system from being temporarily banned due to frequent requests, ensuring key metrics like historical sales are eventually successfully acquired. |
| Asynchronous Batch Requests | Utilizes asyncio or aiohttp, combined with callback mechanisms to submit tasks in batches. | Breaks through single-thread I/O bottlenecks, achieving minute-level high-concurrency scanning of the entire Amazon Best Sellers list. |
| Header and Environment Rotation | Dynamically changes User-Agent and distributes scraping sessions across multiple servers. | Evades platform fingerprint tracking on single devices, ensuring the continuity of cross-platform (Shopee, Lazada, etc.) data. |
| Header Quota Monitoring | Parses Retry-After or remaining quota fields in API response headers. | Dynamically adjusts request frequency to avoid exhausting API quotas during key promotional periods, which would halt the product selection model. |

Table 1: Engineering strategies and commercial value comparison for handling e-commerce API rate limits.

Reconstruction of Data Pipeline and Underlying Storage Architecture

The raw responses received from Pangolinfo or the major platforms’ native APIs are usually highly nested JSON. Before being fed into the mathematical scoring algorithm, this raw data must undergo systematic transformation, cleaning, and structured storage. This progression from semi-structured data to an analytical data pool relies heavily on an enterprise-level Extract, Load, Transform (ELT) pipeline architecture.

Pipeline Orchestration and Data Lakehouse Construction

Modern product selection model pipeline orchestration generally uses Directed Acyclic Graph (DAG) scheduling tools like Apache Airflow. Airflow is responsible for defining task dependencies, triggering API calls on schedule, monitoring data flow status, and executing automatic recovery logic when local nodes fail. Under the ELT paradigm, extracted data is first loaded directly into a cloud-based modern data warehouse (such as Snowflake, Redshift, or BigQuery) as a raw data lakehouse staging area.

Once the raw data is in the warehouse (Snowflake in this example), technical teams typically use dbt (data build tool) to express the transformation layer in SQL. dbt models can flatten JSON arrays (such as variant lists, review history, and price drop records) and rebuild them into clean, analysis-oriented dimension and fact tables. Because such warehouses separate compute from storage, query capacity can be scaled independently of data volume, which helps keep the product selection model responsive even across tens of millions of historical product records.

The Return of the Relational Paradigm: The Architectural Game Between PostgreSQL and MongoDB

During the database selection phase, architects often face a choice between document-based NoSQL databases (like MongoDB) and relational databases (like PostgreSQL). With its flexible, JSON-like document structure, MongoDB is well-suited as the first landing spot for raw API responses, especially when e-commerce platform attribute structures change frequently or when fast iterations of front-end display prototypes are needed. MongoDB’s schemaless nature provides an irreplaceable agility advantage.

However, for the core computation layer of the automated product selection scoring model, PostgreSQL is the more rigorous choice. The scoring model is essentially a dense mathematical computation engine that requires strict data type constraints, complex cross-table JOIN and aggregation capabilities, and ACID consistency guarantees. To support advanced analysis, the PostgreSQL schema should be normalized to Third Normal Form (3NF). By separating user information, product catalogs, historical orders, and category attributes into distinct tables and eliminating transitive dependencies, the design ensures that window functions, moving averages of historical sales, and aggregations of competitive intensity across multinational markets return accurate results while avoiding the storage bloat caused by redundant data.

| Architectural Dimension | PostgreSQL (Relational Database) | MongoDB (NoSQL Document Database) | Specific Application Role in Product Selection Model |
| --- | --- | --- | --- |
| Data Model | Strict row-column tables, predefined schema | Flexible JSON-like documents (BSON) | MongoDB receives raw scrapes; PostgreSQL supports complex scoring. |
| Join Query Capability | Native support for complex JOINs and aggregation functions | Implemented via aggregation pipelines ($lookup); performance limited for complex cross-collection joins | Scoring requires frequent joins of prices, reviews, and competitor history; PostgreSQL excels. |
| Transactions & Consistency | Strong ACID transaction guarantees, MVCC mechanism | Atomic single-document writes with tunable read/write consistency; multi-document ACID transactions are supported (since version 4.0) but carry higher overhead | PostgreSQL keeps every weight update and model score transactionally consistent. |
| Applicable Business Scenarios | Heavy analytical queries, complex financial and selection calculations | Rapidly changing product attributes, massive unstructured text storage | The computational foundation of the scoring engine (PostgreSQL); logs and raw archives (MongoDB). |

Table 2: Comparison of e-commerce data storage architectures and their division of labor in the product selection model.

Data Cleaning, Outlier Processing, and Metric Normalization

E-commerce data is inherently noisy due to its high market openness and the complexity of its participants. Algorithmic pricing bots can cause extreme price peaks and valleys for a product within a single day; sudden out-of-stock events can artificially inflate or depress Amazon’s Best Sellers Rank (BSR); and the currency units and measurements of different sites vary greatly. Therefore, before any mathematical scoring begins, data must undergo extremely rigorous cleaning and preprocessing procedures.

Normalization, Deduplication, and Entity Resolution

When handling extensive keyword search tasks, APIs often return a large amount of overlapping data. If left unprocessed, this leads to severe double-counting when the model calculates the market capacity and competitive saturation of a certain category. Deduplication algorithms must use composite primary keys (e.g., Platform ID combined with ASIN or SKU) for exact matching; meanwhile, fuzzy matching and entity resolution logic must be introduced to identify records that appear different due to capitalization variants or spelling differences but are actually the same product.
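The two-stage deduplication described above (exact composite key first, fuzzy title match second) might look like the following sketch. The record field names (`platform`, `product_id`, `title`) and the 0.92 similarity threshold are assumptions made for illustration; `difflib.SequenceMatcher` from the standard library stands in for whatever entity resolution method a production system would use.

```python
from difflib import SequenceMatcher


def normalize_title(title):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(title.lower().split())


def deduplicate(records, fuzzy_threshold=0.92):
    """Drop exact duplicates by (platform, product_id), then near-duplicate titles."""
    seen_keys = set()
    kept = []
    for rec in records:
        key = (rec["platform"], rec["product_id"])
        if key in seen_keys:
            continue  # exact duplicate returned by overlapping keyword searches
        seen_keys.add(key)
        title = normalize_title(rec["title"])
        # Fuzzy stage: same platform, almost identical title -> treat as same product
        is_near_duplicate = any(
            rec["platform"] == other["platform"]
            and SequenceMatcher(None, title, normalize_title(other["title"])).ratio()
            >= fuzzy_threshold
            for other in kept
        )
        if not is_near_duplicate:
            kept.append(rec)
    return kept
```

The pairwise comparison is quadratic in the number of kept records, so a real pipeline would usually block candidates first (for example by brand or category) before running the fuzzy stage.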

Text normalization is equally indispensable. The system must unify the capitalization of brand names, clean up extra spaces, fix inconsistent Unicode character encoding issues, and convert extracted multi-currency prices (such as Thai Baht, Indonesian Rupiah, US Dollars) into a base currency (like USD or RMB) by calling real-time exchange rate APIs. This establishes a solid data baseline for cross-site product selection comparisons.
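As a small illustration of these normalization steps, the sketch below cleans a brand string and converts prices to USD. The exchange rates are hard-coded placeholder values, not real quotes; in the pipeline described here they would come from a real-time exchange rate API.

```python
import unicodedata

# Placeholder rates for illustration only (units of local currency per 1 USD).
RATES_PER_USD = {"USD": 1.0, "THB": 35.0, "IDR": 16000.0}


def normalize_brand(raw):
    """Fold Unicode variants, collapse whitespace, and unify capitalization."""
    text = unicodedata.normalize("NFKC", raw)  # e.g. full-width letters -> ASCII
    return " ".join(text.split()).title()


def to_usd(amount, currency, rates=RATES_PER_USD):
    """Convert a local-currency price to the USD base currency."""
    return round(amount / rates[currency], 2)
```

Title-casing is a deliberately blunt rule: it would turn an all-caps brand into mixed case, so a production system would more likely map variants to a curated brand dictionary.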

Outlier Mitigation: The Statistical Significance of Winsorization

When processing e-commerce sales and price data, extreme outliers can catastrophically distort arithmetic means and the feature space of machine learning algorithms. The traditional method for handling outliers is to delete these extreme records directly, but this is extremely dangerous in e-commerce product selection. An absurdly high-priced or pathetically low-selling competitor might represent a neglected long-tail market demand or a critical supply chain disruption signal. Directly deleting these data would cause the product selection model to lose important competitive context.

To eliminate statistical noise while preserving the overall structure of the dataset, the product selection model applies Winsorization. Winsorization does not delete outliers; instead, it replaces them with the values found at chosen percentiles near the edges of the data distribution. For example, a 5% Winsorization in Python using scipy.stats.mstats.winsorize with limits=[0.05, 0.05] replaces the values below the 5th percentile with the value at the 5th percentile, and caps the values above the 95th percentile at the value of the 95th percentile. This mechanism suppresses false price peaks caused by systemic pricing errors while preserving the other useful dimensions of the product record (such as review count and listing time), making the scoring model more robust.
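To make the mechanism concrete, here is a dependency-free sketch of percentile-based Winsorization. It is not the scipy implementation: it clips to linearly interpolated percentiles, which can differ slightly from scipy’s count-based rule on small samples, and the function names are illustrative.

```python
def percentile(sorted_values, q):
    """Linearly interpolated percentile (q in [0, 100]) of a pre-sorted list."""
    if not sorted_values:
        raise ValueError("percentile of empty data")
    position = (len(sorted_values) - 1) * q / 100.0
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    fraction = position - lower
    return sorted_values[lower] + (sorted_values[upper] - sorted_values[lower]) * fraction


def winsorize(values, lower_pct=5.0, upper_pct=95.0):
    """Clip values to the [lower_pct, upper_pct] percentile band; no record is dropped."""
    ordered = sorted(values)
    low = percentile(ordered, lower_pct)
    high = percentile(ordered, upper_pct)
    return [min(max(v, low), high) for v in values]
```

For a price series of 1 through 20 plus a single erroneous 5000, the erroneous entry is pulled down to the 95th-percentile value while the list keeps all 21 records, so the rest of each product row remains usable.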

Mathematical Normalization Strategies for E-commerce Metrics

The metrics that a product selection scoring model needs to evaluate often have completely different physical dimensions and numerical ranges. For example, a product’s price might range between 10 and 500, its review rating is limited to 1.0 to 5.0, while Amazon’s BSR could span from 1 to 1,000,000. If these absolute values are directly added or weighted, the metrics with huge values will completely swallow the influence of smaller but equally important metrics. Therefore, mathematical methods must be used to map them into a unified vector space.

Min-Max Normalization

For metrics with clear upper and lower bounds (such as the average star rating of a product or the expected profit margin percentage), the model adopts Min-Max Normalization. This method linearly scales the values into the $[0, 1]$ interval. Its formula is defined as:

$$x'_{i} = \frac{x_i - \min(x)}{\max(x) - \min(x)}$$

The advantage of this method is that the results are intuitive and easy to interpret, but its fatal weakness is its extreme sensitivity to outliers. Without prior Winsorization, a single extreme maximum value would cause the remaining 99% of normal data to be compressed into an extremely narrow interval approaching zero, rendering that feature ineffective in the model.
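The compression effect is easy to reproduce. The following sketch implements Min-Max scaling and can be run on a small, made-up list of values containing one extreme maximum; returning zeros for a constant column is an assumption of this sketch, not something the formula itself defines.

```python
def min_max(values):
    """Scale values linearly into [0, 1]; a constant column maps to all zeros."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]  # avoid division by zero
    return [(v - low) / (high - low) for v in values]


# Made-up example: four ordinary values and one extreme outlier.
scaled = min_max([10, 20, 30, 40, 1000])
# The four ordinary values all land below 0.05; only the outlier reaches 1.0.
```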

Z-Score Normalization

For continuous variables with no obvious physical upper limit and a wide distribution (such as total sales volume or historical cumulative review count), Z-Score Standardization is a better fit. Subtracting the sample mean ($\mu$) and dividing by the standard deviation ($\sigma$) rescales the data to a mean of 0 and a standard deviation of 1. The shape of the distribution is unchanged, so a skewed metric remains skewed after standardization:

$$z = \frac{x - \mu}{\sigma}$$

In the business context of e-commerce product selection, Z-Score provides a built-in standard for measuring market relativity. A product with a sales volume Z-Score of +2.0 directly tells the model that the product’s monthly sales are two standard deviations higher than the average level of the same category. This not only weakens the data drag caused by long-tail products but also precisely highlights those “hit” candidates whose performance far exceeds the industry average in the model, making the score statistically convincing when measuring horizontal competitiveness.
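A short sketch of the calculation, using only the standard library, is shown below. It uses the population standard deviation (`statistics.pstdev`); whether to use the population or sample form is a modeling choice the text does not specify.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Standardize values to mean 0 and (population) standard deviation 1."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # no spread, so no product stands out
    return [(v - mu) / sigma for v in values]
```

In practice the function would be applied per category, so that a Z-Score of +2.0 means “two standard deviations above the category average” rather than above the whole catalog.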

Logarithmic Non-linear Transformation of Amazon BSR

Amazon’s Best Sellers Rank (BSR) is one of the most complex and core metrics in a product selection model. BSR exhibits two extreme mathematical characteristics: first, it is an inverse metric (the smaller the value, the higher the sales); second, the relationship between BSR and actual sales follows an extreme power-law or Pareto distribution. On the BSR list, the daily sales gap between the 1st and 10th places may be hundreds or thousands of times larger than the sales gap between the 10,001st and 20,000th places.

Directly feeding raw BSR values into a linear scoring model will lead to serious misjudgments because the model cannot represent the non-linear sales differences between rankings. To solve this problem, the system takes the reciprocal of the BSR and then applies a Log Transformation, which is equivalent to negating $\log(\text{BSR})$. Because the sales-ranking relationship approximately follows a power law, the log transformation flattens the extreme distribution and converts it into an approximately linear relationship. As a result, the normalized BSR score reflects the product’s market share far more proportionally than the raw rank does.
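One way to turn this transformation into a bounded score is sketched below. Since $\log(1/\text{BSR}) = -\log(\text{BSR})$, the function rescales the negated logarithm so that rank 1 maps to 1.0 and an assumed worst rank maps to 0.0; the `max_rank` ceiling of 1,000,000 is taken from the range quoted earlier and is a tunable assumption.

```python
import math


def bsr_score(bsr, max_rank=1_000_000):
    """Map a Best Sellers Rank to [0, 1] on a log scale (1.0 = rank 1)."""
    if bsr < 1:
        raise ValueError("BSR must be >= 1")
    clipped = min(bsr, max_rank)  # ranks beyond the ceiling all score 0.0
    # -log(bsr) rescaled by log(max_rank): rank 1 -> 1.0, max_rank -> 0.0
    return 1.0 - math.log(clipped) / math.log(max_rank)
```

On this scale, moving from rank 10 to rank 1 changes the score by the same amount as moving from rank 10,000 to rank 1,000, which mirrors the multiplicative nature of the rank-to-sales relationship.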

Foundation of Multi-Criteria Decision Making: Weight Allocation System Based on Analytic Hierarchy Process (AHP)

After multiple rounds of cleaning and mathematical normalization, the product selection model faces a series of clean data dimensions within the same scale. However, different data dimensions play unequal roles in corporate strategy. For a large e-commerce enterprise with abundant capital seeking to rapidly seize market share, the potential total sales volume might be given the highest weight; while for a cash-strapped startup cross-border enterprise, Return on Investment (ROI), supply chain stability, and low competitive risk are the top priorities.

How to assign weights to dozens of metrics in a rigorous, repeatable way is the core decision-making puzzle faced by automated scoring models. Weights decided ad hoc by a team are difficult to quantify, and also difficult to trace and adjust as the team expands and the market changes. To this end, the Analytic Hierarchy Process (AHP) is introduced into the architecture. AHP is a Multi-Criteria Decision Analysis (MCDA) framework developed in the 1970s by Thomas L. Saaty, who later became a professor at the University of Pittsburgh. It does not remove subjectivity from expert judgment; rather, it converts structured subjective comparisons into a mathematically derived, auditable weight vector.

Constructing the Hierarchical Structure and Pairwise Comparison Matrix

The first step in implementing AHP is to deconstruct the macro goal of product selection into a clear tree-like hierarchical structure. The top layer is “Finding the Optimal Cross-Border E-commerce Product”; the middle layer consists of core dimensions (e.g., market demand potential, profit margin, competitive risk, fulfillment complexity); and the bottom layer comprises various API-normalized metrics (e.g., search term volume, gross margin percentage, median review count of top 10 competitors, product weight-to-volume ratio).

Once the structure is established, internal product selection experts, financial directors, and supply chain managers are no longer asked to directly score each metric. Instead, they conduct a series of “Pairwise Comparisons”. Using the Saaty scale (1 means equally important, 9 means absolutely overwhelmingly more important), experts only need to answer specific questions like, “Under the current strategy, how much more important is profit margin than market demand potential?”

The results of these pairwise comparisons are filled into a judgment matrix $A$. The matrix element $a_{ij}$ represents the relative importance of the $i$-th metric compared to the $j$-th metric. A core mathematical property of the AHP matrix is its reciprocity, i.e., $a_{ji} = 1 / a_{ij}$, and the elements on the main diagonal must all be 1.

| Evaluation Criteria | Profitability | Demand Velocity | Competitive Risk | Eigenvector Weight |
| --- | --- | --- | --- | --- |
| Profitability | 1 | 3 | 5 | 0.648 |
| Demand Velocity | 1/3 | 1 | 2 | 0.230 |
| Competitive Risk | 1/5 | 1/2 | 1 | 0.122 |

Table 3: Simplified AHP pairwise comparison matrix and weight allocation example, reflecting the mathematical structure of the reciprocal matrix.

Extracting Priorities: Calculation of Eigenvectors and Eigenvalues

Once the matrix is built, mathematical operations take over the remaining work. To calculate a global weight vector that can represent the relative importance of each metric, one must solve for the Principal Eigenvalue ($\lambda_{max}$) and its corresponding Eigenvector of the pairwise comparison matrix.

In matrix algebra, this relationship is expressed as:

$$Aw = \lambda_{max} w$$

Where $A$ is the judgment matrix and $w$ is the weight vector we are looking for. In a Python engineering implementation, the numpy.linalg.eig function returns all eigenvalues and eigenvectors of $A$; the eigenvector paired with the largest real eigenvalue is the one required. That eigenvector is then normalized (so that its elements sum to 1), and its entries become the weight of each dimension in the scoring model.
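For readers who want to see the computation without a linear algebra library, the sketch below finds the principal eigenvector by power iteration in plain Python. This is a swapped-in technique for illustration, not the numpy.linalg.eig call named above; for a positive reciprocal matrix both approaches converge to the same normalized weights. The example matrix reuses the pairwise judgments from Table 3.

```python
def ahp_weights(matrix, iterations=100, tol=1e-12):
    """Return (weights, lambda_max) of a pairwise comparison matrix via power iteration."""
    n = len(matrix)
    weights = [1.0 / n] * n  # start from equal weights
    lambda_max = float(n)
    for _ in range(iterations):
        # Multiply A by the current weight vector
        product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        # Because the weights sum to 1, sum(A w) estimates lambda_max
        lambda_max = total
        updated = [value / total for value in product]
        converged = max(abs(a - b) for a, b in zip(updated, weights)) < tol
        weights = updated
        if converged:
            break
    return weights, lambda_max


# Pairwise judgments from Table 3 (Profitability, Demand Velocity, Competitive Risk)
judgments = [
    [1.0, 3.0, 5.0],
    [1.0 / 3.0, 1.0, 2.0],
    [1.0 / 5.0, 1.0 / 2.0, 1.0],
]
weights, lambda_max = ahp_weights(judgments)
```

The returned `lambda_max` is the quantity needed for the consistency check that follows the weight calculation.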

Logical Consistency Check: Consistency Ratio (CR)

Human judgment inherently possesses cognitive biases and logical contradictions. For example, an expert might think metric A is more important than B, and B is more important than C, but when comparing A and C, conclude absurdly that C is more important than A. The superiority of the AHP model lies not only in calculating weights but also in its ability to use the Consistency Ratio (CR) to conduct a mathematical audit of the logical consistency of expert judgments.

First, calculate the Consistency Index (CI) using the maximum eigenvalue:

$$CI = \frac{\lambda_{max} - n}{n - 1}$$

Where $n$ is the order of the comparison matrix (i.e., the number of evaluation metrics). Subsequently, divide the CI by the average Random Index (RI) of the same order to obtain the Consistency Ratio, $CR = CI / RI$. According to Saaty’s widely used rule of thumb, if CR is less than or equal to 0.10, the judgments in the matrix are considered acceptably consistent; once CR exceeds this threshold, it indicates serious logical conflicts in the expert scoring, and the system will reject these weights and request a re-evaluation of the pairwise comparisons. This built-in check guards the strategic assumptions underlying the product selection model against self-contradictory inputs.
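The check itself is only a few lines. The sketch below assumes that $\lambda_{max}$ has already been computed, and uses the Random Index values commonly tabulated from Saaty’s work for matrices of order 1 to 10; other published RI tables differ slightly in the later decimals.

```python
# Random Index values commonly attributed to Saaty, keyed by matrix order n.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def consistency_ratio(lambda_max, n):
    """CR = CI / RI, with CI = (lambda_max - n) / (n - 1)."""
    if n <= 2:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


def accept_judgments(lambda_max, n, threshold=0.10):
    """Apply the CR <= 0.10 rule of thumb to decide whether to keep the weights."""
    return consistency_ratio(lambda_max, n) <= threshold
```

For a perfectly consistent matrix $\lambda_{max} = n$, so CI and CR are both zero; the further $\lambda_{max}$ drifts above $n$, the more the pairwise judgments contradict one another.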

Deep Integration of Machine Learning and Predictive Modeling

AHP provides an excellent static cross-sectional analysis framework capable of precisely measuring a product’s current market state and strategic fit. However, e-commerce is a typical time-series game, and the success of product selection often depends not on static metrics at this moment, but on the trends over the coming months. To endow the product selection scoring model with the ability to “foresee the future,” the architecture deeply integrates a predictive analysis module based on machine learning, particularly cutting-edge algorithms like Extreme Gradient Boosting (XGBoost), on top of the AHP foundation.

Feature Engineering and Algorithm Selection

Deep data collected via Pangolinfo’s various APIs, such as the volatility of historical price trajectories, the seasonal curves of search term trends, and the exponential growth slope of review counts over time, constitute a rich Feature Space for the predictive model. Furthermore, to introduce unstructured text data into the scoring system, the system applies Natural Language Processing (NLP) technologies. By calculating the Term Frequency-Inverse Document Frequency (TF-IDF) in product descriptions, it extracts core selling point differences of competing listings; utilizing pre-trained large language models like BERT (Bidirectional Encoder Representations from Transformers), it analyzes thousands of historical customer reviews to extract Sentiment Analysis scores, precisely quantifying customer pain points and satisfaction with products in that category.

Among numerous algorithms, XGBoost stands out for its strong performance on e-commerce Tabular Data. It handles missing values natively (which are extremely common when API scraping fails intermittently), captures complex non-linear relationships and interactions between features (such as the threshold effect between “price discount magnitude” and “sales surge”), and its tree-based splits are comparatively tolerant of correlated features. The model is trained on large cohort datasets of historical product lifecycles on the platform. Its output is no longer an isolated score, but a Probability Score of whether the candidate product will break through a specific sales threshold within a specific future time window (e.g., within 6 months).

This winning probability calculated by machine learning is ultimately fed into the previous AHP scoring architecture as an independent main metric with an extremely high weight. Through this hybrid modeling approach, the system perfectly combines the expert system (AHP) based on business common sense and strategic constraints with the underlying law discoverer (XGBoost) driven purely by data, creating a composite product selection scoring engine that boasts both strategic steadfastness and predictive insight.
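In its simplest form, this hybrid amounts to a weighted sum in which the model’s probability is just one more normalized metric. The sketch below assumes every metric has already been scaled to $[0, 1]$; the metric names and the weights in the usage note are hypothetical and do not come from any calibrated AHP matrix.

```python
def composite_score(normalized_metrics, weights):
    """Weighted sum of normalized metrics; weights must sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(normalized_metrics)
    if missing:
        raise KeyError(f"missing metrics: {sorted(missing)}")
    return sum(weights[name] * normalized_metrics[name] for name in weights)
```

For example, with hypothetical weights of 0.4 for `hit_probability`, 0.3 for `profitability`, 0.2 for `demand`, and 0.1 for `risk`, a product with a predicted hit probability of 0.9 and mid-range values elsewhere would score noticeably higher than one with identical static metrics but a probability of 0.2.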

Closed-Loop Evolution: Dynamic Model Optimization and Reinforcement Learning Based on Real Sales Feedback

An excellent product selection scoring model may perform outstandingly at launch, but if it remains static, its prediction accuracy will inevitably decay as the broader market fluctuates, competitors adjust their strategies, and consumer tastes shift. The most mature form of an enterprise-level automated product selection architecture therefore builds a Feedback Loop that feeds real results back into the model, allowing it to correct itself and evolve continuously.

Quantifying Model Deviation via MAPE

To achieve self-learning, the system must continuously compare the initial prediction produced by the product selection model with the product’s real market performance (such as actual first-month sales, real gross margin, or organic traffic conversion rate) after it is officially listed for sale. Because a score and a sales figure are not directly comparable, the score is first mapped to a forecast in the same unit as the observed outcome. In regression analysis and forecast evaluation, the Mean Absolute Percentage Error (MAPE) is one of the most widely used accuracy measures for this comparison.

The mathematical formula for MAPE is defined as:

$$\text{MAPE} = \frac{100}{n} \sum_{t=1}^{n} \left| \frac{A_t - F_t}{A_t} \right|$$

Where $A_t$ represents the actual sales figure or commercial return of the product, and $F_t$ represents the forecast value derived from the score that the product selection model initially assigned. Once the overall MAPE exceeds a tolerable business threshold (e.g., an error rate over 25%), the system automatically triggers recalibration of the underlying model parameters. Note that when the actual value is extremely small (e.g., a failed product selection leading to near-zero sales), MAPE inflates dramatically, and it is undefined when $A_t = 0$. Therefore, in practice, weighted MAPE (wMAPE) or Symmetric MAPE (sMAPE) are often used to keep the error evaluation statistically robust.
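The three error measures can be compared side by side with the sketch below. Definitions of wMAPE and sMAPE vary between sources; this version uses total absolute error divided by total absolute actuals for wMAPE, and the 0 to 200% form of sMAPE, both of which are assumptions rather than definitions given in the text.

```python
def mape(actual, forecast):
    """Mean Absolute Percentage Error; undefined if any actual value is 0."""
    return 100.0 / len(actual) * sum(abs((a - f) / a) for a, f in zip(actual, forecast))


def wmape(actual, forecast):
    """Total absolute error as a percentage of total absolute actuals."""
    total_error = sum(abs(a - f) for a, f in zip(actual, forecast))
    return 100.0 * total_error / sum(abs(a) for a in actual)


def smape(actual, forecast):
    """Symmetric MAPE on the 0-200 % scale; a 0/0 term counts as zero error."""
    terms = []
    for a, f in zip(actual, forecast):
        denominator = abs(a) + abs(f)
        terms.append(0.0 if denominator == 0 else 2.0 * abs(a - f) / denominator)
    return 100.0 / len(actual) * sum(terms)
```

With actual sales of 1 and 1000 units against forecasts of 50 and 1000, MAPE reports an error in the thousands of percent because of the single near-zero product, while wMAPE stays below 5%, which illustrates why the weighted form is preferred when failed selections are present in the sample.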

Bayesian Inference and Dynamic Adaptive Weights

When the model discovers significant deviations between predictions and reality, relying on human meetings to refill the AHP matrix to adjust weights is not only inefficient but also prone to reintroducing cognitive biases. Modern product selection architectures use Bayesian Updating logic to take over this process, achieving mathematical adaptive adjustment of weights.

Under the Bayesian framework, the weights initially derived from the AHP expert matrix define the Prior Distribution, i.e., our best guess based on experience before seeing any real market outcomes. As selected products are pushed to the market, real sales data (Evidence) continuously flows back into the PostgreSQL database via APIs. The system uses the Likelihood function to calculate the probability of the observed sales results under each candidate combination of metric weights. Through Markov Chain Monte Carlo (MCMC) simulations or Stochastic Gradient Langevin Dynamics (SGLD), the system continuously updates the Posterior Distribution of the weights.

For example, if the initial AHP matrix deemed “review growth speed” unimportant (extremely low weight), but Bayesian inference over a large volume of sales feedback shows that recently listed products with rapidly growing review counts succeed far more often than expected, the update mechanism will automatically and smoothly increase the weight of the “review growth speed” feature in the scoring formula without human intervention. This mechanism lets the product selection model rely on human expertise (AHP) during the cold start phase, and then shift progressively toward weights validated by real market outcomes (Bayesian inference) as data accumulates.
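The following toy example shows the prior-to-posterior movement with a random-walk Metropolis sampler, one of the simplest MCMC methods. It is heavily simplified relative to the system described above: a single weight $w$ balances two normalized metrics, the outcome is assumed to equal $w x_1 + (1 - w) x_2$ plus Gaussian noise, and the prior is a Gaussian centered on the AHP weight. All parameter values are illustrative.

```python
import math
import random


def log_posterior(w, observations, prior_mean, prior_sd, noise_sd):
    """Unnormalized log posterior of weight w given (x1, x2, outcome) triples."""
    if not 0.0 <= w <= 1.0:
        return -math.inf  # weights outside [0, 1] are impossible
    log_prior = -0.5 * ((w - prior_mean) / prior_sd) ** 2
    log_likelihood = 0.0
    for x1, x2, outcome in observations:
        predicted = w * x1 + (1.0 - w) * x2
        log_likelihood += -0.5 * ((outcome - predicted) / noise_sd) ** 2
    return log_prior + log_likelihood


def posterior_mean_weight(observations, prior_mean, prior_sd=0.2, noise_sd=0.1,
                          steps=5000, step_size=0.05, seed=0):
    """Estimate the posterior mean of w with random-walk Metropolis sampling."""
    rng = random.Random(seed)
    w = prior_mean  # start the chain at the AHP weight (assumed to lie in [0, 1])
    current = log_posterior(w, observations, prior_mean, prior_sd, noise_sd)
    samples = []
    burn_in = steps // 5  # discard the first 20 % of the chain
    for step in range(steps):
        proposal = w + rng.gauss(0.0, step_size)
        candidate = log_posterior(proposal, observations, prior_mean, prior_sd, noise_sd)
        # Metropolis rule: always accept improvements, sometimes accept worse moves
        if rng.random() < math.exp(min(0.0, candidate - current)):
            w, current = proposal, candidate
        if step >= burn_in:
            samples.append(w)
    return sum(samples) / len(samples)
```

If the AHP prior puts the weight at 0.2 but the sales feedback was generated by a process in which the true weight is 0.7, the posterior mean moves most of the way toward 0.7, and it moves further as more observations accumulate.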

Reinforcement Learning and the Exploration-Exploitation Dilemma

In advanced explorations of e-commerce product selection predictions, the system further introduces the idea of Reinforcement Learning (RL), specifically Contextual Bandits algorithms. Product selection inherently faces the classic “Exploration-Exploitation” dilemma. If the scoring model executes a 100% “Exploitation” strategy, it will forever only recommend safe hit products that are extremely similar to past successful cases. This would lead to the homogenization of the enterprise’s product line and missing out on emerging, explosive but data-poor blue ocean markets.

By modeling the product selection process as a multi-armed bandit problem, the reinforcement learning Agent receives corresponding “Reward” signals based on the profit margin ultimately generated by the selected product. Deep Bayesian bandit algorithms mean the model’s output is no longer a deterministic score but a probability distribution of predicted success. The algorithm will consciously and proportionally pick some marginal blue ocean products with large variance, high uncertainty, but staggering potential returns for “Exploration”. The feedback data generated by these exploratory product selections hitting the market will help the system map out a more complete e-commerce market demand boundary, maximizing the long-term cumulative revenue of the automated product selection model.
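The exploration behavior can be illustrated with Thompson sampling on a Beta-Bernoulli bandit, sketched below. This is a deliberately reduced version of what the text describes: it is non-contextual, it treats each product segment as one arm, and it uses a binary success signal instead of a profit margin reward. The class and arm names are hypothetical.

```python
import random


class BetaBernoulliBandit:
    """Thompson sampling over arms with Beta(1, 1) priors on success probability."""

    def __init__(self, arms, seed=None):
        self.rng = random.Random(seed)
        self.alpha = {arm: 1.0 for arm in arms}  # 1 + observed successes
        self.beta = {arm: 1.0 for arm in arms}   # 1 + observed failures

    def select(self):
        """Draw one plausible success rate per arm and pick the highest draw."""
        draws = {arm: self.rng.betavariate(self.alpha[arm], self.beta[arm])
                 for arm in self.alpha}
        return max(draws, key=draws.get)

    def update(self, arm, success):
        """Fold the observed market outcome back into the arm's posterior."""
        if success:
            self.alpha[arm] += 1.0
        else:
            self.beta[arm] += 1.0
```

Arms with little data have wide posteriors, so they occasionally produce the highest draw and get explored; as evidence accumulates the draws concentrate, and the bandit increasingly exploits whichever arm has actually performed best.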

Business Intelligence and Decision Visualization

No matter how rigorous the underlying architecture design is or how ingenious the Bayesian update algorithms are, if the output results cannot be intuitively understood and trusted by purchasing managers, operations directors, and strategic decision-makers, the model will fail in its business implementation. The ultimate delivery layer of an automated product selection architecture must seamlessly integrate complex analysis results stored in PostgreSQL into advanced Business Intelligence (BI) platforms, such as Tableau or Microsoft Power BI.

Because the system data has already been normalized to third normal form (3NF) and scored inside PostgreSQL, BI tools can connect directly to the database. Alternatively, Python scripts (using the psycopg2 driver and the pandas library) can be embedded in dashboards to pull the latest cleaned, merged, and normalized scoring data.
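A minimal sketch of such a script follows. The table name `product_scores` and its columns are hypothetical, since the schema is not specified here, and the connection string would come from the deployment's own configuration. The loader accepts any DB-API connection, so it can be exercised without a live PostgreSQL instance.

```python
import pandas as pd

# Hypothetical table and column names; adjust to the actual schema.
TOP_SCORES_SQL = """
    SELECT sku, category, final_score, ahp_score, xgb_hit_probability
    FROM product_scores
    ORDER BY final_score DESC
    LIMIT {limit}
"""


def load_top_scores(conn, limit=50):
    """Return the highest-scoring SKUs as a DataFrame from a DB-API connection."""
    cur = conn.cursor()
    try:
        # int() guards the only interpolated value in the query text.
        cur.execute(TOP_SCORES_SQL.format(limit=int(limit)))
        columns = [col[0] for col in cur.description]
        rows = cur.fetchall()
    finally:
        cur.close()
    return pd.DataFrame(rows, columns=columns)


def connect_postgres(dsn):
    """Open a PostgreSQL connection with psycopg2 (imported lazily)."""
    import psycopg2
    return psycopg2.connect(dsn)
```

Keeping the AHP and XGBoost components as separate columns is one way to support the score breakdown that a dashboard may need to display alongside the final score.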

On an enterprise-level visualization platform like Tableau, architects can develop a series of highly interactive product selection decision dashboards. At the macro level, treemaps or bubble charts display the overall market potential and competitive saturation of major categories; at the micro level, lists of high-scoring recommended SKUs are displayed. With interactive Action Filters configured, a purchaser simply clicks on a high-scoring product category, and the dashboard drills down to the detailed profile of that product. The profile includes the BSR historical decay curve pulled in real time from Pangolinfo, the price fluctuation trends of core competitors after Winsorization, and a clear breakdown of the score (e.g., how much of the score comes from the AHP profit metric and how much comes from the XGBoost hit probability prediction). This decision visualization turns abstract mathematical models into intuitive, interpretable strategic views, closing the loop from API data scraping to final enterprise purchasing and inventory stocking.

Conclusion

In an era where e-commerce has entered a zero-sum game of high homogenization and price wars, building an automated product selection scoring model on professional API data sources like Pangolinfo has become a key way for cross-border retail enterprises to build a defensible moat. This report has deconstructed the underlying logic and overall architecture of such a system: bypassing platform anti-scraping limits and acquiring large JSON payloads through asynchronous calls and exponential backoff strategies; establishing the PostgreSQL relational database as the computational core of the ELT pipeline; applying Winsorization to mitigate extreme outliers and enforcing strict feature normalization; and using the Analytic Hierarchy Process (AHP) to derive, through matrix operations, metric weights that conform to corporate strategy.

The strength of this system lies not only in its static analysis of existing data but also in its probabilistic prediction of future sales through the XGBoost machine learning algorithm. Closed-loop MAPE monitoring, dynamic Bayesian updates, and reinforcement learning bandit mechanisms give it the capacity to correct itself and to explore unknown blue oceans. Finally, through visualization in Tableau and Power BI, complex algorithmic output is turned into tangible business insight. This is a shift from empiricism to algorithm-driven decision-making. Enterprises that master this architecture will be positioned to move from passively responding to the market to algorithmically identifying blockbusters in global e-commerce.
