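Introduction: The Practical Challenges of Collecting Amazon Review Data

In e-commerce analytics, scraping Amazon reviews is a constant concern for sellers, data analysts, and researchers. Picture this: you are analyzing how a popular product performs in the market and need thousands of genuine customer reviews to understand what buyers think and to build a targeted marketing strategy. As soon as you try to collect that data by hand, you run into a series of technical barriers and policy restrictions.

Many companies and independent developers face this problem today. Amazon is the world's largest e-commerce platform, and its review data has substantial commercial value, but getting at it is not easy. Manual collection is slow, and simple scraping scripts are quickly blocked by anti-bot defenses. Amazon has also tightened its data-access policies in recent years, which makes review collection harder still.

This article walks through scraping Amazon reviews with Python, with complete code examples and practical lessons, and then covers workable options when policy restrictions get in the way. Whether you are new to data analysis or an experienced developer, it aims to give you practical guidance for collecting Amazon reviews.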

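Part 1: Understanding the Value and Challenges of Amazon Review Data

1.1 The Business Value of Review Data

For e-commerce practitioners, Amazon review data is highly valuable. By scraping Amazon reviews with Python, a business can gain the following insights:

Consumer sentiment analysis: Reviews capture how customers actually feel about a product, including satisfaction, pain points, and suggestions for improvement. This information is essential for product optimization and marketing strategy.

Competitor analysis: Analyzing reviews of competing products reveals their strengths and weaknesses, which informs your own product positioning and differentiation strategy.

Market trend insights: Time-series analysis of review data can reveal shifts in consumer preferences, helping a business position itself early for where the market is heading.

Product improvement: Negative reviews often point to specific product problems, and that feedback is valuable guidance for the R&D team.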
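Time-series analysis starts with turning review dates into something you can aggregate. The following is a minimal sketch, assuming the US marketplace date text has the form "Reviewed in the United States on March 5, 2024" (an assumption about Amazon's markup, which can change); `parse_review_date` and `monthly_review_counts` are hypothetical helper names, not part of any library.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed format of the review-date text (US marketplace, English UI)
DATE_PATTERN = re.compile(r"on ([A-Z][a-z]+ \d{1,2}, \d{4})")


def parse_review_date(text):
    """Return a datetime for a review-date string, or None if it does not match."""
    match = DATE_PATTERN.search(text or "")
    if not match:
        return None
    return datetime.strptime(match.group(1), "%B %d, %Y")


def monthly_review_counts(date_texts):
    """Count reviews per 'YYYY-MM' month, skipping dates that cannot be parsed."""
    counts = Counter()
    for text in date_texts:
        parsed = parse_review_date(text)
        if parsed is not None:
            counts[parsed.strftime("%Y-%m")] += 1
    return dict(sorted(counts.items()))


if __name__ == "__main__":
    sample = [
        "Reviewed in the United States on March 5, 2024",
        "Reviewed in the United States on March 28, 2024",
        "Reviewed in the United States on April 2, 2024",
        "not a date",
    ]
    print(monthly_review_counts(sample))  # {'2024-03': 2, '2024-04': 1}
```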

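1.2 Technical Challenges and Policy Restrictions

Building an effective Amazon review collection tool means dealing with several challenges:

Anti-scraping defenses: Amazon runs sophisticated anti-bot systems with multiple layers of protection, including IP rate limits, CAPTCHAs, and user-behavior analysis.

Dynamic page loading: Modern pages load much of their content with JavaScript, so a traditional static scraper may not see all of the data.

Login requirements: Since 2023, Amazon has progressively restricted anonymous access to reviews; the full review data is available only after signing in.

Legal compliance: Data collection must comply with applicable laws and regulations and with the platform's terms of service to avoid legal risk.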
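One concrete, low-effort step toward compliance is honoring a site's robots.txt. The sketch below uses the standard-library `urllib.robotparser` to check whether a URL may be fetched. The robots.txt lines are a made-up sample, not Amazon's actual file, and `MyResearchBot/1.0` is a hypothetical user agent; in practice you would load the real file (for example with `RobotFileParser.set_url()` and `read()`), and robots.txt does not replace the terms of service.

```python
from urllib.robotparser import RobotFileParser

# Made-up sample for illustration only; this is NOT Amazon's robots.txt
SAMPLE_ROBOTS = [
    "User-agent: *",
    "Disallow: /gp/cart",
    "Allow: /",
]


def is_allowed(robots_lines, user_agent, url):
    """Return True if the given robots.txt lines permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(is_allowed(SAMPLE_ROBOTS, "MyResearchBot/1.0", "https://www.amazon.com/dp/B08N5WRWNW"))  # True
    print(is_allowed(SAMPLE_ROBOTS, "MyResearchBot/1.0", "https://www.amazon.com/gp/cart/view.html"))  # False
```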

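Part 2: A Basic Python Scraper Implementation

2.1 Environment Setup and Dependencies

Before building the Amazon review scraper, set up a suitable development environment. The recommended stack is: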

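```bash
# Install the required packages
pip install requests beautifulsoup4 selenium lxml fake-useragent
pip install pandas numpy matplotlib seaborn
pip install requests-html selenium-wire
# Used by the word cloud and Excel report in Part 6
pip install wordcloud openpyxl
```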

2.2 A Basic Scraper Framework

Below is a basic framework for scraping Amazon reviews with Python:

```python
import logging
import random
import re
import time

import requests
from bs4 import BeautifulSoup
from fake_useragent import UserAgent


class AmazonReviewScraper:
    def __init__(self):
        self.session = requests.Session()
        self.ua = UserAgent()
        self.setup_headers()
        self.setup_logging()

    def setup_headers(self):
        """Set request headers that mimic a real browser."""
        self.headers = {
            'User-Agent': self.ua.random,
            'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8',
            # Request English pages: the rating parsing below assumes English text
            'Accept-Language': 'en-US,en;q=0.9',
            # 'br' is left out: requests only decodes Brotli if the brotli package is installed
            'Accept-Encoding': 'gzip, deflate',
            'Connection': 'keep-alive',
            'Upgrade-Insecure-Requests': '1',
            'Sec-Fetch-Dest': 'document',
            'Sec-Fetch-Mode': 'navigate',
            'Sec-Fetch-Site': 'none',
        }
        self.session.headers.update(self.headers)

    def setup_logging(self):
        """Log to a file and to the console."""
        logging.basicConfig(
            level=logging.INFO,
            format='%(asctime)s - %(levelname)s - %(message)s',
            handlers=[
                logging.FileHandler('amazon_scraper.log'),
                logging.StreamHandler(),
            ],
        )
        self.logger = logging.getLogger(__name__)

    def get_product_reviews(self, product_url, max_pages=5):
        """Collect a product's reviews page by page."""
        try:
            # Parse the product ID (ASIN) from the URL
            asin = self.extract_asin(product_url)
            if not asin:
                self.logger.error(f"Could not extract ASIN: {product_url}")
                return []

            reviews = []
            for page in range(1, max_pages + 1):
                page_reviews = self.scrape_review_page(asin, page)
                if not page_reviews:
                    break
                reviews.extend(page_reviews)
                # Random delay to reduce the chance of detection
                time.sleep(random.uniform(2, 5))
            return reviews
        except Exception as e:
            self.logger.error(f"Failed to collect reviews: {e}")
            return []

    def extract_asin(self, url):
        """Extract the ASIN from a product URL."""
        # Cover several common URL formats
        patterns = [
            r'/dp/([A-Z0-9]{10})',
            r'/product/([A-Z0-9]{10})',
            r'asin=([A-Z0-9]{10})',
        ]
        for pattern in patterns:
            match = re.search(pattern, url)
            if match:
                return match.group(1)
        return None

    def scrape_review_page(self, asin, page_num):
        """Scrape one page of reviews."""
        review_url = f"https://www.amazon.com/product-reviews/{asin}"
        params = {'pageNumber': page_num, 'sortBy': 'recent'}
        try:
            response = self.session.get(review_url, params=params, timeout=10)
            response.raise_for_status()
            soup = BeautifulSoup(response.content, 'html.parser')
            return self.parse_reviews(soup)
        except requests.RequestException as e:
            self.logger.error(f"Request failed - page {page_num}: {e}")
            return []

    def parse_reviews(self, soup):
        """Parse every review on a page, skipping any that fail."""
        reviews = []
        for element in soup.find_all('div', {'data-hook': 'review'}):
            try:
                review = self.extract_review_data(element)
                if review:
                    reviews.append(review)
            except Exception as e:
                self.logger.warning(f"Failed to parse a review: {e}")
        return reviews

    def extract_review_data(self, element):
        """Extract the fields of a single review."""
        review = {}

        # Review title
        title_elem = element.find('a', {'data-hook': 'review-title'})
        review['title'] = title_elem.get_text(strip=True) if title_elem else ''

        # Star rating, rendered as text such as "4.0 out of 5 stars"
        review['rating'] = None
        rating_elem = element.find('i', {'data-hook': 'review-star-rating'})
        if rating_elem:
            try:
                review['rating'] = float(rating_elem.get_text(strip=True).split()[0])
            except (IndexError, ValueError):
                pass  # keep None when the text is not in the expected form

        # Review body
        content_elem = element.find('span', {'data-hook': 'review-body'})
        review['content'] = content_elem.get_text(strip=True) if content_elem else ''

        # Review date
        date_elem = element.find('span', {'data-hook': 'review-date'})
        review['date'] = date_elem.get_text(strip=True) if date_elem else ''

        # Reviewer name
        author_elem = element.find('span', class_='a-profile-name')
        review['author'] = author_elem.get_text(strip=True) if author_elem else ''

        # Helpful votes, kept as raw text
        helpful_elem = element.find('span', {'data-hook': 'helpful-vote-statement'})
        review['helpful_votes'] = helpful_elem.get_text(strip=True) if helpful_elem else '0'

        return review
```
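The scraper above stores `helpful_votes` as raw text and reads the rating from the first word of the star-rating text. A minimal normalization sketch follows, assuming the English phrasings "4.0 out of 5 stars", "12 people found this helpful", and "One person found this helpful"; these strings are assumptions about Amazon's current markup, and `parse_rating` and `parse_helpful_votes` are hypothetical helpers.

```python
import re

# Spelled-out counts assumed to appear in the helpful-vote text
WORD_NUMBERS = {"one": 1}


def parse_rating(text):
    """'4.0 out of 5 stars' -> 4.0; returns None when no number is found."""
    match = re.search(r"\d+(?:[.,]\d+)?", text or "")
    if not match:
        return None
    # Accept a decimal comma as well ("4,0")
    return float(match.group(0).replace(",", "."))


def parse_helpful_votes(text):
    """'12 people found this helpful' -> 12; 'One person found this helpful' -> 1."""
    text = (text or "").strip().lower()
    if not text:
        return 0
    match = re.search(r"\d[\d,]*", text)
    if match:
        return int(match.group(0).replace(",", ""))
    return WORD_NUMBERS.get(text.split()[0], 0)


if __name__ == "__main__":
    print(parse_rating("4.0 out of 5 stars"))                 # 4.0
    print(parse_helpful_votes("One person found this helpful"))  # 1
```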

2.3 Handling CAPTCHAs and Anti-Scraping Defenses

Amazon's anti-bot measures keep getting more sophisticated. Here are some countermeasures:

```python
import time

from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait


class AdvancedAmazonScraper(AmazonReviewScraper):
    def __init__(self, use_selenium=False, proxy_list=None):
        super().__init__()
        self.use_selenium = use_selenium
        self.proxy_list = proxy_list or []
        self.current_proxy_index = 0
        self.driver = None
        if use_selenium:
            self.setup_selenium()

    def setup_selenium(self):
        """Configure the Selenium WebDriver."""
        chrome_options = Options()
        chrome_options.add_argument('--no-sandbox')
        chrome_options.add_argument('--disable-dev-shm-usage')
        chrome_options.add_argument('--disable-blink-features=AutomationControlled')
        chrome_options.add_experimental_option("excludeSwitches", ["enable-automation"])
        chrome_options.add_experimental_option('useAutomationExtension', False)

        # Random user agent
        chrome_options.add_argument(f'--user-agent={self.ua.random}')

        # Proxy
        if self.proxy_list:
            proxy = self.get_next_proxy()
            chrome_options.add_argument(f'--proxy-server={proxy}')

        self.driver = webdriver.Chrome(options=chrome_options)
        # Hide navigator.webdriver on every page the driver loads, not only the current one
        self.driver.execute_cdp_cmd(
            "Page.addScriptToEvaluateOnNewDocument",
            {"source": "Object.defineProperty(navigator, 'webdriver', {get: () => undefined})"},
        )

    def get_next_proxy(self):
        """Rotate through the proxy list."""
        if not self.proxy_list:
            return None
        proxy = self.proxy_list[self.current_proxy_index]
        self.current_proxy_index = (self.current_proxy_index + 1) % len(self.proxy_list)
        return proxy

    def handle_captcha(self, driver):
        """Handle a CAPTCHA (requires manual intervention or a third-party service)."""
        # find_elements returns an empty list instead of raising when nothing matches
        if driver.find_elements(By.ID, "captchacharacters"):
            self.logger.warning("CAPTCHA detected; manual intervention required")
            # A CAPTCHA-solving service could be integrated here
            input("Solve the CAPTCHA in the browser, then press Enter to continue...")
            return True
        return False

    def scrape_with_selenium(self, product_url):
        """Scrape with Selenium (handles JavaScript rendering)."""
        try:
            self.driver.get(product_url)
            # Handle a CAPTCHA if one appears
            self.handle_captcha(self.driver)
            # Wait for the review section to load
            WebDriverWait(self.driver, 10).until(
                EC.presence_of_element_located((By.ID, "reviewsMedley"))
            )
            # Scroll to trigger lazy loading
            self.scroll_to_load_reviews()
            # Parse the rendered page
            soup = BeautifulSoup(self.driver.page_source, 'html.parser')
            return self.parse_reviews(soup)
        except Exception as e:
            self.logger.error(f"Selenium scraping failed: {e}")
            return []

    def scroll_to_load_reviews(self, max_scrolls=10):
        """Scroll until the page height stops growing, or max_scrolls is reached."""
        last_height = self.driver.execute_script("return document.body.scrollHeight")
        for _ in range(max_scrolls):
            # Scroll to the bottom of the page
            self.driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
            # Give new content time to load
            time.sleep(2)
            # Stop once nothing new has loaded
            new_height = self.driver.execute_script("return document.body.scrollHeight")
            if new_height == last_height:
                break
            last_height = new_height
```
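When you fetch pages with `requests` instead of Selenium, there is no browser in which to solve a CAPTCHA, so the practical move is to detect the CAPTCHA page and back off. This sketch checks the HTML for markers: `captchacharacters` is the element ID the code above looks for, while the other two markers are assumptions about Amazon's CAPTCHA page and may need updating. The backoff schedule (30 seconds, doubling up to 10 minutes) is an arbitrary illustrative choice, and both function names are hypothetical.

```python
CAPTCHA_MARKERS = (
    "captchacharacters",                   # input ID checked by handle_captcha() above
    "/errors/validatecaptcha",             # assumed form action on the CAPTCHA page
    "enter the characters you see below",  # assumed prompt text (English UI)
)


def looks_like_captcha(html):
    """Return True if the HTML appears to be a CAPTCHA page rather than real content."""
    lowered = (html or "").lower()
    return any(marker in lowered for marker in CAPTCHA_MARKERS)


def captcha_backoff(attempt, base=30, cap=600):
    """Seconds to wait after the Nth consecutive CAPTCHA (attempt starts at 0)."""
    return min(cap, base * 2 ** attempt)


if __name__ == "__main__":
    page = '<form action="/errors/validateCaptcha"><input id="captchacharacters"></form>'
    print(looks_like_captcha(page))                # True
    print([captcha_backoff(n) for n in range(6)])  # [30, 60, 120, 240, 480, 600]
```

In `scrape_review_page`, you could check `looks_like_captcha(response.text)` before parsing and sleep for `captcha_backoff(n)` seconds, rather than treating the CAPTCHA page as an empty result and stopping.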

Part 3: Proxy Configuration and Network Optimization

3.1 Rotating Proxy IPs

For large-scale Amazon review scraping, proxy IPs are an essential strategy:

```python
import itertools

import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry  # requests.packages.urllib3 is a deprecated alias


class ProxyManager:
    def __init__(self, proxy_list):
        self.proxy_list = proxy_list
        self.proxy_cycle = itertools.cycle(proxy_list)
        self.failed_proxies = set()

    def get_working_proxy(self):
        """Return the next proxy that passes a health check, or None."""
        for _ in range(len(self.proxy_list)):
            proxy = next(self.proxy_cycle)
            if proxy in self.failed_proxies:
                continue
            if self.test_proxy(proxy):
                return proxy
            self.failed_proxies.add(proxy)
        return None

    def test_proxy(self, proxy):
        """Check that a proxy can complete a simple request."""
        try:
            response = requests.get(
                'http://httpbin.org/ip',
                proxies={'http': proxy, 'https': proxy},
                timeout=5,
            )
            return response.status_code == 200
        except requests.RequestException:
            return False


class EnhancedAmazonScraper(AdvancedAmazonScraper):
    def __init__(self, proxy_list=None):
        super().__init__()
        self.proxy_manager = ProxyManager(proxy_list) if proxy_list else None
        self.setup_session_retry()

    def setup_session_retry(self):
        """Retry failed requests with exponential backoff."""
        retry_strategy = Retry(
            total=3,
            backoff_factor=1,
            status_forcelist=[429, 500, 502, 503, 504],
        )
        adapter = HTTPAdapter(max_retries=retry_strategy)
        self.session.mount("http://", adapter)
        self.session.mount("https://", adapter)

    def make_request_with_proxy(self, url, **kwargs):
        """Send a request through a working proxy, if one is configured."""
        if self.proxy_manager:
            proxy = self.proxy_manager.get_working_proxy()
            if proxy:
                kwargs['proxies'] = {'http': proxy, 'https': proxy}
        kwargs.setdefault('timeout', 10)
        return self.session.get(url, **kwargs)
```
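`ProxyManager` above blacklists a proxy permanently after one failed health check and runs that check before every request. A common alternative is to bench a failing proxy for a cooldown period and let it rejoin the rotation afterwards. The sketch below shows that idea; `CooldownProxyPool` is a hypothetical class, the proxy addresses are placeholders, and the clock is injectable only so the logic can be tested without waiting.

```python
import time


class CooldownProxyPool:
    """Round-robin proxy pool that temporarily benches proxies reported as failing."""

    def __init__(self, proxies, cooldown_seconds=300, clock=time.monotonic):
        self.proxies = list(proxies)
        self.cooldown = cooldown_seconds
        self.clock = clock
        self.benched_until = {}  # proxy -> time at which it may be used again
        self._index = 0

    def get(self):
        """Return the next proxy that is not cooling down, or None if all are benched."""
        now = self.clock()
        for _ in range(len(self.proxies)):
            proxy = self.proxies[self._index]
            self._index = (self._index + 1) % len(self.proxies)
            if self.benched_until.get(proxy, 0) <= now:
                return proxy
        return None

    def report_failure(self, proxy):
        """Bench a proxy for cooldown_seconds after a timeout or block."""
        self.benched_until[proxy] = self.clock() + self.cooldown


if __name__ == "__main__":
    pool = CooldownProxyPool(["http://proxy1.example:8080", "http://proxy2.example:8080"])
    proxy = pool.get()
    pool.report_failure(proxy)
    print(pool.get())  # the other proxy, while the first one cools down
```

Calling `report_failure()` only when a real request through a proxy times out or gets blocked avoids the extra health-check request that `ProxyManager` makes each time.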

3.2 Request Rate Control

Sensible rate control is key to avoiding bans:

```python
import threading
import time
from collections import defaultdict
from datetime import datetime, timedelta


class RateLimiter:
    def __init__(self, max_requests_per_minute=30):
        self.max_requests = max_requests_per_minute
        self.requests = defaultdict(list)
        self.lock = threading.Lock()

    def wait_if_needed(self, domain='amazon.com'):
        """Throttle requests per domain."""
        with self.lock:
            now = datetime.now()
            minute_ago = now - timedelta(minutes=1)

            # Drop records older than one minute
            self.requests[domain] = [
                req_time for req_time in self.requests[domain]
                if req_time > minute_ago
            ]

            # Wait if this minute's budget is used up
            if len(self.requests[domain]) >= self.max_requests:
                oldest_request = min(self.requests[domain])
                wait_time = 60 - (now - oldest_request).total_seconds()
                if wait_time > 0:
                    # Sleeping while holding the lock deliberately serializes callers
                    time.sleep(wait_time)
                now = datetime.now()  # record the actual request time, not the pre-sleep one

            # Record this request
            self.requests[domain].append(now)
```
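The same sliding-window idea can be written with a `collections.deque` and `time.monotonic()`, which is unaffected by system clock changes, and drops old timestamps from the front instead of rebuilding a list on every call. This is a single-threaded sketch (wrap calls in a lock if several threads share it); `SlidingWindowLimiter` is a hypothetical name, and the injectable `clock` and `sleep` parameters exist only to make it testable.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most max_requests calls within any window_seconds span (single-threaded)."""

    def __init__(self, max_requests, window_seconds=60.0, clock=time.monotonic):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock
        self.timestamps = deque()

    def time_until_allowed(self):
        """Seconds to wait before the next request is allowed (0.0 if allowed now)."""
        now = self.clock()
        # Drop timestamps that have left the window
        while self.timestamps and now - self.timestamps[0] >= self.window:
            self.timestamps.popleft()
        if len(self.timestamps) < self.max_requests:
            return 0.0
        return self.window - (now - self.timestamps[0])

    def acquire(self, sleep=time.sleep):
        """Block until a request is allowed, then record it."""
        wait = self.time_until_allowed()
        while wait > 0:
            sleep(wait)
            wait = self.time_until_allowed()
        self.timestamps.append(self.clock())


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=30, window_seconds=60)
    for _ in range(3):
        limiter.acquire()  # returns immediately while under the limit
```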

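Part 4: Amazon's Policy Restrictions and How to Respond

4.1 Current Policy Restrictions

Since 2023, Amazon's access policy for review data has changed significantly:

Full reviews require login: Anonymous visitors see only a subset of reviews (typically 8-10); the full review list is available only after signing in.

Customer Says: Amazon's "Customer Says" feature summarizes the key points across reviews, but collecting this data is also subject to restrictions.

API limits: The official APIs expose only limited review data, and at a relatively high cost.

4.2 Getting the Most from Limited Review Data

Despite these limits, the reviews that remain accessible can still yield valuable insights: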

```python
import logging

from bs4 import BeautifulSoup


class LimitedReviewAnalyzer:
    def __init__(self):
        self.sentiment_keywords = {
            'positive': ['excellent', 'amazing', 'great', 'perfect', 'love', 'awesome'],
            'negative': ['terrible', 'awful', 'hate', 'worst', 'horrible', 'disappointing'],
        }

    def extract_accessible_reviews(self, product_url):
        """Extract the reviews visible without login (typically 8-10)."""
        scraper = EnhancedAmazonScraper()  # defined in Part 3
        try:
            response = scraper.session.get(product_url, timeout=10)
            response.raise_for_status()
            soup = BeautifulSoup(response.content, 'html.parser')

            # Search the review section; fall back to the whole page if its ID has changed
            review_section = soup.find('div', {'id': 'reviewsMedley'}) or soup

            reviews = []
            for element in review_section.find_all('div', {'data-hook': 'review'}):
                review = scraper.extract_review_data(element)
                if review:
                    reviews.append(review)
            return reviews
        except Exception as e:
            logging.error(f"Failed to extract reviews: {e}")
            return []

    def analyze_customer_says(self, product_url):
        """Analyze the Customer Says section."""
        scraper = EnhancedAmazonScraper()
        try:
            response = scraper.session.get(product_url, timeout=10)
            response.raise_for_status()
            soup = BeautifulSoup(response.content, 'html.parser')

            # Locate the Customer Says widget
            customer_says = soup.find('div', {'data-hook': 'cr-insights-widget'})
            insights = []
            if customer_says:
                for element in customer_says.find_all('div', class_='cr-insights-text'):
                    text = element.get_text(strip=True)
                    insights.append({'text': text, 'sentiment': self.analyze_sentiment(text)})
            return insights  # empty list when the widget is absent
        except Exception as e:
            logging.error(f"Customer Says analysis failed: {e}")
            return []

    def analyze_sentiment(self, text):
        """Naive keyword-count sentiment (substring matching, so 'love' also matches 'gloves')."""
        text_lower = text.lower()
        positive_count = sum(1 for word in self.sentiment_keywords['positive'] if word in text_lower)
        negative_count = sum(1 for word in self.sentiment_keywords['negative'] if word in text_lower)
        if positive_count > negative_count:
            return 'positive'
        elif negative_count > positive_count:
            return 'negative'
        else:
            return 'neutral'
```
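`analyze_sentiment` above counts substrings, so "gloves" contains "love" and "not great" counts as positive. A slightly sturdier keyword sketch splits the text into words and flips the polarity of a keyword directly preceded by a negator. It is still a toy heuristic, not a replacement for a trained sentiment model; the negator list is an illustrative assumption and `keyword_sentiment` is a hypothetical name.

```python
import re

POSITIVE = {"excellent", "amazing", "great", "perfect", "love", "awesome"}
NEGATIVE = {"terrible", "awful", "hate", "worst", "horrible", "disappointing"}
NEGATORS = {"not", "never", "no", "isn't", "wasn't", "don't", "doesn't", "didn't"}


def keyword_sentiment(text):
    """Classify text as positive/negative/neutral from whole-word keyword hits."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        if token in POSITIVE:
            polarity = 1
        elif token in NEGATIVE:
            polarity = -1
        else:
            continue
        # Flip the polarity when the keyword directly follows a negator ("not great")
        if i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    print(keyword_sentiment("These gloves are fine"))  # neutral
    print(keyword_sentiment("Honestly not great"))     # negative
```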

第五部分:Pangolin Scrape API解决方案

5.1 专业API的必要性

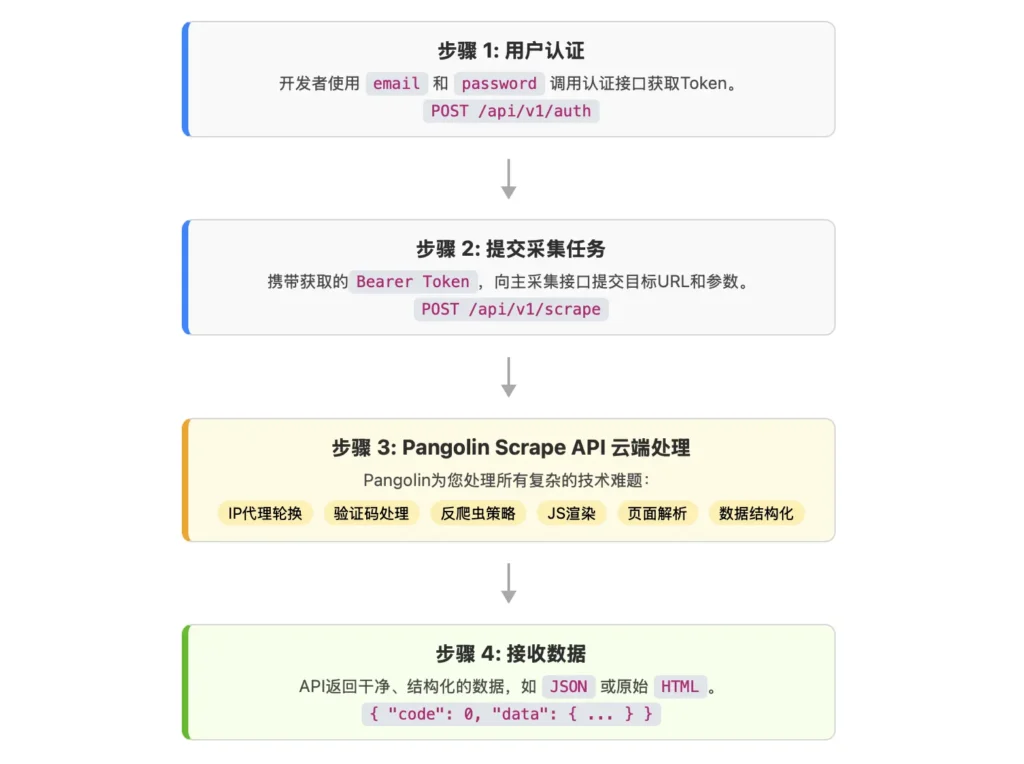

面对亚马逊日益严格的数据访问限制,传统的爬虫方法已经难以满足企业级的数据采集需求。这时候,专业的亚马逊评论采集工具就显得尤为重要。Pangolin Scrape API作为专业的电商数据采集服务,为Amazon数据采集提供了高效、稳定的解决方案。

为什么选择专业API服务?

- 稳定性保障:专业服务具备强大的反反爬虫能力,确保数据采集的连续性和稳定性。

- 完整数据覆盖:能够突破登录限制,获取完整的 Amazon 公开数据,包括Customer Says等高价值信息。

- 规模化处理:支持大规模并发处理,满足企业级数据需求。

- 技术门槛低:无需复杂的反爬虫技术,简单的API调用即可获取数据。

5.2 Pangolin Scrape API详细使用指南

Pangolin Scrape API专门针对亚马逊等电商平台进行了深度优化,特别是在评论数据采集方面具有显著优势:

```python
import json
import time

import requests


class PangolinAmazonReviewAPI:
    def __init__(self, api_key):
        self.api_key = api_key
        self.base_url = "https://scrapeapi.pangolinfo.com/api/v1/scrape"
        self.headers = {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        }

    def scrape_product_details_with_reviews(self, product_url, zipcode="10041"):
        """
        Fetch a product detail page with its complete public data.

        Args:
            product_url: Amazon product page URL
            zipcode: ZIP code to use; affects price and delivery information

        Returns:
            Parsed product and review data, or None on failure
        """
        payload = {
            "url": product_url,
            "formats": ["json"],               # request structured data
            "parserName": "amzProductDetail",  # Amazon product-detail parser
            "bizContext": {
                "zipcode": zipcode,  # adjust the ZIP code as needed
            },
        }
        try:
            response = requests.post(self.base_url, json=payload, headers=self.headers, timeout=30)
            response.raise_for_status()
            result = response.json()
            if result.get('code') == 0:
                return self.parse_review_data(result.get('data', {}))
            print(f"API call failed: {result.get('message', 'unknown error')}")
            return None
        except (requests.exceptions.RequestException, ValueError) as e:
            # ValueError covers a response body that is not valid JSON
            print(f"Request failed: {e}")
            return None

    def parse_review_data(self, api_response):
        """Parse the review data returned by the API."""
        parsed_data = {
            'product_info': {},
            'reviews': [],
            'customer_says': [],
            'review_summary': {},
        }

        # Basic product information
        if 'product' in api_response:
            product = api_response['product']
            parsed_data['product_info'] = {
                'title': product.get('title', ''),
                'asin': product.get('asin', ''),
                'brand': product.get('brand', ''),
                'price': product.get('price', ''),
                'rating': product.get('rating', ''),
                'review_count': product.get('reviewCount', 0),
            }

        # Reviews
        for review in api_response.get('reviews', []):
            parsed_data['reviews'].append({
                'id': review.get('id', ''),
                'title': review.get('title', ''),
                'content': review.get('content', ''),
                'rating': review.get('rating', 0),
                'author': review.get('author', ''),
                'date': review.get('date', ''),
                'helpful_votes': review.get('helpfulVotes', 0),
                'verified_purchase': review.get('verifiedPurchase', False),
            })

        # Customer Says data (a distinctive feature of the Pangolin API)
        if 'customerSays' in api_response:
            parsed_data['customer_says'] = api_response['customerSays']

        # Review summary statistics
        if 'reviewSummary' in api_response:
            parsed_data['review_summary'] = api_response['reviewSummary']

        return parsed_data

    def batch_scrape_reviews(self, product_urls, batch_size=10, delay=1):
        """
        Fetch review data for several products.

        Args:
            product_urls: list of product URLs
            batch_size: number of URLs per batch
            delay: pause between requests, in seconds
        """
        all_results = []
        total_batches = (len(product_urls) - 1) // batch_size + 1
        for i in range(0, len(product_urls), batch_size):
            batch = product_urls[i:i + batch_size]
            for url in batch:
                print(f"Processing: {url}")
                result = self.scrape_product_details_with_reviews(url)
                if result:
                    all_results.append(result)
                # Pause to avoid sending requests too quickly
                time.sleep(delay)
            print(f"Finished batch {i // batch_size + 1}/{total_batches}")
        return all_results


# Usage example
def demo_pangolin_api():
    """Show how to fetch Amazon review data with the Pangolin API."""
    # Initialize the API client (replace with a real API key)
    api_client = PangolinAmazonReviewAPI("your-api-key-here")

    # Sample product URLs
    test_urls = [
        "https://www.amazon.com/dp/B0DYTF8L2W",
        "https://www.amazon.com/dp/B08N5WRWNW",
        "https://www.amazon.com/dp/B07YN2ZZQC",
    ]

    # Fetch a single product
    single_result = api_client.scrape_product_details_with_reviews(test_urls[0])
    if single_result:
        print("=== Product Info ===")
        print(json.dumps(single_result['product_info'], indent=2))

        print("\n=== Reviews ===")
        for i, review in enumerate(single_result['reviews'][:3]):  # first 3 reviews
            print(f"Review {i + 1}:")
            print(f"  Title: {review['title']}")
            print(f"  Rating: {review['rating']}")
            print(f"  Content: {review['content'][:100]}...")
            print(f"  Author: {review['author']}")
            print(f"  Date: {review['date']}")
            print()

        print("=== Customer Says Insights ===")
        for insight in single_result['customer_says'][:5]:  # first 5 insights
            print(f"- {insight}")

    # Batch example
    print("\n=== Batch Example ===")
    batch_results = api_client.batch_scrape_reviews(test_urls[:2], batch_size=2)
    print(f"Fetched data for {len(batch_results)} products")


if __name__ == "__main__":
    demo_pangolin_api()
```
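`batch_scrape_reviews` processes URLs one at a time. If your Pangolin plan allows concurrent requests (an assumption; check your plan's limits), a thread pool can overlap the network waits. This sketch accepts any fetch function, so you could pass `api_client.scrape_product_details_with_reviews`; `fetch_all` is a hypothetical helper, and failures are collected rather than raised so that one bad URL does not abort the batch.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def fetch_all(urls, fetch, max_workers=4):
    """Call fetch(url) concurrently; return ({url: data}, {url: error description})."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fetch, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                data = future.result()
            except Exception as exc:  # record the error and keep going
                failures[url] = repr(exc)
                continue
            if data is None:
                # scrape_product_details_with_reviews returns None on failure
                failures[url] = "empty result"
            else:
                results[url] = data
    return results, failures
```

With the client above this would be `results, failures = fetch_all(test_urls, api_client.scrape_product_details_with_reviews, max_workers=4)`; keep `max_workers` within whatever concurrency your plan permits.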

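5.3 Core Advantages of the Pangolin API

Compared with traditional scraping, Pangolin Scrape API offers the following advantages for collecting Amazon reviews:

1. Getting past the login restriction

- Retrieves complete review data, not just the first 8-10 reviews

- Supports retrieving every page of reviews

- Can reach deeper reviews that normally require login to view

2. Customer Says data collection

This is a distinctive strength of the Pangolin API. Since Amazon closed off its traditional review API, Customer Says has become an important channel for insight into customer feedback: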

```python
def analyze_customer_says_data(customer_says_data):
    """
    Analyze Customer Says data and extract key insights.
    """
    insights = {
        'positive_aspects': [],
        'negative_aspects': [],
        'feature_mentions': {},
        'sentiment_distribution': {'positive': 0, 'negative': 0, 'neutral': 0},
    }

    for item in customer_says_data:
        # Split positive and negative feedback
        if item.get('sentiment') == 'positive':
            insights['positive_aspects'].append(item.get('text', ''))
            insights['sentiment_distribution']['positive'] += 1
        elif item.get('sentiment') == 'negative':
            insights['negative_aspects'].append(item.get('text', ''))
            insights['sentiment_distribution']['negative'] += 1
        else:
            insights['sentiment_distribution']['neutral'] += 1

        # Track which features are mentioned, and with what sentiment
        if 'feature' in item:
            feature = item['feature']
            if feature not in insights['feature_mentions']:
                insights['feature_mentions'][feature] = {'count': 0, 'sentiment': []}
            insights['feature_mentions'][feature]['count'] += 1
            insights['feature_mentions'][feature]['sentiment'].append(item.get('sentiment', 'neutral'))

    return insights
```
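The `feature_mentions` structure built above stores a list of sentiments per feature. One way to turn it into a ranking is a net score, (positive mentions - negative mentions) / total mentions, so the most criticized features sort first. A minimal sketch, assuming the input has exactly the shape produced by `analyze_customer_says_data`; `rank_features` is a hypothetical helper and the feature names in the example are made up.

```python
def rank_features(feature_mentions):
    """Rank features by net sentiment, most criticized first.

    net_score = (positive - negative) / number of recorded sentiments, in [-1, 1].
    """
    weights = {"positive": 1, "negative": -1}
    ranked = []
    for feature, info in feature_mentions.items():
        sentiments = info.get("sentiment", [])
        net = sum(weights.get(s, 0) for s in sentiments)
        ranked.append({
            "feature": feature,
            "mentions": info.get("count", 0),
            "net_score": net / len(sentiments) if sentiments else 0.0,
        })
    # Lowest net score first; among ties, the most-mentioned feature first
    ranked.sort(key=lambda r: (r["net_score"], -r["mentions"]))
    return ranked


if __name__ == "__main__":
    example = {
        "battery life": {"count": 3, "sentiment": ["negative", "negative", "positive"]},
        "sound quality": {"count": 2, "sentiment": ["positive", "positive"]},
    }
    for row in rank_features(example):
        print(row)
```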

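3. High success rate and stability

- A success rate above 98% according to Pangolin, far higher than traditional scraping methods

- Supports collection for a specified ZIP code, yielding more accurate localized data

- Handles anti-bot defenses automatically, so you do not have to worry about IP bans

4. Data completeness

Supports collecting the complete data structure of Amazon reviews: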

```python
import re
from collections import Counter


class ComprehensiveReviewAnalysis:
    def __init__(self, api_client):
        self.api_client = api_client

    def get_complete_review_analysis(self, product_url):
        """Build a full review-analysis report."""
        # Fetch the complete data through the Pangolin API
        raw_data = self.api_client.scrape_product_details_with_reviews(product_url)
        if not raw_data:
            return None

        return {
            'product_overview': self.analyze_product_overview(raw_data['product_info']),
            'review_statistics': self.calculate_review_statistics(raw_data['reviews']),
            'sentiment_analysis': self.perform_sentiment_analysis(raw_data['reviews']),
            'keyword_analysis': self.extract_keywords(raw_data['reviews']),
            # Module-level function defined in the previous snippet
            'customer_insights': analyze_customer_says_data(raw_data['customer_says']),
            'competitive_positioning': self.analyze_competitive_position(raw_data),
        }

    def analyze_product_overview(self, product_info):
        """Summarize the product."""
        return {
            'title': product_info.get('title', ''),
            'overall_rating': product_info.get('rating', ''),
            'total_reviews': product_info.get('review_count', 0),
            'price_point': product_info.get('price', ''),
            'brand_reputation': product_info.get('brand', ''),
        }

    def analyze_competitive_position(self, raw_data):
        """Placeholder (not defined in the original): the headline metrics used for comparison."""
        info = raw_data.get('product_info', {})
        return {'rating': info.get('rating'), 'review_count': info.get('review_count'), 'price': info.get('price')}

    def calculate_review_statistics(self, reviews):
        """Compute review statistics."""
        if not reviews:
            return {}
        ratings = [r['rating'] for r in reviews if r.get('rating')]
        return {
            'total_reviews': len(reviews),
            'average_rating': sum(ratings) / len(ratings) if ratings else 0,
            'rating_distribution': self.get_rating_distribution(ratings),
            'verified_purchase_ratio': sum(1 for r in reviews if r.get('verified_purchase')) / len(reviews),
            'average_review_length': sum(len(r.get('content', '')) for r in reviews) / len(reviews),
        }

    def get_rating_distribution(self, ratings):
        """Count reviews per star level (fractional ratings are rounded)."""
        distribution = {1: 0, 2: 0, 3: 0, 4: 0, 5: 0}
        for rating in ratings:
            stars = int(round(float(rating)))
            if stars in distribution:
                distribution[stars] += 1
        return distribution

    def perform_sentiment_analysis(self, reviews):
        """Keyword-count sentiment per review."""
        sentiments = {'positive': 0, 'negative': 0, 'neutral': 0}
        positive_keywords = ['excellent', 'amazing', 'great', 'perfect', 'love', 'awesome', 'fantastic', 'wonderful']
        negative_keywords = ['terrible', 'awful', 'hate', 'worst', 'horrible', 'disappointing', 'useless', 'broken']
        for review in reviews:
            content_lower = review.get('content', '').lower()
            positive_count = sum(1 for word in positive_keywords if word in content_lower)
            negative_count = sum(1 for word in negative_keywords if word in content_lower)
            if positive_count > negative_count:
                sentiments['positive'] += 1
            elif negative_count > positive_count:
                sentiments['negative'] += 1
            else:
                sentiments['neutral'] += 1
        return sentiments

    def extract_keywords(self, reviews):
        """Extract the most frequent words."""
        # Combine all review text
        all_text = ' '.join(review.get('content', '') for review in reviews)
        # Simple word extraction (real applications can use proper NLP tooling)
        words = re.findall(r'\b[a-zA-Z]{3,}\b', all_text.lower())
        # Filter common stop words
        stop_words = {'the', 'and', 'for', 'are', 'but', 'not', 'you', 'all', 'can', 'had', 'have', 'has', 'what'}
        filtered_words = [word for word in words if word not in stop_words]
        # The 20 most common words
        return dict(Counter(filtered_words).most_common(20))
```
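`extract_keywords` counts single words, which splits phrases such as "battery life" apart. Counting adjacent word pairs (bigrams) is a cheap next step before reaching for a full NLP library. A minimal standard-library sketch; the stop-word list is illustrative, `top_bigrams` is a hypothetical helper, and pairs are formed before filtering so that two words separated by a dropped word are never joined.

```python
import re
from collections import Counter

STOP_WORDS = {"the", "and", "for", "are", "but", "not", "you", "all", "can",
              "had", "have", "has", "what", "this", "that", "with", "was"}


def top_bigrams(texts, n=10):
    """Return the n most common adjacent word pairs as ("word1 word2", count)."""
    counts = Counter()
    for text in texts:
        tokens = re.findall(r"[a-z']+", text.lower())
        for first, second in zip(tokens, tokens[1:]):
            # Skip pairs that contain a short word or a stop word
            if min(len(first), len(second)) < 3:
                continue
            if first in STOP_WORDS or second in STOP_WORDS:
                continue
            counts[(first, second)] += 1
    return [(" ".join(pair), freq) for pair, freq in counts.most_common(n)]


if __name__ == "__main__":
    reviews = ["Battery life is short", "Great battery life"]
    print(top_bigrams(reviews, 2))
```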

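Part 6: Case Studies

6.1 Competitor Analysis for an E-commerce Seller

Let's walk through a concrete case showing how to use the Pangolin API for in-depth competitor analysis: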

```python
from collections import Counter
from datetime import datetime


def to_float(value, default=0.0):
    """Best-effort numeric conversion for fields such as "$1,299.99", "4.5" or 1234."""
    try:
        # Strip the "$" sign and thousands separators before converting
        return float(str(value).replace('$', '').replace(',', '').strip())
    except (TypeError, ValueError):
        return default


class CompetitorAnalysis:
    def __init__(self, pangolin_client):
        self.client = pangolin_client

    def analyze_competitor_products(self, competitor_asins, category="Electronics"):
        """Analyze a list of competitor products."""
        competitor_data = []
        for asin in competitor_asins:
            product_url = f"https://www.amazon.com/dp/{asin}"
            print(f"Analyzing product: {asin}")
            # Fetch product data
            product_data = self.client.scrape_product_details_with_reviews(product_url)
            if product_data:
                # In-depth analysis (note: this issues a second API request for the same URL)
                analysis = ComprehensiveReviewAnalysis(self.client)
                detailed_analysis = analysis.get_complete_review_analysis(product_url)
                competitor_data.append({
                    'asin': asin,
                    'raw_data': product_data,
                    'analysis': detailed_analysis,
                })
        # Build the comparison report
        return self.generate_competitor_report(competitor_data, category)

    def generate_competitor_report(self, competitor_data, category):
        """Build the competitor comparison report."""
        report = {
            'category': category,
            'analysis_date': datetime.now().isoformat(),
            'products_analyzed': len(competitor_data),
            'summary': {},
            'detailed_comparison': {},
            'market_insights': {},
            'recommendations': [],
        }

        # Aggregate metrics across products
        all_ratings, all_review_counts, all_prices = [], [], []
        for product in competitor_data:
            if not product['analysis']:
                continue
            overview = product['analysis']['product_overview']
            all_ratings.append(to_float(overview['overall_rating']))
            all_review_counts.append(to_float(overview['total_reviews']))
            # Prices may arrive as text such as "$1,299.99"; skip values that do not parse
            price = to_float(overview['price_point'], default=None)
            if price is not None:
                all_prices.append(price)

        report['summary'] = {
            'average_rating': sum(all_ratings) / len(all_ratings) if all_ratings else 0,
            'average_review_count': sum(all_review_counts) / len(all_review_counts) if all_review_counts else 0,
            'average_price': sum(all_prices) / len(all_prices) if all_prices else 0,
            'price_range': {
                'min': min(all_prices) if all_prices else 0,
                'max': max(all_prices) if all_prices else 0,
            },
        }

        report['detailed_comparison'] = self.compare_products(competitor_data)
        report['market_insights'] = self.extract_market_insights(competitor_data)
        report['recommendations'] = self.generate_recommendations(competitor_data, report['summary'])
        return report

    def compare_products(self, competitor_data):
        """Compare products side by side."""
        comparison = {
            'rating_leaders': [],
            'review_volume_leaders': [],
            'sentiment_analysis': {},
            'feature_comparison': {},
        }
        # Only products with a completed analysis can be ranked
        analyzed = [p for p in competitor_data if p['analysis']]

        # Top 3 by rating
        by_rating = sorted(
            analyzed,
            key=lambda p: to_float(p['analysis']['product_overview']['overall_rating']),
            reverse=True,
        )
        comparison['rating_leaders'] = [
            {
                'asin': p['asin'],
                'rating': p['analysis']['product_overview']['overall_rating'],
                'title': str(p['analysis']['product_overview']['title'])[:50] + '...',
            }
            for p in by_rating[:3]
        ]

        # Top 3 by review count
        by_reviews = sorted(
            analyzed,
            key=lambda p: to_float(p['analysis']['product_overview']['total_reviews']),
            reverse=True,
        )
        comparison['review_volume_leaders'] = [
            {
                'asin': p['asin'],
                'review_count': p['analysis']['product_overview']['total_reviews'],
                'title': str(p['analysis']['product_overview']['title'])[:50] + '...',
            }
            for p in by_reviews[:3]
        ]
        return comparison

    def extract_market_insights(self, competitor_data):
        """Extract market-level insights."""
        insights = {
            'common_pain_points': [],
            'valued_features': [],
            'market_gaps': [],
            'pricing_insights': {},
        }

        # Collect all positive and negative Customer Says aspects
        all_negative, all_positive = [], []
        for product in competitor_data:
            if product['analysis'] and product['analysis']['customer_insights']:
                customer_insights = product['analysis']['customer_insights']
                all_negative.extend(customer_insights.get('negative_aspects', []))
                all_positive.extend(customer_insights.get('positive_aspects', []))

        # Most common pain points
        insights['common_pain_points'] = [
            {'issue': issue, 'frequency': freq}
            for issue, freq in Counter(all_negative).most_common(5)
        ]
        # Most valued features
        insights['valued_features'] = [
            {'feature': feature, 'frequency': freq}
            for feature, freq in Counter(all_positive).most_common(5)
        ]
        return insights

    def generate_recommendations(self, competitor_data, summary):
        """Generate recommendations."""
        recommendations = []

        # Based on ratings
        if summary['average_rating'] < 4.0:
            recommendations.append({
                'type': 'product_quality',
                'priority': 'high',
                'description': 'The market average rating is low; there is room to compete on quality',
                'action': 'Focus on improving product quality and user experience',
            })

        # Based on review volume
        if summary['average_review_count'] < 100:
            recommendations.append({
                'type': 'market_penetration',
                'priority': 'medium',
                'description': 'Review volume is low; the market may be emerging or under-served',
                'action': 'Consider increasing marketing spend to capture share quickly',
            })

        # Pricing: the highest price is more than double the lowest
        price_range = summary.get('price_range', {})
        if price_range.get('max', 0) - price_range.get('min', 0) > price_range.get('min', 0):
            recommendations.append({
                'type': 'pricing_strategy',
                'priority': 'medium',
                'description': 'The price range is wide, leaving room for different positioning strategies',
                'action': 'Consider differentiated pricing for different market segments',
            })

        return recommendations


# Usage example
def run_competitor_analysis():
    """Run the competitor analysis example."""
    # Initialize the Pangolin client
    pangolin_client = PangolinAmazonReviewAPI("your-api-key")

    # Competitor ASINs (examples)
    competitor_asins = [
        "B08N5WRWNW",  # competitor 1
        "B07YN2ZZQC",  # competitor 2
        "B0DYTF8L2W",  # competitor 3
        "B09JQT5XLV",  # competitor 4
    ]

    # Run the analysis
    analyzer = CompetitorAnalysis(pangolin_client)
    report = analyzer.analyze_competitor_products(competitor_asins, "Smart home devices")

    # Print the report
    print("=== Competitor Analysis Report ===")
    print(f"Category: {report['category']}")
    print(f"Products analyzed: {report['products_analyzed']}")
    print(f"Market average rating: {report['summary']['average_rating']:.2f}")
    print(f"Average review count: {report['summary']['average_review_count']:.0f}")
    print(f"Average price: ${report['summary']['average_price']:.2f}")

    print("\n=== Rating Leaders ===")
    for leader in report['detailed_comparison']['rating_leaders']:
        print(f"ASIN: {leader['asin']} - Rating: {leader['rating']} - {leader['title']}")

    print("\n=== Market Insights ===")
    print("Common pain points:")
    for pain_point in report['market_insights']['common_pain_points']:
        print(f"  - {pain_point['issue']} (mentions: {pain_point['frequency']})")

    print("\n=== Recommendations ===")
    for rec in report['recommendations']:
        print(f"Priority {rec['priority']}: {rec['description']}")
        print(f"  Suggested action: {rec['action']}")
        print()


if __name__ == "__main__":
    run_competitor_analysis()
```
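Price fields are the most likely to arrive in an awkward format. A slightly more defensive parser pulls out the first number in the string, which also copes with ranges like "$10.99 - $15.99" by taking the lower bound. This sketch assumes US formatting (comma thousands separator, dot decimal point); `parse_price` is a hypothetical helper, not part of the Pangolin response handling.

```python
import re


def parse_price(value):
    """Parse "$1,299.99" -> 1299.99; returns None when no number is present."""
    if value is None:
        return None
    if isinstance(value, (int, float)):
        return float(value)
    # First number in the string: digits with optional comma groups and decimals
    match = re.search(r"\d[\d,]*(?:\.\d+)?", str(value))
    if not match:
        return None
    return float(match.group(0).replace(",", ""))


if __name__ == "__main__":
    for raw in ["$1,299.99", "$10.99 - $15.99", "Currently unavailable"]:
        print(raw, "->", parse_price(raw))
```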

6.2 Data Visualization and Report Generation

To present the analysis results more clearly, we can add data visualization:

import matplotlib.pyplot as plt

import seaborn as sns

import pandas as pd

from wordcloud import WordCloud

class ReviewDataVisualizer:

def __init__(self):

plt.rcParams['font.sans-serif'] = ['SimHei'] # 支持中文显示

plt.rcParams['axes.unicode_minus'] = False

def create_comprehensive_dashboard(self, analysis_report, output_dir="./reports"):

"""创建综合分析仪表板"""

# 创建多个子图

fig, axes = plt.subplots(2, 3, figsize=(18, 12))

fig.suptitle('Amazon产品评论分析仪表板', fontsize=16, fontweight='bold')

# 1. 评分分布

self.plot_rating_distribution(analysis_report['review_statistics']['rating_distribution'], axes[0, 0])

# 2. 情感分析

self.plot_sentiment_analysis(analysis_report['sentiment_analysis'], axes[0, 1])

# 3. 关键词云

self.plot_keyword_cloud(analysis_report['keyword_analysis'], axes[0, 2])

# 4. 时间趋势(如果有时间数据)

self.plot_review_trends(analysis_report, axes[1, 0])

# 5. 竞品对比

self.plot_competitor_comparison(analysis_report, axes[1, 1])

# 6. 特征重要性

self.plot_feature_importance(analysis_report.get('customer_insights', {}), axes[1, 2])

plt.tight_layout()

plt.savefig(f"{output_dir}/amazon_review_dashboard.png", dpi=300, bbox_inches='tight')

plt.show()

def plot_rating_distribution(self, rating_dist, ax):

"""绘制评分分布图"""

ratings = list(rating_dist.keys())

counts = list(rating_dist.values())

bars = ax.bar(ratings, counts, color=['#ff4444', '#ff8800', '#ffcc00', '#88cc00', '#44cc00'])

ax.set_xlabel('评分')

ax.set_ylabel('评论数量')

ax.set_title('评分分布')

# 添加数据标签

for bar, count in zip(bars, counts):

height = bar.get_height()

ax.text(bar.get_x() + bar.get_width()/2., height + 0.1,

f'{count}', ha='center', va='bottom')

def plot_sentiment_analysis(self, sentiment_data, ax):

"""绘制情感分析饼图"""

labels = list(sentiment_data.keys())

sizes = list(sentiment_data.values())

colors = ['#ff6b6b', '#feca57', '#48dbfb']

wedges, texts, autotexts = ax.pie(sizes, labels=labels, colors=colors, autopct='%1.1f%%', startangle=90)

ax.set_title('情感分布')

# 美化文本

for autotext in autotexts:

autotext.set_color('white')

autotext.set_fontweight('bold')

def plot_keyword_cloud(self, keyword_data, ax):

"""创建关键词云"""

if keyword_data:

# 创建词云

wordcloud = WordCloud(width=400, height=300, background_color='white').generate_from_frequencies(keyword_data)

ax.imshow(wordcloud, interpolation='bilinear')

ax.axis('off')

ax.set_title('关键词云')

else:

ax.text(0.5, 0.5, '暂无关键词数据', ha='center', va='center', transform=ax.transAxes)

ax.set_title('关键词云')

def generate_excel_report(self, analysis_data, filename="amazon_review_analysis.xlsx"):

"""生成Excel分析报告"""

with pd.ExcelWriter(filename, engine='openpyxl') as writer:

# 产品概览表

if 'product_overview' in analysis_data:

overview_df = pd.DataFrame([analysis_data['product_overview']])

overview_df.to_excel(writer, sheet_name='产品概览', index=False)

# 评论数据表

if 'raw_data' in analysis_data and 'reviews' in analysis_data['raw_data']:

reviews_df = pd.DataFrame(analysis_data['raw_data']['reviews'])

reviews_df.to_excel(writer, sheet_name='评论数据', index=False)

# 统计分析表

if 'review_statistics' in analysis_data:

stats_data = []

for key, value in analysis_data['review_statistics'].items():

if isinstance(value, dict):

for sub_key, sub_value in value.items():

stats_data.append({'指标': f"{key}_{sub_key}", '值': sub_value})

else:

stats_data.append({'指标': key, '值': value})

stats_df = pd.DataFrame(stats_data)

stats_df.to_excel(writer, sheet_name='统计分析', index=False)

# 关键词分析表

if 'keyword_analysis' in analysis_data:

keywords_data = [{'关键词': k, '频次': v} for k, v in analysis_data['keyword_analysis'].items()]

keywords_df = pd.DataFrame(keywords_data)

keywords_df.to_excel(writer, sheet_name='关键词分析', index=False)

print(f"Excel报告已生成: {filename}")

# Complete analysis workflow example

def complete_analysis_workflow():
    """End-to-end workflow: fetch data, analyze it, visualize it, and print key insights."""
    # 1. Initialize the API client
    pangolin_client = PangolinAmazonReviewAPI("your-api-key")

    # 2. Fetch the product data
    product_url = "https://www.amazon.com/dp/B0DYTF8L2W"
    print("Fetching product data...")
    raw_data = pangolin_client.scrape_product_details_with_reviews(product_url)
    if not raw_data:
        print("Failed to fetch data")
        return

    # 3. Run the in-depth analysis
    print("Running in-depth analysis...")
    analyzer = ComprehensiveReviewAnalysis(pangolin_client)
    detailed_analysis = analyzer.get_complete_review_analysis(product_url)
    if not detailed_analysis:
        print("Analysis failed")
        return

    # 4. Generate the visual reports
    print("Generating visual reports...")
    visualizer = ReviewDataVisualizer()
    # Merge the analysis results with the raw data
    complete_data = {**detailed_analysis, 'raw_data': raw_data}
    # Build the dashboard
    visualizer.create_comprehensive_dashboard(complete_data)
    # Export the Excel report
    visualizer.generate_excel_report(complete_data, "amazon_analysis_report.xlsx")

    # 5. Print a summary of key insights
    overview = detailed_analysis['product_overview']
    sentiment = detailed_analysis['sentiment_analysis']
    total_sentiment = sentiment['positive'] + sentiment['negative'] + sentiment['neutral']
    # Guard against division by zero when no review could be classified
    positive_share = sentiment['positive'] / total_sentiment * 100 if total_sentiment else 0.0

    print("\n=== Key Insights ===")
    print(f"Product rating: {overview['overall_rating']}/5.0")
    print(f"Total reviews: {overview['total_reviews']}")
    print(f"Positive review share: {positive_share:.1f}%")
    print("\nMost frequently mentioned keywords:")
    for keyword, freq in list(detailed_analysis['keyword_analysis'].items())[:5]:
        print(f"  - {keyword}: {freq} mentions")
    print("\nAnalysis complete! Report files have been generated.")


if __name__ == "__main__":
    complete_analysis_workflow()
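The positive-share figure in the summary divides by the total number of classified reviews, which is zero when nothing could be classified. A small helper, sketched under the assumption that `sentiment_analysis` is a dict of counts keyed by `positive`, `negative` and `neutral` (as the summary code implies), keeps that guard in one place; `sentiment_share` is a hypothetical name:

```python
def sentiment_share(sentiment, label):
    """Return the share (0.0 to 1.0) of reviews carrying the given sentiment label.

    Assumes `sentiment` maps labels such as 'positive', 'negative' and
    'neutral' to integer counts. Returns 0.0 when there are no counts at all.
    """
    total = sum(sentiment.values())
    if total == 0:
        return 0.0
    return sentiment.get(label, 0) / total


counts = {'positive': 30, 'negative': 10, 'neutral': 10}
print(f"{sentiment_share(counts, 'positive'):.1%}")  # 60.0%
```

The same helper can serve the negative-ratio checks used in the monitoring examples.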

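Part 7: Target Users and Use Cases

7.1 Target User Profiles

As a dedicated Amazon review collection tool, Pangolin Scrape API is particularly well suited to the following types of users:

1. E-commerce sellers and brand owners
- Need to monitor feedback on their own products regularly
- Want to understand how competitors' products are performing
- Are looking for data-driven directions for product improvement

2. Data analysts and market researchers
- Need large volumes of accurate consumer feedback data
- Conduct industry trend analysis and market research
- Build consumer behavior prediction models

3. Product managers and development teams
- Use customer reviews to guide product iterations
- Validate how well the market accepts product features
- Identify product pain points and opportunities for improvement

4. Marketing teams and ad optimizers
- Understand the real needs of target consumers
- Optimize product copy and marketing strategy
- Monitor customer feedback on marketing campaigns

5. Investment firms and consulting companies
- Assess the market performance of investment targets
- Analyze the competitive landscape of an industry
- Give clients data-based strategic recommendations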

7.2 Use Cases in Depth

Use case 1: Market research before a new product launch

from urllib.parse import quote_plus


def pre_launch_market_research(category_keywords, pangolin_client):
    """Market research before launching a new product."""
    research_report = {
        'market_size_estimation': {},
        'competitor_landscape': {},
        'consumer_pain_points': [],
        'opportunity_gaps': [],
        'pricing_insights': {},
        'launch_recommendations': []
    }

    # Search for related products by keyword
    for keyword in category_keywords:
        # URL-encode the keyword so multi-word queries produce a valid search URL
        search_url = f"https://www.amazon.com/s?k={quote_plus(keyword)}"
        # Fetch search results via the Pangolin API (a keyword-search parser must be configured)
        search_results = pangolin_client.scrape_keyword_results(search_url)
        if not search_results:
            continue

        # Analyze the top 20 products
        top_products = search_results['products'][:20]
        for product in top_products:
            product_analysis = pangolin_client.scrape_product_details_with_reviews(product['url'])
            if not product_analysis:
                continue

            # Accumulate market insights
            product_info = product_analysis['product_info']
            research_report['competitor_landscape'][product['asin']] = {
                'rating': product_info['rating'],
                'review_count': product_info['review_count'],
                'price': product_info['price']
            }

            # Collect consumer pain points from reviews rated 3 stars or lower
            for review in product_analysis['reviews']:
                if review['rating'] <= 3:
                    research_report['consumer_pain_points'].append(review['content'])

    return research_report

def identify_market_opportunities(research_data):
    """Identify market opportunities from the research report."""
    opportunities = []

    # Look for price gaps; prices may be strings such as "$1,299.99", so strip "$" and ","
    prices = []
    for comp in research_data['competitor_landscape'].values():
        if not comp['price']:
            continue
        try:
            prices.append(float(str(comp['price']).replace('$', '').replace(',', '')))
        except ValueError:
            pass  # skip prices that cannot be parsed (e.g. "N/A")

    if prices:
        price_gaps = []
        sorted_prices = sorted(prices)
        for i in range(len(sorted_prices) - 1):
            gap = sorted_prices[i + 1] - sorted_prices[i]
            if gap > sorted_prices[i] * 0.3:  # gap larger than 30% of the lower price
                price_gaps.append((sorted_prices[i], sorted_prices[i + 1]))
        if price_gaps:
            opportunities.append({
                'type': 'pricing_gap',
                'description': 'Price-gap opportunities found',
                'details': price_gaps,
                'recommendation': 'Consider positioning the product inside a price gap'
            })

    # Look for missing features
    pain_points_text = ' '.join(research_data['consumer_pain_points'])
    common_complaints = extract_common_complaints(pain_points_text)
    for complaint in common_complaints[:5]:  # the 5 most common complaints
        opportunities.append({
            'type': 'feature_gap',
            'description': f'Common consumer complaint: {complaint["issue"]}',
            'frequency': complaint['count'],
            'recommendation': f'Product design should prioritize fixing: {complaint["issue"]}'
        })

    return opportunities

def extract_common_complaints(text):
    """Count common complaint categories by case-insensitive substring matching."""
    complaint_keywords = {
        'Quality issues': ['quality', 'broken', 'defective', 'poor quality', 'cheap'],
        'Sizing issues': ['too small', 'too big', 'size', 'fit'],
        'Hard to use': ['difficult', 'hard to use', 'complicated', 'confusing'],
        'Price too high': ['expensive', 'overpriced', 'costly', 'not worth'],
        'Shipping issues': ['shipping', 'delivery', 'packaging', 'damaged in transit']
    }
    lowered = text.lower()
    complaints = []
    for issue, keywords in complaint_keywords.items():
        # Substring counts: overlapping phrases ("quality" / "poor quality") are each counted
        count = sum(lowered.count(keyword) for keyword in keywords)
        if count > 0:
            complaints.append({'issue': issue, 'count': count})
    return sorted(complaints, key=lambda x: x['count'], reverse=True)
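The price-gap rule in `identify_market_opportunities` is easiest to verify in isolation. The sketch below restates the same rule (a gap counts when the next higher price is more than 30% above the previous one) and adds a parser that assumes prices arrive as strings like `"$1,299.99"`; both helper names are hypothetical:

```python
def parse_price(raw):
    """Convert a price such as '$1,299.99' to a float; return None if it cannot be parsed."""
    try:
        return float(str(raw).replace('$', '').replace(',', '').strip())
    except ValueError:
        return None


def find_price_gaps(prices, threshold=0.3):
    """Return (lower, upper) pairs of adjacent sorted prices whose gap exceeds threshold * lower."""
    sorted_prices = sorted(prices)
    gaps = []
    for low, high in zip(sorted_prices, sorted_prices[1:]):
        if high - low > low * threshold:
            gaps.append((low, high))
    return gaps


raw_prices = ["$19.99", "$21.50", "$34.00", "N/A", "$1,299.99"]
prices = [p for p in (parse_price(r) for r in raw_prices) if p is not None]
print(find_price_gaps(prices))  # [(21.5, 34.0), (34.0, 1299.99)]
```

Because the threshold is relative to the lower price, the same absolute gap matters more in a cheap category than in an expensive one.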

Use case 2: Brand reputation monitoring and crisis alerts

import json
from datetime import datetime


class BrandReputationMonitor:
    def __init__(self, pangolin_client):
        self.client = pangolin_client
        self.alert_thresholds = {
            'rating_drop': 0.2,               # rating drop > 0.2 (needs a previous snapshot; not checked below)
            'negative_sentiment_spike': 0.3,  # negative sentiment share above 30%
            'review_volume_drop': 0.5         # review volume drop > 50% (needs a previous snapshot; not checked below)
        }

    def monitor_brand_products(self, product_asins, brand_name):
        """Monitor the performance of a brand's products and collect alerts."""
        current_snapshot = {
            'timestamp': datetime.now().isoformat(),
            'brand': brand_name,
            'products': {},
            'alerts': [],
            'summary_metrics': {}
        }
        all_ratings = []
        all_sentiment_scores = []
        total_reviews = 0
        analyzer = ComprehensiveReviewAnalysis(self.client)

        for asin in product_asins:
            print(f"Monitoring product: {asin}")
            product_url = f"https://www.amazon.com/dp/{asin}"

            # Fetch the current data; skip products that could not be retrieved
            current_data = self.client.scrape_product_details_with_reviews(product_url)
            if not current_data:
                continue

            # Analyze the current state
            current_analysis = analyzer.get_complete_review_analysis(product_url)
            if not current_analysis:
                continue

            product_alerts = self.check_product_alerts(asin, current_analysis)
            current_snapshot['products'][asin] = {
                'current_data': current_analysis,
                'alerts': product_alerts
            }
            # Surface product-level alerts in the snapshot's alert list as well
            current_snapshot['alerts'].extend(product_alerts)

            # Accumulate brand-wide metrics
            all_ratings.append(current_analysis['product_overview']['overall_rating'])
            sentiment = current_analysis['sentiment_analysis']
            total_sentiment = sum(sentiment.values())
            if total_sentiment > 0:
                all_sentiment_scores.append(sentiment['negative'] / total_sentiment)
            total_reviews += current_analysis['product_overview']['total_reviews']

        # Compute brand-wide metrics
        if all_ratings:
            current_snapshot['summary_metrics'] = {
                'average_brand_rating': sum(all_ratings) / len(all_ratings),
                'average_negative_sentiment': (
                    sum(all_sentiment_scores) / len(all_sentiment_scores) if all_sentiment_scores else 0
                ),
                'total_brand_reviews': total_reviews,
                'products_monitored': len(product_asins)
            }
            # Generate brand-level alerts
            current_snapshot['alerts'].extend(self.generate_brand_alerts(current_snapshot))

        return current_snapshot

    def check_product_alerts(self, asin, current_analysis):
        """Check a single product for alert conditions."""
        alerts = []

        # Rating alert
        current_rating = current_analysis['product_overview']['overall_rating']
        if current_rating < 3.5:
            alerts.append({
                'type': 'low_rating',
                'severity': 'high' if current_rating < 3.0 else 'medium',
                'message': f'Product {asin} has a low rating: {current_rating}/5.0',
                'recommendation': 'Investigate product quality urgently; consider improving or delisting the product'
            })

        # Negative sentiment alert
        sentiment = current_analysis['sentiment_analysis']
        total_sentiment = sum(sentiment.values())
        if total_sentiment > 0:
            negative_ratio = sentiment['negative'] / total_sentiment
            if negative_ratio > self.alert_thresholds['negative_sentiment_spike']:
                alerts.append({
                    'type': 'negative_sentiment_spike',
                    'severity': 'high',
                    'message': f'Product {asin} has a high negative sentiment share: {negative_ratio:.1%}',
                    'recommendation': 'Analyze the causes of negative reviews immediately and plan a response'
                })

        # Warning keyword alert
        keywords = current_analysis.get('keyword_analysis', {})
        warning_keywords = ['defective', 'broken', 'scam', 'fake', 'terrible', 'worst']
        for keyword in warning_keywords:
            if keywords.get(keyword, 0) > 5:
                alerts.append({
                    'type': 'negative_keyword_spike',
                    'severity': 'medium',
                    'message': f'Negative keyword "{keyword}" is mentioned frequently for product {asin} ({keywords[keyword]} times)',
                    'recommendation': f'Look into product issues related to "{keyword}"'
                })

        return alerts

    def generate_brand_alerts(self, snapshot):
        """Generate brand-level alerts."""
        brand_alerts = []
        metrics = snapshot['summary_metrics']
        if not metrics:
            return brand_alerts  # no product could be analyzed

        # Overall brand rating alert
        if metrics['average_brand_rating'] < 3.8:
            brand_alerts.append({
                'type': 'brand_rating_concern',
                'severity': 'high',
                'message': f'Overall brand rating is low: {metrics["average_brand_rating"]:.2f}/5.0',
                'recommendation': 'Systematically improve product quality and user experience'
            })

        # Brand negative sentiment alert
        if metrics['average_negative_sentiment'] > 0.25:
            brand_alerts.append({
                'type': 'brand_sentiment_concern',
                'severity': 'medium',
                'message': f'Brand negative sentiment share is high: {metrics["average_negative_sentiment"]:.1%}',
                'recommendation': 'Strengthen customer service and after-sales support'
            })

        return brand_alerts

    def generate_monitoring_report(self, snapshot, output_format='html'):
        """Generate a monitoring report in 'html', 'json' or plain-text format."""
        if output_format == 'html':
            return self.generate_html_report(snapshot)
        elif output_format == 'json':
            # default=str keeps non-JSON values (e.g. datetimes) from raising
            return json.dumps(snapshot, indent=2, ensure_ascii=False, default=str)
        else:
            return self.generate_text_report(snapshot)

    def generate_text_report(self, snapshot):
        """Generate a plain-text monitoring report."""
        metrics = snapshot.get('summary_metrics', {})
        lines = [
            f"Brand reputation report - {snapshot['brand']}",
            f"Monitored at: {snapshot['timestamp']}",
            f"Average rating: {metrics.get('average_brand_rating', 0):.2f}/5.0",
            f"Total reviews: {metrics.get('total_brand_reviews', 0):,}",
            f"Negative sentiment share: {metrics.get('average_negative_sentiment', 0):.1%}",
            "Alerts:",
        ]
        for alert in snapshot['alerts']:
            lines.append(f"  [{alert['severity'].upper()}] {alert['message']}")
        return "\n".join(lines)

    def generate_html_report(self, snapshot):
        """Generate the monitoring report as HTML."""
        # CSS braces are doubled ({{ }}) so that str.format() leaves them intact
        html_template = """<!DOCTYPE html>
        <html>
        <head>
        <meta charset="UTF-8">
        <title>Brand Reputation Monitoring Report - {brand}</title>
        <style>
        body {{ font-family: 'Microsoft YaHei', Arial, sans-serif; margin: 20px; }}
        .header {{ background-color: #f8f9fa; padding: 20px; border-radius: 5px; }}
        .alert-high {{ background-color: #f8d7da; color: #721c24; padding: 10px; margin: 10px 0; border-radius: 3px; }}
        .alert-medium {{ background-color: #fff3cd; color: #856404; padding: 10px; margin: 10px 0; border-radius: 3px; }}
        .metric {{ display: inline-block; margin: 10px 20px; }}
        .product-section {{ border: 1px solid #ddd; margin: 20px 0; padding: 15px; border-radius: 5px; }}
        </style>
        </head>
        <body>
        <div class="header">
        <h1>Brand Reputation Monitoring Report</h1>
        <p><strong>Brand:</strong> {brand}</p>
        <p><strong>Monitored at:</strong> {timestamp}</p>
        <p><strong>Products monitored:</strong> {product_count}</p>
        </div>
        <h2>Overall Brand Metrics</h2>
        <div class="metric"><strong>Average rating:</strong> {avg_rating:.2f}/5.0</div>
        <div class="metric"><strong>Total reviews:</strong> {total_reviews:,}</div>
        <div class="metric"><strong>Negative sentiment share:</strong> {neg_sentiment:.1%}</div>
        <h2>Alerts</h2>
        {alerts_html}
        <h2>Product Details</h2>
        {products_html}
        </body>
        </html>
        """

        # Build the alerts HTML
        alerts_html = ""
        for alert in snapshot['alerts']:
            alert_class = f"alert-{alert['severity']}"
            alerts_html += f"""
            <div class="{alert_class}">
            <strong>[{alert['severity'].upper()}]</strong> {alert['message']}<br>
            <strong>Recommendation:</strong> {alert['recommendation']}
            </div>
            """
        if not snapshot['alerts']:
            alerts_html = "<p>No alerts</p>"

        # Build the per-product HTML
        products_html = ""
        for asin, product_data in snapshot['products'].items():
            overview = product_data['current_data']['product_overview']
            products_html += f"""
            <div class="product-section">
            <h3>Product {asin}</h3>
            <p><strong>Title:</strong> {overview['title'][:100]}...</p>
            <p><strong>Rating:</strong> {overview['overall_rating']}/5.0</p>
            <p><strong>Reviews:</strong> {overview['total_reviews']:,}</p>
            <p><strong>Price:</strong> {overview['price_point']}</p>
            </div>
            """

        # Defaults keep the report renderable when no product could be analyzed
        metrics = snapshot.get('summary_metrics', {})
        return html_template.format(
            brand=snapshot['brand'],
            timestamp=snapshot['timestamp'],
            product_count=metrics.get('products_monitored', 0),
            avg_rating=metrics.get('average_brand_rating', 0),
            total_reviews=metrics.get('total_brand_reviews', 0),
            neg_sentiment=metrics.get('average_negative_sentiment', 0),
            alerts_html=alerts_html,
            products_html=products_html
        )


# Brand monitoring usage example

def setup_brand_monitoring():
    """Example of setting up brand monitoring."""
    # Initialize the monitoring system
    pangolin_client = PangolinAmazonReviewAPI("your-api-key")
    monitor = BrandReputationMonitor(pangolin_client)

    # Products to monitor, grouped by brand
    brand_products = {
        "Smart Home Brand A": ["B08N5WRWNW", "B07YN2ZZQC", "B09JQT5XLV"],
        "Electronics Brand B": ["B0DYTF8L2W", "B08XYZBQ3F", "B09KLM7NOP"]
    }

    # Run the monitoring
    for brand_name, product_asins in brand_products.items():
        print(f"Monitoring brand: {brand_name}")
        snapshot = monitor.monitor_brand_products(product_asins, brand_name)

        # Generate the report
        html_report = monitor.generate_monitoring_report(snapshot, 'html')

        # Save the report (spaces in the brand name are replaced to keep the filename simple)
        safe_name = brand_name.replace(' ', '_')
        report_filename = f"brand_monitor_{safe_name}_{datetime.now().strftime('%Y%m%d_%H%M%S')}.html"
        with open(report_filename, 'w', encoding='utf-8') as f:
            f.write(html_report)
        print(f"Brand monitoring report generated: {report_filename}")

        # Notify if there are any high-priority alerts
        high_priority_alerts = [alert for alert in snapshot['alerts'] if alert['severity'] == 'high']
        if high_priority_alerts:
            print(f"⚠️ Brand {brand_name} has {len(high_priority_alerts)} high-priority alert(s)!")
            for alert in high_priority_alerts:
                print(f"  - {alert['message']}")
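The `rating_drop` threshold defined in `BrandReputationMonitor` is never checked by the monitoring methods, because it needs an earlier snapshot to compare against. One way to apply it, sketched under the assumption that you persist each run's `summary_metrics` (for example via the JSON report) and reload the previous one, is a pure comparison function. `check_rating_drop` is a hypothetical helper, not part of the Pangolin API; `review_volume_drop` would follow the same pattern given a per-period review count:

```python
def check_rating_drop(previous_metrics, current_metrics, max_drop=0.2):
    """Return an alert dict if the average brand rating fell by more than max_drop, else None.

    Both arguments are assumed to be `summary_metrics` dicts containing
    'average_brand_rating', e.g. loaded from a previously saved JSON report.
    """
    previous = previous_metrics.get('average_brand_rating')
    current = current_metrics.get('average_brand_rating')
    if previous is None or current is None:
        return None  # not enough data to compare
    drop = previous - current
    if drop <= max_drop:
        return None
    return {
        'type': 'rating_drop',
        'severity': 'high',
        'message': f'Average brand rating fell from {previous:.2f} to {current:.2f}',
        'recommendation': 'Review the newest negative reviews to find what changed',
    }


alert = check_rating_drop({'average_brand_rating': 4.5}, {'average_brand_rating': 4.1})
print(alert['message'])  # Average brand rating fell from 4.50 to 4.10
```

The returned dict uses the same keys (`severity`, `message`, `recommendation`) as the other alerts, so it could be appended to `snapshot['alerts']` and rendered by the existing report methods.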

Use case 3: Decision support for product optimization

class ProductOptimizationAdvisor:
    def __init__(self, pangolin_client):
        self.client = pangolin_client

    def analyze_product_improvement_opportunities(self, product_url, competitor_urls=None):
        """Analyze improvement opportunities for a product."""
        improvement_report = {
            'current_product_analysis': {},
            'improvement_opportunities': [],
            'competitive_benchmarks': {},
            'implementation_roadmap': {},
            'expected_impact': {}
        }

        # Analyze the current product
        print("Analyzing the current product...")
        analyzer = ComprehensiveReviewAnalysis(self.client)
        current_analysis = analyzer.get_complete_review_analysis(product_url)
        if not current_analysis:
            return None
        improvement_report['current_product_analysis'] = current_analysis

        # Identify improvement opportunities
        improvement_report['improvement_opportunities'] = self.identify_improvement_opportunities(current_analysis)

        # Benchmark against competitors
        if competitor_urls:
            print("Analyzing competitors...")
            competitive_insights = self.analyze_competitive_benchmarks(competitor_urls)
            improvement_report['competitive_benchmarks'] = competitive_insights
            # Add opportunities derived from the competitor benchmarks
            competitive_opportunities = self.identify_competitive_opportunities(current_analysis, competitive_insights)
            improvement_report['improvement_opportunities'].extend(competitive_opportunities)

        # Build the implementation roadmap
        improvement_report['implementation_roadmap'] = self.create_implementation_roadmap(
            improvement_report['improvement_opportunities']
        )

        # Estimate the impact
        improvement_report['expected_impact'] = self.estimate_improvement_impact(
            current_analysis, improvement_report['improvement_opportunities']
        )
        return improvement_report

    def identify_improvement_opportunities(self, product_analysis):
        """Identify product improvement opportunities."""
        opportunities = []

        # Based on the rating distribution
        current_rating = product_analysis['product_overview']['overall_rating']
        if current_rating < 4.5:
            rating_dist = product_analysis['review_statistics']['rating_distribution']
            low_ratings = rating_dist.get(1, 0) + rating_dist.get(2, 0) + rating_dist.get(3, 0)
            total_ratings = sum(rating_dist.values())
            if total_ratings > 0:
                low_rating_ratio = low_ratings / total_ratings
                opportunities.append({
                    'category': 'rating_improvement',
                    'priority': 'high' if low_rating_ratio > 0.2 else 'medium',
                    'description': f'Raise the product rating from {current_rating:.1f} to 4.5+',
                    'current_state': f'{low_rating_ratio:.1%} of reviews are 3 stars or lower',
                    'target_improvement': 'Reduce the share of low-rated reviews to below 10%',
                    'estimated_effort': 'high'
                })

        # Based on negative keywords
        keywords = product_analysis.get('keyword_analysis', {})
        negative_keywords = ['broken', 'defective', 'poor', 'terrible', 'disappointing', 'useless']
        for neg_word in negative_keywords:
            if keywords.get(neg_word, 0) > 3:
                opportunities.append({
                    'category': 'quality_issue',
                    'priority': 'high',
                    'description': f'Fix quality problems related to "{neg_word}"',
                    'current_state': f'"{neg_word}" is mentioned {keywords[neg_word]} times in reviews',
                    'target_improvement': f'Reduce "{neg_word}" complaints to 0-1 mentions',
                    'estimated_effort': 'medium'
                })

        # Based on sentiment
        sentiment = product_analysis['sentiment_analysis']
        total_sentiment = sum(sentiment.values())
        if total_sentiment > 0:
            negative_ratio = sentiment['negative'] / total_sentiment
            if negative_ratio > 0.2:
                opportunities.append({
                    'category': 'user_experience',
                    'priority': 'medium',
                    'description': 'Improve the user experience and reduce negative sentiment',
                    'current_state': f'Negative sentiment share: {negative_ratio:.1%}',
                    'target_improvement': 'Reduce the negative sentiment share to below 15%',
                    'estimated_effort': 'medium'
                })

        # Based on the number of reviews
        review_count = product_analysis['product_overview']['total_reviews']
        if review_count < 50:
            opportunities.append({
                'category': 'social_proof',
                'priority': 'medium',
                'description': 'Increase social proof (number of reviews)',
                'current_state': f'Current number of reviews: {review_count}',
                'target_improvement': 'Grow the review count to 100+',
                'estimated_effort': 'low'
            })

        return opportunities

    def analyze_competitive_benchmarks(self, competitor_urls):
        """Analyze competitor benchmarks."""
        competitive_insights = {
            'competitor_analysis': [],
            'best_practices': [],
            'performance_gaps': []
        }
        analyzer = ComprehensiveReviewAnalysis(self.client)
        for i, competitor_url in enumerate(competitor_urls[:5]):  # analyze at most 5 competitors
            print(f"Analyzing competitor {i + 1}/{min(5, len(competitor_urls))}")
            competitor_analysis = analyzer.get_complete_review_analysis(competitor_url)
            if competitor_analysis:
                competitive_insights['competitor_analysis'].append({
                    'url': competitor_url,
                    'analysis': competitor_analysis
                })

        # Identify best practices
        competitive_insights['best_practices'] = self.identify_best_practices(competitive_insights['competitor_analysis'])
        return competitive_insights

    def identify_competitive_opportunities(self, current_analysis, competitive_insights):
        """Turn competitor benchmarks into improvement opportunities.

        Simple sketch (assumption): only compares overall ratings with each competitor.
        """
        opportunities = []
        current_rating = current_analysis['product_overview']['overall_rating']
        for comp in competitive_insights.get('competitor_analysis', []):
            comp_rating = comp['analysis']['product_overview']['overall_rating']
            if comp_rating > current_rating:
                opportunities.append({
                    'category': 'competitive_gap',
                    'priority': 'high' if comp_rating - current_rating >= 0.5 else 'medium',
                    'description': f'Close the rating gap with competitor {comp["url"]}',
                    'current_state': f'Our rating {current_rating:.1f} vs. competitor {comp_rating:.1f}',
                    'target_improvement': f'Reach a rating of at least {comp_rating:.1f}',
                    'estimated_effort': 'high'
                })
        return opportunities

    def identify_best_practices(self, competitor_analyses):
        """Identify best practices among competitors."""
        best_practices = []
        if not competitor_analyses:
            return best_practices

        # Find the best-rated competitor
        top_performer = max(
            competitor_analyses,
            key=lambda x: x['analysis']['product_overview']['overall_rating']
        )
        top_rating = top_performer['analysis']['product_overview']['overall_rating']
        if top_rating > 4.5:
            # Look at what customers praise in the highly rated product
            top_keywords = top_performer['analysis']['keyword_analysis']
            positive_keywords = ['excellent', 'amazing', 'perfect', 'great', 'love']
            for keyword in positive_keywords:
                if top_keywords.get(keyword, 0) > 5:
                    best_practices.append({
                        'category': 'positive_feedback',
                        'description': f'The top-rated competitor is often described as "{keyword}"',
                        'frequency': top_keywords[keyword],
                        'recommendation': f'Emphasize the "{keyword}" quality in your product'
                    })

        # Analyze pricing; prices such as "$1,299.99" are stripped of "$" and ","
        prices = []
        for comp in competitor_analyses:
            price_str = str(comp['analysis']['product_overview']['price_point']).replace('$', '').replace(',', '')
            try:
                prices.append(float(price_str))
            except ValueError:
                pass  # skip prices that cannot be parsed
        if prices:
            avg_price = sum(prices) / len(prices)
            best_practices.append({
                'category': 'pricing_strategy',
                'description': f'Average competitor price: ${avg_price:.2f}',
                'recommendation': 'Take the market average into account when setting the price'
            })

        return best_practices

    def create_implementation_roadmap(self, opportunities):
        """Create an implementation roadmap."""
        priority_order = {'high': 3, 'medium': 2, 'low': 1}
        effort_order = {'low': 1, 'medium': 2, 'high': 3}
        # Sort by priority (high first), then by effort (low first)
        sorted_opportunities = sorted(
            opportunities,
            key=lambda x: (-priority_order.get(x['priority'], 0),
                           effort_order.get(x.get('estimated_effort', 'medium'), 2))
        )

        roadmap = {
            'phase1_immediate': [],    # 0-1 months: high priority, low effort
            'phase2_short_term': [],   # 1-3 months: high priority, medium effort
            'phase3_medium_term': [],  # 3-6 months: high effort or medium priority
            'phase4_long_term': []     # 6+ months: low priority
        }
        for opp in sorted_opportunities:
            effort = opp.get('estimated_effort')
            if opp['priority'] == 'high' and effort == 'low':
                roadmap['phase1_immediate'].append(opp)
            elif opp['priority'] == 'high' and effort == 'medium':
                roadmap['phase2_short_term'].append(opp)
            elif opp['priority'] in ('high', 'medium') or effort == 'high':
                roadmap['phase3_medium_term'].append(opp)
            else:
                roadmap['phase4_long_term'].append(opp)
        return roadmap

    def estimate_improvement_impact(self, current_analysis, opportunities):
        """Estimate the impact of the improvements (rough heuristics, not a forecast)."""
        current_rating = current_analysis['product_overview']['overall_rating']
        current_reviews = current_analysis['product_overview']['total_reviews']
        impact_estimation = {
            'rating_improvement': {
                'current': current_rating,
                'potential': min(5.0, current_rating + 0.5),  # conservative assumption: +0.5 stars
                'confidence': 'medium'
            },
            'review_growth': {
                'current': current_reviews,
                'potential': int(current_reviews * 1.3),  # assumed 30% growth
                'confidence': 'low'
            },
            'business_impact': {},
            'timeline': '6-12 months'
        }

        # Compute the business impact
        rating_lift = impact_estimation['rating_improvement']['potential'] - current_rating
        review_lift = impact_estimation['review_growth']['potential'] - current_reviews

        # Simplified conversion-rate model (real applications need proper modeling)
        if current_reviews > 0:
            conversion_lift = rating_lift * 0.1 + (review_lift / current_reviews) * 0.05
        else:
            conversion_lift = 0.05

        impact_estimation['business_impact'] = {
            'estimated_conversion_lift': f'{conversion_lift:.1%}',
            'estimated_revenue_impact': 'medium',
            'risk_assessment': 'low'
        }
        return impact_estimation

# Product optimization usage example

import json


def run_product_optimization_analysis():
    """Run the product optimization analysis."""
    # Initialize the optimization advisor
    pangolin_client = PangolinAmazonReviewAPI("your-api-key")
    advisor = ProductOptimizationAdvisor(pangolin_client)

    # The product to optimize and its competitors
    target_product_url = "https://www.amazon.com/dp/B0DYTF8L2W"
    competitor_urls = [
        "https://www.amazon.com/dp/B08N5WRWNW",
        "https://www.amazon.com/dp/B07YN2ZZQC",
        "https://www.amazon.com/dp/B09JQT5XLV"
    ]

    print("Starting product optimization analysis...")
    # Run the full analysis
    optimization_report = advisor.analyze_product_improvement_opportunities(
        target_product_url,
        competitor_urls
    )
    if not optimization_report:
        print("Analysis failed")
        return

    print("\n=== Product Optimization Summary ===")
    current_rating = optimization_report['current_product_analysis']['product_overview']['overall_rating']
    print(f"Current product rating: {current_rating}/5.0")
    opportunities = optimization_report['improvement_opportunities']
    print(f"Improvement opportunities identified: {len(opportunities)}")
    high_priority = [opp for opp in opportunities if opp['priority'] == 'high']
    print(f"High-priority items: {len(high_priority)}")

    print("\n=== Top Priority Improvements ===")
    for i, opp in enumerate(high_priority[:3], 1):
        print(f"{i}. {opp['description']}")
        print(f"   Current state: {opp['current_state']}")
        print(f"   Target: {opp['target_improvement']}")
        print()

    print("=== Implementation Roadmap ===")
    roadmap = optimization_report['implementation_roadmap']
    if roadmap['phase1_immediate']:
        print("Phase 1 (0-1 months):")
        for opp in roadmap['phase1_immediate']:
            print(f"  - {opp['description']}")
    if roadmap['phase2_short_term']:
        print("Phase 2 (1-3 months):")
        for opp in roadmap['phase2_short_term'][:2]:  # show the first 2
            print(f"  - {opp['description']}")

    # Save the detailed report (default=str handles values JSON cannot serialize)
    with open('product_optimization_report.json', 'w', encoding='utf-8') as f:
        json.dump(optimization_report, f, indent=2, ensure_ascii=False, default=str)
    print("\nDetailed report saved to: product_optimization_report.json")

    # Estimated impact
    impact = optimization_report['expected_impact']
    potential_rating = impact['rating_improvement']['potential']
    print("\nEstimated impact:")
    print(f"  Rating potential: {current_rating:.1f} → {potential_rating:.1f}")
    print(f"  Estimated conversion lift: {impact['business_impact']['estimated_conversion_lift']}")


if __name__ == "__main__":
    run_product_optimization_analysis()
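The roadmap is meant to put high-priority, low-effort work first. A standalone sketch of that ordering (high priority first, then low effort first within the same priority), using rank tables like the ones in `create_implementation_roadmap` and made-up sample opportunities, makes the intended order easy to check:

```python
PRIORITY_RANK = {'high': 3, 'medium': 2, 'low': 1}
EFFORT_RANK = {'low': 1, 'medium': 2, 'high': 3}


def order_opportunities(opportunities):
    """Sort by priority (high first), then by estimated effort (low first)."""
    return sorted(
        opportunities,
        key=lambda o: (-PRIORITY_RANK.get(o['priority'], 0),
                       EFFORT_RANK.get(o.get('estimated_effort', 'medium'), 2)),
    )


# Hypothetical opportunities, for illustration only
sample = [
    {'description': 'Add more reviews', 'priority': 'medium', 'estimated_effort': 'low'},
    {'description': 'Fix defects', 'priority': 'high', 'estimated_effort': 'medium'},
    {'description': 'Raise rating', 'priority': 'high', 'estimated_effort': 'high'},
]
print([o['description'] for o in order_opportunities(sample)])
# ['Fix defects', 'Raise rating', 'Add more reviews']
```

Negating the priority rank inside the key gives "descending priority, ascending effort" in a single ascending sort.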

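Part 8: Summary and Outlook

8.1 The Technical Evolution of Amazon Review Scrapers

The analysis in this article shows that Amazon review scraping is going through an important evolution. Traditional methods of scraping Amazon reviews with Python still have some value, but they face growing technical and policy challenges.

Limitations of traditional methods:

- Rising technical barriers: Amazon keeps upgrading its anti-scraping mechanisms, with more sophisticated CAPTCHA systems, behavioral analysis algorithms, and IP blocking policies.
- Tighter data access: full review data requires a logged-in session; anonymous users can see only a limited set of reviews.
- Rising maintenance costs: a self-built scraping system must be updated continually to keep up with platform policy changes, which makes maintenance expensive.
- Growing compliance risk: legal requirements for data collection are becoming stricter and call for more professional technical solutions.

8.2 The Growing Value of Professional API Services

Against this background, professional Amazon review collection tools such as Pangolin Scrape API offer clear advantages:

Core value:

- Technical expertise: a dedicated team continuously refines how the service handles anti-scraping measures, keeping data collection stable and complete.
- Scalability: large-scale concurrent processing meets enterprise-level data needs, handling millions of pages per day.
- Cost-effectiveness: compared with the staffing and technical investment of an in-house team, a professional API service has a clear cost advantage.
- Compliance: professional service providers are better equipped to ensure data compliance.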

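8.3 Which Companies Benefit Most

Based on our analysis, Pangolin Scrape API is particularly suitable for the following types of companies and individuals:

Large and mid-sized e-commerce sellers
- Have multiple product lines and need to monitor product performance regularly
- Have a dedicated data analysis team or technical staff
- Want to optimize products and marketing strategy with data
- Are looking for a differentiated path out of homogeneous competition

Seller tool developers
- Provide data analysis services to other sellers
- Need large volumes of stable data to power product features
- Want to avoid depending on traditional tools such as SellerSprite (卖家精灵)
- Want to build their own data analysis capabilities

Market research firms
- Need accurate and comprehensive consumer feedback data
- Study industry trends and competitive landscapes
- Provide clients with consulting services based on real data

Brand owners and manufacturers
- Want an in-depth understanding of real feedback from end consumers
- Need to monitor brand reputation and product performance
- Need data to support product improvement and innovation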

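8.4 Implementation Recommendations and Best Practices

For companies planning to use Amazon review scraping, we offer the following recommendations:

1. Choosing a technical approach

For beginners or small-scale needs:
- Start with a basic Python scraper to learn the underlying principles
- Focus on mastering data parsing and cleaning techniques
- Keep the request rate low to avoid being blocked by the platform

For enterprise applications:
- We strongly recommend a professional service such as Pangolin Scrape API
- Focus investment on data analysis and business applications
- Build a complete data processing and analysis pipeline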
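For the small-scale, self-built route, keeping the request rate low usually means enforcing a minimum delay plus random jitter between requests. A minimal sketch, assuming a single-threaded scraper; the `RateLimiter` class and its parameter values are illustrative, and the clock and sleep functions are injectable so the timing logic can be tested without actually waiting:

```python
import random
import time


class RateLimiter:
    """Enforce a minimum interval, plus random jitter, between successive wait() calls."""

    def __init__(self, min_interval=2.0, max_jitter=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.max_jitter = max_jitter
        self.clock = clock
        self.sleep = sleep
        self._last = None  # time of the previous request, None before the first one

    def wait(self):
        """Block until the next request is allowed; return the number of seconds slept."""
        now = self.clock()
        slept = 0.0
        if self._last is not None:
            target = self.min_interval + random.uniform(0, self.max_jitter)
            remaining = target - (now - self._last)
            if remaining > 0:
                self.sleep(remaining)
                slept = remaining
        self._last = self.clock()
        return slept


# Usage: call limiter.wait() before every request
limiter = RateLimiter(min_interval=2.0, max_jitter=1.0)
# for url in urls:
#     limiter.wait()
#     response = session.get(url, headers=headers, timeout=10)
```

The jitter makes request timing less regular; the right interval for any given site is an assumption you would need to tune.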

2. Data application strategy

Build a data-driven decision-making mechanism:

class DataDrivenDecisionFramework:
    def __init__(self, pangolin_client):
        self.client = pangolin_client
        self.decision_rules = self.setup_decision_rules()

    def setup_decision_rules(self):
        """Define the decision rules."""
        return {
            'product_launch': {
                'market_validation': {
                    'min_competitor_rating': 3.5,
                    'max_negative_sentiment': 0.3,
                    'min_review_sample': 100
                },
                'pricing_strategy': {
                    'price_gap_threshold': 0.2,
                    'value_perception_keywords': ['value', 'worth', 'price']
                }
            },
            'product_optimization': {
                'urgency_indicators': {
                    'rating_below': 3.5,
                    'negative_spike_threshold': 0.4,
                    'complaint_frequency': 10
                },
                'improvement_priorities': {
                    'quality_issues': ['broken', 'defective', 'poor quality'],
                    'usability_issues': ['difficult', 'confusing', 'complicated'],
                    'value_issues': ['expensive', 'overpriced', 'not worth']
                }
            },
            'marketing_optimization': {
                'messaging_insights': {
                    'positive_attributes': ['excellent', 'amazing', 'perfect'],
                    'competitive_advantages': ['better than', 'superior', 'best']
                },
                'target_audience': {
                    'user_segments': ['beginner', 'professional', 'family']
                }
            }
        }

    def generate_actionable_insights(self, product_data):
        """Generate actionable insights."""
        insights = {
            'immediate_actions': [],
            'strategic_recommendations': [],
            'monitoring_alerts': []
        }
        rules = self.decision_rules['product_optimization']

        # Apply the decision rules to the data
        rating = product_data['product_overview']['overall_rating']
        if rating < rules['urgency_indicators']['rating_below']:
            insights['immediate_actions'].append({
                'action': 'emergency_review',
                'description': 'Product rating is too low; an urgent review is needed',
                'timeline': 'immediately',
                'responsible_team': 'Product team'
            })

        # Check keyword frequencies
        keywords = product_data.get('keyword_analysis', {})
        for issue_keyword in rules['improvement_priorities']['quality_issues']:
            if keywords.get(issue_keyword, 0) > 5:
                insights['strategic_recommendations'].append({
                    'recommendation': f'Resolve issues related to "{issue_keyword}"',
                    'priority': 'high',
                    'expected_impact': 'rating_improvement',
                    'timeline': '1-2 months'
                })

        return insights

    def create_action_plan(self, insights):
        """Create an action plan."""
        action_plan = {
            'week_1': [],
            'month_1': [],
            'quarter_1': [],
            'ongoing': []
        }

        # Assign action items to the timeline
        for action in insights['immediate_actions']:
            action_plan['week_1'].append(action)
        for recommendation in insights['strategic_recommendations']:
            timeline = recommendation.get('timeline', '')
            if timeline == '1-2 months':
                action_plan['month_1'].append(recommendation)
            elif 'quarter' in timeline:
                action_plan['quarter_1'].append(recommendation)
            else:
                action_plan['ongoing'].append(recommendation)

        return action_plan
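The quality-issue rules include multi-word phrases such as `poor quality`, which only match if `keyword_analysis` stores phrases as keys. If it stores single words, counting the rule phrases directly in the review texts is an alternative. This sketch assumes reviews are available as plain strings (`count_phrases` is a hypothetical helper) and uses regular-expression word boundaries so that, for example, `broken` does not match inside `unbroken`:

```python
import re


def count_phrases(review_texts, phrases):
    """Count case-insensitive, whole-word occurrences of each phrase across all reviews."""
    counts = {}
    for phrase in phrases:
        # \b anchors the phrase at word boundaries; re.escape neutralizes special characters
        pattern = re.compile(r'\b' + re.escape(phrase) + r'\b', re.IGNORECASE)
        counts[phrase] = sum(len(pattern.findall(text)) for text in review_texts)
    return counts


reviews = ["Poor quality, arrived broken.", "Broken again! Unbroken seal though."]
print(count_phrases(reviews, ['broken', 'defective', 'poor quality']))
# {'broken': 2, 'defective': 0, 'poor quality': 1}
```

The result has the same shape as `keyword_analysis` (phrase to count), so it could be passed in its place when checking the rules.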

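3. Building team capabilities

Data analysis skills:
- Develop the team's ability to interpret and analyze data
- Establish standard data processing workflows and tools
- Provide regular training on data-driven decision-making

Technical integration skills:
- Master API integration and data processing techniques
- Build automated data collection and analysis pipelines
- Develop troubleshooting and problem-solving skills

4. Compliance and risk management

Compliant data use:
- Make sure data use complies with the platform's terms of service
- Put data security and privacy protection mechanisms in place
- Regularly review changes to data usage policies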
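One concrete privacy measure is to avoid storing reviewer names in clear text. A minimal sketch, assuming each review record is a dict with a hypothetical `reviewer_name` field: the name is replaced by a keyed HMAC-SHA256 token, so the same reviewer still maps to the same token for analysis, but the token cannot be linked back to a name without the secret key:

```python
import hashlib
import hmac


def pseudonymize_reviews(reviews, secret_key, field='reviewer_name'):
    """Return copies of the review dicts with `field` replaced by a keyed hash token."""
    result = []
    for review in reviews:
        cleaned = dict(review)  # copy so the caller's data is not modified
        value = cleaned.get(field)
        if value is not None:
            digest = hmac.new(secret_key, str(value).encode('utf-8'), hashlib.sha256).hexdigest()
            cleaned[field] = digest[:16]  # a shortened token is enough to group reviews by author
        result.append(cleaned)
    return result


key = b'load-this-from-a-secret-store'  # placeholder; never hard-code a real key
rows = pseudonymize_reviews([{'reviewer_name': 'Alice', 'rating': 5}], key)
print(rows[0]['rating'], len(rows[0]['reviewer_name']))  # 5 16
```

A keyed hash is used instead of a plain hash because common names could otherwise be recovered by hashing a list of candidate names.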

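Risk control:
- Build a multi-channel data acquisition strategy
- Avoid over-reliance on a single data source
- Prepare contingency plans for data service outages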
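Avoiding dependence on a single data source can start with trying several sources in order and falling back when one fails. In this sketch each fetcher is any callable that takes a URL and returns a dict, or raises or returns an empty result on failure; the function name, retry count and delay are assumptions, not a documented Pangolin feature:

```python
import time


def fetch_with_fallback(url, fetchers, retries_per_source=2, delay=1.0, sleep=time.sleep):
    """Try each (name, fetcher) pair in order and return (name, data) from the first success.

    A fetcher counts as failed if it raises an exception or returns a falsy value.
    Raises RuntimeError when every source has failed.
    """
    errors = []
    for name, fetcher in fetchers:
        for attempt in range(1, retries_per_source + 1):
            try:
                data = fetcher(url)
                if data:
                    return name, data
                errors.append(f"{name} attempt {attempt}: empty result")
            except Exception as exc:  # any source error triggers a retry or the next source
                errors.append(f"{name} attempt {attempt}: {exc}")
            if attempt < retries_per_source:
                sleep(delay)  # back off before retrying the same source
    raise RuntimeError("All data sources failed: " + "; ".join(errors))


# Hypothetical sources for illustration: the primary one is down, the backup works
def primary_source(url):
    raise ConnectionError("service unavailable")


def backup_source(url):
    return {'url': url, 'reviews': []}


source, data = fetch_with_fallback(
    "https://www.amazon.com/dp/B0DYTF8L2W",
    [("primary", primary_source), ("backup", backup_source)],
    sleep=lambda seconds: None,  # skip real waiting in this demo
)
print(source)  # backup
```

Collecting the error messages makes the final exception useful for the contingency plan: it shows which sources failed and why.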

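8.5 Future Trends

Technology directions

- AI-enhanced analysis: future Amazon review scrapers will integrate more AI, offering deeper semantic analysis, sentiment recognition, and trend forecasting.
- Real-time processing: data collection will move toward real time, with minute-level updates and alerts.
- Multi-platform integration: beyond Amazon, data from more e-commerce platforms will be combined to give a panoramic view of the market.
- Better visualization: data presentation will become more intuitive and interactive, with customizable dashboards and report generation.

Expanding use cases

- Supply chain optimization: use review data to anticipate shifts in demand and optimize inventory management.
- Product innovation: use customer feedback to guide new product development.
- Customer service optimization: analyze the problems customers raise in reviews to improve customer service strategy.
- Automated brand management: build a data-based brand reputation management system.

8.6 Conclusion

Amazon review scraping, an important tool for modern e-commerce data analysis, is evolving from simple data collection into an intelligent business-insight platform. Traditional Python methods for scraping Amazon reviews are still worth learning, but for companies that want to stand out in fierce competition, choosing a professional Amazon review collection tool such as Pangolin Scrape API has become the inevitable trend.

This is not only a technical choice but also a business-strategy choice. In a data-driven business environment, whoever obtains and analyzes market data faster and more accurately gains the upper hand. With its strong technical capabilities, complete data coverage, and professional support, Pangolin Scrape API lets companies move beyond the limits of traditional tools and build their own data analysis capabilities.

For companies that want to use customized data analysis to escape homogeneous, cut-throat competition, now is the best time to act. As platform policies keep tightening and competition intensifies, companies that adopt advanced data collection technology early will gain a competitive advantage that is hard to replicate.

Whether you are an e-commerce seller, a data analyst, or a product manager, mastering modern Amazon review scraping techniques will give strong support to your career and your business. In an era where data is king, make data your most powerful weapon, and Pangolin Scrape API will be the sharpest sword in your hand.

About Pangolin

Pangolin specializes in e-commerce data collection API services, with data collection solutions for major e-commerce platforms including Amazon, Walmart, Shopify, Shopee, and eBay. Our Scrape API and Data Pilot products provide companies worldwide with stable, efficient, and compliant data services, helping them succeed in a data-driven business environment.

For more information or to request an API trial, visit: www.pangolinfo.com

The code examples in this article are for learning and reference only. When using them in practice, make sure you comply with applicable laws and regulations and with the platform's terms of service.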