There is a number that separates winning Amazon sellers from the ones grinding through dead inventory cycles: twelve hours. That is the average delay between when a market event happens on Amazon — a top competitor runs out of stock, a holiday demand wave begins, a pricing war ignites — and when that event shows up in the daily-update SaaS tools that most small sellers rely on.

In twelve hours, a large seller’s data team has already spotted the signal, reallocated ad budget, and captured the traffic lift. By the time a small seller opens Jungle Scout the next morning, the window is closed.

This is the data gap. It is not a funding gap or a supply chain gap. It is a time resolution gap — and it is the reason enterprise-scale sellers consistently outperform smaller competitors in trend-sensitive categories like POD wall art, seasonal home decor, and fast-moving lifestyle products.

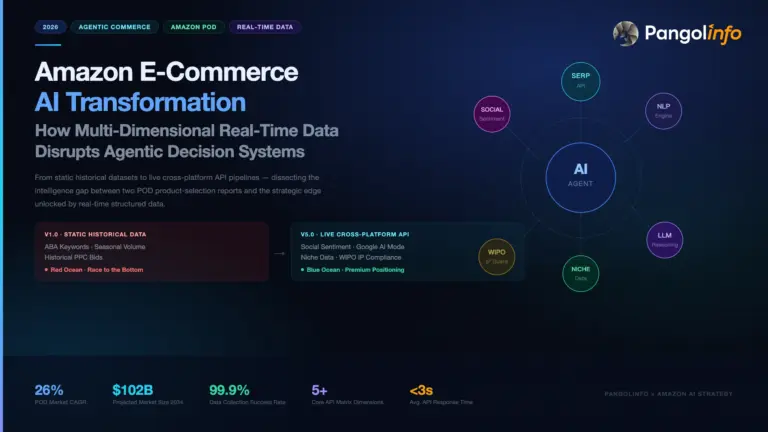

The argument of this article is that this gap is now closable. Three things happened in the 2024-2026 window that changed the economics of real-time Amazon data for small sellers: AI agent frameworks matured from research prototypes to production-ready tools; Amazon real-time data API services made minute-level BSR sampling available at consumer-grade pricing; and automated WIPO patent scanning made IP risk assessment something a solo operator can run without a lawyer on retainer.

We will cover each of these shifts, show how they play out in three real POD product categories, provide a working Python implementation for BSR monitoring, and give you a decision framework for choosing between SaaS tools and API-first infrastructure based on your current scale.

1. The Anatomy of the Amazon Data Gap

To understand what real-time data actually changes, you need a precise picture of what large sellers are doing that small sellers are not.

What Enterprise Data Infrastructure Looks Like

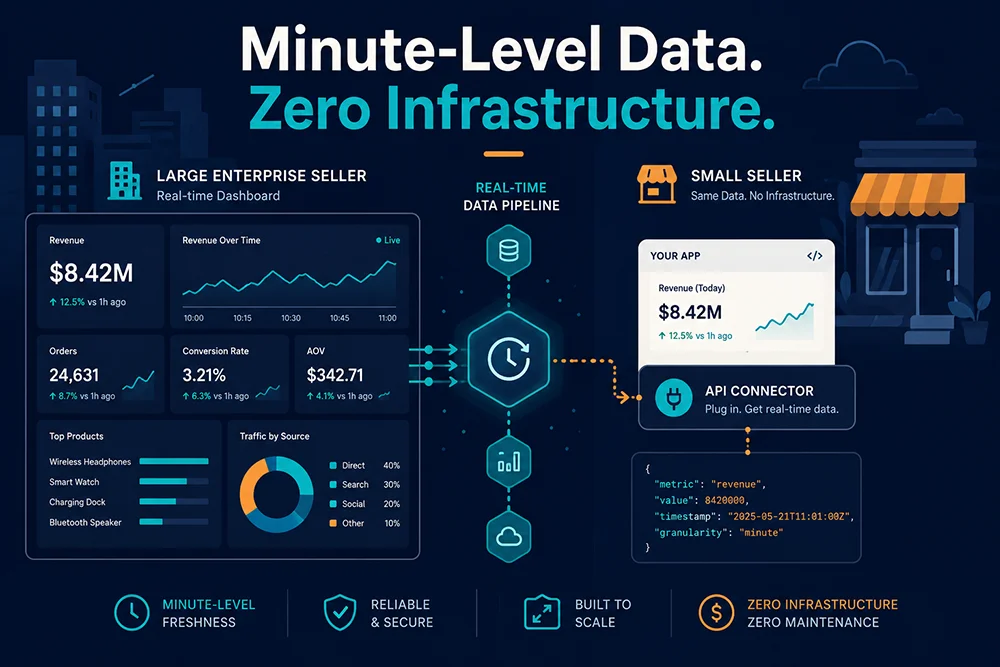

A large Amazon seller operating at eight-figure annual GMV typically maintains an internal data engineering function — sometimes three to five people — whose core job is building and maintaining a custom Amazon data pipeline. This system queries BSR, price, and inventory data across their competitive set every 15 to 30 minutes, stores everything in a data warehouse, and feeds dashboards that drive real-time decisions on ad spend, replenishment, and pricing.

The cost of running this infrastructure in-house: residential proxy pools run $2,000-$5,000/month for reliable at-scale coverage; servers and storage add $500-$1,500/month; and a single data engineer capable of maintaining the system against Amazon’s evolving anti-scraping mechanisms costs $80,000-$120,000/year in salary. Annualized, that is $24,000-$60,000 for proxies, $6,000-$18,000 for servers and storage, and $80,000-$120,000 for the engineer. Total infrastructure cost: roughly $110,000-$198,000 annually.

That number is why most small sellers have never tried to replicate it. But that number is also now largely irrelevant — because Pangolinfo Scrape API commoditizes the entire stack.

The Three Scenarios Where the Gap Costs You Money

Competitor stockout windows. When a top ASIN runs low on inventory, its BSR begins degrading — slowly at first, then accelerating as the listing moves from “Only 3 left in stock” to “Temporarily unavailable.” The entire window from first signal to BSR collapse typically spans 12 to 36 hours in high-velocity categories. A seller with 30-minute BSR sampling captures this signal in the first hour and immediately increases ad spend to absorb the displaced traffic. A seller checking daily data sees a different number the next morning and has no idea what triggered it.

Holiday demand ramp signals. In POD wall art, the pre-Thanksgiving demand curve begins shifting approximately 6-8 weeks before the holiday: BSR for gift-oriented SKUs starts improving week-over-week in the first week of October. A minute-level data system flags this signal in week one. A daily-update tool, averaging data across the week, surfaces it in week three. By week three, the remaining runway is too short: an order placed then, even by air freight from Chinese suppliers, tends to land after the top rankings and prime ad placements have already been claimed.

Pricing event classification. When a competitor drops price by 15%, the right response depends entirely on why they did it: clearing overstock (temporary — wait it out or match briefly) versus a strategic move to consolidate category share (sustained — respond proportionally). The difference is visible in inventory depletion rate relative to the price change. With daily data, you can only see a price drop. With hourly or sub-hourly inventory data, you can see whether their stock is moving faster or slower after the cut.
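The depletion-rate comparison described in the pricing scenario can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function names `depletion_rate` and `depletion_ratio` are hypothetical, the stock figures are made up, and the sketch assumes you already have timestamped stock estimates for the competitor. It reports how much faster or slower stock moves after the cut and leaves the interpretation to the operator.

```python
from typing import List, Tuple

# (hours since monitoring started, estimated units in stock) - hypothetical data shape
Sample = Tuple[float, int]


def depletion_rate(samples: List[Sample]) -> float:
    """Average units sold per hour between the first and last sample."""
    (t0, s0), (t1, s1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must span a positive time interval")
    return (s0 - s1) / (t1 - t0)


def depletion_ratio(before: List[Sample], after: List[Sample]) -> float:
    """Post-cut depletion rate divided by pre-cut rate (>1 means stock moves faster)."""
    base = depletion_rate(before)
    if base <= 0:
        raise ValueError("pre-cut depletion rate must be positive")
    return depletion_rate(after) / base


# Made-up example: 12 hours before and 12 hours after a price cut
before_cut = [(0, 500), (6, 488), (12, 476)]
after_cut = [(12, 476), (18, 452), (24, 428)]

ratio = depletion_ratio(before_cut, after_cut)
print(f"Depletion after the cut is {ratio:.1f}x the pre-cut rate")
```

With daily data only the price change would be visible; the ratio is what the sub-daily inventory samples add.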

Quantifying the Opportunity Cost

For a seller running $15,000-$20,000/month GMV in a competitive decor subcategory, the estimated cost of a 12-hour data delay during Q4’s six critical weeks looks approximately like this:

- Missed stockout windows (estimated 3-4 per season, $1,500-$3,000 each): $4,500-$12,000

- Late holiday demand signal, leading to suboptimal inventory position and excess FBA storage fees: $800-$1,500

- Delayed ad reallocation during competitor weakness periods (ACoS running 8-12 points high on $8,000 ad spend): $640-$960

Conservative seasonal opportunity cost: $5,940 to $14,460 — against an Amazon real-time data API subscription cost of well under $600/year.
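The arithmetic behind that range is easy to re-run with your own numbers. The sketch below simply re-adds the three line items from the list above; the variable names are illustrative, and the inputs are the article's estimates rather than measured data.

```python
# Each entry: (label, low estimate in USD, high estimate in USD)
cost_items = [
    # 3-4 missed windows per season at $1,500-$3,000 each
    ("Missed stockout windows", 3 * 1_500, 4 * 3_000),
    # Suboptimal inventory position plus excess FBA storage fees
    ("Late holiday demand signal", 800, 1_500),
    # ACoS running 8-12 points high on $8,000 of ad spend
    ("Delayed ad reallocation", 8 * 8_000 // 100, 12 * 8_000 // 100),
]

low_total = sum(low for _, low, _ in cost_items)
high_total = sum(high for _, _, high in cost_items)

for label, low, high in cost_items:
    print(f"{label}: ${low:,} to ${high:,}")
print(f"Seasonal opportunity cost: ${low_total:,} to ${high_total:,}")
```

Swapping in your own ad spend or stockout frequency shows quickly which of the three items dominates for your catalog.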

2. Three Converging Shifts That Made Data Democratization Real

Shift One: AI Agent Frameworks Reached Production Maturity

In 2023, “AI-assisted product research” largely meant using ChatGPT to draft listing copy. By late 2025, production-ready agent frameworks — LangChain, CrewAI, AutoGen — allowed a developer with standard Python skills to build an automated product research workflow that runs without human intervention: pulling live category data, scoring candidates against predefined criteria, running patent risk checks, and generating a structured sourcing recommendation.

The ceiling of any AI agent is the quality of data it consumes. An agent fed daily-snapshot data produces precise analysis of yesterday’s market. An agent connected to a real-time Amazon data API produces actionable analysis of the market as it stands right now.

Shift Two: Real-Time Data API Costs Collapsed

The economic barrier to minute-level Amazon data was not technical — it was cost. In 2020, replicating enterprise-grade BSR sampling required building and maintaining a custom scraping stack at five-figure monthly cost. Pangolinfo Scrape API eliminates that barrier entirely: the full infrastructure stack — residential IPs, anti-detection, data parsing, 99%+ success rate SLA — is delivered as a pay-per-request API. A small seller monitoring 50 ASINs at 30-minute intervals pays under $35/month.
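To put the 50-ASIN example in request terms, the sketch below counts monthly API calls and derives the per-request price that a $35 budget would imply. It assumes one request per ASIN per poll and a 30-day month; the article does not state actual per-request pricing, so the resulting figure is an implied ceiling and not a quoted rate. The function name `monthly_requests` is hypothetical.

```python
def monthly_requests(asin_count: int, interval_minutes: int, days: int = 30) -> int:
    """Number of polls per month, assuming one request per ASIN per poll."""
    polls_per_day = (24 * 60) // interval_minutes
    return asin_count * polls_per_day * days


requests_per_month = monthly_requests(asin_count=50, interval_minutes=30)
implied_ceiling = 35 / requests_per_month  # USD per request if the bill is $35

print(f"Requests per month: {requests_per_month:,}")
print(f"Implied ceiling per request: ${implied_ceiling:.5f}")
```

The same function shows how quickly volume grows with tighter sampling: 200 ASINs at 15-minute intervals is 576,000 requests per month.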

This is infrastructure democratization in the same sense that AWS democratized compute. The capability was never technically impossible for small sellers — it was economically inaccessible. That access constraint is now gone.

Shift Three: Automated WIPO Patent Scanning

For POD sellers in particular, intellectual property risk has historically been a capability asymmetry: large brands maintain IP counsel who review new product designs before launch; small sellers often discover infringement risk only after a DMCA strike or account warning. Automated patent scanning APIs (several now expose structured access to USPTO and WIPO design patent databases) allow a product research workflow to include a patent check as a standard, non-negotiable step, rather than an optional human review that gets skipped under deadline pressure.

When patent scanning is a tool call inside an AI agent workflow, it happens every time, for every candidate SKU, automatically. That is a compliance posture previously available only to well-resourced operations.

3. Three POD Product Categories: Real-World Data Democratization in Practice

Case One: 3D Sandstone Relief Wall Art — Catching the Pre-Holiday Demand Signal

3D sandstone relief wall art is a gift-driven category with a sharply concentrated sales curve: roughly 60% of annual volume moves in a six-week window from mid-October through Thanksgiving. The critical insight that separates profitable operations in this category from ones sitting on post-holiday overstock is catching the demand ramp signal early enough to act on it.

The daily-data path: An operator checks BSR in Jungle Scout on October 15th and notices a key ASIN has improved from #3,200 to #1,800 over the past week. They initiate a reorder — but the supplier’s lead time is 12-14 days, putting arrival at October 29th. By then, the top-performing ASINs have consolidated their ranking position and the prime ad placements are saturated.

The real-time API path: An AI agent running on Pangolinfo’s Amazon real-time data API monitors the category’s top 200 ASINs at 15-minute intervals. At 3:00 AM on October 15th, it detects that a key ASIN’s rank number is falling (that is, its BSR is improving) by an average of 45 positions per hour, a pattern that matches the historical signature of holiday demand onset in this subcategory. The agent automatically triggers a WIPO patent scan on “3D sandstone relief wall decor,” confirms the design category is clear, and generates a sourcing recommendation with three risk-tiered SKU candidates before the operator’s workday begins.
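A rate-of-change check like the one the agent performs can be approximated with a simple average slope over recent samples. This is a rough sketch: the function name, the sample data, and the 45 positions/hour threshold (borrowed from the example above) are illustrative assumptions, and a production system would compare against the category's own history instead of a fixed cutoff.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def bsr_improvement_per_hour(samples: List[Tuple[datetime, int]]) -> float:
    """Average rank positions gained per hour (positive = rank number falling)."""
    (t0, r0), (t1, r1) = samples[0], samples[-1]
    hours = (t1 - t0).total_seconds() / 3600
    if hours <= 0:
        raise ValueError("samples must span a positive time interval")
    return (r0 - r1) / hours


# Hypothetical 15-minute samples over three hours, rank improving steadily
start = datetime(2025, 10, 15, 0, 0)
samples = [(start + timedelta(minutes=15 * i), 3200 - 12 * i) for i in range(13)]

ONSET_THRESHOLD = 45  # positions/hour, illustrative only
rate = bsr_improvement_per_hour(samples)
if rate >= ONSET_THRESHOLD:
    print(f"Possible demand onset: improving {rate:.0f} positions/hour")
```

Using only the first and last sample keeps the sketch short; a least-squares fit over all points would be less sensitive to a single noisy reading.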

The team places an air freight order on October 17th. Their SKUs arrive on October 22nd. They hold a top-50 BSR position through Thanksgiving at 22% ACoS.

Case Two: LED Aurora Night Light — Identifying Competitor Stockout Windows

LED aurora projection lights maintain steady year-round demand but operate in a concentrated competitive landscape: five ASINs account for approximately 60% of category traffic. For small sellers, sustainable growth in this environment requires a different strategy than frontal competition — specifically, identifying and exploiting temporary weaknesses in the dominant positions.

A competitor stockout in a high-velocity listing follows a predictable data signature: inventory status shifts from normal to “Only 2-3 left in stock,” BSR begins degrading at an accelerating non-linear rate, and the listing often disappears from sponsored ad placements (since out-of-stock ASINs are deprioritized by Amazon’s ad auction). The entire window — from first inventory warning to BSR recovery after restocking — typically spans 12 to 48 hours.
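The "accelerating" part of that signature can be tested directly: the rank number keeps rising, and each rise is larger than the last. The sketch below assumes equally spaced BSR samples; the function name and the four-point minimum are hypothetical choices, and real data would need some tolerance for noise.

```python
from typing import List


def is_accelerating_degradation(bsr_samples: List[int], min_points: int = 4) -> bool:
    """True when rank worsens at every step and each step is bigger than the previous one."""
    if len(bsr_samples) < min_points:
        return False
    # Step-to-step change in rank number (positive = rank getting worse)
    deltas = [later - earlier for earlier, later in zip(bsr_samples, bsr_samples[1:])]
    worsening = all(d > 0 for d in deltas)
    accelerating = all(b > a for a, b in zip(deltas, deltas[1:]))
    return worsening and accelerating


print(is_accelerating_degradation([120, 130, 150, 190, 270]))  # True
print(is_accelerating_degradation([120, 130, 140, 150, 160]))  # False
```

The second series worsens at a constant rate, which looks more like ordinary rank drift than a stockout in progress.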

Using Amazon real-time data infrastructure, the monitoring system polls competitor inventory status every 30 minutes. When a top-5 ASIN triggers an “Only X left” alert, the system immediately sends a push notification and suggests a 40-60% ad budget increase for the next 18 hours. Over a three-month period (January-March 2026), the team captured seven usable stockout windows with an average incremental GMV contribution of $2,200 per window — a total of approximately $15,400 in additional revenue against a monthly API cost of under $50.

Case Three: Louvre Plaster Bust Wall Decor — WIPO Patent Scanning as a Non-Negotiable Step

Classical sculpture reproductions (Venus de Milo, Michelangelo’s David, Winged Victory) occupy a legally complex corner of the wall decor category. While the underlying sculptures are centuries into the public domain, specific photographic interpretations, 3D scan derivatives, and commercially stylized adaptations may carry active design patents or copyright registrations that sellers rarely check before sourcing.

When the team’s AI agent evaluated this category, its automated patent scan returned two specific Design Patent numbers associated with a supplier’s sample design that closely matched the search terms “Louvre plaster bust wall art.” One of the patent holders had an active enforcement history — three DMCA complaints against marketplace sellers in the preceding 18 months.

The team declined both SKUs and sourced an alternative design with sufficiently differentiated proportions to fall outside the patent claims. This decision avoided a potential account health warning and the associated legal cost — an outcome that previously required either expensive IP counsel or getting lucky with informal due diligence.

The broader point: when patent scanning is embedded as a required step in an AI agent workflow rather than an optional manual check, it becomes structurally impossible to skip under deadline pressure. The compliance posture this creates is what large brands have always had. The AI agent plus real-time data API combination extends it to solo operators.
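One way to make the check structurally unskippable is to have the evaluation function call it unconditionally, so no recommendation object can exist without a scan result attached. The sketch below illustrates that design only: `evaluate_candidate`, `Recommendation`, and `fake_patent_check` are hypothetical names, and the stand-in checker returns made-up identifiers instead of querying any real patent database.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Recommendation:
    sku: str
    patent_hits: List[str]
    approved: bool


def evaluate_candidate(
    sku: str,
    search_terms: str,
    patent_check: Callable[[str], List[str]],
) -> Recommendation:
    """Run the patent check first; there is no code path that skips it."""
    hits = patent_check(search_terms)
    return Recommendation(sku=sku, patent_hits=hits, approved=not hits)


def fake_patent_check(terms: str) -> List[str]:
    """Stand-in for a real patent search client (hypothetical results)."""
    return ["EXAMPLE-DESIGN-1"] if "bust" in terms else []


flagged = evaluate_candidate("SKU-A", "Louvre plaster bust wall art", fake_patent_check)
clear = evaluate_candidate("SKU-B", "3D sandstone relief wall decor", fake_patent_check)
print(flagged.approved, flagged.patent_hits)
print(clear.approved, clear.patent_hits)
```

Passing the checker in as a parameter keeps the workflow testable and lets you swap in whichever patent search service you actually use.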

4. Technical Implementation: Building a Real-Time Amazon Monitoring System

Architecture Overview

The minimum viable real-time Amazon data system has three layers:

- Data acquisition layer: Pangolinfo Scrape API handles all Amazon data retrieval — no proxy infrastructure, no anti-detection engineering required on your side

- Processing layer: Lightweight Python scripts or an AI agent framework (LangChain recommended) for data cleaning, anomaly detection, and scoring

- Output layer: Structured reports, real-time alerts via Slack/email webhook, and optional visualization in Google Sheets or Notion

Working Python Implementation: BSR Monitoring with Stockout Alerts

```python
import json
from datetime import datetime, timezone

import requests

PANGOLINFO_API_KEY = "your_api_key_here"
WATCH_ASINS = ["B0XXXXXXXX", "B0YYYYYYYY", "B0ZZZZZZZZ"]  # placeholder ASINs
BSR_SURGE_THRESHOLD = 200  # positions gained in one interval
LOW_STOCK_KEYWORDS = ["Only 1 left", "Only 2 left", "Only 3 left", "Temporarily out of stock"]


def fetch_product_data(asin: str) -> dict:
    """Retrieve live BSR, price, and inventory data via Pangolinfo Scrape API."""
    url = "https://api.pangolinfo.com/v1/amazon/product"
    response = requests.get(
        url,
        headers={"Authorization": f"Bearer {PANGOLINFO_API_KEY}"},
        params={"asin": asin, "marketplace": "US", "fields": "bsr,price,inventory_status,review_count"},
        timeout=10,
    )
    response.raise_for_status()
    return response.json()


def run_monitoring_cycle(bsr_history: dict) -> tuple:
    """Single monitoring pass: returns alerts list and updated BSR history."""
    alerts = []
    for asin in WATCH_ASINS:
        try:
            data = fetch_product_data(asin)
        except requests.RequestException as exc:
            # One failed request should not abort the whole cycle
            print(f"Fetch failed for {asin}: {exc}")
            continue

        now = datetime.now(timezone.utc).isoformat()
        current_bsr = data.get("bsr") or 0  # 0 means the response had no BSR
        inv_status = data.get("inventory_status") or ""

        # Stockout alert
        if any(kw in inv_status for kw in LOW_STOCK_KEYWORDS):
            alerts.append({
                "type": "STOCKOUT_IMMINENT",
                "asin": asin,
                "inventory_status": inv_status,
                "action": "Increase ad budget 40-60% for next 18 hours",
                "timestamp": now,
            })

        # BSR surge alert (rank number dropping = sales accelerating).
        # Skip when either reading is missing to avoid a false surge.
        previous_bsr = bsr_history.get(asin, 0)
        if previous_bsr > 0 and current_bsr > 0:
            positions_gained = previous_bsr - current_bsr
            if positions_gained > BSR_SURGE_THRESHOLD:
                alerts.append({
                    "type": "BSR_SURGE",
                    "asin": asin,
                    "positions_gained": positions_gained,
                    "current_bsr": current_bsr,
                    "action": "Investigate demand driver; consider inventory reorder",
                    "timestamp": now,
                })

        # Keep the last known BSR if this response had none
        if current_bsr > 0:
            bsr_history[asin] = current_bsr

    return alerts, bsr_history


# Run one cycle (in production: wrap in a scheduler like APScheduler or cron)
history = {}
alerts, history = run_monitoring_cycle(history)
if alerts:
    print(json.dumps(alerts, indent=2))
    # TODO: send to Slack webhook or email
```

Full implementation guides — including database persistence, scheduler setup, and Slack integration — are available in the Pangolinfo documentation center.
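As a starting point for the Slack step, the sketch below turns a list of alert dictionaries (same `type`, `asin`, and `action` keys as the monitoring script) into a Slack incoming-webhook request using only the standard library. The webhook URL is a placeholder, the helper names are hypothetical, and this is not taken from the Pangolinfo documentation. Building the request separately from sending it makes the payload easy to inspect before anything goes over the network.

```python
import json
import urllib.request


def format_alert(alert: dict) -> str:
    """One line of human-readable text per alert."""
    return f"[{alert['type']}] {alert['asin']}: {alert['action']}"


def build_slack_request(webhook_url: str, alerts: list) -> urllib.request.Request:
    """Prepare a POST with the JSON body that Slack incoming webhooks expect."""
    text = "\n".join(format_alert(a) for a in alerts)
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_to_slack(webhook_url: str, alerts: list) -> None:
    """Send the alerts; raises urllib.error.URLError on network failure."""
    with urllib.request.urlopen(build_slack_request(webhook_url, alerts), timeout=10) as resp:
        resp.read()


# Inspect the payload without sending anything (placeholder URL and sample alert)
sample_alerts = [{
    "type": "STOCKOUT_IMMINENT",
    "asin": "B0XXXXXXXX",
    "action": "Increase ad budget 40-60% for next 18 hours",
}]
req = build_slack_request("https://hooks.slack.com/services/T000/B000/XXXX", sample_alerts)
print(req.data.decode("utf-8"))
```

In the monitoring script, calling `send_to_slack(your_webhook_url, alerts)` inside the `if alerts:` branch would replace the TODO.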

No-Code Path: AMZ Data Tracker

For sellers without a technical resource, AMZ Data Tracker provides a visual monitoring interface requiring no code. Input your ASIN watchlist, configure BSR change thresholds and inventory alerts, and receive notifications when conditions are met. Data refreshes hourly, which is more frequent than daily SaaS tools and sufficient for most competitive monitoring use cases with watchlists under 50 ASINs.

5. SaaS Tools vs. Real-Time API: A Decision Framework

| Dimension | Jungle Scout / Helium10 | Pangolinfo Scrape API | AMZ Data Tracker |
|---|---|---|---|
| Data refresh rate | Daily (some weekly) | Minute-level (configurable) | Hourly |
| AI agent integration | Not supported | Native JSON output | Limited |
| Customization | Fixed feature set | Fully custom | Preset fields |
| Cost (light user) | $49-$299/month | <$35/month | Pay-as-you-go |
| Technical requirement | None | Basic Python | None |
| Multi-marketplace | Major markets | All Amazon sites | Major markets |

Who Should Use What

Monthly GMV under $5,000: Start with Helium10’s free tier or Jungle Scout’s starter plan to build the data analysis habit. Real-time API is not yet the highest-leverage investment at this scale.

Monthly GMV $5,000-$50,000, managing 10-50 ASINs: AMZ Data Tracker is the right upgrade. It offers hourly competitive monitoring with zero technical setup, at a cost that makes sense for your margin structure.

Monthly GMV over $50,000, or technical resource available: Pangolinfo Scrape API is the optimal path. You are operating in the range where data delay has measurable GMV impact, and the API’s AI agent integration capability allows you to build competitive advantages that no fixed-feature SaaS tool can replicate.

SaaS tool developers and data service providers: Pangolinfo Scrape API as the data acquisition layer eliminates 80%+ of infrastructure engineering cost versus a self-built scraping stack, with higher reliability and a commercial SLA.
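For sellers, the tiers above reduce to a small rule. The sketch below is one possible reading, not a definitive one: it assumes the under-$5,000 guidance applies even when a technical resource is available, and that above $5,000 a technical resource tips the choice toward the API. The function name `recommend_tool` is hypothetical.

```python
def recommend_tool(monthly_gmv: float, has_technical_resource: bool = False) -> str:
    """Map monthly GMV (USD) and technical capacity to the article's suggested tier."""
    if monthly_gmv < 5_000:
        # Build the data habit first; the API is not yet the best use of effort
        return "Helium10 free tier or Jungle Scout starter plan"
    if monthly_gmv > 50_000 or has_technical_resource:
        return "Pangolinfo Scrape API"
    return "AMZ Data Tracker"


for gmv, technical in [(3_000, False), (20_000, False), (20_000, True), (80_000, False)]:
    print(f"${gmv:,}/month, technical={technical}: {recommend_tool(gmv, technical)}")
```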

6. Frequently Asked Questions

Does using an Amazon scraper API violate Amazon’s terms of service?

Amazon’s terms of service restrict automated access through Seller Central account credentials. Pangolinfo Scrape API does not use any seller account credentials. It accesses the same public-facing product listing pages available to any consumer or browser. In hiQ Labs v. LinkedIn (2022), the US Ninth Circuit held that scraping publicly accessible web data likely does not violate the Computer Fraud and Abuse Act. That ruling concerns the CFAA specifically and does not resolve contract or terms-of-service claims, but it is frequently cited in support of commercial data collection from public marketplaces.

What data success rate can I expect from the API?

Pangolinfo guarantees a 99%+ data collection success rate through its SLA, backed by a distributed residential IP pool and intelligent rotation system. The API returns structured JSON for all supported data types (product detail pages, search results pages, BSR rankings, price history, and review data), with no parsing required on the client side.

How quickly can I get started?

Register at the Pangolinfo console and receive free credits immediately — no credit card required. The documentation center provides working Python, Node.js, and PHP examples. Most developers make their first successful API call within 30 minutes of registration. A basic BSR monitoring script like the one above can be production-ready within a few hours.

Beyond Amazon, what other platforms does the API support?

Pangolinfo Scrape API covers all Amazon global marketplaces (US, UK, DE, JP, CA, AU, and more), plus Walmart, eBay, Etsy, and Google Shopping. For multi-platform sellers, this means a unified API interface for cross-platform competitive monitoring without maintaining separate scraping systems per platform.

What Data Democratization Actually Means for Your Operations

The data gap between large and small Amazon sellers was never primarily about working capital or supply chains. It was about access: real-time market intelligence, priced until recently at enterprise budgets, that allowed large operations to act on events as they happened rather than after the fact.

That access barrier has fallen. The same AI agent frameworks, the same minute-level BSR data, the same automated IP risk assessment that cost enterprise sellers six figures a year to build in-house are now available to any seller willing to spend $35/month and a weekend on setup.

In the three POD product categories examined here (3D sandstone relief, LED aurora lights, Louvre plaster busts), the sellers capturing outsized returns are not the best-capitalized. They are the most data-responsive. They see the stockout window in hour one, not day two. They see the holiday demand signal in week one, not week three. They check patent risk automatically, not occasionally.

That operating posture is now available to small sellers. The question is only whether you build it.

Start with a free account: Pangolinfo Scrape API — minute-level Amazon data, no infrastructure required.

No-code option: AMZ Data Tracker — real-time competitor monitoring, zero setup.