AI Transformation in Amazon E-commerce Operations

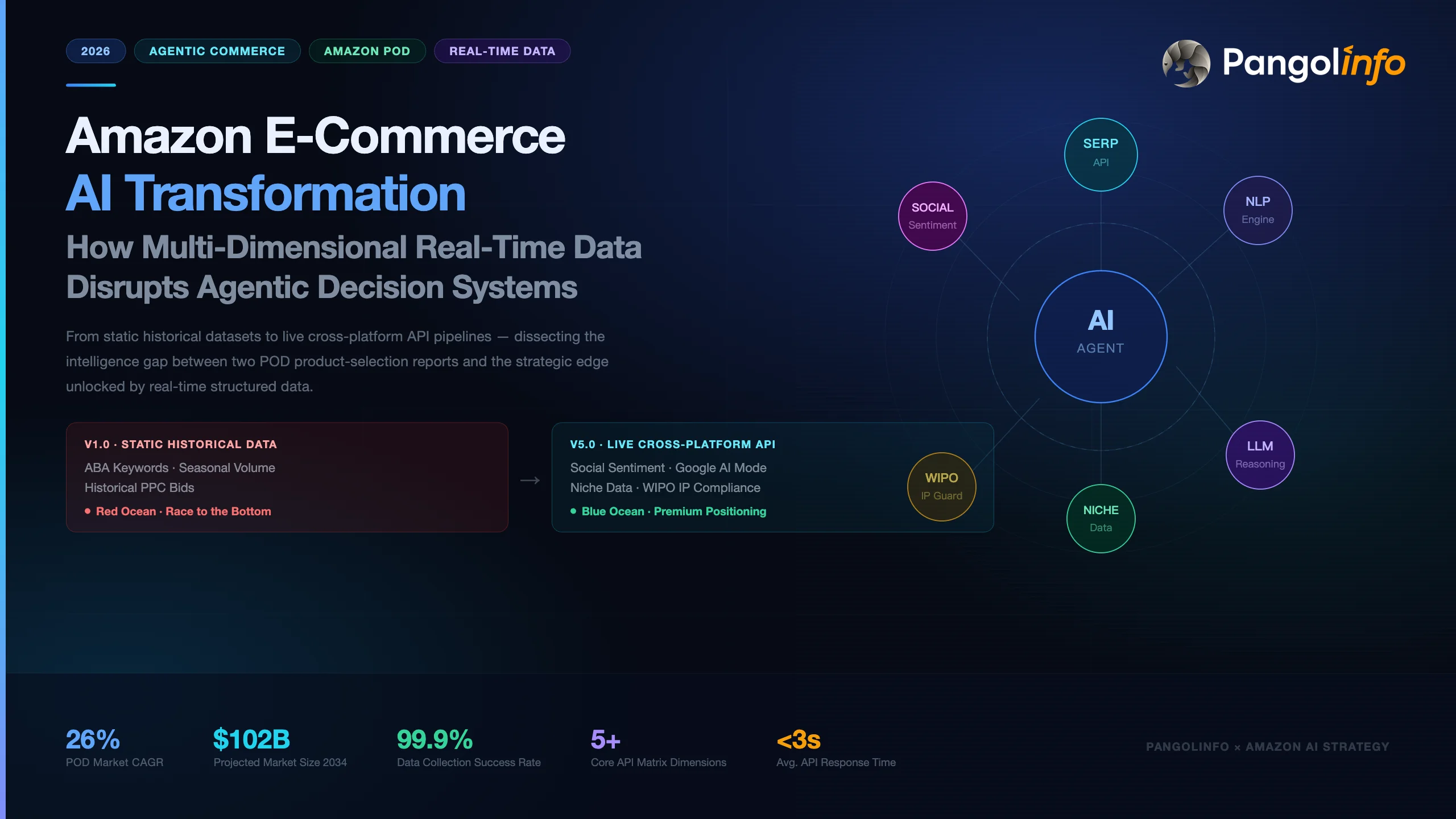

In the highly competitive and complex ecosystem of global e-commerce, Amazon’s massive user base and tens of millions of active products make it an exceptionally demanding marketplace. Over the past decade, Business Intelligence (BI) tools built on historical big data have dominated sellers’ product selection, pricing, and advertising strategies. With the rapid rise of Large Language Models (LLMs) and Agentic AI, however, traditional e-commerce operations are undergoing a fundamental paradigm shift from “passive descriptive analysis” to “Agentic Commerce”.

During this transition to agentic systems, a common misconception has emerged in the industry: that simply plugging a foundation AI model into e-commerce workflows is enough to cut costs and improve efficiency. Practical cases, however, show that the main factor determining the output quality of an intelligent decision-making system is no longer the model’s “algorithmic parameters” alone. It is now the “dimensionality, timeliness, and structure of the underlying data sources”. Without real-time, cross-domain data, AI agents cannot put their predictive and reasoning capabilities to use. Worse, because their training corpora lag behind the market, they become trapped in “information cocoons” and prone to data hallucinations.

This report uses H2 2026 product selection for the Amazon POD (Print on Demand) decorative painting category as a case study. It analyzes in depth two AI selection reports driven by different data architectures. Through this comparison, we show how three inputs fundamentally reshape the commercial insights of AI agents: real-time omni-channel data, dedicated AI skill plugins (such as the [Amazon Scraper Skill](https://www.pangolinfo.com/open-claw-amazon-scraper-skill/)), and deep Niche data. Together, they enable cross-border enterprises to move strategically from experience-driven to real-time, algorithm-driven operations.

Part 1: Baseline Differences in Data Source Dimensions—A Deep Deconstruction of Two POD Decorative Painting Selection Reports

The Print on Demand (POD) market is currently growing rapidly. Global forecasts put its Compound Annual Growth Rate (CAGR) at 26%, with market size projected to rise from $6.99 billion in 2025 to $10.2 billion by 2034. Built on “zero inventory” and “highly personalized customization,” this business model is extremely sensitive to consumer aesthetic trends and emotional value. In this H2 2026 product selection case study, the two AI reports are driven by different data sources. As a result, they arrive at recommendations of starkly different commercial viability and competitive strength.
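The text quotes a growth rate and two dollar endpoints together, so they can be cross-checked with the standard compound-growth formula, CAGR = (end / start)^(1 / years) - 1. The short Python sketch below applies that formula to the figures in the text, treating 2025 to 2034 as nine years. Taken at face value, the endpoints imply roughly 4.3% a year. By contrast, 26% compounded over nine years would carry $6.99 billion to about $56 billion. The growth rate and the dollar figures therefore most likely come from different forecasts or base years, and are worth verifying against the original source.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def project_value(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in USD billions; 2025 -> 2034 is nine years.
start_2025, end_2034, years = 6.99, 10.2, 9

print(f"Implied CAGR: {implied_cagr(start_2025, end_2034, years):.1%}")            # Implied CAGR: 4.3%
print(f"2034 value at 26% CAGR: ${project_value(start_2025, 0.26, years):.1f}B")  # 2034 value at 26% CAGR: $56.0B
```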

The first report (V1.0) relies on static historical data extracted from traditional keyword tools on the Amazon platform, limiting its data sources to Amazon Brand Analytics (ABA) keyword fluctuations and seasonal search volumes. The second report (V5.0) comprehensively integrates the API matrix provided by Pangolinfo, incorporating cross-platform social media trends, real-time public sentiment from Google AI Mode, and structured deep physical delivery metrics. The difference in these underlying data acquisition methods directly leads to a massive gap in the AI’s selection conclusions, competitive strategies, and risk forecasting.

| Evaluation Dimension | V1.0 Report (Based on Static Historical In-site Data) | V5.0 Report (Based on Omni-channel Real-time API Data) | Commercial Consequence Forecast |
|---|---|---|---|
| Underlying Data Source | Amazon in-site monthly search volume, PPC bids, conversion rates | Cross-platform social media sentiment, Google AI Mode trends, structured multi-dimensional review parsing | Static data leads to red ocean price wars; real-time data helps capture blue ocean market share early |
| Visual Element Insights | Custom photos, motivational quotes, beach/coastal vibes | 3D plaster textures, muted earthy tones, intersecting street-sign anniversary art | Surface-level homogeneous competition vs. high aesthetic premium and physical competitive barriers |
| Trend Forecast Granularity | Relies on holiday tags (e.g., Independence Day, Father’s Day) | Deep emotional scenarios and implicit demands (personalized 3D texture YoY growth +150%) | Blind stocking leading to inventory buildup vs. precision customization leading to high conversion rates |
| Product Pain Point Closed-loop | Shallow operational reminders (unclear sizing, color differences) | Prompt generation integrated with physical craft constraints (1.5 inch thickness, flat canvas mapping) | Stays on paper vs. closed-loop execution from concept to manufacturing |

The Latency Trap of Static In-site Data: From “False Blue Ocean” to Homogenized Red Ocean

Analyzing the data logic of the first report (V1.0) shows that its conclusions depend heavily on search volume rankings and ad click costs. The AI performed a shallow sentiment analysis based on authentic reviews of 469 custom canvases and 386 photo prints, extracting Top 5 elements such as “Photo Customization” and “Beach/Coastal”. Reading these metrics, the report highlights that the PPC bid for the “Beach/Coastal” style is only $0.20, with a supply-demand ratio of 5.27. The AI agent therefore deemed this a “blue ocean opportunity” and recommended that sellers invest heavily and act immediately.

Viewed through a deeper, second-order lens, this purely quantitative historical analysis contains a fatal latency trap. Data from traditional keyword tools usually reflects the accumulated demand of the previous market cycle. Every seller relying on similar static databases receives the same signal that “Beach style PPC is only $0.20”. When they all flood the market at once, the fragile blue ocean can deteriorate into a race-to-the-bottom price war within weeks. Furthermore, the V1.0 report’s analysis of “pitfall elements” stops at surface-level symptoms like “unclear sizing” and “color deviation.” It offers no fundamental guidance on upgrading the product’s physical design and manufacturing process.

The Dimensional Strike of Omni-channel Real-time Data: Predicting Trends Beyond Platform Boundaries

In contrast, the second report (V5.0) operates in an entirely different cognitive dimension. It connects to Google’s real-time AI overviews via the AI SERP API and integrates public sentiment monitoring from social platforms like Reddit, TikTok, and Pinterest. This let the AI agent capture the upstream evolution of consumer aesthetics. The report points out that “the market in 2026 is undergoing a visual-tactile revolution, evolving from flat to three-dimensional.” It also extracts high-premium visual elements such as “3D Plaster,” “Embossed,” and “Muted Tones”.

This predictive capability does not come from existing hot search terms on Amazon, but from the spillover of cross-platform aesthetic emotions. By sensing demand upstream, sellers can finalize product listings and secure market positioning before consumers translate these vague desires into specific Amazon search queries. Particularly in the conception of “Plan A: Minimalist Crossroads Anniversary Art,” the AI noticed a 65% surge in this specific trend. This represents a profound third-order insight: the design is no longer just selling a decorative frame, but using “two intersecting street signs” as a psychological metaphor for specific coordinates and the exact location where a couple first met. This highly customized emotional connection completely escapes the quagmire of low-price competition and grants the product immense pricing power.

Part 2: Data Ingestion Bottlenecks and Architectural Restructuring for LLMs in E-commerce Operations

To understand the massive disparity in the quality of the aforementioned selection reports, one must deeply analyze the underlying mechanisms and technical bottlenecks of current Large Language Models when navigating complex business environments. In the evolution of “Intelligent Retail,” e-commerce platforms need systems to predict consumer needs—and provide solutions—even before the consumers realize them. This requires AI to be more than just a chatbot; it must be an execution engine capable of tapping into real-time data streams.

The Failed Path of Combining Traditional Scrapers with AI Models: Context Pollution and Anti-Bot Blockades

When attempting to build custom Amazon AI agents, many development teams adopt a simple “native scraper + LLM” splicing model. For example, a seller once attempted to use Clawdbot (powered by Claude 3.5 Sonnet) to directly control a browser to scrape competitor pricing and inventory data. However, empirical results showed this method to be highly inefficient: the AI’s process of controlling the browser to load pages, bypass anti-bot mechanisms, and extract dynamic content failed frequently. The success rate was roughly 60%, the cost per run was high at $1.50, and it took over 30 minutes to complete.

A more fatal issue lies in data quality and the Context Window limitations of large models. Amazon intercepts over 210 million malicious crawling requests daily. Its AI-driven anti-bot systems can identify and block abnormal traffic via request fingerprints and behavioral models within 0.8 seconds. Even if developers manage to scrape the page source code, directly feeding hundreds of thousands of characters of messy HTML (containing massive amounts of JavaScript, ad-tracking codes, and invisible CSS) into an LLM causes severe “context pollution.” Amidst this massive noise interference, the AI is highly prone to hallucination, mistaking the price of a “similar recommended product” at the bottom of the page for the target product’s price, leading to disastrous automated repricing decisions.
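The following minimal sketch makes context pollution concrete, using only Python’s standard library. The page markup, the target-price id, and both helper functions are hypothetical simplifications; real Amazon pages are far larger and their markup changes often. An unfiltered pass over the raw HTML picks up a price-like string from an ad script. Reading text only from the intended element returns the listing’s own price.

```python
import re
from html.parser import HTMLParser

# Hypothetical, heavily simplified page: an ad script, a style block, the
# listing's own price, and a recommended product's price further down.
# "target-price" is an illustrative id, not a real Amazon element id.
RAW_HTML = """
<html><head>
<script>window.adConfig = {"price": "12.99", "slot": "sponsored"};</script>
<style>.price { color: #b12704; }</style>
</head><body>
<span id="target-price">$39.99</span>
<div class="recommendations"><span class="price">$14.50</span></div>
</body></html>
"""

PRICE_RE = re.compile(r"\$?(\d+\.\d{2})")


def naive_first_price(html: str) -> float:
    """Unfiltered pass over raw HTML: returns the first price-like string anywhere."""
    match = PRICE_RE.search(html)
    if match is None:
        raise ValueError("no price-like string found")
    return float(match.group(1))


class TargetTextExtractor(HTMLParser):
    """Collects text inside the element with a given id, ignoring <script>/<style>.

    Assumes well-formed markup with no void elements (e.g. <br>) inside the target.
    """

    def __init__(self, target_id: str):
        super().__init__()
        self.target_id = target_id
        self.depth = 0   # > 0 while inside the target element
        self.skip = 0    # > 0 while inside <script> or <style>
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip += 1
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip:
            self.skip -= 1
        if self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth and not self.skip:
            self.chunks.append(data)


def targeted_price(html: str, target_id: str) -> float:
    """Parse the page and read the price only from the intended element."""
    parser = TargetTextExtractor(target_id)
    parser.feed(html)
    parser.close()
    match = PRICE_RE.search("".join(parser.chunks))
    if match is None:
        raise ValueError(f"no price found inside #{target_id}")
    return float(match.group(1))


print(naive_first_price(RAW_HTML))               # 12.99, picked up from the ad script
print(targeted_price(RAW_HTML, "target-price"))  # 39.99, the listing's own price
```

An LLM handed the whole page faces the same problem at a much larger scale: the correct value is present, but so are many plausible decoys.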

The Real-time Data Pipeline for Agentic Commerce

A truly efficient architecture strictly decouples “data collection” from “logical analysis”. Pangolinfo’s enterprise-grade data acquisition service illustrates this approach. It converts highly dynamic, front-end-rendered pages into clean, structured JSON data streams, with an average response time under 3 seconds and a 99.9% success rate.

This real-time data pipeline forms the foundation of Agentic Commerce. An AI agent running in the background no longer relies on stale knowledge graphs. Instead, it continuously receives the latest status from product catalogs, inventory systems, and real-time review modules via Change Data Capture (CDC) pipelines. For example, a specific size of decorative painting might suddenly go out of stock, or a competitor might cut its price by 40% within the last hour. An agent connected to the [Scrape API](https://www.pangolinfo.com/scrape-api/) can detect such changes almost immediately. It can then automatically suppress promotional recommendations or adjust its bidding models, compressing the decision cycle from days to minutes.
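The change-detection step can be sketched as a comparison of consecutive snapshots. This is not Pangolinfo’s API. The sketch assumes the agent already receives periodic listing snapshots, from a scraping API or a CDC feed, as simple records. The Snapshot fields, placeholder ASINs, and 40% threshold are hypothetical, chosen to mirror the example in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One observation of a listing. Field names are illustrative, not a vendor schema."""
    asin: str
    price: float
    in_stock: bool


def detect_changes(old: dict[str, Snapshot], new: dict[str, Snapshot],
                   drop_threshold: float = 0.40) -> list[str]:
    """Compare two snapshots keyed by ASIN and list the actions an agent might take."""
    actions = []
    for asin, current in new.items():
        previous = old.get(asin)
        if previous is None:
            continue  # newly tracked listing: nothing to compare against yet
        if previous.in_stock and not current.in_stock:
            actions.append(f"{asin}: out of stock -> suppress promotions")
        if previous.price > 0:
            drop = (previous.price - current.price) / previous.price
            if drop >= drop_threshold:
                actions.append(f"{asin}: price down {drop:.0%} -> adjust bids")
    return actions


old = {"B0EXAMPLE1": Snapshot("B0EXAMPLE1", 39.99, True),
       "B0EXAMPLE2": Snapshot("B0EXAMPLE2", 29.99, True)}
new = {"B0EXAMPLE1": Snapshot("B0EXAMPLE1", 23.99, True),   # competitor cut ~40%
       "B0EXAMPLE2": Snapshot("B0EXAMPLE2", 29.99, False)}  # size sold out

for action in detect_changes(old, new):
    print(action)
```

In practice, the returned action strings would be routed to whatever component owns promotions and bidding, rather than printed.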

Part 3: Underlying Empowerment of Multi-Agent Synergy by Pangolinfo Scraper Skill

To break down the technical barriers between data acquisition and AI reasoning, Pangolinfo launched the Amazon Scraper Skill. This core plugin is designed specifically for AI agent IDEs and frameworks, such as OpenClaw, Claude Code, Cursor, and LangChain. It is reshaping the development ecosystem for e-commerce AI tools.

Terminal Plug-and-Play Integration and Cloud-Hosted Anti-Bot Mechanisms

For engineers developing AI agents, a single command (e.g., npx clawhub@latest install pangolinfo-amazon-scraper) plus an API key is enough to add real-time scraping capabilities to the agent environment. The plugin is backed by Pangolinfo’s cloud engine, which takes over the most troublesome infrastructure maintenance tasks. These include dynamic routing across a global pool of millions of residential IPs, automatic CAPTCHA solving, and intelligent masking of TLS handshake signatures.

This cloud-hosted model spares development teams the $120,000 annual cost of maintaining self-built proxy infrastructure. It also spares them valid-data acquisition rates below 40%. Technical teams can instead devote their computing and R&D resources to core prompt chaining and business logic.

Structured JSON Washer and Extreme Token Cost Optimization

The most revolutionary feature of the Amazon Scraper Skill is its built-in “JSON Washer” mechanism. It strips the HTML noise from the original webpage in milliseconds and returns highly standardized, deterministic JSON or Markdown.
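The internals of the JSON Washer are not described here. The following is therefore a minimal sketch of the general technique, not Pangolinfo’s implementation, and the raw field names and values are hypothetical. The sketch keeps a fixed whitelist of fields and converts display strings such as “4.5 out of 5 stars” into typed numbers. It then serializes with sorted keys, so identical input always produces byte-identical JSON.

```python
import json
import re

# Hypothetical raw fields as scraped: display strings plus keys the agent never needs.
raw_record = {
    "title": "  Custom Street Sign Canvas \n",
    "price_text": "$39.99",
    "rating_text": "4.5 out of 5 stars",
    "reviews_text": "469 ratings",
    "tracking_pixel": "https://example.com/pixel.gif",
}

NUMBER_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def first_number(text: str) -> float:
    """Return the first number in a display string, ignoring thousands separators."""
    match = NUMBER_RE.search(text)
    if match is None:
        raise ValueError(f"no number in {text!r}")
    return float(match.group().replace(",", ""))


def wash(record: dict) -> str:
    """Keep a fixed whitelist of fields, coerce their types, and emit deterministic JSON."""
    clean = {
        "title": " ".join(record["title"].split()),
        "price": first_number(record["price_text"]),
        "rating": first_number(record["rating_text"]),
        "review_count": int(first_number(record["reviews_text"])),
    }
    return json.dumps(clean, sort_keys=True)  # stable key order across runs


print(wash(raw_record))
# {"price": 39.99, "rating": 4.5, "review_count": 469, "title": "Custom Street Sign Canvas"}
```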

In a multi-agent collaboration flow, this determinism is paramount. A typical Amazon product research agent is actually a chain of several “Narrow Agents”. One collects price and sales signals, and another retrieves historical review features via a vector database and the LangChain framework. A third evaluates competition intensity, and a final decision agent synthesizes the action plan.
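The chain of narrow agents can be sketched as small functions that hand typed records to one another. Everything below is hypothetical. Each function stands in for an agent, with the scraping call, vector-store lookup, and LLM prompt replaced by fixed values and a toy rule. The point is only that every hand-off has a fixed schema, so no downstream step has to parse free-form text.

```python
from dataclasses import dataclass


@dataclass
class MarketSignals:
    price: float
    rating: float
    review_count: int


@dataclass
class ReviewInsights:
    top_complaints: list[str]


@dataclass
class Competition:
    intensity: str  # "low", "medium" or "high"


def collect_signals(asin: str) -> MarketSignals:
    """Agent 1 (stub): would call a scraping API; values mirror the JSON in the text."""
    return MarketSignals(price=39.99, rating=4.5, review_count=469)


def retrieve_review_insights(asin: str) -> ReviewInsights:
    """Agent 2 (stub): would query a vector store of historical reviews."""
    return ReviewInsights(top_complaints=["thin plastic material", "color difference"])


def assess_competition(signals: MarketSignals) -> Competition:
    """Agent 3 (toy rule): more incumbent reviews means a harder market to enter."""
    if signals.review_count > 1000:
        return Competition("high")
    return Competition("medium" if signals.review_count > 200 else "low")


def decide(signals: MarketSignals, insights: ReviewInsights, comp: Competition) -> str:
    """Agent 4 (stub): a real decision agent would prompt an LLM with these typed inputs."""
    fixes = ", ".join(insights.top_complaints)
    return f"Enter at ~${signals.price:.2f} (competition: {comp.intensity}); fix: {fixes}"


def run_pipeline(asin: str) -> str:
    signals = collect_signals(asin)
    insights = retrieve_review_insights(asin)
    return decide(signals, insights, assess_competition(signals))


print(run_pipeline("B0EXAMPLE1"))
```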

When the first agent acquires data via the Amazon Scraper Skill, it does not receive megabytes of code. Instead, it receives a few core fields such as {"price": 39.99, "rating": 4.5, "review_count": 469}. This sharply reduces the LLM’s token consumption and markedly improves the reasoning accuracy of downstream NLP models. It also largely eliminates hallucinations caused by data parsing errors. According to feedback from veteran Amazon sellers, this workflow combining APIs with LLM analysis saves 25 hours of repetitive manual work per month and approximately $5,400 in annual operating costs. It also lets teams respond to sudden market changes within seconds.
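Simple arithmetic gives a sense of the scale of the token saving. This sketch uses the common rule of thumb of about four characters per token for English-like text, though actual tokenizers vary. It also assumes a raw page of 300,000 characters, in line with the “hundreds of thousands of characters” of HTML mentioned earlier. The page content is a placeholder, so the resulting ratio is illustrative only.

```python
import json
import math

# Rule of thumb: roughly 4 characters per token for English-like text.
# Real tokenizers vary, and markup often tokenizes less efficiently.
CHARS_PER_TOKEN = 4


def approx_tokens(text: str) -> int:
    """Order-of-magnitude token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


# Placeholder for a raw page of 300,000 characters of scripts and markup.
raw_page = "<script>track();</script>" * 12_000
payload = json.dumps({"price": 39.99, "rating": 4.5, "review_count": 469})

raw_tokens, json_tokens = approx_tokens(raw_page), approx_tokens(payload)
print(f"raw page: ~{raw_tokens:,} tokens; JSON payload: ~{json_tokens} tokens")
print(f"roughly {raw_tokens // json_tokens:,}x fewer tokens per call")
```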

Part 4: Connecting Amazon’s Omni-channel Data—Deep Application of the Multi-dimensional API Matrix

In the V5.0 report, the AI agent demonstrated “blue ocean detection” and “physical barrier construction” capabilities that transcend conventional methods. The cornerstone of this capability lies in Pangolinfo’s omni-channel API matrix, which completes the business intelligence puzzle from various entry points.

1. Breaking Static Category Limits: Deep Sniffing with Pangolinfo Niche Data

Traditional market research tools usually restrict sellers to filtering layer-by-layer within Amazon’s official Browse Node tree. However, official category settings are often lagging and struggle to map the micro-demands dynamically formed by consumers. By calling the Pangolinfo Niche Filter API, the AI agent can execute true “Blue Ocean Strategy” scanning.

This API provides evaluation across over 50 deep commercial metrics. The AI Agent does not search aimlessly but executes automated filtering based on specific algorithmic logic:

- Market Fundamentals and Health: Setting a minimum 90-day search volume (searchVolumeT90Min) and requiring a very low 360-day return rate (returnRateT360) filters out niches with scarce traffic or high quality risk.
- Anti-Monopoly and Competitive Barrier Analysis: By extracting the click share of the top 5 brands (top5BrandsClickShare) and the average brand age (avgBrandAge), the AI can determine whether a niche is tightly controlled by established giants or leaves ample room for new brands to break through.
- Real Breakthrough Rate Calculation: Most crucially, the API reports both the number of new products launched (newProductsLaunchedT360) and the number of those launches that actually succeeded (successfulLaunchesT360). Their ratio shows how often newcomers genuinely break through.

In the V5.0 report, the AI did not recommend broad red oceans like “Beach Style” for a specific reason. The Niche data it queried in the background showed an extremely low new product breakthrough rate and rising advertising costs. Conversely, long-tail scenarios such as “Family Name Signs” and “Custom Anniversary” showed stable conversion and low brand concentration.
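Filtering logic of this kind can be sketched as follows. The fields echo the metrics listed above, but the record layout, thresholds, and sample values are invented for illustration. They are not Pangolinfo response fields or figures from either report. The derived breakthrough rate is simply successful launches divided by total launches over 360 days.

```python
from dataclasses import dataclass


@dataclass
class Niche:
    """Illustrative niche record; comments give the metric names cited in the text."""
    name: str
    search_volume_t90: int          # 90-day search volume (filtered via searchVolumeT90Min)
    return_rate_t360: float         # returnRateT360
    top5_click_share: float         # top5BrandsClickShare
    avg_brand_age_years: float      # avgBrandAge
    launches_t360: int              # newProductsLaunchedT360
    successful_launches_t360: int   # successfulLaunchesT360

    @property
    def breakthrough_rate(self) -> float:
        """Share of the past 360 days' new launches that succeeded."""
        if self.launches_t360 == 0:
            return 0.0
        return self.successful_launches_t360 / self.launches_t360


# Hypothetical thresholds, not recommendations.
THRESHOLDS = {
    "min_search_volume_t90": 5_000,
    "max_return_rate": 0.05,
    "max_top5_click_share": 0.50,
    "max_avg_brand_age_years": 5.0,
    "min_breakthrough_rate": 0.15,
}


def passes(n: Niche, t: dict) -> bool:
    """True only if the niche clears every threshold."""
    return (n.search_volume_t90 >= t["min_search_volume_t90"]
            and n.return_rate_t360 <= t["max_return_rate"]
            and n.top5_click_share <= t["max_top5_click_share"]
            and n.avg_brand_age_years <= t["max_avg_brand_age_years"]
            and n.breakthrough_rate >= t["min_breakthrough_rate"])


# Invented sample values, not data from either report.
niches = [
    Niche("beach canvas art", 120_000, 0.04, 0.62, 7.5, 900, 27),
    Niche("custom family name sign", 18_000, 0.02, 0.28, 2.1, 140, 35),
]
for n in niches:
    print(f"{n.name}: breakthrough {n.breakthrough_rate:.0%}, passes={passes(n, THRESHOLDS)}")
```

A large search volume alone does not rescue the first niche: concentrated brand click share and a 3% breakthrough rate rule it out.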

2. Reshaping Consumer Emotional Perception: Structured NLP via Reviews Scraper API

Understanding consumer pain points is the core of product iteration. Traditional 200-review sample analyses, as in the V1.0 report, are highly prone to generalization bias. Once connected to the Reviews Scraper API, the AI can concurrently scrape thousands of authentic reviews in seconds. It can also use the “Verified Purchase” filter to strip out noise from fake or incentivized reviews.

| Analysis Module | NLP Sentiment Analysis Mechanism | Insight Value | Response Strategy Generation |
|---|---|---|---|
| Negative Sentiment Clustering | Automatically extracts high-frequency word clusters such as “cheap physical feel,” “dark colors,” and “logistics deformation” | Reveals true pain points and avoids conventional design blind spots | Upgrade packaging protection; emphasize side thickness and color calibration |
| Micro-Variant Tracking | Compares sentiment across sizes and colors using the “Variation Used” field | Uncovers hidden high-rating specifications | Optimize inventory ratios; phase out variants prone to bad reviews |
| High-Quality UGC Extraction | Identifies reviews from Vine Voices and reviews with many Helpful votes | Captures the authentic pain points and usage scenarios consumers share by word of mouth | Extract keywords like “healing vibe” and “modern farmhouse” to guide main image design |
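A minimal sketch of the first two modules follows, using plain keyword counting as a stand-in for real NLP clustering. The review records, field names, variant labels, and phrase list are hypothetical. The sketch drops unverified reviews, counts complaint phrases in low-rated reviews, and averages ratings per variant.

```python
from collections import Counter, defaultdict

# Hypothetical review records; real API field names may differ.
reviews = [
    {"rating": 2, "verified": True, "variant": "16x20", "text": "Cheap physical feel, dark colors"},
    {"rating": 1, "verified": True, "variant": "24x36", "text": "Arrived bent: logistics deformation"},
    {"rating": 5, "verified": True, "variant": "16x20", "text": "Such a healing vibe"},
    {"rating": 2, "verified": False, "variant": "24x36", "text": "Cheap physical feel"},
    {"rating": 4, "verified": True, "variant": "24x36", "text": "Great modern farmhouse look"},
]

# Complaint phrases to count; a real pipeline would discover these by clustering.
COMPLAINT_PHRASES = ["cheap physical feel", "dark colors", "logistics deformation"]

# 1. Keep only Verified Purchase reviews to reduce fake-review noise.
verified = [r for r in reviews if r["verified"]]

# 2. Negative sentiment clustering (simplified): count phrases in 1-2 star reviews.
complaints = Counter(
    phrase
    for r in verified
    if r["rating"] <= 2
    for phrase in COMPLAINT_PHRASES
    if phrase in r["text"].lower()
)

# 3. Micro-variant tracking: average rating per variant.
ratings_by_variant = defaultdict(list)
for r in verified:
    ratings_by_variant[r["variant"]].append(r["rating"])
avg_by_variant = {v: sum(rs) / len(rs) for v, rs in ratings_by_variant.items()}

print(complaints.most_common())
print(avg_by_variant)
```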

After obtaining the structured JSON file of reviews, the AI system not only summarizes the problems but also reverse-engineers the product’s upgrade path. Regarding the complaints about “thin plastic materials” in the V5.0 report, the AI creatively proposed improvement solutions introducing “Brush Strokes” and “Modern Farmhouse” aesthetics. This product reconstruction, originating from native consumer complaints, achieves a depth unmatched by traditional tools.

3. The Intersection of Google AI Mode and Social Media Sentiment: The Cross-Domain Vision of the SERP API

Relying only on Amazon’s in-site search data often means catching just the tail end of a demand explosion. True trend foresight comes from sentiment building up on social media across the broader internet. In the V5.0 report, the AI agent captured external search engine data via the [SERP API](https://docs.pangolinfo.com/en-api-reference/serpApi/serpAPI) and dedicated plugins.

More importantly, Google’s AI Overview (formerly SGE) module currently occupies the “Rank Zero” position for nearly a third of search results. Its coverage reaches up to 75% for informational and “How-to” queries. Through the AI SERP API, developers’ AI agents can directly capture the consensus summaries, knowledge graph entries, and citation sources that Google generates. This spares them the massive computing power needed to summarize tens of thousands of webpages themselves.

For example, when querying “2026 interior decor trends,” the summaries returned by AI Overview typically include annual color trends originating on Pinterest (e.g., muted tones like terracotta red and sage green). Combined with the UULE parameter, this API can even simulate local search results from specific places such as downtown Manhattan in New York or central London, capturing genuine regional differences in preference. This strategy fuses off-site “grassroots sentiment” with the search engine’s machine-generated “intelligent consensus”. It is what allows the V5.0 report to anticipate future trends so accurately.

4. IP Defense and Offline Layouts: Synergy between WIPO and Map Data

The Print on Demand (POD) industry is a hotbed of Intellectual Property (IP) infringement, and many sellers have had their accounts banned for blindly copying trending designs. Integrating the WIPO API into the AI agent’s workflow enables a seamless transition from creative generation to compliance auditing.

Consider design schemes such as the “Crossroads Street Sign” or “3D Embossed Family Name.” When the AI agent conceives such a scheme, the system automatically queries the World Intellectual Property Organization (WIPO) database in the background. It screens key graphic elements and phrases, such as specific quotes or brand names, globally and up front. The report marks “Infringement Risk: Extremely Low (Only involves text and generic geometric designs)”. That label is therefore not a bold creative assumption but commercial risk management grounded in compliance data.
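The screening step can be sketched as whole-word matching of design phrases against a set of registered marks. The marks below are invented and stand in for results from a trademark lookup; how the WIPO API is actually queried is not shown. Exact matching is much weaker than real clearance, which also weighs confusing similarity, goods classes, and jurisdiction. A sketch like this can therefore only flag obvious conflicts.

```python
import re

# Invented placeholder marks standing in for trademark-search results.
REGISTERED_MARKS = {"acme", "dreamhome", "home sweet home co"}


def normalize(text: str) -> str:
    """Lowercase and keep only alphanumeric runs, so punctuation cannot hide a match."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def screen_phrases(phrases: list[str], marks: set[str]) -> dict[str, list[str]]:
    """Map each design phrase to the registered marks it contains as whole words."""
    normalized_marks = {normalize(m) for m in marks}
    hits: dict[str, list[str]] = {}
    for phrase in phrases:
        padded = f" {normalize(phrase)} "
        found = sorted(m for m in normalized_marks if f" {m} " in padded)
        if found:
            hits[phrase] = found
    return hits


design_text = ["Main St & 5th Ave, 2019", "The Miller Family", "DreamHome Est. 2020"]
print(screen_phrases(design_text, REGISTERED_MARKS))
# Only "DreamHome Est. 2020" is flagged; the street names and family name pass.
```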

For brand sellers pursuing multi-channel growth, the Map Data API offers a practical way to understand the distribution of physical businesses. It can bulk-extract merchant names, ratings, business hours, and addresses from map search results. With this data, the AI agent can help evaluate the geographic distribution of offline galleries and home decor stores, providing solid support for later omni-channel brand expansion.

Part 5: Closed-loop Implementation—Connecting Real-time Dynamic Input and Multi-dimensional Collaborative Smart Workflows

However grand the strategic insights, they remain theoretical unless they can be integrated into the enterprise’s existing daily operations. The part of the V5.0 report that most impressed the client was its dynamic closed loop, running from trend mining to “on-site instant feedback and mockup generation”. This flexibility depends on a highly efficient collaborative environment.

Synergy Between AMZ Data Tracker and Customized Feishu Multi-dimensional Tables

To lower the barrier to entry for non-technical staff, Pangolinfo offers a zero-code visual tool, [AMZ Data Tracker](https://www.pangolinfo.com/amz-data-tracker/). Through a simple interface, sellers can configure tracking targets (specific ASINs, keyword rankings, or category bestseller lists) and set daily or hourly execution frequencies.

Through the customized multi-dimensional table architecture, this scraped data is automatically synced in real time to the enterprise’s private Feishu (Lark) workspace. This private deployment is designed to keep the enterprise’s most sensitive commercial secrets out of the public cloud. These include selection algorithm logic, supplier details, and conversion rate models.

Within the Feishu multi-dimensional tables, users can define sophisticated Smart Alerts. Suppose the system detects a “hidden champion” (a newly listed product with surging sales) in a tracked category. Or it sees a competitor’s core ASIN quietly change its main image or drop its price. In either case, it immediately pushes an automated notification to the operations team.
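Rules like these could be expressed in code roughly as below. This is a hypothetical sketch, not Feishu or AMZ Data Tracker configuration. The Listing fields, the 90-day age limit, and the week-over-week growth threshold are all assumptions, as is the use of an image hash to detect main-image changes.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Listing:
    """Hypothetical tracked-listing record."""
    asin: str
    launch_date: date
    weekly_units: list[int]  # estimated units sold per week, oldest first
    main_image_hash: str     # e.g. a hash of the main image file


def is_hidden_champion(item: Listing, today: date,
                       max_age_days: int = 90, min_growth: float = 1.0) -> bool:
    """New listing whose latest weekly sales grew by at least min_growth (1.0 = doubled)."""
    if (today - item.launch_date).days > max_age_days or len(item.weekly_units) < 2:
        return False
    prev, last = item.weekly_units[-2], item.weekly_units[-1]
    return prev > 0 and (last - prev) / prev >= min_growth


def main_image_changed(before: Listing, after: Listing) -> bool:
    return before.main_image_hash != after.main_image_hash


today = date(2026, 7, 1)
newcomer = Listing("B0EXAMPLE3", date(2026, 5, 20), [40, 55, 130], "img-a")
competitor_then = Listing("B0EXAMPLE4", date(2024, 3, 1), [300, 310], "img-a")
competitor_now = Listing("B0EXAMPLE4", date(2024, 3, 1), [300, 305], "img-b")

alerts = []
if is_hidden_champion(newcomer, today):
    alerts.append(f"{newcomer.asin}: possible hidden champion")
if main_image_changed(competitor_then, competitor_now):
    alerts.append(f"{competitor_now.asin}: main image changed")
print(alerts)
```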

Craft-Level Physical Delivery Constraints of Generative AI

AI image generators such as Midjourney are now widely used, so designing visually stunning decorative paintings is no longer the hard part. The real challenge lies in “Manufacturability.” The V5.0 report concludes by demonstrating how prompt strategies can steer AI toward texture designs that can actually be printed.

Early on, real-time review scraping had already revealed consumers’ core demand (a premium feel) and the logistics and craft pain points (canvas wrinkling, deformation). As a result, the AI system enforced specific physical manufacturing constraints when generating image prompts. When generating the “3D plaster texture” street sign, the prompt explicitly included "1.5 inch Gallery Wrap wooden frame visible on the side" and "flat printable surface".
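Enforcing such constraints in code keeps them from being dropped when prompts are generated at scale. In the hypothetical sketch below, pain points detected in reviews map to mandatory prompt fragments. The two fragments are the ones quoted above; the pain-point keys and the mapping are assumptions. The builder refuses to produce a prompt when a detected pain point has no defined constraint.

```python
# The two fragments are quoted from the report; the pain-point keys and the
# mapping itself are hypothetical.
CONSTRAINTS_BY_PAIN_POINT = {
    "logistics deformation": "1.5 inch Gallery Wrap wooden frame visible on the side",
    "print feasibility": "flat printable surface",
}


def build_prompt(concept: str, style_terms: list[str], pain_points: list[str]) -> str:
    """Join concept, style, and every constraint triggered by an observed pain point."""
    missing = [p for p in pain_points if p not in CONSTRAINTS_BY_PAIN_POINT]
    if missing:
        # Fail loudly rather than silently emit an unmanufacturable design.
        raise KeyError(f"no manufacturing constraint defined for {missing}")
    constraints = [CONSTRAINTS_BY_PAIN_POINT[p] for p in pain_points]
    return ", ".join([concept, *style_terms, *constraints])


prompt = build_prompt(
    "two intersecting street signs, 3D plaster texture",
    ["muted earthy tones", "high-contrast shadows"],
    ["logistics deformation", "print feasibility"],
)
print(prompt)
```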

This carefully engineered instruction makes the generative AI render high-contrast shadows that create an illusion of 3D depth. At the same time, the final image can still be inkjet-printed by a conventional 2D printer onto a flat canvas, with enough side thickness to withstand compression in transit. Here, real-time market feedback directs and constrains AI creativity, creating an engineering-level closed loop that traditional static data analysis cannot achieve.

Conclusion: Reconstructing Cognitive Boundaries and Harnessing Intelligent Dividends in the Data Flood

Synthesizing the comprehensive analysis of the 2026 Amazon POD decorative painting selection case, we can draw a definitive conclusion: In the era of Agentic Commerce, the nature of e-commerce competition has evolved from the “quantity of information acquired” to the “dimensionality, timeliness, and structured processing capability of data acquired.”

Systems relying on traditional static analysis and lagging data (like the V1.0 report) will inevitably push sellers into homogenized competition and price wars. Conversely, AI systems connected to modern real-time API matrices such as Pangolinfo’s (like the V5.0 report) gain a decisive commercial advantage. They solve anti-bot and data-cleaning challenges via the Amazon Scraper Skill, and they pierce surface-level data to find blue ocean metrics with Niche Data. They reshape the understanding of consumer emotions via the Amazon Reviews API. Finally, they achieve cross-domain foresight and IP compliance defense through the AIO API and WIPO API.

Connecting to omni-channel real-time data is not merely about making a selection report look more detailed. Its fundamental significance lies in giving AI agents the “visual perception” and “tactile feedback” they need to interact with the real world at high frequency. The underlying technology ecosystem will continue to mature. Cross-border e-commerce enterprises that first integrate real-time structured data streams seamlessly with LLM reasoning, and build highly automated operational loops safeguarded against physical risks, will be best positioned in future global competition. They will be able to seize the strategic initiative and keep reaping outsized returns from algorithms and insights.