Article Summary

This guide covers five high-impact Open Claw cross-border e-commerce use cases: competitor monitoring, intelligent product research, review analysis, advertising keyword intelligence, and multi-platform inventory aggregation. Each scenario includes technical implementation details, Python code examples, and integration guidance with Pangolinfo’s real-time data API to help Amazon sellers deploy AI agent automation workflows quickly and at scale.

Bottom Line First: 5 Open Claw Cross-Border E-Commerce Use Cases Worth Implementing Today

Before diving into details, here’s what matters most. These five Open Claw cross-border e-commerce applications deliver measurable ROI and can be deployed with the technical resources most seller teams already have:

① Real-Time Competitor Monitoring: Query competitor BSR trends, price movements, and promotional patterns via natural language instead of manually refreshing spreadsheets across a dozen tabs every morning.

② AI-Powered Product Research: Let agents auto-pull new releases, bestseller lists, and category data, combine them with LLM analysis, and output structured product research reports while your team focuses on sourcing decisions.

③ Review Intelligence & Listing Optimization: Batch-collect competitor negative reviews, extract recurring pain points automatically, and feed those insights directly into product iteration and listing copy generation.

④ Advertising Keyword & SERP Intelligence: Unify search results page ad position data, organic keyword rankings, and SP ad performance metrics in a single agent workflow for data-driven bidding strategy recommendations.

⑤ Multi-Platform Inventory & Sales Aggregation: From Amazon to Walmart to Shopee, use one AI interface to pull inventory levels, daily sales velocity, and pricing data across platforms, generating a prioritized restocking recommendation in seconds.

Every one of these use cases depends on the same foundation: reliable, real-time, structured e-commerce data APIs. Without dependable data sources, even the most sophisticated AI agent frameworks are just inference engines running on outdated or fabricated information. The sections below break down each scenario’s technical architecture, implementation details, and why Pangolinfo’s data infrastructure is the production-grade choice for powering Open Claw cross-border e-commerce deployments.

The Cross-Border E-Commerce Data Problem Nobody Talks About

Most Amazon sellers subscribe to an arsenal of SaaS tools (Helium 10, Jungle Scout, DataDive, Perpetua), each doing one thing reasonably well. But here’s what nobody tells you when you’re stacking up subscriptions: tools that don’t communicate with each other create invisible cognitive overhead. Every time you need to answer a real business question, such as “Should we increase ad spend on this ASIN right now?”, you become the integration layer between five different dashboards: pulling numbers from each, reconciling discrepancies, and then making a call on data that may already be stale.

This isn’t a problem of intelligence or effort. It’s a structural flaw in how traditional seller tools were built: each tool is designed to be a data destination, not a data pipeline. You get information, but you have no efficient way to transform it into decisions at the speed markets actually move. During peak-season price wars, a competitor can cut prices 30% at 2 AM and start pulling away your sales (or, on a shared listing, win the Buy Box) before your team logs in at 9. Traditional monitoring workflows simply aren’t fast enough to compete at that level.

There’s a second, less obvious problem that emerges when sellers turn to AI assistants hoping for help: large language model hallucination. Ask GPT-4 or Claude about “the current top 10 ASINs in wireless earbuds on Amazon US” and you’ll get a response that sounds authoritative and is almost certainly wrong, because LLMs have training data cutoffs while Amazon marketplace data changes by the hour. BSR can swing by hundreds of positions within a single day. Pricing, availability, review counts: all of it is live, ephemeral data that no model with a fixed training cutoff can answer reliably.

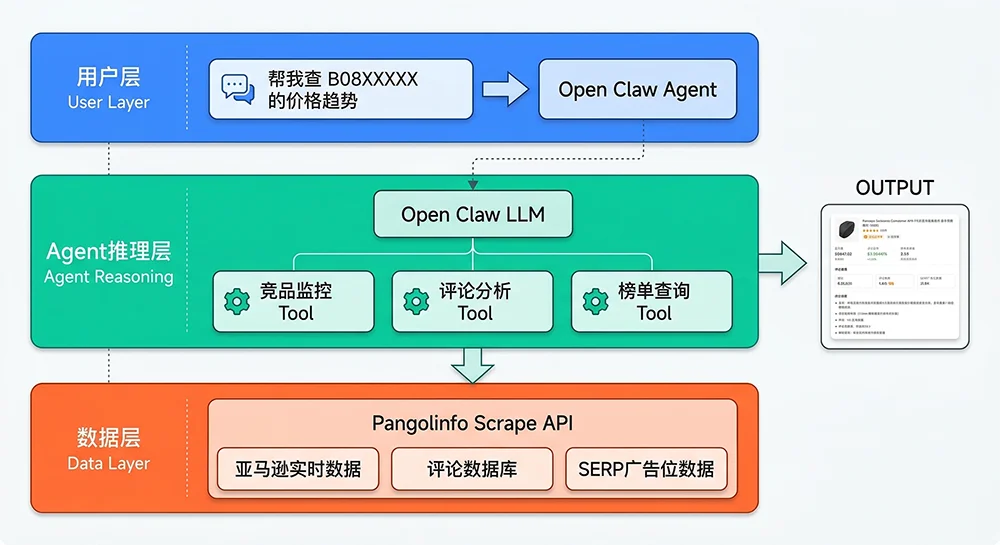

Open Claw addresses both problems through its tool-calling architecture. Rather than storing or guessing at data, it routes LLM reasoning toward live external APIs—real-time market data, structured and fresh, pulled at query time. The model thinks; the API fetches; the agent synthesizes. The result is a workflow where sellers interact with current data through natural language, without needing to know which endpoint to call or how to parse the response format.

Why AI Agents Outperform Traditional Tools for Cross-Border E-Commerce Data Workflows

To understand where Open Claw fits in a cross-border seller’s tech stack, it helps to map how data moves through traditional versus agent-based workflows.

In the traditional model: Amazon Platform → third-party data vendor’s database (cached, updated every 1-3 days) → SaaS dashboard UI → seller reads charts → human analysis → decision. The seller receives secondhand data, no cross-source correlation is possible without manual effort, and every query requires knowing which tool to open and how to configure the filter settings.

In the Open Claw model: Seller asks natural language question → Open Claw agent (LLM reasoning layer) → real-time call to Pangolinfo Scrape API (minute-level freshness) → structured JSON returned → agent parses, corroborates, synthesizes → actionable natural language conclusion delivered back to seller. Data never sits stale. No dashboard navigation is required. Cross-source analysis (e.g., correlating BSR changes with review velocity) happens within a single agent workflow.
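
To make the “model thinks, API fetches, agent synthesizes” loop concrete, here is a minimal Python sketch of a generic tool-calling agent loop. It is not Open Claw’s API: `run_agent`, `ModelTurn`, `ToolCall`, the scripted model, and the stubbed `fake_asin_lookup` are hypothetical stand-ins so the control flow runs without an LLM or a Pangolinfo key.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class ToolCall:
    name: str
    arguments: dict


@dataclass
class ModelTurn:
    # The model either requests a tool call or returns a final answer
    tool_call: Optional[ToolCall] = None
    answer: Optional[str] = None


def run_agent(question: str,
              model: Callable[[List[dict]], ModelTurn],
              tools: Dict[str, Callable[..., dict]],
              max_steps: int = 5) -> str:
    """Think (model) -> fetch (tool) -> synthesize (model) until an answer appears."""
    transcript: List[dict] = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        turn = model(transcript)
        if turn.answer is not None:
            return turn.answer
        call = turn.tool_call
        if call is None or call.name not in tools:
            transcript.append({"role": "tool", "content": {"error": "unknown tool"}})
            continue
        result = tools[call.name](**call.arguments)  # data fetched at query time
        transcript.append({"role": "tool", "name": call.name, "content": result})
    return "Stopped: step limit reached without a final answer."


# --- Hypothetical stand-ins so the loop runs offline ---
def scripted_model(transcript: List[dict]) -> ModelTurn:
    last = transcript[-1]
    if last["role"] == "user":
        return ModelTurn(tool_call=ToolCall("get_asin_current_data", {"asin": "B08N5WRWNW"}))
    return ModelTurn(answer=f"B08N5WRWNW is currently ${last['content']['price']}.")


def fake_asin_lookup(asin: str, marketplace: str = "US") -> dict:
    return {"asin": asin, "marketplace": marketplace, "price": 27.99, "bsr": 412}


print(run_agent("What does B08N5WRWNW cost right now?",
                scripted_model, {"get_asin_current_data": fake_asin_lookup}))
# prints: B08N5WRWNW is currently $27.99.
```

In a real deployment the scripted model would be an LLM call that emits tool calls, and the stub would be a Pangolinfo-backed function such as the `get_asin_current_data` tool shown later in this guide. The `max_steps` cap keeps a confused model from looping indefinitely.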

The performance gap between these two approaches scales with market volatility. In stable categories with slow-moving competitors, traditional tools are adequate. In fast-moving categories—electronics, seasonal products, trending niches—the agent-based approach isn’t just incrementally better; it operates in a fundamentally different time domain that traditional tools can’t match.

Open Claw vs. Traditional Tools: A Comparison Framework

Four dimensions matter most when comparing Open Claw’s cross-border e-commerce capabilities against conventional tools:

Data freshness: Traditional SaaS tools typically refresh data every 24-72 hours. Open Claw connected to Pangolinfo’s scraping infrastructure delivers data at query time, with sub-minute latency on most Amazon product endpoints. For time-sensitive decisions like promotional response or inventory reorder trigger points, this gap is decisive.

Query flexibility: Traditional tools answer predefined questions via fixed dashboard widgets. Open Claw agents answer arbitrary natural language questions by dynamically selecting and combining the right API calls. “Which of our top 20 ASINs by revenue has the highest review-to-BSR improvement ratio this month?” is a query no standard tool dashboard was designed to answer—but it’s trivial to formulate for an agent with the right data tools.

Integration depth: Traditional tools sit outside your workflow, requiring manual context-switching. Open Claw agents embed into Slack, DingTalk, Feishu, or any webhook-capable communication platform. Your team asks questions where they already work; answers appear in context without app-switching.

Customization ceiling: SaaS tools evolve on vendor roadmaps. Open Claw’s architecture lets you define custom tools, custom workflows, and custom output formats—adapting precisely to your team’s unique analytical needs rather than working around a vendor’s fixed feature set.

Open Claw Cross-Border E-Commerce: Five Use Cases in Depth

Use Case 1: Real-Time Competitor Monitoring — Replace Daily Spreadsheet Updates with a 30-Second Query

Most seller operations teams have a version of the same spreadsheet: a tab for each major competitor, updated every morning by someone manually checking BSR, price, review count, and Buy Box status across 15-30 ASINs. On a good day, this takes 45 minutes. On a bad day—when someone quits, when the spreadsheet formula breaks, when nobody remembered to check—critical competitive intelligence goes unnoticed until the damage is done.

Open Claw cross-border e-commerce competitor monitoring replaces this workflow fundamentally. A seller’s operations team sets up a monitoring agent with a curated ASIN watchlist and a set of alert thresholds—say, price drops exceeding 12%, BSR improvement of more than 300 positions in 24 hours, or inventory going out of stock. The agent queries Pangolinfo Scrape API on a scheduled cadence (every 2-4 hours is common), compares current data against baselines, and triggers Slack notifications only when meaningful changes are detected.

The practical outcome: instead of spending 45 minutes every morning manually checking what hasn’t changed, your team receives targeted alerts when something actually matters. The cognitive load of surveillance shifts from humans to the agent, and human attention goes where it should: deciding how to respond to the signals the agent surfaces.

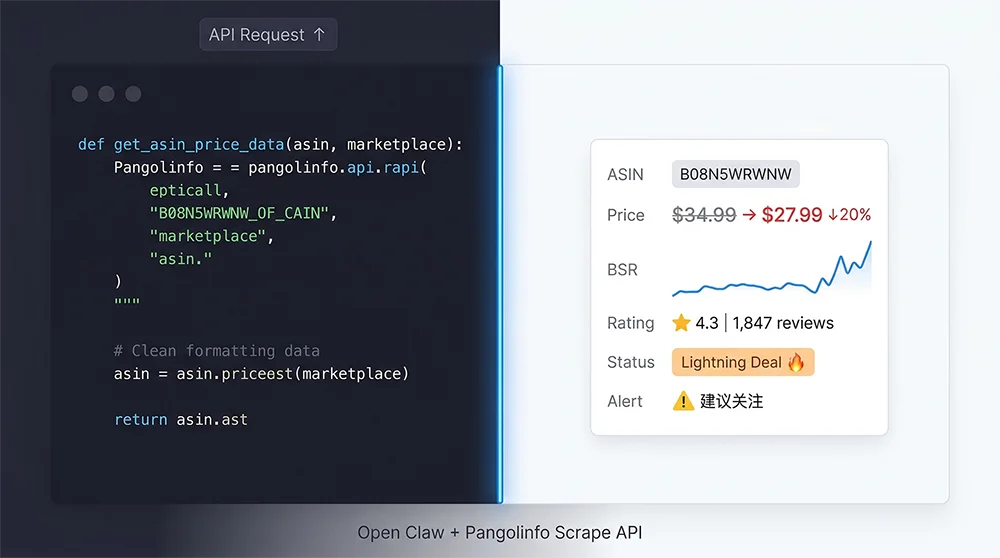

For teams with more complex needs, the monitoring workflow can be extended to include historical trend analysis. When an alert fires, the agent doesn’t just report the current price—it contextualizes it: “ASIN B08XXXXXX dropped from $34.99 to $27.99 (20.0% decrease). This is the lowest price point in 90 days, 15% below its 30-day average. Two similar events in the past 6 months preceded BSR improvements of 200+ positions within 48 hours. Recommend reviewing your pricing on competing ASINs B09AAAAAA and B09BBBBBB.”
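
The contextual figures in that example alert (percentage drop, 90-day low, distance from the 30-day average) are simple arithmetic over a price history. A minimal sketch, assuming the agent can obtain a list of daily prices for the ASIN, oldest first; `price_drop_context` and that history format are hypothetical, not part of Pangolinfo’s documented API.

```python
from statistics import mean
from typing import Dict, List, Union


def price_drop_context(history: List[float], previous: float,
                       current: float) -> Dict[str, Union[float, bool]]:
    """
    history: daily prices for the ASIN, oldest first (assumed to cover ~90 days).
    previous: the price before the drop; current: the new price.
    """
    if not history or previous <= 0:
        raise ValueError("need a non-empty history and a positive previous price")
    avg_30 = mean(history[-30:])   # average of the last 30 daily observations
    low_90 = min(history[-90:])    # lowest price in the 90-day window
    return {
        "drop_pct": round((previous - current) / previous * 100, 1),
        "pct_below_30d_avg": round((avg_30 - current) / avg_30 * 100, 1),
        "lowest_in_90d": current < low_90,
    }
```

With a flat $34.99 history and a new price of $27.99, this reports a 20.0% drop, 20.0% below the 30-day average, and a new 90-day low; the agent’s LLM step then turns those numbers into the narrative alert.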

```python
# Open Claw Cross-Border E-Commerce: Competitor Monitoring Tool
# Integrates with Pangolinfo Scrape API for real-time ASIN data

from datetime import datetime, timezone
from typing import Optional

import requests

PANGOLINFO_API_KEY = "your_api_key_here"
API_BASE = "https://api.pangolinfo.com/v1"


def get_asin_current_data(asin: str, marketplace: str = "US") -> dict:
    """
    Fetch real-time data for a single ASIN via Pangolinfo Scrape API.
    Registered as an Open Claw MCP Tool for agent use.

    Args:
        asin: Amazon Standard Identification Number (e.g., B08N5WRWNW)
        marketplace: Two-letter marketplace code (US, UK, DE, JP, CA, etc.)

    Returns:
        Structured dict with price, BSR, rating, review_count, availability
    """
    headers = {
        "Authorization": f"Bearer {PANGOLINFO_API_KEY}",
        "Content-Type": "application/json",
    }
    payload = {
        "source": "amazon_product",
        "asin": asin,
        "marketplace": marketplace,
        "fields": [
            "price", "original_price", "currency",
            "bsr", "bsr_category", "bsr_subcategory",
            "rating", "review_count",
            "availability", "seller_type",
            "deal_type", "deal_end_time",  # capture Lightning Deals
        ],
        "output_format": "json",
    }

    try:
        response = requests.post(f"{API_BASE}/scrape", headers=headers,
                                 json=payload, timeout=30)
        response.raise_for_status()
        data = response.json()
    except requests.exceptions.Timeout:
        return {"success": False, "error": "Request timed out", "asin": asin}
    except (requests.exceptions.RequestException, ValueError) as e:
        # HTTP error status, connection failure, or a body that is not valid JSON
        return {"success": False, "error": str(e), "asin": asin}

    return {
        "success": True,
        "asin": asin,
        "marketplace": marketplace,
        "fetched_at": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
        "price": data.get("price"),
        "original_price": data.get("original_price"),
        "discount_pct": _calc_discount(data.get("original_price"), data.get("price")),
        "currency": data.get("currency", "USD"),
        "bsr": data.get("bsr"),
        "bsr_category": data.get("bsr_category"),
        "rating": data.get("rating"),
        "review_count": data.get("review_count"),
        "availability": data.get("availability"),
        "seller_type": data.get("seller_type"),
        "active_deal": data.get("deal_type"),
    }


def _calc_discount(original: Optional[float], current: Optional[float]) -> Optional[float]:
    """Percentage drop from `original` to `current`, or None if either is missing."""
    if original and current and original > 0:
        return round((original - current) / original * 100, 1)
    return None


def batch_monitor_watchlist(asin_list: list,
                            baseline_data: dict,
                            alert_config: dict) -> list:
    """
    Monitor a list of competitor ASINs against historical baselines.

    baseline_data maps ASIN -> last known snapshot, e.g. {"price": 34.99, "bsr": 1200}

    alert_config example:
    {
        "price_drop_threshold_pct": 12,
        "bsr_improvement_threshold": 300,
        "notify_on_deal_detected": True,
        "notify_on_out_of_stock": True
    }
    """
    alerts = []
    for asin in asin_list:
        current = get_asin_current_data(asin)
        if not current.get("success"):
            continue  # skip failed fetches; log them in production
        baseline = baseline_data.get(asin, {})

        # Price drop alert: measured against the stored baseline price when one
        # exists, otherwise against the listed original (strike-through) price
        reference_price = baseline.get("price") or current.get("original_price")
        drop_pct = _calc_discount(reference_price, current.get("price"))
        if drop_pct is not None and drop_pct > alert_config.get("price_drop_threshold_pct", 12):
            alerts.append({
                "asin": asin,
                "alert_type": "significant_price_drop",
                "severity": "high",
                "detail": f"Price dropped {drop_pct}% "
                          f"(from ${reference_price} to ${current.get('price')})",
                "current_data": current,
            })

        # BSR improvement alert (a lower BSR number means a better rank)
        baseline_bsr = baseline.get("bsr")
        current_bsr = current.get("bsr")
        if baseline_bsr and current_bsr:
            improvement = baseline_bsr - current_bsr
            if improvement > alert_config.get("bsr_improvement_threshold", 300):
                alerts.append({
                    "asin": asin,
                    "alert_type": "bsr_significant_improvement",
                    "severity": "medium",
                    "detail": f"BSR improved by {improvement} positions "
                              f"({baseline_bsr} → {current_bsr})",
                    "current_data": current,
                })

        # Lightning Deal / promotion alert
        if alert_config.get("notify_on_deal_detected") and current.get("active_deal"):
            alerts.append({
                "asin": asin,
                "alert_type": "active_promotion_detected",
                "severity": "high",
                "detail": f"Active deal detected: {current['active_deal']}",
                "current_data": current,
            })

        # Out-of-stock alert; the availability wording matched here is an
        # assumption and may differ by marketplace
        availability = str(current.get("availability") or "").lower()
        if (alert_config.get("notify_on_out_of_stock")
                and ("out of stock" in availability or "unavailable" in availability)):
            alerts.append({
                "asin": asin,
                "alert_type": "out_of_stock",
                "severity": "high",
                "detail": f"Listing appears unavailable: {current.get('availability')}",
                "current_data": current,
            })

    return alerts


# MCP Tool definition for Open Claw agent registration
COMPETITOR_MONITOR_TOOL = {
    "name": "get_asin_current_data",
    "description": (
        "Fetch real-time product data for an Amazon ASIN including price, BSR ranking, "
        "rating, review count, and availability. Use this when the user asks about "
        "current competitor product status, pricing, or ranking. Do NOT use for "
        "historical trend analysis (use the historical data tool instead)."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "asin": {
                "type": "string",
                "description": "Amazon ASIN, exactly 10 characters (e.g., B08N5WRWNW)",
            },
            "marketplace": {
                "type": "string",
                "enum": ["US", "UK", "DE", "JP", "CA", "FR", "IT", "ES", "AU", "IN"],
                "description": "Amazon marketplace code. Default is US.",
                "default": "US",
            },
        },
        "required": ["asin"],
    },
}
```

Use Case 2: AI-Powered Product Research — From Bestseller Lists to Sourcing Decisions in Minutes

Product research is where most seller teams spend a disproportionate amount of time relative to the outcome quality they achieve. The traditional process—manually browsing category bestseller pages, filtering by estimated monthly sales, checking review counts, eyeballing competition intensity, cross-referencing with supplier databases—typically consumes 2-3 full working days per research cycle. And after all that effort, the results are only as current as the last time someone ran through the process, which means opportunities identified on Monday may have shifted significantly by Thursday when sourcing decisions are finally made.

Open Claw cross-border e-commerce product research transforms this workflow from episodic manual effort into a continuous, automated intelligence feed. The setup: define your product criteria as a structured JSON config (minimum monthly sales estimate, maximum review count for top competitors, price range, acceptable BSR range, prohibited categories). Attach that config to a research agent with access to Pangolinfo’s category bestseller and new releases data endpoints. Schedule it to run weekly or trigger it on demand.

When the agent runs, it pulls the top 100 ASINs from target category pages, filters against your criteria in a batch evaluation pass, queries detailed data for candidates that survive initial screening, and then passes the shortlist to the LLM for opportunity analysis and narrative summary. The output is a formatted product research report with ranked candidates, each accompanied by a 200-250 word opportunity assessment covering market saturation, differentiation potential, and risk factors. Your team reviews and refines; sourcing outreach begins within hours rather than days.
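
The batch screening pass described above can be sketched as a plain filter over bestseller rows, run before any expensive detail lookups or LLM calls. This is a minimal sketch under stated assumptions: the criteria field names (`min_monthly_sales`, `max_top_competitor_reviews`, and so on) and the row keys (`est_monthly_sales`, `review_count`, `category`) are hypothetical, and the review cap is applied per listing as a simplification of “maximum review count for top competitors.”

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class ResearchCriteria:
    # Hypothetical field names mirroring the criteria described above
    min_monthly_sales: int = 300
    max_top_competitor_reviews: int = 500
    price_range: Tuple[float, float] = (15.0, 60.0)
    bsr_range: Tuple[int, int] = (1, 20000)
    prohibited_categories: Set[str] = field(default_factory=set)


def criteria_from_json(text: str) -> ResearchCriteria:
    """Load the JSON config; JSON arrays become tuples/sets to match the dataclass."""
    raw = json.loads(text)
    for key in ("price_range", "bsr_range"):
        if key in raw:
            raw[key] = tuple(raw[key])
    if "prohibited_categories" in raw:
        raw["prohibited_categories"] = set(raw["prohibited_categories"])
    return ResearchCriteria(**raw)


def screen_candidates(listings: List[Dict], c: ResearchCriteria) -> List[Dict]:
    """Cheap first-pass filter; survivors get detailed lookups and LLM analysis later."""
    survivors = []
    for item in listings:
        price, bsr = item.get("price"), item.get("bsr")
        if price is None or bsr is None:
            continue  # drop incomplete rows instead of guessing
        if item.get("category") in c.prohibited_categories:
            continue
        if not c.price_range[0] <= price <= c.price_range[1]:
            continue
        if not c.bsr_range[0] <= bsr <= c.bsr_range[1]:
            continue
        if item.get("est_monthly_sales", 0) < c.min_monthly_sales:
            continue
        if item.get("review_count", 0) > c.max_top_competitor_reviews:
            continue
        survivors.append(item)
    # Highest estimated demand first; fewer existing reviews breaks ties
    return sorted(survivors,
                  key=lambda i: (-i.get("est_monthly_sales", 0), i.get("review_count", 0)))
```

Keeping this step deterministic means the LLM only sees a short, pre-qualified list, which keeps token costs and the risk of the model inventing candidates low.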

The combination of Pangolinfo Scrape API’s new releases and bestseller endpoints with Open Claw’s multi-step agent chains turns product research from a manual, time-boxed process into a continuously running background intelligence system, something that was impractical to build at reasonable cost before real-time e-commerce data became available through APIs at production scale.

Use Case 3: Review Intelligence — Mining Competitor Weaknesses at Scale

Amazon reviews are among the most candid market research data available to e-commerce sellers: direct voice-of-customer feedback at scale. A competitor listing with 4,000 reviews holds an extraordinarily detailed map of what buyers love, what they hate, what they wish were different, and what they care about enough to write 300 words explaining. The problem has never been the data’s existence; it’s been the cost of extracting signal from that volume of text manually.

An Open Claw cross-border e-commerce review analysis workflow changes this calculus entirely. Using Pangolinfo’s Reviews Scraper API—which supports star-rating filtering, chronological sorting, verified purchase flag extraction, and complete Customer Says field capture—an agent can collect 200-500 relevant reviews, preprocess them to extract the highest-signal content (title, rating, first 250 characters of body text, helpful vote count), and pass that condensed dataset to an LLM for thematic analysis.

The LLM output structures findings into three actionable categories: pain points appearing in 3-star-and-below reviews (what the product fails at, ranked by frequency), positive attributes consistently cited in 4-5 star reviews (what buyers value most), and product improvement opportunities (specific, actionable changes that would address the most common complaints). This analysis, which would take a skilled analyst 3-4 hours to produce manually, completes in under 2 minutes with the agent workflow.

The downstream applications multiply the value further. Review pain points feed directly into listing copywriting—Bullet Points that explicitly address the weaknesses buyers complain about in competitor products. Product iteration teams get a ranked feature wish list. Customer service teams learn the questions buyers have before purchasing, informing proactive FAQ content. And review velocity monitoring via scheduled agent jobs creates an early warning system for product quality issues before they accumulate into a ratings crisis.
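
Review velocity monitoring can start very simply: bucket reviews by ISO week and watch the share of 1-3 star reviews. A minimal sketch, assuming reviews arrive as `(date, rating)` pairs parsed from the review data the agent collects; the 15-point margin and 10-review minimum are illustrative defaults, not recommendations from Pangolinfo or Amazon.

```python
from collections import Counter
from datetime import date
from typing import Dict, List, Tuple

Week = Tuple[int, int]  # (ISO year, ISO week number)


def weekly_negative_share(reviews: List[Tuple[date, int]]) -> Dict[Week, Tuple[int, float]]:
    """Map each ISO week to (review count, share of 1-3 star reviews)."""
    totals: Counter = Counter()
    negatives: Counter = Counter()
    for review_date, rating in reviews:
        week = tuple(review_date.isocalendar())[:2]
        totals[week] += 1
        if rating <= 3:
            negatives[week] += 1
    return {w: (totals[w], negatives[w] / totals[w]) for w in sorted(totals)}


def quality_alert(reviews: List[Tuple[date, int]],
                  min_reviews: int = 10, margin: float = 0.15) -> bool:
    """True when the latest week's negative share exceeds the average of the
    earlier weeks by more than `margin` (15 percentage points by default)."""
    weeks = list(weekly_negative_share(reviews).values())
    if len(weeks) < 2:
        return False  # no baseline to compare against
    *history, (latest_count, latest_share) = weeks
    if latest_count < min_reviews:
        return False  # too few new reviews to trust the signal
    baseline = sum(share for _, share in history) / len(history)
    return latest_share > baseline + margin
```

Run on a schedule, a `True` result is a cue to pull that week’s negative reviews into the thematic analysis workflow before the rating average visibly moves.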

```python
# Open Claw Review Intelligence: Competitor Analysis Workflow
# Uses Pangolinfo Reviews Scraper API + LLM thematic analysis

from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List

import requests
from anthropic import Anthropic

PANGOLINFO_API_KEY = "your_api_key_here"
client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment


@dataclass
class ReviewRecord:
    rating: int
    title: str
    body_excerpt: str  # first 250 chars
    helpful_votes: int
    verified_purchase: bool
    date: str


def fetch_reviews_for_analysis(asin: str,
                               max_reviews: int = 300,
                               min_rating: int = 1,
                               max_rating: int = 5,
                               max_pages: int = 50) -> List[ReviewRecord]:
    """
    Fetch Amazon reviews via Pangolinfo Reviews Scraper API.
    Filters by star rating client-side and preprocesses for LLM consumption.
    """
    headers = {"Authorization": f"Bearer {PANGOLINFO_API_KEY}"}
    all_reviews: List[ReviewRecord] = []

    for page in range(1, max_pages + 1):  # page cap prevents endless paging
        params = {
            "asin": asin,
            "marketplace": "US",
            "page": page,
            "sort_by": "helpful",     # harvest highest-value reviews first
            "verified_only": False,   # include all for comprehensive view
            "output_format": "json",
        }
        response = requests.get("https://api.pangolinfo.com/v1/reviews",
                                headers=headers, params=params, timeout=30)
        response.raise_for_status()
        reviews = response.json().get("reviews", [])
        if not reviews:
            break  # no more pages

        for r in reviews:
            rating = r.get("rating", 0)
            if min_rating <= rating <= max_rating:
                all_reviews.append(ReviewRecord(
                    rating=rating,
                    title=r.get("title", ""),
                    body_excerpt=(r.get("body") or "")[:250],
                    helpful_votes=r.get("helpful_votes", 0),
                    verified_purchase=r.get("verified_purchase", False),
                    date=r.get("date", ""),
                ))

        if len(all_reviews) >= max_reviews:
            break

    return all_reviews[:max_reviews]


def generate_competitive_review_intelligence(asin: str,
                                             product_category: str,
                                             your_product_notes: str = "") -> dict:
    """
    Complete review intelligence pipeline:
    1. Fetch competitor reviews via Pangolinfo API
    2. Separate into positive / negative pools
    3. LLM thematic analysis
    4. Output actionable intelligence report
    """
    print(f"Fetching reviews for ASIN: {asin}...")

    # Get negative reviews (1-3 stars) for pain point analysis
    negative_reviews = fetch_reviews_for_analysis(asin, max_reviews=150,
                                                  min_rating=1, max_rating=3)
    # Get positive reviews (4-5 stars) for value proposition analysis
    positive_reviews = fetch_reviews_for_analysis(asin, max_reviews=100,
                                                  min_rating=4, max_rating=5)
    print(f"Fetched {len(negative_reviews)} negative, {len(positive_reviews)} positive reviews")

    # Format for LLM: most helpful first, capped to keep the prompt compact
    neg_text = "\n".join(
        f"[{r.rating}★ | Helpful: {r.helpful_votes}] {r.title}: {r.body_excerpt}"
        for r in sorted(negative_reviews, key=lambda x: -x.helpful_votes)[:80]
    )
    pos_text = "\n".join(
        f"[{r.rating}★ | Helpful: {r.helpful_votes}] {r.title}: {r.body_excerpt}"
        for r in sorted(positive_reviews, key=lambda x: -x.helpful_votes)[:50]
    )
    notes_line = (f"Notes about our competing product: {your_product_notes}"
                  if your_product_notes else "")

    analysis_prompt = f"""
You are a senior e-commerce product strategist analyzing Amazon customer reviews
for competitive intelligence.

Product Category: {product_category}
Total negative reviews analyzed: {len(negative_reviews)}
Total positive reviews analyzed: {len(positive_reviews)}
{notes_line}

NEGATIVE REVIEW DATA (sorted by helpful votes):
{neg_text}

POSITIVE REVIEW DATA (sorted by helpful votes):
{pos_text}

Please provide:

## 1. Pain Points Map (Top 10 complaints, ranked by frequency/severity)
For each: complaint description, estimated frequency %, severity rating (1-5),
and whether it represents a design/quality/expectation/shipping issue.

## 2. Value Drivers (Top 5 attributes buyers praise most)
For each: attribute description, why buyers value it, how often mentioned.

## 3. Product Improvement Opportunity Matrix
Specific, actionable product improvements that would directly address the
top 5 pain points. Include estimated difficulty (Easy/Medium/Hard) and
potential impact on conversion rate.

## 4. Listing Optimization Recommendations
3-5 specific Bullet Point recommendations for a competing product that
positions against these pain points. Write them in the seller's voice.

## 5. Risk Signals
Any patterns suggesting systemic product quality issues, safety concerns,
or regulatory compliance risks that should be flagged before sourcing.
"""

    response = client.messages.create(
        model="claude-3-7-sonnet-20250219",
        max_tokens=3000,
        messages=[{"role": "user", "content": analysis_prompt}],
    )

    return {
        "asin": asin,
        "category": product_category,
        "reviews_analyzed": {
            "negative": len(negative_reviews),
            "positive": len(positive_reviews),
        },
        "intelligence_report": response.content[0].text,
        "generated_at": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
    }
```

Use Case 4: Advertising Intelligence — Connecting SERP Data to Bidding Strategy

Amazon advertising optimization is one of the most data-intensive disciplines in e-commerce operations, and paradoxically, one of the least well-served by existing tooling when it comes to cross-referencing competitive context with owned campaign data. Your advertising console tells you your ACoS, your spend, your sales attribution. What it doesn’t tell you with any granularity: who specifically is outbidding you for Sponsored Product positions, how their presence has changed week-over-week, and whether their pricing strategy is creating a structural disadvantage for your keyword economics.

This cross-referencing is exactly where an Open Claw agent powered by Pangolinfo’s SERP data delivers outsized value. Pangolinfo’s advertising data capture, including SP ad position data for which Pangolinfo reports a 98% capture rate, enables agents to query which ASINs are occupying the top ad positions for your target keywords, how that competitive landscape has shifted over recent periods, and how advertised ASINs’ pricing compares to your own. Combined with the ACoS trends from your own campaign reports, an agent can synthesize a coherent picture of whether your current keyword strategy needs adjustment.

The interaction model is natural: “Check who’s running ads for ‘wireless earbuds under $30’ on Amazon US right now, and compare it to last week. Have any new competitors entered the top positions? What are their price points?” The agent queries the SERP data API, formats the comparison, and returns a concise competitive intelligence brief. A task that previously required manual SERP screenshots, spreadsheet tabulation, and 30-90 minutes of analysis shrinks to a 45-second agent workflow.
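
The week-over-week comparison in that query reduces to a diff of two SERP snapshots. A minimal sketch, assuming the SERP tool returns sponsored slots as dicts with `asin`, `position`, and `price` keys; that shape and `compare_ad_snapshots` itself are hypothetical, not Pangolinfo’s documented response format.

```python
from typing import Dict, List, Optional


def compare_ad_snapshots(last_week: List[Dict], this_week: List[Dict],
                         top_n: int = 4, my_price: Optional[float] = None) -> Dict:
    """
    Each snapshot is a list of {"asin", "position", "price"} dicts for the
    sponsored slots on one keyword's results page (an assumed shape).
    """
    prev = {ad["asin"]: ad for ad in last_week if ad["position"] <= top_n}
    curr = {ad["asin"]: ad for ad in this_week if ad["position"] <= top_n}

    new_entrants = []
    for asin, ad in curr.items():
        if asin not in prev:
            entry = {"asin": asin, "position": ad["position"], "price": ad.get("price")}
            if my_price is not None and ad.get("price") is not None:
                entry["price_gap_vs_ours"] = round(ad["price"] - my_price, 2)
            new_entrants.append(entry)

    return {
        "new_entrants": sorted(new_entrants, key=lambda e: e["position"]),
        "dropped_out": sorted(a for a in prev if a not in curr),
        # Positive value = moved up (a smaller position number is better)
        "position_changes": {a: prev[a]["position"] - curr[a]["position"]
                             for a in curr
                             if a in prev and prev[a]["position"] != curr[a]["position"]},
    }
```

The structured diff is what the agent hands to the LLM; the model only has to narrate changes that were computed deterministically, rather than spotting them itself.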

Use Case 5: Multi-Platform Inventory Aggregation — One AI Interface for All Your Marketplaces

Multi-marketplace selling has become the norm for sellers with meaningful scale, but the operational complexity it introduces is rarely discussed honestly. Running concurrent operations across Amazon, Walmart, Shopee, and other platforms means maintaining separate logins, separate dashboards, separate export processes, and separate mental models of each marketplace’s business pulse. The synthesis of all these data streams into a coherent multi-platform picture typically happens in a weekly sync meeting—by which point inventory decisions may already be overdue.

Open Claw’s architecture handles multi-platform aggregation through its multi-tool workflow capability: a single agent query can trigger parallel calls to several platform data sources, collect the structured responses, and synthesize them into a unified answer. “Give me our wireless earbuds SKU inventory status and 7-day average daily units sold across all platforms, ranked by urgency for restock” becomes a single agent task that runs in parallel across platforms and returns a prioritized restocking recommendation within a minute.
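
Here is one way the parallel fan-out and urgency ranking could look, using only the standard library. It is a sketch under stated assumptions: each platform fetcher is a hypothetical callable returning `units_on_hand` and `units_sold_7d` for a SKU, and urgency is ranked by days of cover (units on hand divided by the 7-day average daily sales), compared against an illustrative 30-day restock lead time.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def days_of_cover(units_on_hand: int, avg_daily_units: float) -> float:
    """Days until stockout at the current sales pace (inf if nothing is selling)."""
    return float("inf") if avg_daily_units <= 0 else units_on_hand / avg_daily_units


def restock_priorities(sku: str, fetchers: Dict[str, Callable[[str], Dict]],
                       lead_time_days: int = 30) -> List[Dict]:
    """Query every platform concurrently, then rank by urgency (fewest days of cover first)."""
    with ThreadPoolExecutor(max_workers=max(1, len(fetchers))) as pool:
        futures = {name: pool.submit(fn, sku) for name, fn in fetchers.items()}

    rows = []
    for platform, future in futures.items():
        try:
            data = future.result()
        except Exception as exc:  # one failing platform should not sink the whole answer
            rows.append({"platform": platform, "error": str(exc),
                         "days_of_cover": float("inf"), "restock_now": None})
            continue
        cover = days_of_cover(data["units_on_hand"], data["units_sold_7d"] / 7)
        rows.append({"platform": platform, "days_of_cover": round(cover, 1),
                     "restock_now": cover < lead_time_days})
    return sorted(rows, key=lambda r: r["days_of_cover"])
```

Threads suit this job because each fetcher spends its time waiting on network I/O; exiting the `with` block waits for every call to finish before the rows are ranked.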

Pangolinfo’s Scrape API supports cross-platform data collection spanning Amazon, Walmart, Shopify, Shopee, and eBay with consistent JSON output schema across platforms—giving Open Claw agents a uniform interface regardless of which marketplace data is being requested. For operations teams managing 5+ marketplaces, this consistency dramatically simplifies the tool registration overhead and makes multi-platform query logic straightforward to implement and maintain.

Getting Started: Open Claw Cross-Border E-Commerce Integration in Three Steps

The technical barrier to deploying the use cases described above is lower than most teams expect. Here’s a realistic picture of what’s involved at each stage.

Step 1: Register your data tools. Wrap Pangolinfo API endpoints as Open Claw MCP Tools. Each tool needs: a name, a clear natural-language description (critical—this is how the LLM decides when to use the tool), an input parameter schema, and the actual HTTP request implementation. For the competitor monitoring use case, you need 2-3 tools. For review analysis, 1-2. Total setup time for a developer familiar with Python and REST APIs: 4-8 hours.
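
A sketch of what pairing a tool definition with its implementation, and guarding its inputs, might look like. `RegisteredTool`, `register`, and `call_tool` are hypothetical helpers, not Open Claw’s registration API; the schema dict follows the same shape as the `COMPETITOR_MONITOR_TOOL` definition earlier in this guide. Checking model-supplied arguments against `required` and `enum` before any HTTP request catches many malformed tool calls cheaply.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class RegisteredTool:
    name: str
    description: str              # what the LLM reads to decide when to call it
    input_schema: Dict[str, Any]  # JSON-Schema-style parameter definition
    handler: Callable[..., Dict]  # the actual HTTP request implementation


REGISTRY: Dict[str, RegisteredTool] = {}


def register(tool: RegisteredTool) -> None:
    REGISTRY[tool.name] = tool


def call_tool(name: str, arguments: Dict[str, Any]) -> Dict:
    """Validate model-supplied arguments against the schema, then run the handler."""
    tool = REGISTRY.get(name)
    if tool is None:
        return {"success": False, "error": f"unknown tool: {name}"}
    props = tool.input_schema.get("properties", {})
    missing = [k for k in tool.input_schema.get("required", []) if k not in arguments]
    if missing:
        return {"success": False, "error": f"missing required arguments: {missing}"}
    args: Dict[str, Any] = {}
    for key, spec in props.items():
        if key in arguments:
            value = arguments[key]
            if "enum" in spec and value not in spec["enum"]:
                return {"success": False, "error": f"{key} must be one of {spec['enum']}"}
            args[key] = value
        elif "default" in spec:
            args[key] = spec["default"]  # fill schema defaults the model omitted
    return tool.handler(**args)
```

Unknown argument keys are dropped rather than forwarded, so a parameter the model invents never reaches the HTTP layer.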

Step 2: Configure agent workflows. Open Claw supports both visual workflow editors and code-based agent definition. For the use cases above, we recommend starting with the visual editor for simple single-step agents (real-time ASIN lookup, basic SERP query), then graduating to code for multi-step orchestration (product research pipeline, batch review analysis). The key design decision: err on the side of narrow agent scope—agents with 2-5 tightly related tools significantly outperform agents with broad tool access in terms of accuracy and response coherence.

Step 3: Connect to your team’s workflow. This is where adoption either happens or stalls. Push agent outputs via webhook to wherever your team actually makes decisions—Slack/DingTalk alerts for competitor price drops, Notion database entries for product research reports, email summaries for weekly review intelligence digests. The technical implementation is typically 1-2 days; the design work of mapping agent outputs to team decision touchpoints is the more critical investment.
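
For Slack, an incoming webhook accepts an HTTP POST with a JSON body containing a `text` field, so pushing the alerts produced by `batch_monitor_watchlist` can be as small as this standard-library sketch. The message layout is an illustrative choice, the webhook URL is yours to supply, and DingTalk or Feishu bots expect different payload shapes, so check their documentation before reusing `format_alert_message` there.

```python
import json
import urllib.request
from typing import Dict, List


def format_alert_message(alerts: List[Dict]) -> Dict:
    """Build a Slack incoming-webhook payload from monitor alerts."""
    if not alerts:
        return {"text": "No competitor changes above threshold."}
    lines = [f"*{len(alerts)} competitor alert(s)*"]
    for a in alerts:
        lines.append(f"• [{a['severity'].upper()}] {a['asin']}: {a['detail']}")
    return {"text": "\n".join(lines)}


def post_to_webhook(url: str, payload: Dict, timeout: float = 10.0) -> int:
    """POST the payload as JSON and return the HTTP status code."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

Keeping formatting separate from delivery makes it easy to unit-test messages and to add a second formatter per chat platform without touching the sender.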

For teams without dedicated technical headcount, there are no-code pathways using n8n or Make.com to connect Pangolinfo API with cloud LLM services—less flexible but sufficient for single-use-case deployments, and deployable by a technically capable operations manager without full engineering support.

Production Considerations: Error Handling and Data Quality

Three failure modes to design around before going to production:

First, LLM tool selection errors. When multiple tools have overlapping conceptual scope, the LLM will sometimes call the wrong one. The remedy is precise, differentiated tool descriptions with explicit guidance on “when to use” and “when NOT to use.” Spending more tokens on tool descriptions is almost always worth the accuracy it buys.

Second, context window overflow with large data payloads. Review analysis workflows that pass 300 reviews in full JSON format to the model can exceed the context limits of many current models. Always pre-process API responses to extract only the fields and character lengths the LLM actually needs. A preprocessing layer between the API response and the LLM input is not optional for production deployments.
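
A preprocessing layer can be as simple as this sketch: keep only rating, title, helpful votes, and a truncated body, most helpful first, and stop before a character budget is exceeded. Characters are only a rough proxy for tokens, and the 250-character excerpt and 20,000-character budget are illustrative assumptions; the raw review keys (`rating`, `title`, `body`, `helpful_votes`) mirror those used in the review fetcher earlier in this guide.

```python
from typing import Dict, List


def compact_reviews(raw_reviews: List[Dict], body_chars: int = 250,
                    char_budget: int = 20000) -> str:
    """
    Keep only the fields the LLM needs, truncate bodies, and stop once the
    prompt would exceed a character budget (a rough proxy for tokens).
    """
    ranked = sorted(raw_reviews, key=lambda r: r.get("helpful_votes", 0), reverse=True)
    lines: List[str] = []
    used = 0
    for r in ranked:
        body = (r.get("body") or "").replace("\n", " ")[:body_chars]
        line = (f"[{r.get('rating', '?')}★ | Helpful: {r.get('helpful_votes', 0)}] "
                f"{r.get('title', '')}: {body}")
        if used + len(line) + 1 > char_budget:  # +1 for the joining newline
            break
        lines.append(line)
        used += len(line) + 1
    return "\n".join(lines)
```

Because reviews are ranked before truncation, the budget cuts the least helpful reviews first rather than an arbitrary tail.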

Third, API timeout and rate limit handling. Network issues and rate limiting are inevitable at scale. Build retry logic (exponential backoff, 3 attempts max), degrade gracefully by showing users a clear status message when data is unavailable instead of surfacing raw agent errors, and monitor API usage against your plan limits as traffic grows.
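
The retry policy (exponential backoff, at most 3 attempts) and the graceful-degradation message can be sketched in a few lines. `RetryableError` is a hypothetical marker exception: in practice you would raise it from your API wrapper for timeouts, HTTP 429, and 5xx responses, and let other errors fail fast. The delay is `base_delay * 2**attempt` plus a little random jitter, so retries from many agents do not all land at the same moment.

```python
import random
import time
from typing import Callable, Dict, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Raise for failures worth retrying, e.g. timeouts, HTTP 429, or 5xx."""


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn; on RetryableError wait base_delay * 2**attempt (+ jitter) and retry."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return fn()
        except RetryableError:
            if attempt == attempts - 1:
                raise  # out of attempts: let the caller degrade gracefully
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay / 2))
    raise AssertionError("unreachable")


def fetch_or_status(fn: Callable[[], T], label: str, **retry_kwargs) -> Dict:
    """Return the data, or a user-facing status message instead of a raw agent error."""
    try:
        return {"ok": True, "data": with_retries(fn, **retry_kwargs)}
    except RetryableError:
        return {"ok": False,
                "message": f"{label} data is temporarily unavailable; please retry shortly."}
```

Injecting `sleep` keeps the policy testable without real waits; in production the default `time.sleep` applies.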

The Competitive Window for Open Claw Cross-Border E-Commerce Is Open Now

The five Open Claw cross-border e-commerce use cases described in this guide share a common characteristic: they’re all operational advantages that compound over time. Every week your team runs automated competitor monitoring is a week of market intelligence your competitors are collecting manually—slower, less consistently, and at higher labor cost. The delta between AI-automated intelligence workflows and manual data processes widens with each iteration cycle.

The infrastructure to build this capability is available and accessible today. Open Claw provides the agent framework. Pangolinfo provides the real-time data backbone—structured, production-grade Amazon data including product details, bestseller rankings, review datasets, SERP and SP ad position data, all via a consistent API interface specifically designed to power the kind of automated workflows described here. The combination requires real technical effort to deploy well, but the operational leverage it creates—and the competitive disadvantage of not building it as peers do—makes the investment straightforward to justify.

Open Claw cross-border e-commerce automation isn’t a future state to plan toward. The adoption curve is moving fast enough that the question is no longer whether to build this capability, but how quickly your team can ship it.

Ready to build your Open Claw cross-border e-commerce data automation workflow? Start with Pangolinfo Scrape API — real-time Amazon data in structured JSON, designed for agent integration. Full API documentation available, with sandbox access for testing your integration before going live.

For enterprise custom data solutions or technical integration consulting, contact the Pangolinfo solutions team via our official website.