Your AI Agent Has a Data Problem—And You Probably Already Know It

One scenario keeps showing up in AI startup post-mortems: a team spent eight months fine-tuning a large language model for e-commerce intelligence, shipped it, and then discovered that users didn’t find it useful. The model could explain the concept of “competitive moat” on Amazon, but couldn’t tell a seller which new product entered the top 100 BSR in the Hand Vacuum category last Tuesday. It understood that review velocity matters, but had no idea what review velocity looks like for any real product that’s actually selling today.

This isn’t a model architecture problem. It isn’t even a prompting problem. It’s an AI training data collection problem—specifically, the kind that stems from building on stale, sparse, or structurally incomplete e-commerce datasets. The training set used? A two-year-old Kaggle competition dataset supplemented with some static HTML scraped without a consistent schema. Data lag exceeded 18 months; field completeness hovered around 63%. The AI Agent was, in plain terms, hallucinating e-commerce decisions.

This scenario is far from isolated. As large language model deployment accelerates across the e-commerce vertical, the failure mode is consistent: teams invest disproportionately in model selection and prompt engineering while treating data engineering as an afterthought. The result is an AI Agent that’s architecturally sophisticated but operationally unreliable—because the machine learning data sources feeding it are fundamentally broken.

This article breaks down what high-quality AI training data collection actually looks like for e-commerce AI systems, with a particular focus on two data types that matter most in 2025 and beyond: Amazon product data and Google AI Overview data.

What AI Agents Actually Need from E-commerce Data

Most discussions of LLM training data stay at a comfortable level of abstraction. When the end goal is an AI Agent capable of product selection analysis, competitive pricing intelligence, review sentiment tracking, or demand forecasting, the data requirements become both specific and demanding.

For an Amazon-focused AI Agent, the minimum viable training corpus spans several distinct data types. Structural product data comes first: ASIN, category path, title, bullet points, A+ content, dimension and weight specifications. This layer gives the model its ontological foundation—a working understanding of what a product is, how it’s positioned, and how it’s described. Dynamic sales signals form the second tier: BSR rank time series, price point history, estimated monthly sales velocity. Without temporal data, a model can describe Amazon’s marketplace in static terms but cannot reason about trends, seasonality, or the lifecycle of a product opportunity. Competitive signals—review count distribution across a category, rating curves, review acceleration patterns for newly launched products—complete the picture by allowing the model to assess competitive intensity rather than simply describing it.
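One way to picture the three layers described above is as a record type that keeps structural fields separate from timestamped snapshots. The following is a minimal sketch, not a prescribed schema: the class names `ProductRecord` and `RankSnapshot`, the `review_velocity` helper, and the sample values are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class RankSnapshot:
    """One timestamped observation of the dynamic and competitive signals."""
    observed_at: datetime
    bsr_rank: int
    price: float
    review_count: int
    rating: float


@dataclass
class ProductRecord:
    """Structural product data plus its time series of snapshots (hypothetical shape)."""
    asin: str
    title: str
    category_path: list[str]
    bullet_points: list[str] = field(default_factory=list)
    snapshots: list[RankSnapshot] = field(default_factory=list)

    def review_velocity(self) -> Optional[float]:
        """Average new reviews per day between the earliest and latest snapshot."""
        if len(self.snapshots) < 2:
            return None
        ordered = sorted(self.snapshots, key=lambda s: s.observed_at)
        days = (ordered[-1].observed_at - ordered[0].observed_at).total_seconds() / 86400
        if days <= 0:
            return None
        return (ordered[-1].review_count - ordered[0].review_count) / days


# Illustrative values only; the ASIN and numbers are made up.
record = ProductRecord(
    asin="B000000000",
    title="Example hand vacuum",
    category_path=["Home & Kitchen", "Vacuums", "Hand Vacuums"],
)
record.snapshots.append(RankSnapshot(datetime(2025, 1, 1, tzinfo=timezone.utc), 180, 49.99, 40, 4.3))
record.snapshots.append(RankSnapshot(datetime(2025, 1, 11, tzinfo=timezone.utc), 95, 44.99, 90, 4.4))
print(record.review_velocity())  # 50 new reviews over 10 days -> 5.0
```

Keeping snapshots as a list rather than overwriting fields is what lets a model see trends: with a single static record, review velocity cannot be computed at all.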

Google AI Overview data represents a second, equally important machine learning data source that most e-commerce AI teams haven’t fully exploited yet. Since Google rolled out AI Overview (AIO) across core search results, purchasing decisions increasingly begin and sometimes end at the SERP level. For an AI Agent designed to understand user search intent or model the relationship between search behavior and purchase conversion, AIO data provides something that no traditional e-commerce dataset can: a direct window into how AI-mediated search is reshaping product discovery. Which brands does Google’s AI consistently feature for specific category queries? What product attributes appear in AI-generated summaries? How does AIO coverage correlate with actual BSR rank? These patterns constitute high-value e-commerce AI training samples that are genuinely novel.
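The question of how AIO coverage relates to BSR rank can be explored with a very simple first pass. The sketch below is illustrative only: the function name `median_bsr_by_aio_coverage`, the record shape, and the sample brands and ranks are assumptions, and a gap between the two medians is a descriptive signal, not evidence of causation.

```python
from statistics import median


def median_bsr_by_aio_coverage(products, featured_brands):
    """Compare median BSR for brands that appear in AIO panels versus those that do not."""
    featured = {b.lower() for b in featured_brands}
    groups = {"featured": [], "not_featured": []}
    for p in products:
        key = "featured" if p["brand"].lower() in featured else "not_featured"
        groups[key].append(p["bsr_rank"])
    # An empty group yields None rather than raising StatisticsError.
    return {k: (median(v) if v else None) for k, v in groups.items()}


# Made-up sample data for illustration.
sample_products = [
    {"brand": "BrandA", "bsr_rank": 12},
    {"brand": "BrandA", "bsr_rank": 40},
    {"brand": "BrandB", "bsr_rank": 150},
    {"brand": "BrandC", "bsr_rank": 300},
    {"brand": "BrandC", "bsr_rank": 90},
]
result = median_bsr_by_aio_coverage(sample_products, ["branda"])
print(result)
```

A lower median BSR in the featured group would be consistent with AIO favoring already strong sellers, with AIO exposure helping sales, or with both; separating those explanations needs time-series data.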

The hard truth about most available open-source e-commerce datasets is that they fail on both counts at once: their timestamps are stale and they lack the AIO dimension entirely, which makes them inadequate as machine learning data sources for production AI Agents in 2025.

The Four Quality Dimensions That Actually Matter

Freshness tops the list for any AI Agent operating in a dynamic marketplace: pricing data older than 30 days is unreliable for most decision contexts; Google AIO content changes daily, making week-old snapshots potentially misleading for intent modeling. Structural completeness is second: a schema with a 30% field null rate introduces noise that actively degrades model quality—worse, in many cases, than simply having fewer records. Scale is third: statistically meaningful patterns in a niche category require hundreds of SKUs minimum; cross-category generalization in a fine-tuned model requires thousands. Annotation quality closes the loop: raw HTML scraped from Amazon is not a usable LLM training dataset; it requires parsing, field normalization, and semantic labeling before it qualifies as a machine learning data source.
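Two of these dimensions, freshness and structural completeness, are easy to measure before any training run. The following is a rough sketch under stated assumptions: the helper names `null_rate` and `stale_fraction` are hypothetical, records are plain dictionaries, and each record carries an ISO 8601 `collection_timestamp` string (naive timestamps are treated as UTC).

```python
from datetime import datetime, timedelta, timezone


def null_rate(records, fields):
    """Fraction of (record, field) cells that are missing or empty."""
    total = len(records) * len(fields)
    if total == 0:
        return 0.0
    missing = sum(1 for r in records for f in fields if r.get(f) in (None, "", []))
    return missing / total


def stale_fraction(records, max_age_days, now):
    """Fraction of records collected more than max_age_days before `now`."""
    if not records:
        return 0.0
    cutoff = now - timedelta(days=max_age_days)
    stale = 0
    for r in records:
        ts = datetime.fromisoformat(r["collection_timestamp"])
        if ts.tzinfo is None:  # assumption: naive timestamps are UTC
            ts = ts.replace(tzinfo=timezone.utc)
        if ts < cutoff:
            stale += 1
    return stale / len(records)


# Made-up sample records for illustration.
sample = [
    {"title": "Vac A", "price": 49.99, "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-25T00:00:00+00:00"},
    {"title": "", "price": 19.99, "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-05-01T00:00:00+00:00"},
    {"title": "Vac C", "price": None, "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-30T00:00:00"},
]
now = datetime(2025, 7, 1, tzinfo=timezone.utc)
completeness_gap = null_rate(sample, ["title", "price", "category_path"])
staleness = stale_fraction(sample, max_age_days=30, now=now)
print(f"null rate: {completeness_gap:.1%}, stale (>30 days): {staleness:.1%}")
```

Tracking these two numbers per collection run makes it obvious when a dataset has drifted past the thresholds discussed above.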

Three Paths to E-commerce Training Data: What Each One Actually Costs

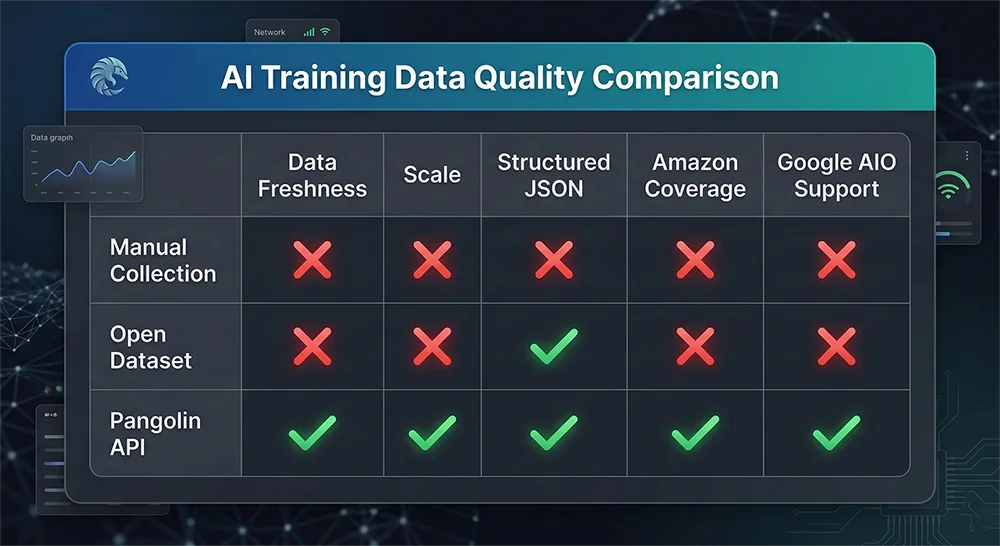

AI teams building e-commerce AI training datasets typically evaluate three approaches: in-house web scraping infrastructure, open dataset reliance, and third-party data APIs. The practical differences between these paths are larger than they appear from the outside.

Building in-house scraping infrastructure has genuine appeal—complete control over data schema, collection timing, and depth of coverage. The operational reality is considerably harsher. Amazon’s anti-scraping infrastructure is among the most sophisticated on the internet: dynamic IP blocking, aggressive CAPTCHA deployment, JavaScript-rendered content that static scrapers cannot parse, and session management requirements that demand substantial engineering investment to handle reliably. A realistic estimate for maintaining a production-grade Amazon scraper across major product categories runs to $6,000–$10,000 per month in engineering time and infrastructure costs, with continued fragility every time Amazon updates its page templates—which happens frequently and without notice. Google AI Overview presents a harder challenge still: AIO content is dynamically generated for each individual query and requires full headless browser rendering with sophisticated content extraction logic that no static scraper can handle.

Open datasets have the fatal combination of poor freshness and poor coverage. Platforms like Hugging Face and Kaggle host e-commerce collections that are typically one to three years old, with field completeness rates that rarely exceed 70%, and no AIO data whatsoever. Using them introduces systematic bias into any time-series or trend-based AI application.

Professional data APIs separate the engineering guarantee of data quality from the application layer. The Pangolin Scrape API handles IP rotation, session management, JS rendering, and anti-detection mechanisms as infrastructure, delivering structured JSON output that plugs directly into data processing pipelines. For teams building AI training data collection workflows, this means the engineering effort starts at cleaning and labeling—not at keeping a fragile scraper alive against an adversarial detection system.

Pangolin API: Designed for Production AI Training Data Pipelines

What makes Pangolin’s data collection infrastructure particularly well-suited for AI training data collection isn’t a single capability—it’s the combination of several production-oriented design decisions that matter specifically in the ML data engineering context.

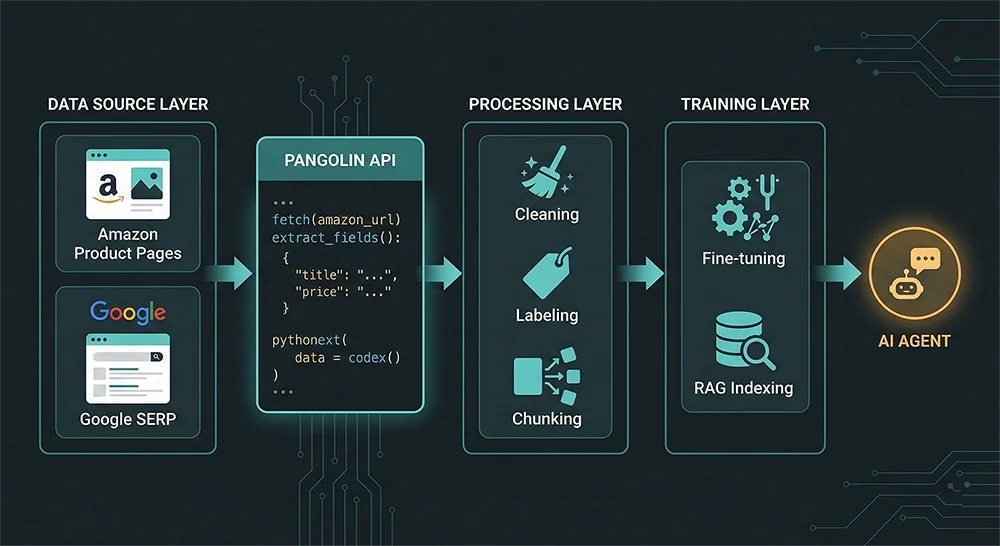

On the Amazon data side, the Scrape API covers every data type required for a comprehensive AI Agent training corpus: full product detail pages (including A+ content, variation matrices, and shipping dimension tables), new product release rankings with historical comparison support, keyword search results pages with ad slot distribution data (with what Pangolin describes as an industry-leading Sponsored Product slot capture rate), and complete review data including the Customer Says field that Amazon’s own interface now surfaces prominently. Output arrives in structured JSON with a stable, versioned schema, so your data pipeline doesn’t break when Amazon makes UI changes, because Pangolin maintains the extraction layer. The zip-code-level targeting capability deserves special mention for AI training applications: pricing, Prime eligibility, and delivery estimates vary materially by fulfillment region, and models built on geographically indiscriminate data will systematically misrepresent regional competitive dynamics.

On the Google AIO side, Pangolin’s AI Overview SERP API provides full-field extraction of AI Overview content—including the generated summary text, cited source URLs, related questions, and featured product cards that appear within AIO panels. For teams building RAG knowledge bases, training intent classification models, or studying the relationship between AIO coverage and e-commerce performance, this represents the only scalable path to structured AIO data. Manual collection at any meaningful scale is operationally impossible; purpose-built APIs are the only realistic option.

For review-centric use cases—training sentiment analysis models, building product quality classifiers, or creating defect detection systems—the Reviews Scraper API provides complete extraction of Amazon review content including the Customer Says aggregate field, which is among the highest-quality naturally-occurring labeled sentiment data available for e-commerce applications.

From Raw Data to Clean Training Set: The Essential Processing Pipeline

Raw API output requires several processing stages before it qualifies as a usable AI training dataset. Deduplication and temporal merging come first: the same ASIN collected at multiple timestamps needs intelligent merging logic that preserves historical state rather than collapsing into a single record. Field type normalization handles the predictable inconsistencies: price fields containing currency symbols or ranges, rating fields with non-numeric noise, BSR values that spike anomalously due to collection errors rather than actual rank changes. Schema validation enforces minimum completeness thresholds: records missing title, price, or category path should be quarantined rather than included in a training set where they’ll introduce noise. Semantic annotation elevates raw structured data into training-ready examples: labeling reviews by complaint category, tagging bullet points by attribute type (functional claim vs. use case vs. comparison statement), or marking search result positions with their corresponding AIO coverage status.
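The first three stages can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a production pipeline: the helper names (`parse_price`, `split_valid`, `merge_history`) are hypothetical, price ranges are reduced to their low end as an arbitrary assumption, commas are assumed to be thousands separators, and timestamps are assumed to share one ISO 8601 format so that string sorting matches time order. BSR anomaly detection and semantic annotation are out of scope here.

```python
import re
from collections import defaultdict

REQUIRED_FIELDS = ("title", "price", "category_path")
_NUMBER = re.compile(r"\d+(?:\.\d+)?")


def parse_price(raw):
    """Return the lowest numeric price found in a raw value, or None if unparseable."""
    if isinstance(raw, (int, float)):
        return float(raw)
    if not isinstance(raw, str):
        return None
    numbers = _NUMBER.findall(raw.replace(",", ""))
    return min(float(n) for n in numbers) if numbers else None


def split_valid(records):
    """Normalize price, then separate records into (clean, quarantined)."""
    clean, quarantined = [], []
    for r in records:
        r = {**r, "price": parse_price(r.get("price"))}
        missing = [f for f in REQUIRED_FIELDS if r.get(f) in (None, "", [])]
        (quarantined if missing else clean).append(r)
    return clean, quarantined


def merge_history(records):
    """Group snapshots by ASIN in time order, dropping exact (asin, timestamp) repeats."""
    history = defaultdict(dict)
    for r in records:
        history[r["asin"]][r["collection_timestamp"]] = r  # last write wins on repeats
    return {asin: [snaps[t] for t in sorted(snaps)] for asin, snaps in history.items()}


# Made-up sample records for illustration.
raw = [
    {"asin": "A1", "title": "Vac", "price": "$49.99", "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-02T00:00:00"},
    {"asin": "A1", "title": "Vac", "price": "$44.99 - $59.99", "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-01T00:00:00"},
    {"asin": "A1", "title": "Vac", "price": "$49.99", "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-02T00:00:00"},
    {"asin": "A2", "title": "", "price": "N/A", "category_path": "Home > Vacuums",
     "collection_timestamp": "2025-06-01T00:00:00"},
]
clean, quarantined = split_valid(raw)
history = merge_history(clean)
print(len(quarantined), [s["price"] for s in history["A1"]])
```

Quarantined records are kept in their own list rather than dropped, so they can be inspected or re-collected later instead of silently shrinking the dataset.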

End-to-End Walkthrough: Building an AI Agent Training Dataset with Pangolin

Here’s a practical code walkthrough showing how to construct a fused Amazon + Google AIO training dataset using Pangolin’s APIs—the same pattern used by e-commerce AI teams building production-grade training pipelines:

import requests
import pandas as pd
from datetime import datetime, timezone
from typing import Optional

PANGOLIN_API_KEY = "your_api_key_here"

BASE_HEADERS = {
    "X-API-Key": PANGOLIN_API_KEY,
    "Content-Type": "application/json"
}

# ------------------------------------------------
# Module 1: Amazon Product Data Collection
# Fetch new releases + full product details for training corpus
# ------------------------------------------------

def collect_amazon_category_data(
    category_id: str,
    marketplace: str = "US",
    zip_code: Optional[str] = None
) -> list[dict]:
    """
    Collect structured product data from Amazon new releases
    for a specific category. Optionally target a specific zip code
    to capture region-specific pricing and fulfillment data.
    """
    payload = {
        "category_id": category_id,
        "marketplace": marketplace,
        "fields": [
            "asin", "title", "brand", "price", "currency",
            "rating", "review_count", "bsr_rank", "bsr_category",
            "bullet_points", "description", "category_path",
            "date_first_available", "dimensions", "weight",
            "sponsored_ad_presence", "prime_eligible"
        ],
        "page_size": 100
    }
    if zip_code:
        payload["zip_code"] = zip_code  # Region-specific data collection

    response = requests.post(
        "https://api.pangolinfo.com/v1/amazon/new-releases",
        headers=BASE_HEADERS,
        json=payload,
        timeout=30
    )
    response.raise_for_status()
    products = response.json().get("products", [])

    # Attach collection metadata (one timezone-aware UTC timestamp per batch)
    collected_at = datetime.now(timezone.utc).isoformat()
    for p in products:
        p["collection_timestamp"] = collected_at
        p["source_marketplace"] = marketplace
        p["source_zip"] = zip_code or "default"
    return products

# ------------------------------------------------
# Module 2: Google AI Overview Data Collection
# Capture AIO-generated content for intent modeling
# ------------------------------------------------

def collect_google_aio_corpus(
    keywords: list[str],
    locale: str = "en-US",
    device: str = "desktop"
) -> list[dict]:
    """
    Collect Google AI Overview content for a list of keywords.
    Returns structured AIO data for RAG indexing or intent classification training.
    """
    aio_corpus = []
    for keyword in keywords:
        payload = {
            "keyword": keyword,
            "locale": locale,
            "device": device,
            "include_fields": [
                "ai_summary", "ai_summary_sources",
                "related_questions", "featured_brands",
                "featured_products", "has_ai_overview",
                "organic_results_count"
            ]
        }
        try:
            resp = requests.post(
                "https://api.pangolinfo.com/v1/serp/ai-overview",
                headers=BASE_HEADERS,
                json=payload,
                timeout=30
            )
            resp.raise_for_status()
            data = resp.json()
            # Keep only keywords for which Google actually rendered an AI Overview
            if data.get("has_ai_overview"):
                aio_corpus.append({
                    "keyword": keyword,
                    "locale": locale,
                    "has_ai_overview": True,
                    "ai_summary": data.get("ai_summary", ""),
                    "cited_sources": data.get("ai_summary_sources", []),
                    "related_questions": data.get("related_questions", []),
                    "featured_brands": data.get("featured_brands", []),
                    "featured_products": data.get("featured_products", []),
                    "collection_timestamp": datetime.now(timezone.utc).isoformat()
                })
        except requests.RequestException as e:
            # A failed keyword is logged and skipped so one error does not abort the run
            print(f"[Warning] Failed to collect AIO for '{keyword}': {e}")
    return aio_corpus

# ------------------------------------------------
# Module 3: Dataset Construction & Quality Filtering
# ------------------------------------------------

def build_training_dataset(
    amazon_products: list[dict],
    aio_corpus: list[dict],
    min_reviews: int = 5,
    min_rating: float = 3.5
) -> pd.DataFrame:
    """
    Merge Amazon product data with Google AIO signals to produce
    a multi-dimensional training dataset for e-commerce AI Agents.
    Quality filters applied: minimum review threshold, rating floor.
    """
    df = pd.DataFrame(amazon_products)

    # --- Structural quality filtering ---
    required_fields = ["asin", "title", "price", "category_path"]
    df = df.dropna(subset=required_fields)
    # Coerce numeric fields; unparseable values become NaN and fail the filters below
    for col in ("price", "review_count", "rating"):
        df[col] = pd.to_numeric(df[col], errors="coerce")
    df = df[df["price"] > 0]
    df = df[df["review_count"] >= min_reviews]
    # Copy so the column assignments below do not write into a filtered view
    df = df[df["rating"] >= min_rating].copy()

    # --- Build AIO brand signal index ---
    aio_featured_brands = set()
    aio_keyword_associations = {}
    for item in aio_corpus:
        for brand in item.get("featured_brands", []):
            aio_featured_brands.add(brand.lower())
        # Map keywords to AIO summary content for RAG training pairs
        aio_keyword_associations[item["keyword"]] = item.get("ai_summary", "")

    # --- Annotate products with AIO signals ---
    df["aio_brand_featured"] = df["brand"].str.lower().isin(aio_featured_brands)
    df["competitive_tier"] = df["review_count"].apply(
        lambda x: "established" if x > 1000 else ("growing" if x > 100 else "new_entrant")
    )

    # --- Add RAG training pairs from AIO corpus ---
    # Note: rag_pairs is only counted in the summary; it is not returned or saved here
    rag_pairs = [
        {"query": kw, "context": summary, "source": "google_aio"}
        for kw, summary in aio_keyword_associations.items()
        if summary
    ]

    print(f"\n✅ Training dataset summary:")
    print(f" Amazon products (quality-filtered): {len(df):,}")
    print(f" AIO-featured brands indexed: {len(aio_featured_brands)}")
    print(f" RAG training pairs generated: {len(rag_pairs)}")
    print(f" Products with AIO brand signal: {df['aio_brand_featured'].sum()} ({df['aio_brand_featured'].mean():.1%})")
    return df

# ------------------------------------------------
# Main execution
# ------------------------------------------------

if __name__ == "__main__":
    CATEGORY_ID = "3944"  # Hand Vacuums subcategory
    MARKETPLACE = "US"
    TARGET_ZIP = "10001"  # NYC zip for region-specific pricing
    CATEGORY_KEYWORDS = [
        "best handheld vacuum cleaner 2025",
        "cordless stick vacuum for pet hair",
        "portable car vacuum cleaner",
        "handheld vacuum vs stick vacuum"
    ]

    print("📡 Step 1: Collecting Amazon product data...")
    amazon_data = collect_amazon_category_data(CATEGORY_ID, MARKETPLACE, TARGET_ZIP)
    print(f" Collected {len(amazon_data)} raw product records")

    print("🔍 Step 2: Collecting Google AI Overview corpus...")
    aio_data = collect_google_aio_corpus(CATEGORY_KEYWORDS, locale="en-US")
    print(f" AIO data points with AI Overview: {len(aio_data)}/{len(CATEGORY_KEYWORDS)} keywords")

    print("🛠️ Step 3: Building training dataset...")
    training_df = build_training_dataset(amazon_data, aio_data)

    # Date-stamp the output file in UTC to match the collection timestamps
    output_path = f"training_dataset_{CATEGORY_ID}_{datetime.now(timezone.utc).strftime('%Y%m%d')}.jsonl"
    training_df.to_json(output_path, orient="records", lines=True, force_ascii=False)
    print(f"\n💾 Dataset saved to: {output_path}")

The key design decision in this pipeline is the fusion of Amazon structural data with Google AIO brand signals. The resulting training dataset captures not just what products exist, but which brands are receiving AI-mediated endorsement in search results—a feature dimension unavailable from any static open dataset and increasingly predictive of actual sales performance as AIO adoption grows.
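One gap worth noting: the walkthrough's `build_training_dataset` assembles query/context pairs from the AIO corpus but only counts them, returning the product DataFrame alone. If those pairs are wanted for RAG indexing, they can be built and written out separately. The sketch below is one possible approach; the helper names `build_rag_pairs` and `write_jsonl`, the output filename, and the sample corpus are illustrative assumptions.

```python
import json
import tempfile
from pathlib import Path


def build_rag_pairs(aio_corpus):
    """Turn AIO corpus items into query/context pairs, skipping empty summaries."""
    return [
        {"query": item["keyword"], "context": item["ai_summary"], "source": "google_aio"}
        for item in aio_corpus
        if item.get("ai_summary")
    ]


def write_jsonl(rows, path):
    """Write one JSON object per line and return the number of rows written."""
    with Path(path).open("w", encoding="utf-8") as fh:
        for row in rows:
            fh.write(json.dumps(row, ensure_ascii=False) + "\n")
    return len(rows)


# Made-up sample corpus for illustration.
sample_corpus = [
    {"keyword": "portable car vacuum cleaner", "ai_summary": "Illustrative summary text."},
    {"keyword": "handheld vacuum vs stick vacuum", "ai_summary": ""},
]
pairs = build_rag_pairs(sample_corpus)
out_path = Path(tempfile.gettempdir()) / "rag_pairs_example.jsonl"
written = write_jsonl(pairs, out_path)
print(f"wrote {written} pair(s) to {out_path}")
```

Keeping the pairs in their own JSONL file, separate from the product table, lets the retrieval index and the tabular training set be refreshed on different schedules.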

AI Training Data Collection Is a Strategic Bet, Not an Engineering Task

The ceiling on any AI Agent’s capability is set by the quality ceiling of its training data. In e-commerce AI, that ceiling is defined largely by two inputs: Amazon product data, which provides the structured factual substrate of the marketplace, and Google AI Overview data, which maps the emerging landscape of AI-mediated user intent. Both are difficult to collect at scale and quality without purpose-built infrastructure. Both are increasingly essential for AI Agents that need to perform reliably in production settings.

Pangolin’s Scrape API and AI Overview SERP API address both collection challenges with production-grade reliability. For AI teams evaluating machine learning data sources for their e-commerce AI training pipelines—whether at the early exploration stage or scaling toward millions of daily records—these APIs represent the fastest path from “we need data” to “we have training data we can trust.”

Your AI Agent’s intelligence starts with the quality of its training data. Get the AI training data collection layer right, and the rest of the stack has a fighting chance.

🚀 Start collecting production-grade AI training data: Pangolin Scrape API | AI Overview SERP API

📖 Full API documentation: docs.pangolinfo.com | Free trial console: tool.pangolinfo.com