The 4 AM Incident That Changed an Engineering Team’s Direction

It was a Wednesday night in November 2024. The CTO of a top-tier Amazon seller tool platform (we’ll call him Michael) was staring at an alert dashboard filled wall to wall with red as error logs cascaded down the screen. Their flagship product, a real-time competitor pricing and ad monitoring SaaS, had been offline for 17 consecutive hours. The customer support channels were in chaos. Three enterprise clients had threatened chargebacks. The engineering team, split across four cities on a video call, had no clear answer for what had gone wrong.

This wasn’t a one-off incident. At the time, the company was running around 200 self-managed scraper nodes. Nearly 40% of their engineering cycles were consumed playing cat-and-mouse with Amazon’s anti-scraping systems — rotating IPs, cycling User-Agents, throttling request rates, solving CAPTCHAs. Every time Amazon updated its anti-crawling policies (a major overhaul happened roughly once per quarter), the data team entered a state of emergency. Recoveries took anywhere from three days to two weeks, during which core product data suffered delays or outright gaps.

By late 2024, this platform had grown to serve 32,000+ registered users with a paid conversion rate of about 18% and monthly ARR exceeding RMB 8 million. Their data pipeline was the product’s core competitive moat — and that moat was quietly leaking, not from competitive pressure but from infrastructure that couldn’t keep pace with the business it was supposed to support.

The story of this company’s data infrastructure struggle is far from unique in the industry. What makes it worth telling is that their scale, their pain points, and their eventual transformation are specific enough to be genuinely instructive — which is exactly what this customer success story sets out to document.

Three Layers of Technical Debt: Why Self-Built Scraping Had Hit a Wall

To understand this customer success story properly, it’s worth being precise about what was going wrong. This company wasn’t short on engineering talent — they had a 30-person team, including 7 engineers dedicated to crawler maintenance and 2 managing IP resource procurement and rotation. The problem wasn’t execution. It was a systemic efficiency ceiling that no amount of headcount could solve.

Layer one: the sinkhole economics of anti-bot warfare. Amazon’s anti-scraping infrastructure underwent a fundamental shift in 2023-2024. Before 2023, standard IP rotation with reasonable request intervals was largely sufficient. By mid-2024, Amazon had deployed behavioral fingerprinting and session continuity verification at scale, making IP rotation alone ineffective. The engineering team’s internal calculation: maintaining their existing collection volume (~870K records/month) required roughly $12,000/month in IP infrastructure plus equivalent engineering hours, putting fully-loaded cost at about RMB 0.25 per data record. That was not catastrophic at current scale, but multiplied by the 5-10x growth the business demanded, the math became untenable fast.

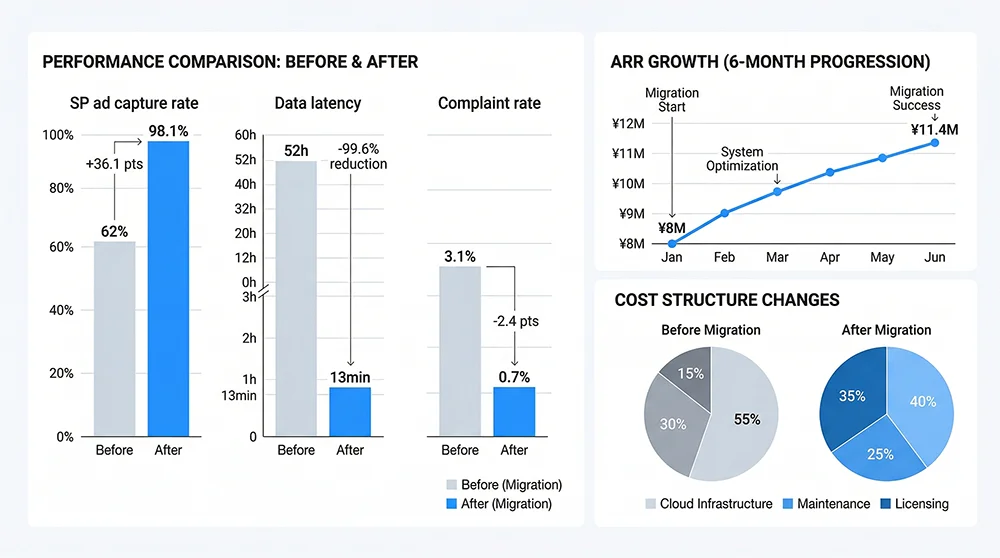

Layer two: the systematic blind spot in sponsored ad data. One of their highest-converting product features was real-time monitoring of competitors’ SP ad placements. It was also their worst-performing data pipeline. Internal audits showed a successful capture rate of roughly 62% for SP ad slot data — meaning nearly 4 out of every 10 ad position records that should have been returned were missing or incorrect. Customers who paid for this feature were working with an incomplete competitive advertising map, driving complaint rates that persistently exceeded 3% per month.
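To make the capture-rate metric concrete, here is a minimal sketch of how such an audit could be computed, assuming a hand-verified reference set of ad slots is available for comparison. The function name and slot identifiers are hypothetical and are not taken from the company’s audit tooling.

```python
def capture_rate(expected: set[str], captured: set[str]) -> float:
    """Share of expected ad slots that the crawler actually returned."""
    if not expected:
        raise ValueError("expected set must not be empty")
    # Only slots present in the reference set count; spurious records are ignored.
    return len(expected & captured) / len(expected)


# Hypothetical audit sample: 10 verified ad slots, 6 of them returned by the crawler.
expected_slots = {f"slot-{i}" for i in range(10)}
captured_slots = {f"slot-{i}" for i in range(6)} | {"slot-99"}  # slot-99 is spurious

rate = capture_rate(expected_slots, captured_slots)
print(f"capture rate: {rate:.0%}")  # capture rate: 60%
```

Counting only the intersection means an incorrect record does not inflate the score, which matches the audit’s framing of records as "missing or incorrect".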

Layer three: data latency that turned “real-time” into a fiction. Amazon’s ranking and advertising data changes at high frequency — Best Sellers rankings in competitive categories can shift meaningfully within an hour. Due to concurrent throughput limitations, this company’s full-category scraping cycle took approximately 52 hours. The “real-time data” sellers were viewing might be two days stale. In price-sensitive categories, that lag could turn a correct strategic decision into a costly mistake.

All three layers compounding together created a paradox: infrastructure maintenance costs kept climbing while data quality kept declining. The bigger the business grew, the worse this inverse relationship became. Michael’s year-end review noted: “We’re not a tool company that happens to scrape data. We’ve become a scraping company that happens to build tools — and we’re not even good at the scraping part.”

The Three-Month Decision: Why External API Won the Evaluation

When the team seriously began evaluating external data API vendors, the internal debate took nearly three months. The counterarguments against outsourcing were legitimate: what happens if the API provider goes down? Self-built infrastructure, however imperfect, at least stays under direct control. These weren’t irrational concerns — the market does include scraping API vendors with inconsistent reliability.

The engineering team built a rigorous evaluation matrix covering data latency, SP ad capture rate, concurrency ceiling, billing model, output format, SLA guarantees, customization support capability, and, critically, performance during Amazon peak traffic events. That last dimension was one few API vendors addressed directly: Prime Day and Black Friday are precisely when sellers need the most data, when scraping demand spikes 3-5x, and when Amazon’s anti-bot enforcement is at its most aggressive, all at the same time.

Three paths emerged: scale up their own infrastructure (estimated 4-5 additional engineers, doubled IP spend, 6-month build-out timeline); switch to a competitor data API (off-the-shelf product but ~75% SP ad capture rate and inflexible fixed-seat pricing); or adopt Pangolinfo Scrape API (usage-based billing, claimed 95%+ SP ad capture rate, structured JSON output, documented elastic scaling for peak periods).

Two things tipped the balance decisively toward Pangolinfo. First, a live data sample on a standardized URL test set: Pangolinfo’s SP ad position completeness ran approximately 36 percentage points higher than their existing crawler output — and critically, it could return zip-code-level ad data, something their self-built system couldn’t accomplish at all. Second, usage-based billing meant they could flex budget between peak and off-peak periods without maintaining expensive reserve capacity for peaks that only occurred a few weeks per year.

“We can finally become a product company again,” Michael said after the decision was made.

The 90-Day Migration: How the Pangolinfo Integration Was Executed

This company’s technical migration was structured in three phases over 90 days, with zero downtime to current product operations.

Phase 1: POC Validation (Days 1–15)

The team selected their three highest-volume, highest-complaint product lines for proof-of-concept validation: Amazon Best Sellers ranking collection, real-time SP ad placement monitoring, and bulk competitor ASIN detail page retrieval. Using Pangolinfo Scrape API’s sandbox environment, they completed interface integration, data format validation, and concurrency testing within two weeks. The key POC findings: SP ad slot capture rate hit 97.3% on the test set (vs. Pangolinfo’s stated 98% SLA), and zip-code-level ad data precision worked exactly as specified — enabling targeted regional ad monitoring that had been impossible before.

Phase 2: Gradual Cutover (Days 16–60)

Post-POC, they used a traffic-splitting approach: the same collection tasks ran through both Pangolinfo API (starting at 20%) and their existing crawlers (80%), with a parallel comparison layer validating data consistency in real time. They gradually raised the Pangolinfo share from 20% to 80%, then completed the full core pipeline cutover on day 60. A welcome side effect of the migration: Pangolinfo’s standardized JSON output prompted them to consolidate their internal data parsing layer from seven different format adapters down to a single intake pipeline, substantially reducing ongoing engineering overhead.
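The case study does not describe how the split or the comparison layer was implemented. One common way to build both pieces is sketched below, assuming hash-based routing so that a given task always lands on the same side, and a field-level diff for the consistency check. All names here (`route_to_new_api`, `diff_records`, the `asin-` task IDs) are hypothetical.

```python
import hashlib


def route_to_new_api(task_id: str, new_share: float) -> bool:
    """Deterministically send roughly `new_share` of tasks to the new API."""
    digest = hashlib.sha256(task_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # value in [0, 1)
    return bucket < new_share


def diff_records(legacy: dict, candidate: dict, fields: list[str]) -> list[str]:
    """Return the names of fields whose values differ between the two sources."""
    return [f for f in fields if legacy.get(f) != candidate.get(f)]


# Hypothetical usage: 20% of tasks go to the new API at the start of the cutover.
tasks = [f"asin-{i}" for i in range(10_000)]
share = sum(route_to_new_api(t, 0.20) for t in tasks) / len(tasks)
print(f"share routed to new API: {share:.1%}")

mismatches = diff_records(
    {"price": 19.99, "rank": 3, "title": "Example"},
    {"price": 19.99, "rank": 4, "title": "Example"},
    ["price", "rank", "title"],
)
print(mismatches)  # ['rank']
```

Because the bucket depends only on the task ID, raising the share from 0.20 to 0.80 keeps every task that was already on the new API where it is and only moves additional tasks over, which keeps the comparison history stable during the ramp.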

Phase 3: Capability Expansion (Days 61–90)

With the migration complete, they began releasing product features that had been technically blocked by their previous infrastructure constraints: full-category New Releases real-time monitoring (previously too expensive to run broadly), Customer Says semantic data collection (leveraging Scrape API’s complete capture of Amazon’s AI review summary field), and cross-category sponsored ad density analysis. The commercial impact of these new features appears in the results section below.

Throughout implementation, Pangolinfo’s technical team provided dedicated support: interface optimization recommendations, anomaly handling guidance, and frequency strategy calibration for specific collection scenarios. That support quality deserves explicit mention, because a vendor’s implementation support often matters as much as the product itself in determining whether a deployment succeeds.

Technical Reference: Core API Call Patterns from the Implementation

The following patterns are derived from this customer’s actual production implementation, shared here in anonymized form for teams facing similar challenges.

Pattern 1: Bulk Amazon Best Sellers Collection

import requests
import json

# Pangolinfo Scrape API: Amazon Best Sellers Collection
# API Docs: https://docs.pangolinfo.com/en-api-reference/universalApi/universalApi

API_ENDPOINT = "https://api.pangolinfo.com/v1/scrape"
API_KEY = "your_api_key_here"


def fetch_bestseller_list(category_url: str, zip_code: str = "10001") -> dict:
    """
    Collect Amazon Best Sellers ranking data with zip-code-level targeting
    Returns structured JSON with product list, ad slots, and metadata
    """
    payload = {
        "url": category_url,
        "render_js": True,  # Enable JS rendering for dynamic content
        "output_format": "json",  # Structured JSON output
        "geo": {
            "zip_code": zip_code,  # Regional targeting for price/ad accuracy
            "country": "US"
        },
        "parse_template": "amazon_bestsellers",  # Pre-built Amazon ranking parser
        "concurrent_limit": 20
    }
    headers = {
        "Authorization": f"Bearer {API_KEY}",
        "Content-Type": "application/json"
    }
    response = requests.post(API_ENDPOINT, json=payload, headers=headers, timeout=30)
    response.raise_for_status()
    data = response.json()
    return {
        "rank_list": data.get("products", []),
        "category": data.get("category_name"),
        "last_updated": data.get("crawled_at"),
        "ad_slots": data.get("sponsored_positions", []),  # SP ad position data
        "total_items": data.get("total_count", 0)
    }


# Multi-category batch collection
categories = [
    ("https://www.amazon.com/best-sellers-books/zgbs/books/", "10001"),
    ("https://www.amazon.com/best-sellers-kitchen/zgbs/kitchen/", "90210"),
    ("https://www.amazon.com/best-sellers-electronics/zgbs/electronics/", "60601"),
]

for url, zip_code in categories:
    data = fetch_bestseller_list(url, zip_code)
    print(f"Category: {data['category']} | Products: {data['total_items']} | Ad Slots: {len(data['ad_slots'])}")

Pattern 2: High-Frequency SP Ad Position Monitoring (Async Optimized)

import asyncio
from typing import Dict, List
from urllib.parse import quote_plus

import aiohttp

# Async concurrent collection: supports 10M+ daily page requests at scale


async def fetch_ad_positions(session: aiohttp.ClientSession, keyword: str, api_key: str) -> Dict:
    """Async collection of SP ad positions for a single keyword"""
    # quote_plus escapes spaces and special characters such as "&" in the keyword
    search_url = f"https://www.amazon.com/s?k={quote_plus(keyword)}"
    payload = {
        "url": search_url,
        "render_js": True,
        "output_format": "json",
        "parse_template": "amazon_search_ads",
        "geo": {"country": "US", "zip_code": "10001"},
        "extract_fields": [
            "sponsored_top",         # Top banner ad slots
            "sponsored_sidebar",     # Sidebar ad slots
            "sponsored_inline",      # Inline sponsored results (critical)
            "organic_rank_1_to_20"   # Organic top 20 for comparison
        ]
    }
    async with session.post(
        "https://api.pangolinfo.com/v1/scrape",
        json=payload,
        headers={"Authorization": f"Bearer {api_key}"}
    ) as resp:
        resp.raise_for_status()  # Surface HTTP errors instead of parsing an error body
        return await resp.json()


async def batch_monitor_keywords(keywords: List[str], api_key: str) -> List:
    """
    Concurrent keyword ad position monitoring
    Semaphore-controlled to stay within rate limits
    """
    semaphore = asyncio.Semaphore(20)
    timeout = aiohttp.ClientTimeout(total=30)  # Same 30-second budget as Pattern 1
    async with aiohttp.ClientSession(timeout=timeout) as session:
        async def limited_fetch(kw):
            async with semaphore:
                return await fetch_ad_positions(session, kw, api_key)

        # return_exceptions=True: a failed keyword comes back as an exception object
        # in the result list instead of cancelling the whole batch
        results = await asyncio.gather(*[limited_fetch(kw) for kw in keywords], return_exceptions=True)
        return results


# Monitor 500 core keywords for ad position changes
keywords = ["coffee maker", "air fryer", "bluetooth speaker"]  # expand to full list
results = asyncio.run(batch_monitor_keywords(keywords, "your_api_key"))

In production, this company layered Celery task queues with Redis for job management, added retry logic for transient errors, and implemented data versioning using Pangolinfo’s response-level metadata. The total infrastructure overhead is minimal relative to what comparable self-built systems require.
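The company’s Celery and Redis setup is not reproduced here. As a rough illustration of the retry logic mentioned above, the following library-free sketch retries a call with exponential backoff and jitter. `TransientError` and `call_with_retry` are hypothetical names, and the attempt count and delays are illustrative defaults rather than the customer’s settings.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for errors worth retrying (timeouts, HTTP 429/5xx)."""


def call_with_retry(
    func: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `func`, retrying on TransientError with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * 2 ** (attempt - 1)  # 0.5 s, 1 s, 2 s, ...
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries


# Demo: a fetch that fails twice, then succeeds on the third attempt.
attempts = {"count": 0}


def flaky_fetch() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("simulated timeout")
    return "ok"


result = call_with_retry(flaky_fetch, sleep=lambda _: None)  # no real sleeping in the demo
print(result, attempts["count"])  # ok 3
```

In a Celery deployment the same policy would normally be expressed through the task’s own retry options rather than a hand-written loop. The sketch only shows the behavior.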

By the Numbers: Quantified Outcomes and ROI Breakdown

Six months after completing the Pangolinfo integration (data through May 2025), the company conducted an internal performance review. The following metrics are shared with customer authorization, anonymized per their request, and form the evidentiary core of this customer success story.

Collection Scale: 1M/Month → 10M+/Day

Before: monthly effective data collection of approximately 870,000 records (de-duplicated, validated). After: daily effective collection holding steady at 11–14 million records. Monthly equivalent: 330–420 million records, roughly a 380x to 480x increase over the previous monthly volume. The scale multiplier looks dramatic but the mechanism is straightforward: prior infrastructure constraints had forced the team to limit monitoring to core ASINs in core categories. The new API-based system made full-category, full-keyword coverage economically and technically viable for the first time.
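The monthly equivalent and the multiplier follow directly from the figures above. A quick check, assuming a 30-day month (the only value here not stated in the review):

```python
before_monthly = 870_000                 # records per month before the migration
after_daily = (11_000_000, 14_000_000)   # low and high daily volume after the migration
days_per_month = 30                      # assumption for the monthly equivalent

after_monthly = tuple(d * days_per_month for d in after_daily)
multipliers = tuple(m / before_monthly for m in after_monthly)

print(after_monthly)                     # (330000000, 420000000)
print([round(x) for x in multipliers])   # [379, 483]
```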

Data Quality: Across-the-Board Improvement

SP ad slot capture rate: 62% → 98.1% (+36 percentage points). This single metric was the most direct driver of improved customer retention. Data latency: average 52 hours → average 13 minutes, a transformation that fundamentally changed product value for sellers in time-sensitive categories. Customer Says data coverage (Amazon’s AI-generated review summary field): 0% → 91% — a field that had been completely inaccessible now powering an entirely new product capability.

Cost Structure: From Fixed High-Cost to Elastic Low-Cost

Before: monthly infrastructure cost (IP resources + servers + engineering hours, fully loaded) approximately RMB 216,000, against 870K records/month. Effective cost: ~RMB 0.25/record. After: usage-based API billing averaging RMB 98,000/month against 300M+ record capacity. Effective cost: ~RMB 0.00033/record — a reduction of approximately 99.9%. Even accounting for test requests and re-scrapes, the realized cost reduction exceeded 68% on the team’s internal accounting.
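The per-record figures can be reproduced from the monthly totals. The sketch below uses only the numbers quoted above and treats the 300M capacity figure as the post-migration monthly record count, which is how the per-record cost in the text appears to be derived.

```python
before_cost, before_records = 216_000, 870_000      # RMB per month, records per month
after_cost, after_records = 98_000, 300_000_000     # RMB per month, records per month

before_unit = before_cost / before_records          # RMB per record, before
after_unit = after_cost / after_records             # RMB per record, after
reduction = 1 - after_unit / before_unit            # relative drop in unit cost

print(f"{before_unit:.3f} {after_unit:.5f} {reduction:.2%}")  # 0.248 0.00033 99.87%
```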

The cost reduction cascaded into two second-order effects: first, most of the 7-engineer crawler maintenance effort was redirected to feature development, accelerating new feature release cadence from roughly 1-2 per quarter to 2-3 per month; second, lower unit costs made it viable to extend data collection to additional platforms (Walmart, Shopee) as a foundation for the company’s multi-platform expansion roadmap in H2 2025.

Business Outcomes: Data Quality Reflected in Commercial Metrics

Paid conversion rate: 18.3% → 22.7% (within four months), with “Ad Position Tracker” and “Customer Says Insights” as the primary drivers. Monthly churn: 11.8% → 6.4%, driven by a 78% drop in data-quality-related complaints. Monthly ARR: from RMB 8 million to approximately RMB 11.4 million over six months (+42.5%), with an estimated 60%+ of the incremental revenue attributable to data-quality-dependent feature improvements.

ROI: 14.3x Over the Period

Conservative calculation in this customer success story: annualized incremental ARR of approximately RMB 6.8 million against total investment (API cost + migration engineering + integration labor) of approximately RMB 2.16 million in year-one terms, yielding a long-term ROI of ~14.3x. This aligns closely with Pangolinfo’s published benchmark range for enterprise API customers (12-18x), which provides useful external validation for the methodology.

Three Takeaways This Customer Success Story Actually Earns

The company in this customer success story wasn’t a startup scraping on a shoestring. They had a 30-person engineering team, adequate budget, and dedicated infrastructure personnel. They still hit the ceiling of self-built scraping. That matters, because it means this isn’t a problem of execution. It’s a structural choice problem, and the lesson holds for any tool company or SaaS business that treats data as a core product asset.

First, scraper maintenance cost growth is non-linear. Below a certain collection volume threshold, self-built crawlers are economically defensible. Beyond roughly 1-2 million records per day, maintenance costs begin compounding faster than data quality can improve — this pattern recurs consistently across the industry, and it’s the central structural lesson of this case.

Second, SP ad slot capture rate is the most underestimated data quality metric in this space. Many tool products claim “real-time” data while glossing over completeness. If your product surfaces competitive advertising intelligence and your capture rate sits at 60-70%, you’re showing sellers a map with a third of the territory missing. That’s not a minor accuracy issue — it’s a product credibility problem. The 98% capture rate delivered by Pangolinfo Scrape API was the single data point that most rapidly moved this customer’s procurement decision.

Third, usage-based billing elasticity may matter more to tool companies than the technical benchmarks. Amazon peak events — Prime Day, Black Friday, Cyber Monday — coincide precisely with maximum seller data demand and maximum Amazon anti-bot enforcement. If your collection infrastructure is sized to fixed capacity, you’ll either overload at peak or waste excess capacity in off-peak months. Neither is efficient.
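The fixed-capacity trade-off can be illustrated with a toy model. The demand profile below is purely hypothetical (ten ordinary months and two peak months at 4x, within the 3-5x spike range mentioned earlier) and is expressed in arbitrary units, not the customer’s volumes.

```python
# Hypothetical yearly demand in arbitrary units: 10 ordinary months, 2 peak months at 4x.
monthly_demand = [100] * 10 + [400] * 2


def idle_share(capacity: int, demand: list[int]) -> float:
    """Fraction of provisioned capacity left unused over the period."""
    used = sum(min(capacity, d) for d in demand)
    return 1 - used / (capacity * len(demand))


def unmet_share(capacity: int, demand: list[int]) -> float:
    """Fraction of total demand that fixed capacity cannot serve."""
    served = sum(min(capacity, d) for d in demand)
    return 1 - served / sum(demand)


print(f"sized for peak:     {idle_share(400, monthly_demand):.1%} of capacity idle")
print(f"sized for baseline: {unmet_share(100, monthly_demand):.1%} of demand unmet")
```

Under these assumed numbers, sizing for the peak leaves 62.5% of capacity idle over the year, while sizing for the baseline leaves a third of demand unserved. Usage-based billing avoids having to pick one of the two.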

If your team is evaluating enterprise-grade data collection infrastructure, or currently experiencing the maintenance burden this customer success story describes, Pangolinfo Scrape API offers a free trial with access to dedicated technical consultation for your specific collection scenario.

Ready to replicate this customer success story? Start your free trial of Pangolinfo Scrape API and get dedicated technical advisor support for your enterprise data collection scale-up. Read the docs →