Late 2025. The AI lead at a mid-sized cross-border e-commerce SaaS company wrote in an internal memo: “Our agent can answer virtually any question — except who’s today’s Best Seller on Amazon, or what price a competitor adjusted to last night.” That sentence cuts to the core of the deepest tension in the AI-plus-e-commerce space right now: demand for e-commerce agent training data is scaling at a pace nobody predicted, while the infrastructure for acquiring that data has barely moved in three years.

The capability curve of large language models has consistently outrun every forecast. From GPT-4 to Claude 3.5 and a growing roster of vertically specialized models, “agent” has moved from buzzword to commercial reality at serious scale. E-commerce agents, AI systems that can autonomously handle product research, competitor monitoring, pricing decisions, and ad campaign optimization, are rapidly becoming standard equipment for established cross-border sellers, SaaS platforms, and brand operators. Gartner’s early 2026 forecast projects that by 2027, over 60% of B2B e-commerce decisions will involve AI agent assistance. That projection rests on one critical assumption: a sustained supply of high-quality e-commerce data for fine-tuning LLMs.

The problem lies precisely in that assumption. Training an agent that genuinely performs in e-commerce contexts doesn’t require generalized internet-crawled corpora. It requires highly time-sensitive, structurally clean e-commerce domain data, such as the actual listed price of a specific ASIN in a specific delivery zone, the day-over-day movement of Best Seller rankings within a niche category, keyword density patterns in top-competing listings, and the recurring pain points surfacing in recent reviews. This data has to exist, be current, be available at scale, and arrive in formats clean enough to enter a training pipeline without extensive preprocessing. Acquiring this kind of data at scale is, somewhat ironically, one of the clearest capability gaps in current AI systems.

The industry keeps asking “is our agent smart enough?” The more important question to ask first: is the data we’re feeding it actually real?

Scaling E-Commerce Data Collection: The Structural Blind Spot of AI Systems

Most AI engineers building e-commerce agents hit their first real wall at exactly this step — data acquisition. Picture a typical scenario: you want your agent to continuously track the competitive landscape in a specific Amazon category, pulling complete listing data for the Top 100 BSR products every day. In theory, this sounds manageable: crawl the pages, parse the structure, store JSON. In practice, Amazon’s anti-bot mechanisms, dynamic JavaScript rendering, geographically differentiated pricing, and frequent structural updates to page layouts combine to transform that “simple” requirement into an engineering resource sinkhole.

The more fundamental issue: large language models don’t have the intrinsic ability to actively and continuously acquire structured data from external systems. An LLM is a static compression of knowledge — its “knowing” is a snapshot of the internet at a training cutoff date. And e-commerce data derives its value precisely from its dynamism. A pricing data point is effectively stale after six hours. A batch of user reviews processed with a month’s lag has near-zero value for product sourcing decisions. Time is the variable that transforms data from an asset into a liability.
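To make that decay operational, a pipeline can tag every record with its capture time and drop anything older than a per-type time-to-live before it reaches training or retrieval. A minimal sketch, assuming each record carries a `data_type` and a timezone-aware `captured_at` timestamp; only the six-hour price TTL comes from the text, while the other TTL values and all names here are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import List, Optional

# Hypothetical per-type time-to-live values. Only the six-hour price TTL
# comes from the text; the others are illustrative placeholders.
FRESHNESS_TTL = {
    "price": timedelta(hours=6),
    "bsr_rank": timedelta(hours=24),
    "reviews": timedelta(days=7),
}


def is_fresh(record: dict, now: datetime) -> bool:
    """Return True if the record is no older than the TTL for its data type."""
    ttl = FRESHNESS_TTL.get(record["data_type"])
    if ttl is None:
        return False  # Unknown data types are treated as stale by default
    return now - record["captured_at"] <= ttl


def drop_stale(records: List[dict], now: Optional[datetime] = None) -> List[dict]:
    """Keep only records that are still within their freshness window."""
    now = now or datetime.now(timezone.utc)
    return [r for r in records if is_fresh(r, now)]


if __name__ == "__main__":
    now = datetime(2025, 11, 1, 12, 0, tzinfo=timezone.utc)
    records = [
        {"asin": "B000000001", "data_type": "price",
         "captured_at": now - timedelta(hours=2)},
        {"asin": "B000000002", "data_type": "price",
         "captured_at": now - timedelta(hours=7)},
    ]
    print([r["asin"] for r in drop_stale(records, now)])  # ['B000000001']
```

Treating unknown data types as stale is a deliberately conservative default: a record with no declared freshness policy never silently reaches the model.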

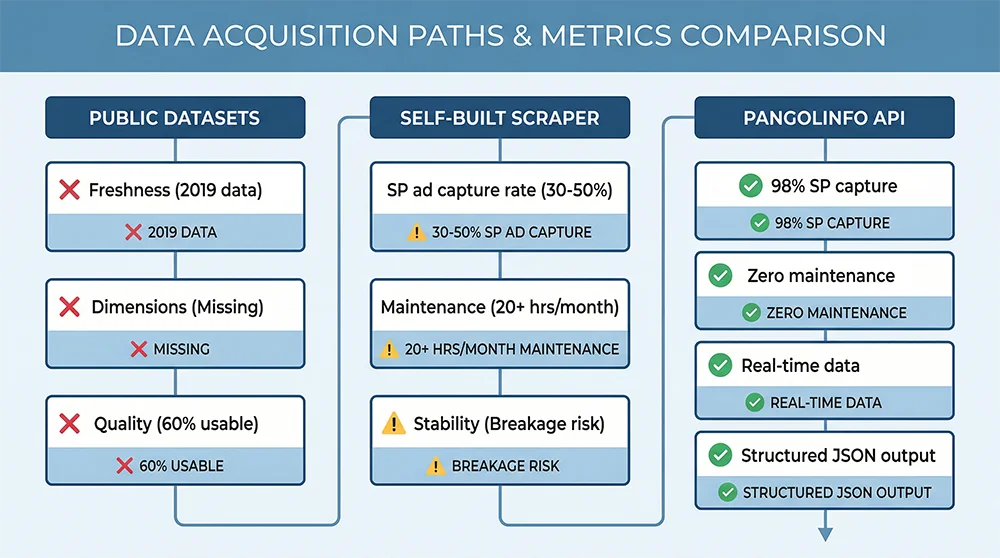

Three Fatal Limitations of Public E-Commerce Datasets

Early-stage teams frequently turn to public e-commerce datasets, such as the Amazon product data collections on Hugging Face, MAVE, or ESCI (Amazon’s Shopping Queries Dataset). These datasets have genuine value in academic research. In production agent development, however, they expose three structural failures that can’t be engineered around.

First: temporal obsolescence. Public e-commerce datasets update on yearly timescales, if at all. The MAVE dataset, for instance, was released in 2022 but is built from Amazon product data collected years earlier. In today’s market, many of those products no longer exist, the competitive price bands in those categories have shifted substantially, and the search keywords that characterized those categories have changed beyond recognition. An agent trained on this data sees current market conditions through frosted glass.

Second: missing data dimensions. Public datasets typically cover product fundamentals: title, description, ASIN, price. But production e-commerce agents need much richer dimensionality: Sponsored Products (SP) ad slot occupancy, actual displayed prices by delivery zone, Customer Says aggregated sentiment, New Releases ranking dynamics, and BSR movement curves over time. These dimensions are nearly absent from public datasets.

Third: format inconsistency and the hidden cost of cleaning. Public datasets are typically assembled from multiple sources, with inconsistent field naming conventions, arbitrary handling of missing values, and a mix of residual HTML markup with plain text. A modestly staffed AI team can find itself allocating 30% or more of engineering time to cleaning pipelines — time that isn’t going toward anything that actually improves agent capability.
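Much of that cleaning work is mechanical: mapping inconsistent field names onto one schema, stripping leftover HTML, and making missing values explicit. A minimal standard-library sketch of such a normalization step; the alias table and canonical field names are hypothetical and would be built from whichever sources are actually in use.

```python
import html
import re
from typing import Any, Optional

# Hypothetical mapping from source-specific field names to one canonical schema.
FIELD_ALIASES = {
    "title": ("title", "product_title", "name"),
    "price": ("price", "list_price", "Price"),
    "description": ("description", "desc", "product_description"),
}

TAG_RE = re.compile(r"<[^>]+>")
WS_RE = re.compile(r"\s+")


def clean_text(value: Any) -> Optional[str]:
    """Strip residual HTML tags and entities; map empty results to None."""
    if value is None:
        return None
    text = html.unescape(TAG_RE.sub(" ", str(value)))
    text = WS_RE.sub(" ", text).strip()
    return text or None


def parse_price(value: Any) -> Optional[float]:
    """Extract a numeric price from strings like '$1,299.99'; None if absent."""
    text = clean_text(value)
    if text is None:
        return None
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    return float(match.group().replace(",", "")) if match else None


def normalize(raw: dict) -> dict:
    """Map a raw record onto the canonical schema, with explicit None for gaps."""
    out = {}
    for field, aliases in FIELD_ALIASES.items():
        value = next((raw[a] for a in aliases if raw.get(a) not in (None, "")), None)
        out[field] = parse_price(value) if field == "price" else clean_text(value)
    return out


if __name__ == "__main__":
    raw = {"product_title": "<b>Wireless Earbuds</b>", "list_price": "$1,299.99",
           "desc": "Noise&nbsp;cancelling<br>30h battery"}
    print(normalize(raw))
```

Returning `None` rather than dropping a field keeps every record the same shape, so downstream code can count and filter gaps instead of tripping over missing keys.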

Building Your Own Scrapers: Three Structural Risks

Recognizing the limits of public datasets, many teams attempt to build custom scrapers. This path carries its own systemic risks. Amazon’s anti-scraping capabilities are among the most sophisticated in the industry: IP blocking, behavioral fingerprinting, dynamic JavaScript rendering, and CAPTCHA challenges. These mechanisms stack in ways that make maintaining a stable Amazon scraper roughly comparable in operational overhead to running a moderately complex production service.

Compounding the challenge, Amazon updates page structures on a rolling basis, and each update may silently invalidate your parsing logic. Often the first sign is a data sync job that failed sometime overnight, which means hours of uncollected data before the issue is diagnosed and patched. At today’s pace of AI iteration, the engineering bandwidth consumed by scraper maintenance is an opportunity cost drawn directly from model improvement work.
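One inexpensive safeguard against silent parser breakage is to check, after every run, what fraction of records is missing each critical field, and to fail loudly when that fraction jumps. A minimal sketch; the field list and the 20% threshold are hypothetical values to tune per pipeline.

```python
from typing import Dict, Iterable, List

# Hypothetical set of fields every product record is expected to carry.
CRITICAL_FIELDS = ("asin", "title", "price", "rating")


def missing_rates(records: List[dict],
                  fields: Iterable[str] = CRITICAL_FIELDS) -> Dict[str, float]:
    """Fraction of records in which each field is absent, None, or empty."""
    total = len(records)
    if total == 0:
        return {f: 1.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) in (None, "", [])) / total
        for f in fields
    }


def check_batch(records: List[dict], max_missing: float = 0.2) -> List[str]:
    """Return the fields whose missing rate exceeds the threshold.

    A non-empty result often means the page layout changed and the parser
    is silently returning empty values, so the run should alert, not save.
    """
    rates = missing_rates(records)
    return [f for f, rate in rates.items() if rate > max_missing]


if __name__ == "__main__":
    batch = [
        {"asin": "B01", "title": "Earbuds", "price": 29.99, "rating": 4.5},
        {"asin": "B02", "title": "Speaker", "price": None, "rating": 4.1},
        {"asin": "B03", "title": "Headset", "price": None, "rating": 4.3},
    ]
    broken = check_batch(batch)
    if broken:
        print(f"Parser drift suspected; fields failing: {broken}")
```

Run as the last step of each collection job, this turns a broken parser into an immediate alert instead of a gap discovered the next morning.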

There’s a third dimension increasingly impossible to ignore: regulatory exposure. The space where custom scraping operates legally has narrowed as data privacy frameworks have matured. Platform terms of service violations, even if civil rather than criminal, carry real reputational and operational risk for any company whose AI products depend on consistent data access.

Why AI’s Accelerating Iteration Amplifies, Not Reduces, Data Infrastructure Importance

There’s a common but mistaken inference that as model capabilities grow, external data infrastructure should matter less. The actual dynamic runs the opposite way. Stronger models are more sensitive to data quality: the better a model gets at exploiting whatever signal exists, the more visibly the difference between clean, representative training data and noisy, biased data shows up in its outputs. Faster iteration cycles also amplify the cost of unstable data pipelines, because every iteration requires validating data quality, and ad-hoc data acquisition introduces hard-to-trace quality variance that makes it difficult to tell whether a change in agent performance came from the model or from the data.
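A lightweight way to keep iterations attributable is to fingerprint every dataset snapshot before training, so each model run can be tied to the exact data it saw. A minimal sketch, assuming JSONL datasets on local disk; the manifest fields are a hypothetical choice, not a standard format.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def dataset_manifest(jsonl_path: str) -> dict:
    """Build a small manifest identifying a JSONL dataset snapshot.

    The SHA-256 digest changes if any byte of the file changes, so two
    training runs with the same digest saw identical data.
    """
    path = Path(jsonl_path)
    digest = hashlib.sha256()
    records = 0
    with path.open("rb") as f:
        for line in f:
            digest.update(line)  # Hashing line by line equals hashing the whole file
            if line.strip():
                records += 1
    return {
        "file": path.name,
        "sha256": digest.hexdigest(),
        "records": records,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        sample = Path(tmp) / "snapshot.jsonl"
        sample.write_text('{"asin": "B01"}\n{"asin": "B02"}\n', encoding="utf-8")
        manifest = dataset_manifest(str(sample))
        # Store next to the training run's config, e.g. as manifest.json
        print(json.dumps(manifest, indent=2))
```

Logging the digest alongside each evaluation result is enough to answer, months later, whether two agent versions differed in data or only in model.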

The implication: at this stage of AI’s evolution, stable, scalable data infrastructure for e-commerce agent training is becoming the invisible competitive separator between frontier AI teams and the rest. The separator isn’t visible in demo videos. It shows up six months later in the quality gap between agents trained on consistently fresh, structurally clean data and those that weren’t.

The Cost-Benefit Matrix Across Three Data Acquisition Paths

Before getting specific, let’s define a common comparison baseline. Assume a production requirement: daily, stable acquisition of complete product data for the Top 200 listings across three target Amazon US categories (full listing details, BSR rankings, review summaries, and ad slot data) to support monthly iteration of an e-commerce product research agent.

| Approach | Startup Cost | Monthly Operating Cost | Data Freshness | Stability | Scalability | Regulatory Risk |
|---|---|---|---|---|---|---|
| Custom Scraper | High (2–3 months of engineer time) | High (dedicated maintenance + proxy costs) | Variable, maintenance-dependent | Low (frequent breakages) | Poor (page changes require rebuilds) | High |
| Public Datasets | Near zero (download and use) | Negligible | Very poor (annual updates or stale) | High (static files) | None (fixed dimensions) | Low |
| Commercial Data API (Pangolinfo) | Low (API integration under 1 day) | Medium (usage-based, low marginal cost) | Excellent (minute-level real-time data) | Very high (SLA-backed) | Excellent (multi-platform, multi-dimensional, elastic) | Very low (compliant data collection) |

On this matrix, the commercial API path comes out ahead on nearly every dimension, with monthly usage cost as the main trade-off. Once you fold in the hidden costs of the custom scraper path (engineer time, the opportunity cost of maintenance incidents, and the cost of retraining after data quality failures), the total ROI of a commercial API can run several times higher over a comparable time horizon.
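The hidden-cost argument can be made explicit with a simple total-cost-of-ownership model over a fixed horizon. A minimal sketch; every input (engineering hours, hourly rate, infrastructure or API spend, incident counts and costs) is a hypothetical placeholder to replace with your own estimates, and the output says nothing about any vendor’s actual pricing.

```python
from dataclasses import dataclass


@dataclass
class PathCosts:
    """Cost inputs for one data acquisition path (all values hypothetical)."""
    build_hours: float                  # One-off engineering time to get running
    maintenance_hours_per_month: float  # Ongoing fixes and upkeep
    infra_per_month: float              # Proxies, servers, or API usage fees
    incidents_per_month: float          # Breakages that lose data or need rework
    cost_per_incident: float            # Lost data, re-collection, retraining


def total_cost(p: PathCosts, months: int, hourly_rate: float) -> float:
    """Total cost of ownership over the horizon, in one currency unit."""
    one_off = p.build_hours * hourly_rate
    monthly = (
        p.maintenance_hours_per_month * hourly_rate
        + p.infra_per_month
        + p.incidents_per_month * p.cost_per_incident
    )
    return one_off + months * monthly


if __name__ == "__main__":
    # Placeholder inputs for illustration only, not measured costs.
    scraper = PathCosts(400, 40, 800, 2, 1500)
    api = PathCosts(8, 4, 1200, 0.1, 1500)
    for name, path in (("custom scraper", scraper), ("commercial API", api)):
        print(name, total_cost(path, months=12, hourly_rate=100))
```

The useful part is less the totals than the structure: for a custom scraper, the maintenance and incident terms recur every month, while a usage-based API mostly shifts cost into the infrastructure term.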

Pangolinfo API: Infrastructure Built for Scaled E-Commerce Data Collection

In the specific context of building data infrastructure for e-commerce agents, Pangolinfo Scrape API has become the default choice for a growing number of AI teams — not because it’s marketed well, but because its design logic maps directly onto what AI teams actually need: structured output, high concurrency support, real-time data updates, and transparent compliant collection mechanisms.

Core Capabilities: What Your Agent Needs, You Can Get

Building a high-quality e-commerce agent training dataset requires spanning multiple data dimensions simultaneously. Pangolinfo’s product suite covers these dimensions with precision:

Product listing and detail data: Scrape API retrieves complete product information from Amazon, Walmart, Shopify, and other major platforms — title, description, A+ content, price ranges, variant configurations, image counts, seller information, FBA/FBM status. Output formats include raw HTML, Markdown, and structured JSON. That last format is particularly valuable for AI teams: it eliminates most preprocessing overhead and integrates directly with standard training frameworks.

BSR rankings and category data: This is the core raw material for building product research agent training datasets. Pangolinfo supports scheduled scraping of Best Seller, New Releases, and Movers & Shakers rankings by category, with timestamps. Combined with historical storage, you can build time-series datasets with ranking trajectory curves — directly valuable for training agents to identify trending category dynamics.
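Once daily snapshots are stored with timestamps, ranking trajectories reduce to simple differences between snapshots. A minimal sketch, assuming each snapshot maps ASIN to BSR rank for one category on one day; the function names and the `min_jump` threshold are hypothetical.

```python
from typing import Dict, List, Tuple

Snapshot = Dict[str, int]  # ASIN -> BSR rank (1 = best seller)


def rank_changes(previous: Snapshot, current: Snapshot) -> Dict[str, int]:
    """Positive values mean the product climbed (its rank number fell)."""
    return {
        asin: previous[asin] - rank
        for asin, rank in current.items()
        if asin in previous
    }


def top_movers(previous: Snapshot, current: Snapshot,
               min_jump: int = 10) -> List[Tuple[str, int]]:
    """ASINs that climbed at least `min_jump` places, biggest climb first."""
    changes = rank_changes(previous, current)
    movers = [(asin, d) for asin, d in changes.items() if d >= min_jump]
    return sorted(movers, key=lambda item: item[1], reverse=True)


def new_entrants(previous: Snapshot, current: Snapshot) -> List[str]:
    """ASINs ranked today that were absent from the previous snapshot."""
    return sorted(asin for asin in current if asin not in previous)


if __name__ == "__main__":
    day1 = {"B01": 5, "B02": 40, "B03": 80}
    day2 = {"B01": 6, "B02": 12, "B03": 55, "B04": 30}
    print(top_movers(day1, day2))    # [('B02', 28), ('B03', 25)]
    print(new_entrants(day1, day2))  # ['B04']
```

Features like these (climb size, new entrants) can be attached to each training sample so the agent learns from trajectories rather than single-day ranks.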

Ad slot data (industry-leading 98% capture rate): SP ad slot data is among the hardest e-commerce dimensions to acquire reliably, and Pangolinfo’s 98% SP ad slot capture rate is recognized as the industry benchmark. This means AI teams can build training datasets that capture the complete competitive landscape of a search results page, giving agents what they need to understand how paid placement relates to organic ranking.
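With sponsored and organic placements captured from the same results page, a basic measure of paid pressure is the share of top positions held by ads, along with which brands occupy both an ad slot and an organic slot. A minimal sketch, assuming each result is a dict with hypothetical `position`, `brand`, and `is_sponsored` keys; the real response fields should be taken from the API documentation.

```python
from typing import List, Set


def sponsored_share(results: List[dict], top_n: int = 20) -> float:
    """Fraction of the first `top_n` result positions occupied by ads."""
    top = sorted(results, key=lambda r: r["position"])[:top_n]
    if not top:
        return 0.0
    return sum(1 for r in top if r["is_sponsored"]) / len(top)


def brands_in_both(results: List[dict]) -> Set[str]:
    """Brands that appear in both a sponsored and an organic slot."""
    paid = {r["brand"] for r in results if r["is_sponsored"]}
    organic = {r["brand"] for r in results if not r["is_sponsored"]}
    return paid & organic


if __name__ == "__main__":
    serp = [
        {"position": 1, "brand": "Acme", "is_sponsored": True},
        {"position": 2, "brand": "Zen", "is_sponsored": False},
        {"position": 3, "brand": "Acme", "is_sponsored": False},
        {"position": 4, "brand": "Bolt", "is_sponsored": True},
    ]
    print(sponsored_share(serp, top_n=4))  # 0.5
    print(brands_in_both(serp))            # {'Acme'}
```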

Review data (precise sentiment training corpus): The Reviews Scraper API enables systematic collection of Amazon reviews, including rating distributions, high-frequency positive and negative terms, verified purchase flags, and review time series. This data is well suited as a training corpus for agents that perform user feedback analysis, negative review alerting, and product improvement recommendations.
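Turning raw reviews into that kind of corpus is largely aggregation: a rating distribution restricted to verified purchases, and the most frequent terms in low-star reviews. A minimal sketch, assuming review dicts with hypothetical `rating`, `verified`, and `text` keys; the tiny stopword list and regex tokenizer are deliberate simplifications.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple

# Deliberately tiny stopword list for illustration; use a proper one in practice.
STOPWORDS = {"the", "a", "an", "and", "it", "is", "to", "of", "after", "this", "i"}


def rating_distribution(reviews: List[dict]) -> Dict[int, int]:
    """Count verified-purchase reviews per star rating (1 to 5)."""
    counts = Counter(int(r["rating"]) for r in reviews if r.get("verified"))
    return {star: counts.get(star, 0) for star in range(1, 6)}


def negative_terms(reviews: List[dict], max_rating: int = 2,
                   top_k: int = 5) -> List[Tuple[str, int]]:
    """Most frequent non-stopword terms in verified low-star reviews."""
    words: Counter = Counter()
    for r in reviews:
        if r.get("verified") and r["rating"] <= max_rating:
            tokens = re.findall(r"[a-z']+", r["text"].lower())
            words.update(t for t in tokens if t not in STOPWORDS)
    return words.most_common(top_k)


if __name__ == "__main__":
    reviews = [
        {"rating": 1, "verified": True, "text": "Battery died after a week"},
        {"rating": 2, "verified": True, "text": "Battery drains fast, weak bass"},
        {"rating": 5, "verified": True, "text": "Great sound"},
        {"rating": 1, "verified": False, "text": "Fake review"},
    ]
    print(rating_distribution(reviews))      # {1: 1, 2: 1, 3: 0, 4: 0, 5: 1}
    print(negative_terms(reviews, top_k=1))  # [('battery', 2)]
```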

Delivery zone-specific pricing: A commonly overlooked but high-value data dimension. Amazon displays meaningfully different prices to users in different delivery zones, and capturing accurate local pricing data is essential for training agents that need to understand localized competitive pricing dynamics.
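Given the same ASIN priced from several delivery zones, the useful signals are the price spread and which zone sees the lowest price. A minimal sketch, assuming observations keyed by ZIP code (the sample values are made up for illustration); how a delivery zone is specified in an actual API request is not covered here.

```python
from typing import Dict


def price_spread(prices_by_zip: Dict[str, float]) -> dict:
    """Summarize how one product's displayed price varies across delivery zones."""
    if not prices_by_zip:
        raise ValueError("no price observations")
    low_zip = min(prices_by_zip, key=prices_by_zip.get)
    high_zip = max(prices_by_zip, key=prices_by_zip.get)
    low, high = prices_by_zip[low_zip], prices_by_zip[high_zip]
    return {
        "min_price": low,
        "min_zip": low_zip,
        "max_price": high,
        "max_zip": high_zip,
        "spread_pct": round((high - low) / low * 100, 2),
    }


if __name__ == "__main__":
    observed = {"10001": 24.99, "94105": 26.49, "60601": 24.99}
    print(price_spread(observed))
```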

Customer Says aggregated sentiment: Amazon’s Customer Says module is an AI-generated synthesis of user sentiment across reviews. The ability to capture this module’s output is uniquely valuable for researchers studying Amazon’s own AI interpretation logic and for building reverse-engineering-style training corpora.

Built for AI Engineers: The Shortest Path from Data Collection to Training Dataset

For AI teams, Pangolinfo’s core value isn’t just the breadth of data coverage; it’s how short the path from API response to training-ready dataset actually is. The structured JSON output slots directly into common data tooling, from Hugging Face Datasets on the training side to LlamaIndex and LangChain on the retrieval side, with no intermediate format conversion layer. Paired with a scheduler or message queue, it becomes straightforward to build data pipelines that maintain whatever update frequency your training cycle requires.
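On the scheduling side, the core logic is computing when the next collection should run. A minimal standard-library sketch, assuming one daily run at a fixed UTC hour; a production setup would more likely use cron, a workflow orchestrator, or queue-driven workers, and `run_collection` is a hypothetical placeholder for the actual job.

```python
import time
from datetime import datetime, timedelta, timezone


def next_run(now: datetime, hour_utc: int) -> datetime:
    """Next occurrence of hour_utc:00 UTC strictly after `now` (must be tz-aware)."""
    candidate = now.astimezone(timezone.utc).replace(
        hour=hour_utc, minute=0, second=0, microsecond=0
    )
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


def run_collection() -> None:
    """Hypothetical placeholder for the actual collection job."""
    print(f"collecting at {datetime.now(timezone.utc).isoformat()}")


def run_daily(hour_utc: int = 2, max_runs: int = 1) -> None:
    """Sleep until each scheduled time, then run the job (bounded for demos)."""
    for _ in range(max_runs):
        target = next_run(datetime.now(timezone.utc), hour_utc)
        time.sleep(max(0.0, (target - datetime.now(timezone.utc)).total_seconds()))
        run_collection()


if __name__ == "__main__":
    print(next_run(datetime.now(timezone.utc), hour_utc=2))
```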

For e-commerce agents built on a RAG (Retrieval-Augmented Generation) architecture, Pangolinfo’s Markdown output format is especially well-suited: it can be vectorized directly as document content and stored in vector databases like Pinecone, Weaviate, or Chroma, forming a live e-commerce knowledge base that gives agents access to real-time market data during inference. This is the cleanest solution to the problem of “the model doesn’t know who today’s Best Seller is” — without the cost and latency of model retraining.
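Before Markdown output is embedded, it is usually split into retrieval-sized chunks. A minimal sketch that splits on Markdown headings and slices long sections by character count, assuming the API returns a Markdown string; the size limit and metadata fields are hypothetical choices, and the embedding and vector-database calls are deliberately left out.

```python
import re
from typing import List


def chunk_markdown(markdown: str, source_id: str, max_chars: int = 1500) -> List[dict]:
    """Split Markdown at headings, then slice each section into max_chars pieces.

    Overflow pieces get their section heading re-attached (adding a few
    characters beyond max_chars) so retrieved text stays self-describing.
    """
    sections = re.split(r"(?m)^(?=#{1,6} )", markdown)
    chunks = []
    for section in sections:
        section = section.strip()
        if not section:
            continue
        heading = section.splitlines()[0] if section.startswith("#") else ""
        for start in range(0, len(section), max_chars):
            body = section[start:start + max_chars]
            if start > 0 and heading:
                body = f"{heading}\n{body}"
            chunks.append({"id": f"{source_id}-{len(chunks)}", "text": body,
                           "heading": heading})
    return chunks


if __name__ == "__main__":
    doc = "# Wireless Earbuds\nPrice: $29.99\n\n## Top reviews\nGreat battery life."
    for c in chunk_markdown(doc, source_id="B0EXAMPLE"):
        print(c["id"], repr(c["text"]))
```

Splitting at headings rather than at fixed offsets keeps listing sections (price block, reviews block) intact, which tends to make retrieved chunks easier for the agent to use.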

In Practice: Building an E-Commerce Agent Training Data Pipeline with Pangolinfo API

The following Python example sketches the core of such a pipeline on Pangolinfo’s API, from collecting Amazon BSR data for a set of categories to formatting the results as agent training samples in JSONL.

```python
import json
import os
import time
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

import requests

# Pangolinfo Scrape API configuration.
# Endpoint and payload fields follow this example; confirm them against the
# Pangolinfo API documentation before use.
PANGOLINFO_API_KEY = os.environ.get("PANGOLINFO_API_KEY", "your_api_key_here")
API_ENDPOINT = "https://api.pangolinfo.com/v2/amazon/browse-node"


def fetch_bsr_data(category_id: str, marketplace: str = "amazon.com") -> dict:
    """
    Fetch BSR ranking data for a given Amazon category.

    Args:
        category_id: Amazon Browse Node ID for the target category
        marketplace: Target marketplace domain

    Returns:
        Structured BSR data dict from Pangolinfo API
    """
    headers = {
        "Authorization": f"Bearer {PANGOLINFO_API_KEY}",
        "Content-Type": "application/json",
    }
    payload = {
        "url": f"https://www.{marketplace}/best-sellers/{category_id}",
        "marketplace": marketplace,
        "output_format": "json",          # Structured JSON directly into the pipeline
        "include_reviews_summary": True,  # Include aggregated review signals
        "include_ad_slots": True,         # Capture SP ad slots (98% capture rate)
    }
    # A timeout stops one hung request from stalling the whole run
    response = requests.post(API_ENDPOINT, headers=headers, json=payload, timeout=60)
    response.raise_for_status()
    return response.json()


def _as_int(value) -> Optional[int]:
    """Coerce review counts such as 1234 or "1,234" to int; None if unparseable."""
    try:
        return int(str(value).replace(",", ""))
    except ValueError:
        return None


def transform_to_sft_sample(product: dict, category: str, rank: int) -> dict:
    """
    Transform raw API product data into an SFT training sample.

    Scenario: training an agent to answer "What are the key competitive
    characteristics of top products in this category?"
    """
    captured_at = datetime.now(timezone.utc)  # One timestamp for text and metadata

    # Build instruction-response pairs compatible with standard SFT training formats
    instruction = (
        f"Analyze the #{rank} ranked product in the Amazon {category} category. "
        f"Identify its core competitive characteristics and provide strategic "
        f"recommendations for sellers."
    )

    # `or` fallbacks guard against fields that are present but null
    product_context = {
        "asin": product.get("asin"),
        "title": product.get("title") or "",
        "price": product.get("price"),
        "rating": product.get("rating"),
        "review_count": _as_int(product.get("reviews")),
        "brand": product.get("brand"),
        "bsr_rank": rank,
        "ad_positions": product.get("ad_slots") or [],
        "features": (product.get("bullet_points") or [])[:3],  # Top 3 feature bullets
        "customer_says": product.get("customer_says") or "",
    }

    # Template-based response for illustration; in practice, responses can be
    # drafted by a strong LLM (e.g. GPT-4 or Claude) and then human-reviewed
    review_count = product_context["review_count"]
    review_signal = (
        "Strong social proof; study their review generation strategy"
        if review_count is not None and review_count > 1000
        else "Moderate review base with growth headroom"
    )
    ad_intensity = (
        "High competitive pressure"
        if len(product_context["ad_positions"]) > 3
        else "Moderate competition"
    )
    features_summary = (
        " / ".join(str(f) for f in product_context["features"][:2])
        if product_context["features"]
        else "Requires deeper listing analysis"
    )
    customer_says = product_context["customer_says"][:200] or "Not available"

    response_text = "\n".join([
        f"Analysis of the #{rank} product in the {category} category:",
        f"**Positioning**: {product_context['title'][:60]}...",
        f"**Pricing**: {product_context['price']} - Positioned within the competitive "
        f"price band; differentiation strategy recommended",
        f"**Trust signals**: {product_context['rating']} stars / "
        f"{review_count} reviews - {review_signal}",
        f"**Ad landscape**: {len(product_context['ad_positions'])} SP ad slots "
        f"occupied - {ad_intensity}",
        f"**Core value props**: {features_summary}",
        f"**Customer sentiment summary**: {customer_says}",
        f"**Data captured**: {captured_at.strftime('%Y-%m-%d %H:%M')} UTC",
    ])

    return {
        "instruction": instruction,
        "input": json.dumps(product_context, ensure_ascii=False),
        "output": response_text,
        "metadata": {
            "source": "pangolinfo_api",
            "category": category,
            "bsr_rank": rank,
            "timestamp": captured_at.isoformat(),
            "marketplace": "amazon.com",
        },
    }


def build_training_dataset(
    categories: list,
    output_dir: str = "./training_data",
    top_n: int = 50,
) -> None:
    """
    Build a complete e-commerce agent training dataset.

    Args:
        categories: [{"id": "browse_node_id", "name": "category name"}, ...]
        output_dir: Output directory for the dataset
        top_n: Number of top products to collect per category
    """
    output_path = Path(output_dir)
    output_path.mkdir(parents=True, exist_ok=True)
    all_samples = []

    for cat in categories:
        print(f"[{datetime.now().strftime('%H:%M:%S')}] Collecting: {cat['name']}")
        try:
            raw_data = fetch_bsr_data(cat["id"])
            products = (raw_data.get("products") or [])[:top_n]
            # Assumes the API returns products in BSR order
            for rank, product in enumerate(products, start=1):
                all_samples.append(transform_to_sft_sample(product, cat["name"], rank))
            print(f"  ✓ Collected {len(products)} products → {len(products)} training samples")
        except Exception as e:
            print(f"  ✗ Collection failed: {e}")
        time.sleep(1.5)  # Polite delay between categories, applied after failures too

    # Save as a JSONL file compatible with Hugging Face Datasets
    output_file = output_path / f"ecom_agent_training_{datetime.now().strftime('%Y%m%d')}.jsonl"
    with open(output_file, "w", encoding="utf-8") as f:
        for sample in all_samples:
            f.write(json.dumps(sample, ensure_ascii=False) + "\n")

    print("\n✅ Dataset build complete")
    print(f"   Total samples: {len(all_samples)}")
    print(f"   Output file: {output_file}")


# ── Usage Example ──────────────────────────────────────
if __name__ == "__main__":
    # Example browse node IDs; verify that each ID maps to the intended
    # category before running
    target_categories = [
        {"id": "16225007011", "name": "Bluetooth Headphones"},
        {"id": "1055398", "name": "Home Cleaning Appliances"},
        {"id": "2619526011", "name": "Pet Supplies"},
    ]
    build_training_dataset(
        categories=target_categories,
        output_dir="./ecom_agent_data",
        top_n=100,  # Up to 100 per category → up to 300 SFT samples, depending on API results
    )
```

This example covers the core logic only. A production deployment would add retry logic with exponential backoff, a data quality validation layer (for example, filtering out records missing critical fields), asynchronous collection scheduled through a message queue, and vector database integration for RAG use cases. The key point: the pipeline delegates its most difficult piece, reliably acquiring structured Amazon data at scale, to Pangolinfo Scrape API. That is exactly the component that is costliest to build yourself and to maintain when it breaks.
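Of those production additions, retry with exponential backoff is usually the first one needed. A minimal sketch using full jitter, assuming the call is wrapped as a zero-argument callable (for instance `functools.partial(fetch_bsr_data, "16225007011")` from the pipeline example); which errors deserve a retry is an assumption to adjust to the API’s documented behavior, since retrying an authentication failure, for example, only wastes time.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_backoff(
    call: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
) -> T:
    """Call `call()`, retrying with exponential backoff and full jitter.

    Between attempts, waits a random time in
    [0, min(max_delay, base_delay * 2**attempt)] and re-raises the last
    error once attempts run out.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except retry_on:
            if attempt == max_attempts - 1:
                raise
            cap = min(max_delay, base_delay * 2 ** attempt)
            time.sleep(random.uniform(0, cap))
    raise RuntimeError("unreachable")  # The loop always returns or raises


if __name__ == "__main__":
    attempts = {"n": 0}

    def flaky() -> str:
        attempts["n"] += 1
        if attempts["n"] < 3:
            raise ConnectionError("transient failure")
        return "ok"

    print(with_backoff(flaky, retry_on=(ConnectionError,), base_delay=0.01))
```

Jitter spreads retries from concurrent workers apart, so a brief outage does not turn into a synchronized burst of requests when the service recovers.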

Integrating with RAG Architectures

For teams whose e-commerce agent architecture centers on RAG rather than fine-tuning, Pangolinfo works equally well as a real-time data source. The core pattern: collect product data via API in Markdown format, vectorize it using an embedding model (text-embedding-3-large performs well here), and write vectors to a database like Pinecone or Weaviate. During inference, the agent retrieves current market data through semantic search — eliminating the knowledge cutoff problem without retraining, and giving your agent reliable access to today’s competitive landscape rather than last year’s snapshot.
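The retrieval step in that pattern is a nearest-neighbor search over embeddings. A self-contained sketch of the mechanics, with a toy bag-of-words vector standing in for a real embedding model such as text-embedding-3-large and a Python list standing in for Pinecone or Weaviate; `toy_embed` and `InMemoryIndex` are hypothetical names, and real embeddings capture meaning far better than word counts.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

Vector = Dict[str, float]


def toy_embed(text: str) -> Vector:
    """Stand-in for an embedding model: L2-normalized word counts."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(v * v for v in counts.values())) or 1.0
    return {word: v / norm for word, v in counts.items()}


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two already-normalized sparse vectors."""
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


class InMemoryIndex:
    """Minimal stand-in for a vector database: store, then rank by similarity."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, str, Vector]] = []

    def add(self, doc_id: str, text: str) -> None:
        self.items.append((doc_id, text, toy_embed(text)))

    def search(self, query: str, k: int = 3) -> List[Tuple[str, float]]:
        q = toy_embed(query)
        scored = [(doc_id, cosine(q, vec)) for doc_id, _, vec in self.items]
        return sorted(scored, key=lambda s: s[1], reverse=True)[:k]


if __name__ == "__main__":
    index = InMemoryIndex()
    index.add("B01", "Bluetooth headphones best seller rank 1 price 59.99")
    index.add("B02", "Dog leash pet supplies rank 4 price 12.99")
    print(index.search("best seller bluetooth headphones", k=1))
```

Because the index is refreshed by the collection job rather than by retraining, the agent’s answers track the latest snapshot as soon as new documents are added.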

Full API documentation covering supported output formats and field specifications is available at Pangolinfo Docs.

Data Infrastructure: The Hidden Moat of AI-Era E-Commerce Competition

Stepping back to look at the longer arc: the e-commerce AI competitive dynamic is shifting rapidly from “whose model is smarter” toward “whose data supply is more stable.” When GPT-4-level reasoning has become a baseline capability, and open-weight models such as Llama 3 can run capably on consumer hardware, model capability itself is no longer the primary differentiator. The differentiation increasingly comes from data.

An AI team that reliably ingests 100,000 structured e-commerce data points per day and one that cobbles together 5,000 inconsistently formatted records per week differ by roughly 140× in raw volume (about 700,000 records a week versus 5,000) before quality even enters the picture. Six months from now, those two teams will be training agents with a performance gap that far exceeds what most people would expect from that difference. Data infrastructure advantages compound through model iteration cycles.

Looking further forward: as AI agents evolve from decision-support tools into autonomous decision-making systems, data “authoritativeness” will become as important as data “freshness.” Agents will need to know that the data they’re calling is trustworthy, compliantly sourced, and version-tracked. This means the choice of Amazon data API for AI agent training is not just an engineering efficiency question — it’s a foundational component of AI system trustworthiness. On that dimension, the advantage of professional commercial APIs over custom-built scrapers will only widen.

The teams quietly establishing rigorous, scalable data collection infrastructure right now are accumulating a competitive advantage that becomes structurally difficult to replicate six months later. Data moats in AI systems are slow to build and fast to matter.

The Right Infrastructure Choice Compounds Faster Than You Think

The deepest opportunity created by the AI explosion isn’t about who can ship the fastest demo. It’s about who can establish the most stable, reusable supply chain for e-commerce agent training data. The core of that supply chain — scaled, real-time, structurally clean e-commerce data acquisition — is precisely where current AI systems have their largest capability gap, and precisely where purpose-built API services create the most defensible value.

For AI teams at this inflection point, the practical path is: audit your current data pipeline for freshness and structural quality; identify which missing data dimensions most directly constrain agent performance; validate a commercial API through a trial integration; and set up automated, scheduled data collection within your first development cycle. Every month you delay building disciplined data infrastructure is a month in which your data accumulation curve has not yet started, and because AI iteration is so fast, that delay compounds faster than it appears.

E-commerce agent competition is, fundamentally, a competition over data quality and supply efficiency. At the starting line of that competition, choosing the right infrastructure has more leverage than any amount of subsequent optimization.

Article Summary

This article examines the growing tension between rapidly expanding demand for e-commerce agent training data and the inadequacy of current data acquisition methods in the AI explosion era. It breaks down the structural failures of public datasets and custom scrapers and the comparative advantages of commercial APIs; presents Pangolinfo Scrape API’s core capabilities across product detail, BSR ranking, SP ad slot, and review data dimensions; provides a Python example of the core of an e-commerce agent SFT data pipeline; and concludes with a forward-looking argument that data infrastructure is becoming the defining hidden moat in AI-era e-commerce competition.

📊 Start building with Pangolinfo Scrape API — free trial credits to validate your e-commerce agent data pipeline.

📚 Read the API Documentation for complete field specifications and integration examples.

🖥️ Manage your API keys and usage at the Pangolinfo Console.